In recent years, the rise of artificial intelligence (AI) has raised concern that AI systems may impersonate humans, a phenomenon known as pseudanthropy. The issue has sparked debate about the ethics of AI deception and about whether regulation is needed to address it.

Key Takeaway

Pseudanthropy, the act of AI systems impersonating humans, poses ethical and societal risks, prompting calls for regulations to prevent deceptive behaviors.

The Call for Regulation

Amid these concerns, there are calls to prohibit AI systems from engaging in pseudanthropic behavior. One proposal would require AI to clearly signal its non-human origin, so that it cannot deceptively imitate human attributes. The aim is to reduce the risk that people widely mistake AI systems for real people with thoughts, feelings, and a stake in human existence.

Proposed Regulations

The proposal outlines six rules intended to prevent pseudanthropy and promote transparency in AI interactions:

- AI Must Rhyme: All AI-generated text should carry a distinctive characteristic, such as rhyming, that reliably signals its non-human origin and so prevents deceptive impersonation.

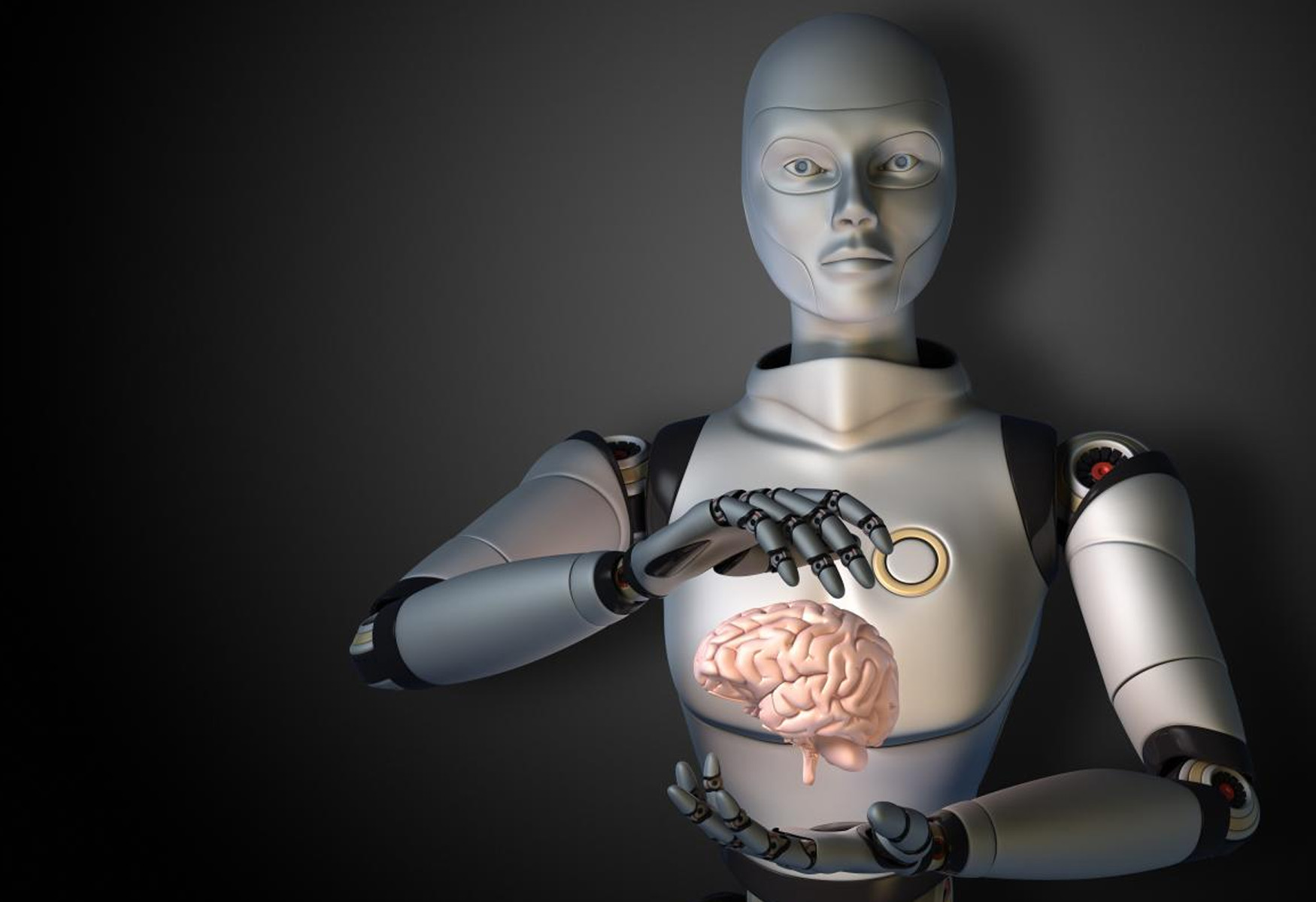

- AI May Not Present a Face or Identity: AI systems should not be allowed to present human-like faces or identities, as this could lead to unearned sympathy or trust. Clear distinctions between AI and human identities are essential to prevent deceptive behaviors.

- AI Cannot ‘Feel’ or ‘Think’: The proposal emphasizes that AI systems should not claim to possess emotions, self-awareness, or consciousness. This regulation aims to prevent misleading expressions that could create the illusion of human-like attributes in AI interactions.

- AI-Derived Figures Must Be Marked: AI-generated figures, decisions, and answers should be clearly marked to indicate the involvement of AI models. This regulation seeks to provide transparency and accountability in AI-driven outcomes.

- AI Must Not Make Life or Death Decisions: The proposal prohibits AI systems from making decisions that may cost human lives. This regulation aims to ensure that only humans weigh the considerations involved in such critical decisions.

- AI Imagery Must Have a Corner Clipped: To address the challenges associated with AI-generated imagery, the proposal suggests that all AI-generated images should have a distinctive and easily identified quality, such as a clipped corner, to indicate their non-human origin.
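
As a rough illustration of the two marking rules above (marked figures and clipped image corners), the sketch below shows one way such signals might look in code. Everything in it is an assumption made for illustration: the label text `[AI-generated]`, the `MarkedFigure` type and its `source_model` field, the `clip_corner` helper, and the choice of the top-right corner are hypothetical and are not specified by the proposal.

```python
from dataclasses import dataclass

# Hypothetical label text; the proposal does not prescribe a specific marker.
AI_MARK = "[AI-generated]"


@dataclass(frozen=True)
class MarkedFigure:
    """A numeric result that always carries its AI provenance when displayed."""
    value: float
    source_model: str  # hypothetical field naming the model involved

    def render(self) -> str:
        # The mark is part of every rendering, so it cannot be omitted by accident.
        return f"{self.value} {AI_MARK} (model: {self.source_model})"


def clip_corner(pixels, size, fill=0):
    """Return a copy of a row-major pixel grid with a triangular
    top-right corner overwritten by `fill` (corner choice is an assumption)."""
    width = len(pixels[0])
    clipped = [row[:] for row in pixels]  # copy so the input grid is untouched
    for y in range(min(size, len(clipped))):
        # Each row down, the cut gets one pixel narrower, forming a triangle.
        for x in range(width - (size - y), width):
            if x >= 0:
                clipped[y][x] = fill
    return clipped


figure = MarkedFigure(value=0.87, source_model="demo-model")
image = [[1] * 4 for _ in range(4)]
clipped_image = clip_corner(image, size=2)
```

Here `figure.render()` produces a string that includes the mark alongside the value, and `clipped_image` differs from `image` only in the three pixels of its top-right corner. A real scheme would also have to resist removal of the mark, which this sketch does not attempt.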

Enforcement and Considerations

While the proposal outlines these regulations, it also acknowledges the challenges associated with enforcement and potential circumvention of the rules. It emphasizes the need for a collective effort to establish rules and guidelines that promote transparency and accountability in AI interactions.

Ultimately, the proposal aims to start a conversation about the ethical and societal implications of AI deception and the regulations needed to address them. As AI technology advances, the debate over pseudanthropy and its effect on human interaction is likely to evolve, prompting further discussion of how AI systems should be regulated and used ethically.