Introduction

Machine learning has revolutionized the way we approach problem-solving and decision-making in various industries. It is a subset of artificial intelligence (AI) that focuses on developing algorithms and models that enable computers to learn and make predictions or decisions without explicit programming.

At its core, machine learning involves analyzing and interpreting complex data patterns to create predictive models. These models can then be used to automate tasks, make recommendations, or gain insights from large datasets. Machine learning methods are designed to improve over time as they receive more data and feedback, enabling them to continuously enhance accuracy and performance.

One of the most significant advantages of machine learning is its ability to handle vast amounts of data, far beyond human capacity. By extracting meaningful patterns and relationships hidden in the data, machine learning algorithms can identify trends, detect anomalies, and unveil valuable insights that can drive business decisions.

There are several types of machine learning methods, each with its own approach and applicability. The main learning paradigms are supervised learning, unsupervised learning, and reinforcement learning. Widely used model families and techniques include deep learning, decision trees, support vector machines, naive Bayes classifiers, clustering algorithms, neural networks, and genetic algorithms.

Supervised learning involves training an algorithm on a labeled dataset, where the desired output is already known. The algorithm learns from the input-output pairs and generalizes its knowledge to make predictions on new, unseen data.

Unsupervised learning, on the other hand, deals with unlabeled data. The algorithm analyzes the data to identify patterns or group similar instances together without any prior knowledge of the classes or categories.

Reinforcement learning is a type of learning where an agent interacts with an environment, learning from trial and error. The agent receives feedback in the form of rewards, which helps in determining its actions and optimizing its performance.

Deep learning is a subset of machine learning that focuses on artificial neural networks with multiple layers. It is particularly useful for tasks like image recognition, natural language processing, and speech recognition.

Decision trees are models that make predictions by mapping decisions and their possible consequences in a hierarchical structure. Support vector machines are supervised learning models that classify instances by finding the boundary that best separates the classes.

Naive Bayes classifiers are probabilistic models that assign each instance the class that is most probable given its features, under the assumption that the features are independent of one another. Clustering algorithms group similar instances together based on their similarities or distances.

Neural networks, inspired by the structure and functioning of the human brain, consist of interconnected artificial neurons that can recognize patterns and make predictions. Genetic algorithms use evolutionary principles like mutation and selection to solve complex optimization problems.

Each machine learning method has its strengths and limitations, making it suitable for different types of problems. By understanding these methods and their applications, businesses and individuals can harness the power of machine learning to drive innovation, improve decision-making, and unlock new possibilities.

Supervised Learning

Supervised learning is one of the fundamental approaches in machine learning. In this method, a model is trained on a labeled dataset, where the input data and the corresponding output or target values are known. The goal is to learn a mapping function that can accurately predict the output for new, unseen inputs.

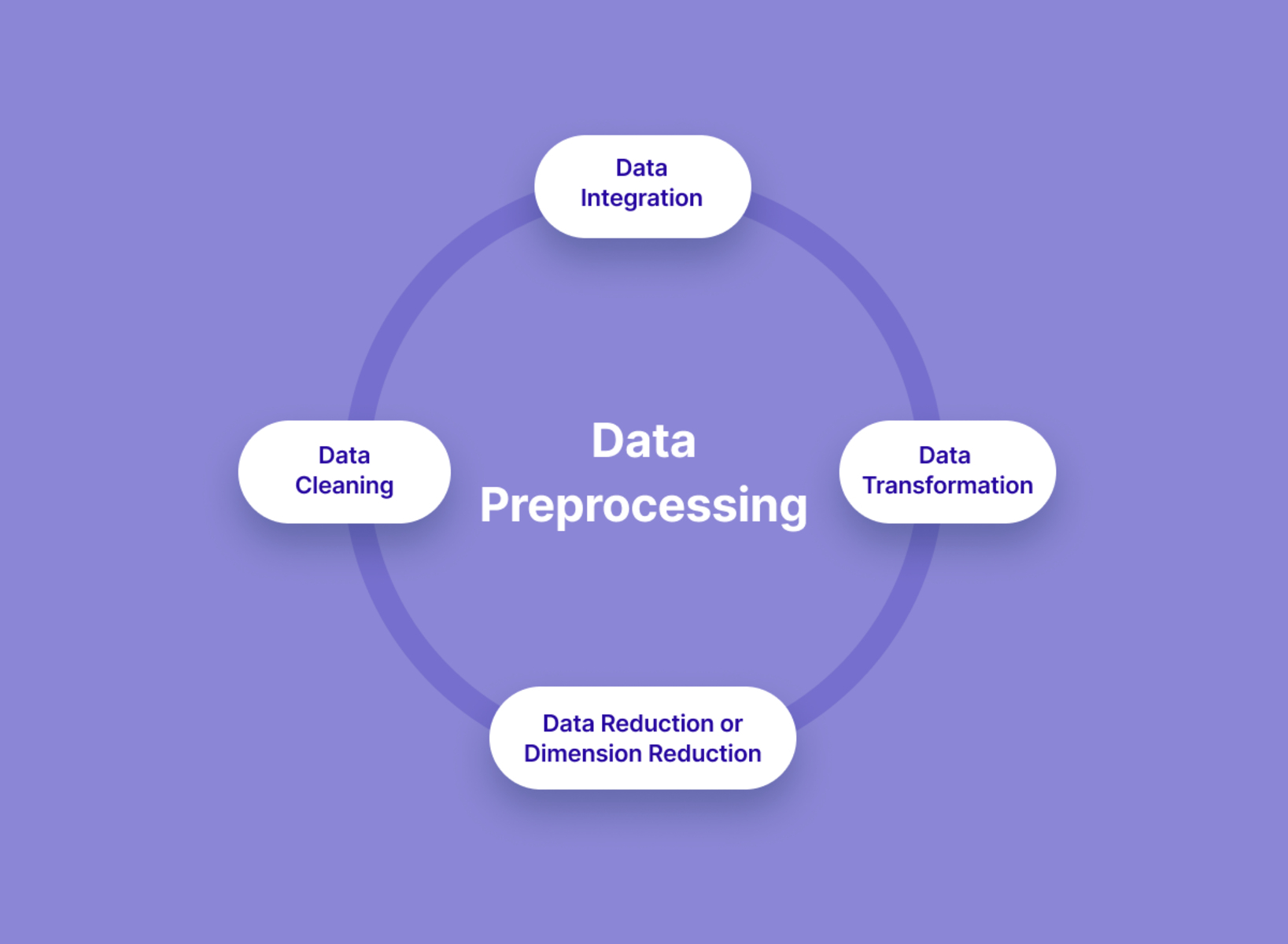

At its core, supervised learning involves the creation of a training set that comprises input-output pairs. The model analyzes this training data and learns to generalize its knowledge to make predictions on unseen data. The key idea is to find patterns and relationships between the input features and the target variable, which enables the model to make accurate predictions.

There are various algorithms used in supervised learning, depending on the type of problem at hand. Some common algorithms include linear regression, logistic regression, decision trees, random forests, support vector machines, and neural networks.

Linear regression is a popular algorithm used for predicting continuous numeric values. It establishes a linear relationship between the input features and the target variable, making it suitable for tasks like price prediction or demand forecasting. Logistic regression, on the other hand, is used for binary classification problems, where the target variable has two possible outcomes.
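As a minimal sketch of the linear relationship described above, the following fits a straight line to one input feature using ordinary least squares. The function name `fit_line` and the floor-area and price figures are hypothetical, chosen only for illustration; the data is constructed to lie exactly on the line price = 3 × area + 10.

```python
def fit_line(xs, ys):
    """Ordinary least squares for a single feature: returns (slope, intercept)."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    # slope = covariance(x, y) / variance(x)
    cov_xy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    slope = cov_xy / var_x
    intercept = mean_y - slope * mean_x
    return slope, intercept


# Hypothetical training data: floor area vs. price (price = 3 * area + 10).
areas = [50.0, 60.0, 80.0, 100.0]
prices = [160.0, 190.0, 250.0, 310.0]

slope, intercept = fit_line(areas, prices)
predicted = slope * 70.0 + intercept  # prediction for an unseen input
```

Real data is noisy, so the fitted line would only approximate the points; the closed-form solution is the same.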

Decision trees are versatile algorithms that can handle both regression and classification problems. They consist of nodes that represent decisions and branches that define the possible outcomes. Decision trees are easy to interpret and visualize, making them valuable in understanding the decision-making process.

Random forests, an ensemble method, combine multiple decision trees to make predictions. This approach typically increases accuracy and reduces overfitting by averaging the predictions of the individual trees or, for classification, taking a majority vote among them.

Support vector machines (SVMs) are powerful algorithms that are commonly used for classification tasks. They map the input data into a high-dimensional space and find the optimal hyperplane that separates the different classes. SVMs have proven to be effective in various domains, including image classification, text categorization, and data mining.

Neural networks, inspired by the structure of the human brain, are highly flexible and capable of learning complex relationships. They consist of multiple layers of interconnected artificial neurons, each performing simple computations. Neural networks can handle a wide range of tasks, from image and speech recognition to natural language processing.

Supervised learning is widely used in various domains, including finance, healthcare, marketing, and customer relationship management. It enables businesses to make predictions, classify data, detect anomalies, and automate decision-making processes. By training models on historical data, organizations can leverage supervised learning to gain insights and optimize their operations.

Unsupervised Learning

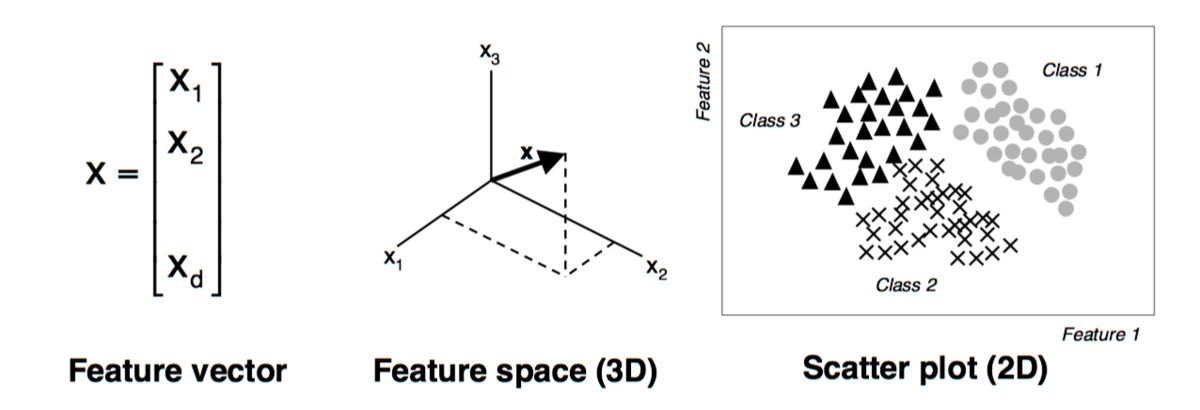

Unsupervised learning is a machine learning approach that deals with unlabeled data. Unlike supervised learning, there are no predefined target values or output labels to guide the learning process. Instead, the algorithm aims to discover patterns, structures, and relationships within the data on its own.

Two of the central tasks in unsupervised learning are clustering and dimensionality reduction. Clustering algorithms group similar instances together based on their intrinsic similarities or distances. By doing so, they uncover natural groupings or clusters within the data. Common clustering algorithms include k-means clustering, hierarchical clustering, and DBSCAN (Density-Based Spatial Clustering of Applications with Noise).

Dimensionality reduction techniques, on the other hand, aim to reduce the number of input features while preserving as much relevant information as possible. This is particularly useful in dealing with high-dimensional datasets where the presence of numerous features can lead to computational complexity and overfitting. Principal Component Analysis (PCA) and t-SNE (t-Distributed Stochastic Neighbor Embedding) are popular dimensionality reduction methods used in unsupervised learning.

Unsupervised learning methods can be used for various purposes. One application is customer segmentation, where clustering algorithms can group customers based on their preferences, behaviors, or demographics. This information can help businesses tailor marketing strategies and personalize customer experiences.

Another application is anomaly detection, where the goal is to identify unusual or abnormal instances in a dataset. Unsupervised learning algorithms can identify patterns within the majority of the data and flag any outliers or anomalies. This is particularly valuable in fraud detection, network intrusion detection, or identifying medical conditions.

Unsupervised learning also plays a crucial role in exploratory data analysis. By visualizing and summarizing the data, clustering algorithms and dimensionality reduction techniques can uncover hidden patterns and structures. This can lead to valuable insights and guide further analysis.

It is important to note that unsupervised learning methods cannot provide explicit interpretations or labels for the discovered patterns. The interpretation and labeling are generally left to domain experts or further analysis. However, unsupervised learning can serve as a valuable exploratory tool to gain a deeper understanding of the data and uncover meaningful information.

In summary, unsupervised learning is a powerful technique in machine learning that enables the discovery of hidden patterns and structures within unlabeled data. Clustering algorithms and dimensionality reduction methods play a crucial role in grouping similar instances and reducing the dimensionality of high-dimensional datasets. By utilizing this approach, businesses can optimize their operations, gain insights, and make data-driven decisions.

Reinforcement Learning

Reinforcement learning is a machine learning paradigm that focuses on training agents to make sequential decisions through interaction with an environment. Unlike supervised learning, it does not rely on labeled examples of the correct output, and unlike unsupervised learning, it does receive feedback on how well it is doing. The agent learns from trial and error, receiving that feedback in the form of rewards or penalties.

The goal of reinforcement learning is to maximize a cumulative reward over a period of time. The agent explores different actions and observes the consequences to learn which actions lead to desirable outcomes. Through this iterative process, the agent develops a policy that maps states to actions, efficiently navigating the environment to achieve optimal results.
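One common way to make "cumulative reward" concrete is the discounted return, in which a reward received t steps in the future is weighted by gamma to the power t. The discount factor `gamma` is an assumption of this sketch and is not specified in the text above; the reward values are hypothetical.

```python
def discounted_return(rewards, gamma=0.9):
    """Sum of rewards, with the reward at step t weighted by gamma ** t."""
    total = 0.0
    # Working backwards means each step needs one multiply and one add.
    for reward in reversed(rewards):
        total = reward + gamma * total
    return total


episode_rewards = [1.0, 0.0, 2.0]  # hypothetical rewards from one episode
g = discounted_return(episode_rewards, gamma=0.5)  # 1 + 0.5*0 + 0.25*2
```

With `gamma` close to 1 the agent values long-term rewards almost as much as immediate ones; with `gamma` close to 0 it becomes short-sighted.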

In reinforcement learning, the environment is represented as a Markov Decision Process (MDP), which consists of states, actions, transition probabilities, and reward functions. The agent takes actions in each state, and based on the environment’s feedback, updates its knowledge to make better decisions in the future.

There are two main components in reinforcement learning: exploration and exploitation. Exploration involves trying new actions to gather information about the environment and discover potentially better strategies. Exploitation, on the other hand, involves leveraging the learned knowledge to maximize the expected reward.
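The interplay of states, actions, rewards, exploration, and exploitation can be sketched with tabular Q-learning and an epsilon-greedy policy. Q-learning is one of several possible algorithms and is chosen here only for illustration. The five-state corridor environment, the `step` function, and all parameter values are hypothetical assumptions of this sketch.

```python
import random

N_STATES = 5        # states 0..4 on a line; state 4 is the goal
ACTIONS = (-1, +1)  # action 0 moves left, action 1 moves right


def step(state, move):
    """Hypothetical environment: reward 1 on reaching the goal, otherwise 0."""
    next_state = min(max(state + move, 0), N_STATES - 1)
    done = next_state == N_STATES - 1
    return next_state, (1.0 if done else 0.0), done


def choose_action(q, state, epsilon, rng):
    if rng.random() < epsilon:
        return rng.randrange(len(ACTIONS))  # explore: try a random action
    best = max(q[state])
    # exploit: pick the best known action, breaking ties at random
    return rng.choice([a for a, value in enumerate(q[state]) if value == best])


def train(episodes=300, alpha=0.5, gamma=0.9, epsilon=0.1, seed=0):
    rng = random.Random(seed)
    q = [[0.0, 0.0] for _ in range(N_STATES)]  # q[state][action]
    for _ in range(episodes):
        state, done = 0, False
        while not done:
            action = choose_action(q, state, epsilon, rng)
            next_state, reward, done = step(state, ACTIONS[action])
            # Q-learning target: reward plus discounted best future value
            target = reward + (0.0 if done else gamma * max(q[next_state]))
            q[state][action] += alpha * (target - q[state][action])
            state = next_state
    return q


q_table = train()
# Greedy policy for the non-goal states: index 1 means "move right".
greedy_policy = [max(range(len(ACTIONS)), key=lambda a: q_table[s][a])
                 for s in range(N_STATES - 1)]
```

The `epsilon` parameter controls the trade-off: a larger value means more exploration, a smaller value means the agent relies more on what it has already learned.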

Reinforcement learning has been successfully applied to various domains, including robotics, gaming, recommendation systems, and autonomous vehicles. For example, in robotics, reinforcement learning can be used to train robots to perform complex tasks, such as grasping objects or navigating through dynamic environments.

In gaming, reinforcement learning has achieved remarkable results, particularly in games like Go and chess. Deep reinforcement learning, combining deep neural networks with reinforcement learning techniques, has led to groundbreaking achievements in game playing, surpassing human performance in many cases.

Another area where reinforcement learning excels is in recommendation systems. By learning from user interactions and feedback, reinforcement learning algorithms can personalize recommendations, leading to improved user satisfaction and engagement.

Autonomous vehicles also benefit from reinforcement learning. Agents can learn to make driving decisions based on environmental cues, traffic conditions, and optimal routing to ensure safe and efficient transportation.

Reinforcement learning, however, is known for its computational complexity and difficulty in learning optimal policies in large state spaces. The exploration-exploitation trade-off, convergence issues, and the need for significant amounts of training data are some of the challenges associated with reinforcement learning algorithms.

Nonetheless, with advancements in algorithms and computing power, reinforcement learning continues to make significant strides in solving complex decision-making problems.

In summary, reinforcement learning focuses on training agents to make sequential decisions through interaction with an environment. By maximizing cumulative rewards and learning from trial and error, agents can develop optimal policies for a wide range of applications, including robotics, gaming, recommendation systems, and autonomous vehicles.

Deep Learning

Deep learning is a subset of machine learning that focuses on the development and training of artificial neural networks with multiple layers, known as deep neural networks. These networks are loosely inspired by the structure of the human brain, and their layered design allows them to learn and make predictions from complex datasets.

The key characteristic of deep learning is the ability of deep neural networks to automatically learn hierarchical representations of data. Each layer in the network extracts higher-level features from the input data, enabling the network to capture intricate patterns and relationships. This hierarchical representation learning is what gives deep learning models their power and flexibility in handling a wide range of tasks.

Deep learning has demonstrated remarkable success in various domains. One of the most notable achievements is in image recognition and computer vision. Convolutional Neural Networks (CNNs), a type of deep neural network, are capable of accurately classifying and detecting objects in images and videos. This has led to significant advancements in fields like autonomous driving, facial recognition, and medical imaging.

Natural Language Processing (NLP) is another area where deep learning excels. Recurrent Neural Networks (RNNs) and their variants, such as Long Short-Term Memory (LSTM) networks, are used to process and understand sequential data, such as text and speech. Deep learning models have achieved state-of-the-art performance in tasks like sentiment analysis, machine translation, and speech recognition.

Deep learning is also applied in the field of reinforcement learning. Deep Q-Networks (DQNs) combine deep neural networks with reinforcement learning algorithms to train agents that can play and excel at complex games, such as Atari games, by learning directly from pixel-level inputs.

One of the challenges in deep learning is the need for a large amount of labeled training data. However, recent advancements in techniques like transfer learning, where pre-trained models are used as starting points, have helped mitigate this challenge. Transfer learning allows deep learning models to leverage knowledge learned from one task or domain to perform well on related tasks or domains with limited data.

Another challenge is the computational requirements of deep learning models. Training deep neural networks often requires significant computational resources, including powerful GPUs and specialized hardware accelerators. However, advancements in hardware and cloud computing have made deep learning more accessible and feasible for researchers and practitioners.

In summary, deep learning is a powerful approach in machine learning that leverages deep neural networks to learn hierarchical representations of data. With applications ranging from computer vision to natural language processing and reinforcement learning, deep learning has revolutionized various industries and continues to advance the boundaries of what is possible with machine intelligence.

Decision Trees

Decision trees are versatile machine learning models that are widely used for both regression and classification tasks. They provide a visual and intuitive representation of decision-making processes by mapping decisions and their possible consequences in a hierarchical structure.

In a decision tree, each internal node represents a test on one of the input features, while each leaf node represents a class label or a predicted value. The decision tree algorithm recursively splits the data based on feature values, aiming to minimize impurity or maximize information gain at each step.
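To illustrate a single splitting step, the sketch below measures impurity with the Gini index (one common impurity measure, assumed here; entropy-based information gain is another) and searches for the threshold on one numerical feature that minimizes the weighted impurity of the two resulting groups. The function names and the age and purchase data are hypothetical.

```python
from collections import Counter


def gini(labels):
    """Gini impurity: 1 minus the sum of squared class proportions."""
    n = len(labels)
    if n == 0:
        return 0.0
    return 1.0 - sum((count / n) ** 2 for count in Counter(labels).values())


def best_threshold(values, labels):
    """Try a threshold between each pair of adjacent sorted values and
    return (threshold, weighted_impurity) for the lowest impurity found."""
    pairs = sorted(zip(values, labels))
    n = len(pairs)
    best = (None, gini(labels))  # baseline: no split at all
    for i in range(1, n):
        if pairs[i - 1][0] == pairs[i][0]:
            continue  # no threshold fits between equal values
        threshold = (pairs[i - 1][0] + pairs[i][0]) / 2
        left = [label for _, label in pairs[:i]]
        right = [label for _, label in pairs[i:]]
        weighted = (len(left) * gini(left) + len(right) * gini(right)) / n
        if weighted < best[1]:
            best = (threshold, weighted)
    return best


# Hypothetical data: customer age and whether they bought a product.
ages = [22, 25, 30, 41, 47, 52]
bought = ["no", "no", "no", "yes", "yes", "yes"]

threshold, impurity = best_threshold(ages, bought)
```

A full tree-building algorithm would repeat this search over every feature and then recurse into each of the two groups until a stopping criterion is met.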

One of the advantages of decision trees is their interpretability. By examining the branches and nodes of the tree, we can gain insights into the decision-making process. Decision trees allow us to understand the most important features and the key decision points that lead to different outcomes.

Decision trees work with both categorical and numerical features, whether discrete or continuous. Because splits depend only on the ordering of feature values, trees are relatively insensitive to feature scaling and to outliers in the inputs. Some implementations can also handle missing values directly, which makes them well suited to real-world datasets.

Ensemble methods, such as random forests and gradient boosting, often leverage decision trees as base learners. Random forests combine multiple decision trees and make predictions by averaging the results, reducing the risk of overfitting and increasing the overall accuracy. Gradient boosting, on the other hand, trains decision trees sequentially, with each subsequent tree aiming to correct the mistakes made by the previous ones.

Decision trees have found applications in various fields. In finance, decision trees can be used for credit scoring and risk assessment, aiding in loan approval processes. In healthcare, decision trees can assist in medical diagnosis, predicting disease outcomes, and recommending treatment options based on patient characteristics.

Decision trees are also utilized in customer relationship management. By analyzing customer data, decision trees can segment customers based on their buying patterns, behaviors, or demographics, enabling personalized marketing campaigns and targeted advertising.

Despite their strengths, decision trees have some limitations. They tend to overfit the training data if not properly controlled by pruning or regularization techniques. Decision trees are also sensitive to small changes in the training data, leading to different structures and possibly different predictions.

Overall, decision trees provide an intuitive and interpretable way of decision-making and have proven to be effective in various domains. Their adaptability to different types of data and their ability to handle both classification and regression tasks make them a valuable tool in the machine learning toolbox.

Support Vector Machines

Support Vector Machines (SVMs) are widely used supervised machine learning models, applied primarily to classification tasks. They work by finding the optimal hyperplane that separates different classes, often in a high-dimensional space, and they perform well on complex datasets.

The goal of SVM is to find the decision boundary that maximizes the margin between different classes. The margin is the distance between the decision boundary and the closest data points from each class. SVM aims to find the hyperplane that not only separates the classes but also maximizes the margin, ensuring better generalization and robustness to new data.
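The margin can be computed directly for any candidate hyperplane of the form w·x + b = 0. The sketch below compares two hand-picked hyperplanes on a small dataset; it does not train an SVM, it only illustrates the quantity that an SVM maximizes. The function names, points, and hyperplane parameters are hypothetical.

```python
import math


def signed_distance(w, b, x):
    """Signed distance from point x to the hyperplane w.x + b = 0."""
    norm = math.sqrt(sum(wi * wi for wi in w))
    return (sum(wi * xi for wi, xi in zip(w, x)) + b) / norm


def margin(w, b, points, labels):
    """Smallest label-weighted distance over all points (labels are -1 or +1).
    The result is negative if any point lies on the wrong side."""
    return min(y * signed_distance(w, b, x) for x, y in zip(points, labels))


points = [(1, 1), (2, 0), (4, 4), (5, 3)]
labels = [-1, -1, 1, 1]

# Two hypothetical hyperplanes that both separate the classes.
margin_a = margin((1, 1), -5, points, labels)  # the line x1 + x2 = 5
margin_b = margin((1, 0), -3, points, labels)  # the line x1 = 3
```

Both lines classify every point correctly, but the first leaves more room between the boundary and the nearest points, so a margin-maximizing method would prefer it.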

SVMs can handle both linearly separable and non-linearly separable datasets. In the case of linearly separable data, SVMs find the optimal hyperplane directly. For data that is not linearly separable, SVMs use a kernel function, which implicitly maps the data into a higher-dimensional space where a separating hyperplane is more likely to exist. This allows SVMs to capture complex patterns and make accurate predictions.
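The idea of moving to a higher-dimensional space can be shown with an explicit feature map. In the hypothetical one-dimensional dataset below, the positive class lies on both sides of the negative class, so no single threshold on x separates them. After mapping each x to the pair (x, x²), a straight line does. In practice a kernel function computes inner products in such a space without building the mapped features; the explicit map here is only for illustration, and the separating line is picked by hand rather than learned.

```python
def feature_map(x):
    """Map a 1-D input to 2-D by adding its square as a second feature."""
    return (x, x * x)


xs = [-2.0, -1.5, -0.5, 0.0, 0.5, 1.5, 2.0]
labels = [1, 1, -1, -1, -1, 1, 1]  # positive class sits on both sides


def classify(x):
    """Linear rule in the mapped space: positive above the line x2 = 1."""
    _, x2 = feature_map(x)
    return 1 if x2 - 1.0 > 0 else -1


predictions = [classify(x) for x in xs]
```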

One of the advantages of SVMs is their ability to handle high-dimensional datasets. Because maximizing the margin acts as a form of regularization, SVMs often remain effective when the number of features is large, even relative to the number of samples. The decision boundary depends only on the support vectors, which are the data points that lie on or inside the margin, so points far from the boundary have no influence on it. Outliers close to the boundary, however, can still affect the result.

SVMs have found applications in various domains, including image classification, text categorization, and bioinformatics. In image classification, SVMs can distinguish between different objects or detect specific features within images. In text categorization, SVMs can classify documents into different categories based on their content or sentiment.

Moreover, SVMs have been successfully used in solving binary and multi-class classification problems. They can solve both linear and non-linear problems with high accuracy, making them valuable in numerous real-world scenarios.

However, SVMs suffer from some limitations. They can be computationally expensive, especially when dealing with large datasets. In some cases, it can be challenging to determine the appropriate kernel function and its parameters, which might affect the performance of the model.

Despite these limitations, SVMs remain a popular choice for classification tasks due to their ability to find an optimal decision boundary, handle high-dimensional data, and provide good generalization. With proper tuning and regularization, SVMs can deliver accurate and robust classification results.

Naive Bayes Classifiers

Naive Bayes classifiers are probabilistic machine learning models commonly used for classification tasks. They are based on Bayes’ theorem and the strong assumption of feature independence, which is why they are called “naive.” Despite this simplifying assumption, Naive Bayes classifiers have proven to be effective in many real-world applications.

The underlying principle of Naive Bayes classifiers is to calculate the probability of a sample belonging to a particular class based on the probabilities of its features. The classifier estimates the conditional probability of each feature given the class and the prior probability of each class occurring in the training set. For each class, it multiplies the prior probability by the conditional probabilities of the sample's features, then assigns the sample to the class with the highest resulting score.
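The calculation described above can be sketched for word-count features as follows. Two details are assumptions of this sketch rather than part of the description: probabilities are combined as sums of logarithms to avoid numerical underflow, and add-one (Laplace) smoothing keeps an unseen word from forcing a probability of zero. The function names and the tiny training set are hypothetical.

```python
import math
from collections import Counter, defaultdict


def train_nb(documents, labels):
    """Count classes and per-class word occurrences."""
    class_counts = Counter(labels)
    word_counts = defaultdict(Counter)
    vocabulary = set()
    for words, label in zip(documents, labels):
        word_counts[label].update(words)
        vocabulary.update(words)
    return class_counts, word_counts, vocabulary


def predict_nb(model, words):
    class_counts, word_counts, vocabulary = model
    total_docs = sum(class_counts.values())
    scores = {}
    for label, count in class_counts.items():
        score = math.log(count / total_docs)  # log prior of the class
        total_words = sum(word_counts[label].values())
        for word in words:
            if word not in vocabulary:
                continue  # ignore words never seen during training
            # conditional probability with add-one smoothing
            p = (word_counts[label][word] + 1) / (total_words + len(vocabulary))
            score += math.log(p)
        scores[label] = score
    return max(scores, key=scores.get)


# Hypothetical training set.
documents = [
    ["win", "money", "now"],
    ["free", "money"],
    ["meeting", "at", "noon"],
    ["lunch", "meeting", "tomorrow"],
]
labels = ["spam", "spam", "ham", "ham"]

model = train_nb(documents, labels)
first = predict_nb(model, ["free", "money", "now"])
second = predict_nb(model, ["meeting", "tomorrow"])
```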

Naive Bayes classifiers are particularly useful when dealing with large feature spaces and high-dimensional data. They are known for their computational efficiency and fast training times, as they only require estimating the probabilities from the training data. Moreover, Naive Bayes classifiers can handle continuous and discrete features, making them versatile in various domains.

There are different variants of Naive Bayes classifiers, including Gaussian Naive Bayes, Multinomial Naive Bayes, and Bernoulli Naive Bayes. Gaussian Naive Bayes assumes that the features follow a Gaussian distribution, making it suitable for continuous numeric data. Multinomial Naive Bayes, on the other hand, is commonly used for discrete count data, such as word frequencies in text classification. Bernoulli Naive Bayes is appropriate when dealing with binary features, where each feature represents the presence or absence of a particular attribute.

Naive Bayes classifiers have found applications in various areas, such as spam filtering, sentiment analysis, document categorization, and medical diagnosis. In spam filtering, the classifier can analyze the frequency of specific words or patterns in an email to predict whether it is spam or not. In sentiment analysis, Naive Bayes classifiers can determine the sentiment of a text by considering the occurrence of certain words or phrases associated with positive or negative sentiments.

It is worth noting that Naive Bayes classifiers depend on the assumption of feature independence, which may not hold true in real-world scenarios. This assumption can lead to inaccurate predictions if there are strong dependencies or interactions between the features. However, Naive Bayes classifiers often perform well in practice despite this simplifying assumption.

Overall, Naive Bayes classifiers are simple yet effective models for classification tasks. With their computational efficiency, ability to handle high-dimensional data, and good performance in many domains, they continue to be a popular choice for various machine learning applications.

Clustering Algorithms

Clustering algorithms are an essential aspect of unsupervised learning, focusing on grouping similar instances together based on their inherent characteristics and patterns. These algorithms aim to discover hidden structures within unlabeled data, allowing for data exploration, pattern discovery, and segmentation.

Clustering algorithms operate based on the notion of similarity or distance between data points. The goal is to maximize the similarity within clusters while minimizing the similarity between different clusters. By doing so, clustering algorithms create partitions or groups that share common characteristics.

There are various types of clustering algorithms, each with its own approach and strengths. One of the most commonly used clustering techniques is K-means clustering. In K-means, the algorithm partitions the data into K clusters, where each cluster is represented by its centroid. The algorithm iteratively assigns data points to the nearest centroid and recalculates the centroids until convergence.

Hierarchical clustering is another commonly used technique, which builds a hierarchy of clusters through a bottom-up (agglomerative) or top-down (divisive) approach. The bottom-up variant starts by treating each data point as a separate cluster and repeatedly merges the most similar clusters. The top-down variant starts with all data points in a single cluster and recursively splits it.
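The assign-then-recalculate loop of K-means can be sketched in a few lines. To keep the result reproducible, this version takes the initial centroids as an argument instead of choosing them at random, which is an assumption of the sketch; the function name and the two-dimensional points are hypothetical.

```python
def kmeans(points, centroids, max_iters=100):
    """Basic K-means: returns (centroids, clusters) after convergence."""
    centroids = [tuple(c) for c in centroids]
    clusters = [[] for _ in centroids]
    for _ in range(max_iters):
        # Assignment step: attach each point to its nearest centroid.
        clusters = [[] for _ in centroids]
        for p in points:
            distances = [sum((a - b) ** 2 for a, b in zip(p, c)) for c in centroids]
            clusters[distances.index(min(distances))].append(p)
        # Update step: move each centroid to the mean of its members.
        new_centroids = []
        for members, old in zip(clusters, centroids):
            if members:
                new_centroids.append(
                    tuple(sum(dim) / len(members) for dim in zip(*members)))
            else:
                new_centroids.append(old)  # keep an empty cluster's centroid
        if new_centroids == centroids:
            break  # converged: no centroid moved
        centroids = new_centroids
    return centroids, clusters


points = [(1.0, 1.0), (1.0, 2.0), (2.0, 1.0),
          (8.0, 8.0), (9.0, 8.0), (8.0, 9.0)]
initial = [(0.0, 0.0), (10.0, 10.0)]  # hypothetical starting guesses

centroids, clusters = kmeans(points, initial)
```

The outcome of K-means depends on the starting centroids, which is why practical implementations usually run it several times from different initializations.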

DBSCAN (Density-Based Spatial Clustering of Applications with Noise) is a density-based clustering algorithm that groups together data points that are close to each other in terms of density. It can discover clusters of different shapes and sizes, while also identifying data points that do not belong to any cluster, often referred to as noise or outliers.

Clustering algorithms are used in various domains and applications. In customer segmentation, clustering algorithms are employed to divide customers into distinct groups based on their preferences, behaviors, or demographic profiles. This enables businesses to deliver personalized marketing strategies and tailor products or services to specific customer segments.

In image and object recognition, clustering algorithms can group similar images or detect objects with similar characteristics. This is invaluable in tasks like image search, content-based image retrieval, and object detection in computer vision applications.

Furthermore, clustering algorithms are used in anomaly detection, where they can identify unusual patterns or outliers in data. This is especially useful in fraud detection, network intrusion detection, and identifying rare medical conditions.

Despite their effectiveness, clustering algorithms face challenges in determining the optimal number of clusters and handling high-dimensional and noisy data. The selection of distance metrics and similarity measures can also impact the clustering results. Therefore, it is essential to understand the nature of the data and choose appropriate clustering algorithms and evaluation techniques.

In summary, clustering algorithms play a crucial role in unsupervised learning, allowing for data exploration, pattern identification, and segmentation. With their ability to discover hidden structures within unlabeled data, clustering algorithms have diverse applications across domains, including customer segmentation, image recognition, and anomaly detection.

Neural Networks

Neural networks, inspired by the structure and functioning of the human brain, are a powerful subset of machine learning models. They consist of interconnected artificial neurons, also known as nodes or units, that work together to analyze and learn from complex input data.

At a high level, neural networks consist of layers: an input layer, one or more hidden layers, and an output layer. Each layer comprises multiple neurons, which receive input signals, perform computations, and pass on the resulting output signals to the next layer.

The connections between neurons are represented by weights. During the training phase, neural networks adjust these weights based on the provided training data, aiming to minimize the difference between the predicted output and the actual output. Backpropagation computes how much each weight contributed to that error, and an optimizer such as gradient descent then iteratively updates the weights to improve the network’s ability to make accurate predictions.
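The weight-adjustment loop can be sketched with the smallest possible network: a single sigmoid neuron trained by gradient descent to reproduce the logical OR function. With only one neuron, propagating the error backwards reduces to one application of the chain rule; a multi-layer network repeats the same step layer by layer. The cross-entropy loss, learning rate, epoch count, and function names are assumptions of this sketch.

```python
import math


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def predict(weights, bias, x):
    """Weighted sum of the inputs followed by a sigmoid activation."""
    return sigmoid(sum(w * xi for w, xi in zip(weights, x)) + bias)


def train_neuron(samples, targets, learning_rate=0.5, epochs=2000):
    weights = [0.0] * len(samples[0])
    bias = 0.0
    for _ in range(epochs):
        for x, target in zip(samples, targets):
            prediction = predict(weights, bias, x)
            # For a sigmoid output with cross-entropy loss, the gradient of
            # the loss with respect to the weighted sum is (prediction - target).
            error = prediction - target
            # Chain rule: d(loss)/d(w_i) = error * x_i and d(loss)/d(bias) = error.
            weights = [w - learning_rate * error * xi
                       for w, xi in zip(weights, x)]
            bias -= learning_rate * error
    return weights, bias


inputs = [(0, 0), (0, 1), (1, 0), (1, 1)]
or_targets = [0, 1, 1, 1]

weights, bias = train_neuron(inputs, or_targets)
rounded = [round(predict(weights, bias, x)) for x in inputs]
```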

Neural networks can handle various types of data, including numerical, categorical, and text-based data. They can perform both regression and classification tasks, making them versatile for a wide range of applications.

One of the major advantages of neural networks is their ability to learn representations of data. Through the multiple layers, neural networks can automatically extract meaningful features and patterns from the input data, eliminating the need for manual feature engineering. This makes neural networks well-suited for tasks like image recognition, natural language processing, and speech recognition.

Convolutional Neural Networks (CNNs) are a specific type of neural network that excel in image and video analysis. CNNs use convolutional layers to detect spatial patterns in images, learning to recognize objects and complex features. This has led to significant advancements in tasks like object detection, image segmentation, and facial recognition.

Recurrent Neural Networks (RNNs) are another variant of neural networks that are designed to handle sequential data, such as time series data or natural language data. RNNs have memory capabilities, allowing them to capture dependencies and patterns across time steps. This makes them suitable for tasks like machine translation, sentiment analysis, and speech recognition.

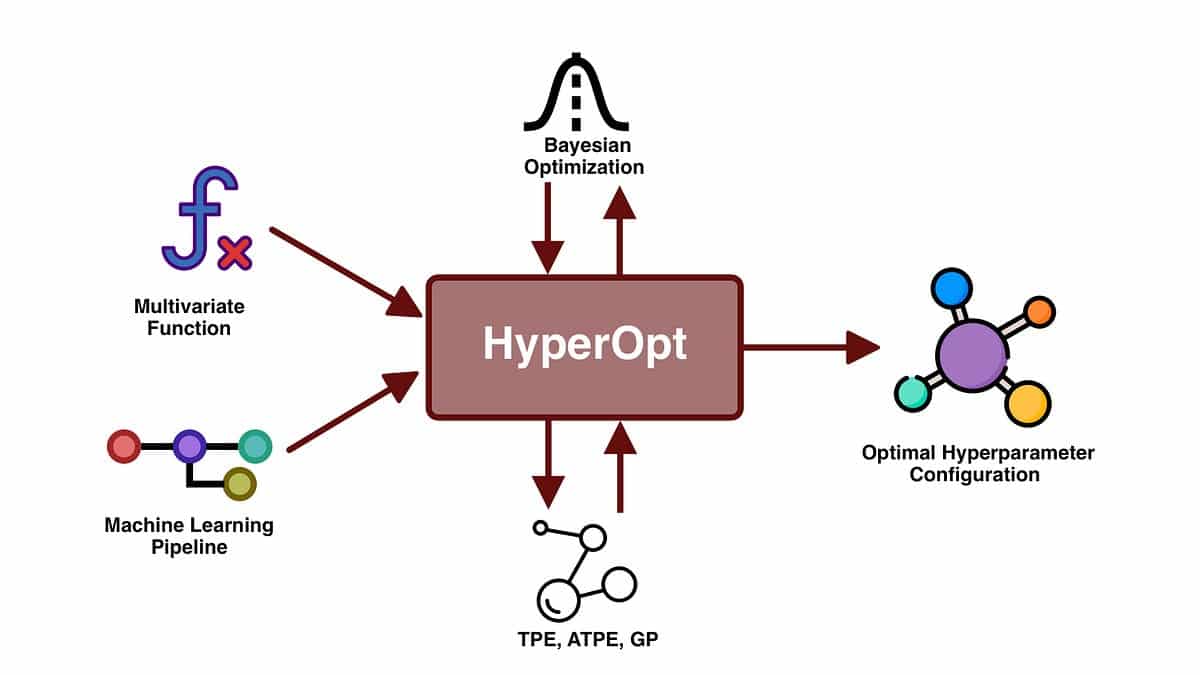

Despite their power, neural networks have some limitations. They often require a large amount of labeled training data to achieve good performance. Training neural networks can be computationally intensive and time-consuming, especially for deep networks with many layers and parameters. The architecture design and hyperparameter tuning of neural networks are also critical to their performance, requiring expertise and careful experimentation.

Overall, neural networks have revolutionized machine learning with their ability to learn complex patterns and make accurate predictions. With advancements in hardware and algorithms, they continue to push the boundaries of what is possible in areas such as computer vision, natural language processing, and speech recognition.

Genetic Algorithms

Genetic algorithms are a type of optimization algorithm inspired by the principles of natural selection and genetics. They are widely used in machine learning and evolutionary computing to find optimal solutions to complex problems by mimicking the process of genetic evolution.

The core idea behind genetic algorithms is to model a population of potential solutions to a problem and apply genetic operators, such as selection, crossover, and mutation, to evolve and improve the solutions over successive generations.

At the start, a population of candidate solutions, referred to as chromosomes or individuals, is randomly generated. Each chromosome represents a possible solution to the problem being solved. The quality or fitness of each individual is evaluated based on a fitness function, which quantifies the suitability or performance of the solution.

During the evolutionary process, the genetic operators are applied to create new offspring. The selection operator favors individuals with higher fitness values, increasing their chances of being selected for reproduction. The crossover operator combines genetic information from two parent individuals to create new offspring, mimicking the genetic recombination process. The mutation operator introduces small random changes to the genetic material of the individuals to maintain diversity within the population.

The new offspring then undergo fitness evaluation, and the process continues through multiple iterations or generations until a termination condition is met, such as reaching a maximum number of generations or the desired level of solution quality.
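The full cycle of evaluation, selection, crossover, and mutation can be sketched on a toy problem in which the fitness of a bit string is simply the number of 1-bits it contains. Several choices here are assumptions of the sketch rather than part of the description above: tournament selection, single-point crossover, and elitism (copying the best individual unchanged into the next generation). All parameter values are hypothetical.

```python
import random


def fitness(chromosome):
    """Toy fitness function: the number of 1-bits in the chromosome."""
    return sum(chromosome)


def tournament(population, rng, k=3):
    """Selection: the fittest of k randomly drawn individuals."""
    return max(rng.sample(population, k), key=fitness)


def crossover(a, b, rng):
    """Single-point crossover: head of one parent, tail of the other."""
    point = rng.randrange(1, len(a))
    return a[:point] + b[point:]


def mutate(chromosome, rng, rate=0.02):
    """Flip each bit independently with a small probability."""
    return [1 - gene if rng.random() < rate else gene for gene in chromosome]


def evolve(length=20, pop_size=30, generations=60, seed=1):
    rng = random.Random(seed)
    population = [[rng.randint(0, 1) for _ in range(length)]
                  for _ in range(pop_size)]
    initial_best = max(fitness(c) for c in population)
    for _ in range(generations):
        if max(fitness(c) for c in population) == length:
            break  # termination: a perfect solution was found
        children = [max(population, key=fitness)]  # elitism
        while len(children) < pop_size:
            child = crossover(tournament(population, rng),
                              tournament(population, rng), rng)
            children.append(mutate(child, rng))
        population = children
    return initial_best, max(population, key=fitness)


initial_best, best = evolve()
```

Because the best individual is always carried over, the best fitness in the population can never decrease from one generation to the next.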

Genetic algorithms can handle complex optimization problems with large search spaces and multiple objectives. They have been successfully applied to various domains, including engineering design, financial portfolio optimization, scheduling problems, and machine learning model selection.

One of the strengths of genetic algorithms is their ability to explore the search space broadly, which reduces the risk of getting stuck in local optima. By maintaining diversity within the population through the genetic operators, genetic algorithms can explore different regions of the search space, increasing the chances of identifying globally optimal or near-optimal solutions, although finding the global optimum is not guaranteed.

However, genetic algorithms also have some challenges. They can be computationally expensive, especially for large-scale problems or complex fitness functions. The choice of appropriate genetic operators and their parameters can significantly impact the performance and convergence of the algorithm. There is also a trade-off between exploration and exploitation, as balancing the exploration of new solutions with the exploitation of promising ones can be challenging.

In summary, genetic algorithms are powerful optimization techniques that employ concepts from natural selection and genetics to find optimal solutions to complex problems. With their ability to explore large search spaces and handle multiple objectives, genetic algorithms continue to be valuable tools in solving various optimization problems.