Introduction

Machine learning brings data and algorithms together to drive decision-making. As businesses increasingly rely on machine learning models to make critical predictions and automate processes, effective governance of these models becomes essential. Without it, organizations risk biased decisions, inaccurate predictions, and models that fail to perform as expected.

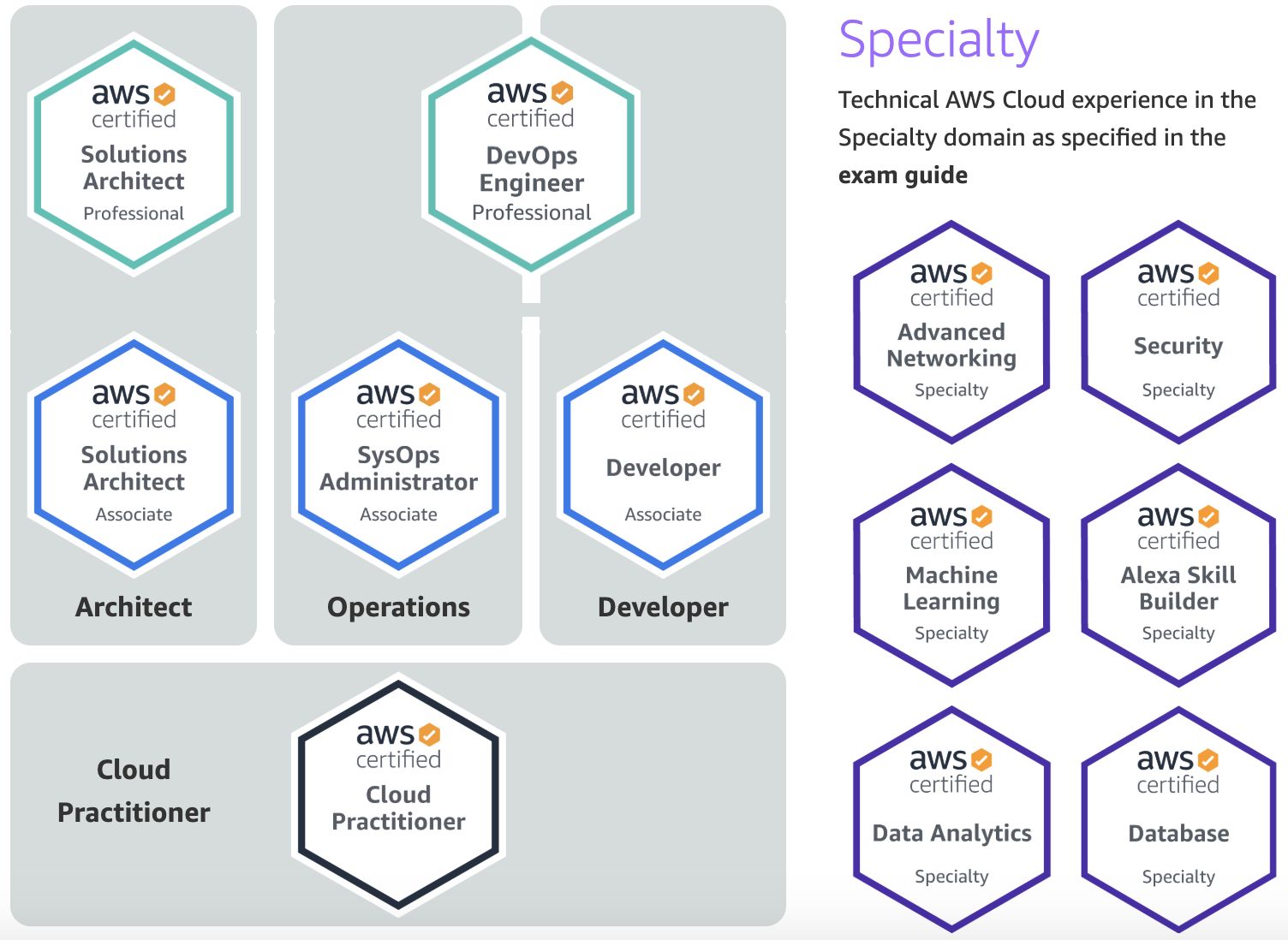

Amazon SageMaker, a comprehensive machine learning service offered by Amazon Web Services (AWS), provides a range of features that can help customers effectively govern their machine learning models. These features not only enable organizations to manage their data, models, and resources efficiently but also ensure transparency, accountability, and compliance throughout the machine learning lifecycle.

In this article, we will explore some of the key features offered by Amazon SageMaker that customers can utilize to govern their machine learning models effectively. From data management and model deployment to monitoring, auditing, and cost optimization, Amazon SageMaker offers a comprehensive suite of tools to address the governance challenges faced by organizations.

Whether you are a data scientist, machine learning engineer, or a business executive responsible for overseeing machine learning projects, understanding these features and incorporating them into your workflow can bring significant benefits. So, let’s dive deeper into the various governance features provided by Amazon SageMaker and learn how they can help make your machine learning journey smoother and more successful.

Data Management

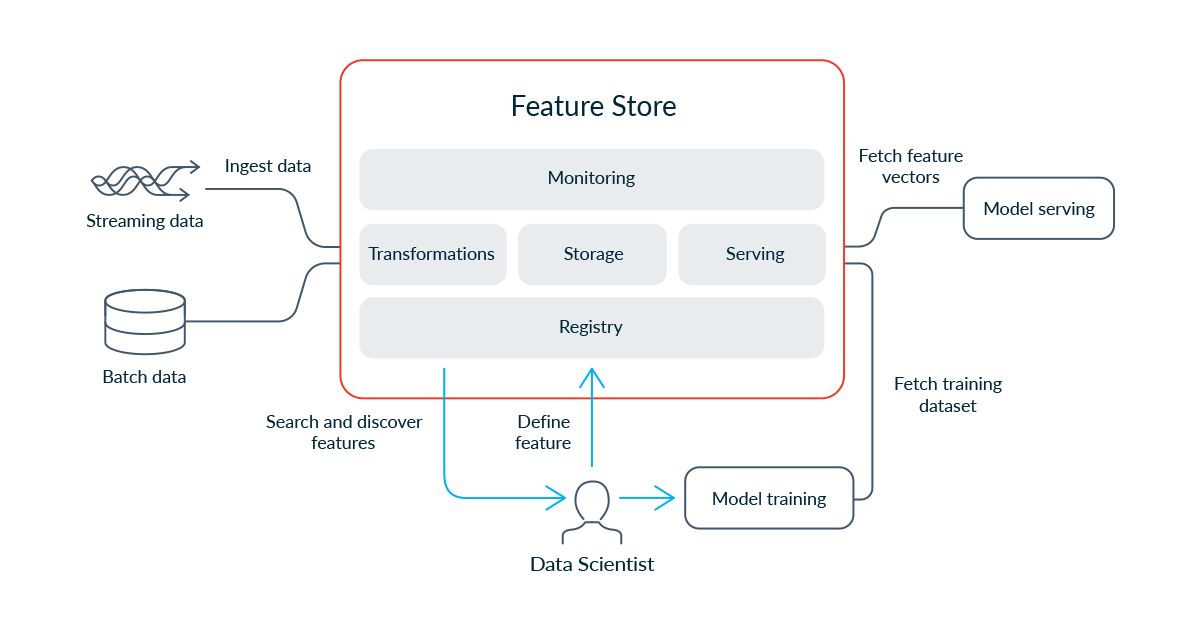

Effective data management is crucial for the success of any machine learning project. Amazon SageMaker offers several features that aid in the efficient management of data throughout the model lifecycle.

One of the key features is Amazon SageMaker Data Wrangler, which simplifies data preprocessing and feature engineering. With Data Wrangler, data scientists can explore, clean, and transform their data through a visual interface. Its built-in transformations cover many repetitive preparation tasks, which saves significant time and effort.

To ensure data quality and reliability, Amazon SageMaker also provides data quality monitoring through SageMaker Model Monitor. It compares incoming data against a baseline computed from the training data and flags data drift, missing values, and other anomalies. By continuously monitoring data quality, organizations can identify issues early and correct them before they degrade the accuracy and reliability of their machine learning models.
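To make the idea of baseline-driven data quality checks concrete, here is a minimal sketch in plain Python. It is not how SageMaker implements its monitoring; the function names and thresholds (`max_missing`, `max_shift`) are hypothetical and chosen only for illustration.

```python
from statistics import mean, pstdev


def baseline_stats(values):
    """Summarize a baseline column by its mean and population standard deviation."""
    present = [v for v in values if v is not None]
    return {"mean": mean(present), "std": pstdev(present)}


def check_batch(values, baseline, max_missing=0.05, max_shift=3.0):
    """Return a list of violations found in one batch of a numeric column."""
    violations = []
    if not values:
        return violations
    present = [v for v in values if v is not None]
    # Share of records in the batch that have no value for this column.
    missing_rate = 1 - len(present) / len(values)
    if missing_rate > max_missing:
        violations.append(f"missing rate {missing_rate:.2f} exceeds {max_missing:.2f}")
    # Distance between batch mean and baseline mean, in baseline standard deviations.
    if present and baseline["std"] > 0:
        shift = abs(mean(present) - baseline["mean"]) / baseline["std"]
        if shift > max_shift:
            violations.append(f"mean shifted by {shift:.1f} baseline standard deviations")
    return violations
```

A batch that passes returns an empty list; anything else can be routed to an alert or a retraining decision.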

Furthermore, AWS Glue DataBrew, integrated with Amazon SageMaker, allows users to easily clean and normalize data from various sources. With its built-in transformation library, users can standardize, enrich, and validate data, ensuring consistent and reliable input for their machine learning models.

In addition to data preprocessing, Amazon SageMaker provides a data labeling feature, SageMaker Ground Truth, that enables users to efficiently annotate data for supervised learning tasks. Users can define labeling jobs, assign tasks to human labelers, and monitor the progress of those jobs. This greatly simplifies the creation of high-quality training datasets for machine learning models.

Lastly, Amazon SageMaker offers ML Lineage Tracking, which records the origin and transformation history of data and the artifacts derived from it. Organizations can trace how a dataset flowed into a training job and a deployed model, understand the relationships between datasets, and demonstrate compliance with data governance policies.

With these data management features, Amazon SageMaker empowers organizations to efficiently preprocess, clean, monitor, and annotate their data, laying the foundation for accurate and reliable machine learning models.

Model Hosting and Deployment

Once the machine learning model is trained and ready, the next step is to deploy it in a production environment. Amazon SageMaker simplifies the model hosting and deployment process with its powerful features.

The Endpoint feature of Amazon SageMaker allows users to deploy their models as endpoints, making them accessible via API calls. This enables real-time inference and integration with applications and services. With automatic scaling and load balancing capabilities, endpoints can handle high-volume traffic efficiently.
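As a rough illustration of what calling an endpoint over the API looks like, the sketch below builds the arguments for the SageMaker Runtime `invoke_endpoint` call and decodes the response. The JSON payload shape (`{"instances": [...]}`) and the helper names are assumptions; the format a real endpoint accepts depends on the model container.

```python
import json


def build_invoke_request(endpoint_name, features):
    """Build keyword arguments for the SageMaker Runtime invoke_endpoint call."""
    return {
        "EndpointName": endpoint_name,
        "ContentType": "application/json",
        "Accept": "application/json",
        # Assumed payload shape; adjust to whatever the model container expects.
        "Body": json.dumps({"instances": [features]}),
    }


def predict(runtime_client, endpoint_name, features):
    """Send one record to the endpoint and decode the JSON response body."""
    response = runtime_client.invoke_endpoint(
        **build_invoke_request(endpoint_name, features)
    )
    # The response body is a stream, so it has to be read before decoding.
    return json.loads(response["Body"].read())
```

Here `runtime_client` would typically be created with `boto3.client("sagemaker-runtime")`; passing it in as an argument keeps the function easy to test with a stub.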

To support different runtimes and frameworks, Amazon SageMaker lets users bring their own container, a capability this article refers to as Bring Your Own Environment (BYOE). Users package their inference code and dependencies in a custom container image and define their own runtime environment, which keeps deployments compatible with existing infrastructure and tooling.

In addition, Amazon SageMaker works with AWS Lambda: a Lambda function can invoke a deployed model endpoint, so the application logic around inference runs without provisioning or managing servers. The endpoint itself still runs on instances unless it is deployed with SageMaker Serverless Inference, which removes instance management on the model-serving side as well.

For organizations operating in regulated industries or with strict compliance requirements, Amazon SageMaker supports VPC (Virtual Private Cloud) endpoints. Interface VPC endpoints, built on AWS PrivateLink, let applications inside a VPC call the SageMaker API and deployed models over a private connection instead of the public internet, supporting secure and compliant model serving.

To facilitate A/B testing and experimentation, Amazon SageMaker offers production variants. With this feature, users can deploy multiple versions of a model behind a single endpoint and split traffic between them according to configured weights. This allows organizations to roll out new models gradually, evaluate their performance, and make informed decisions about model deployment. Multi-Model Endpoints are a separate feature that hosts many models in one shared container to reduce hosting costs.
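On a SageMaker endpoint, A/B traffic splitting is expressed as weighted production variants in the endpoint configuration. The sketch below builds such a configuration and shows how the weights translate into traffic shares. The model names, the default instance type, and the helper functions are illustrative assumptions.

```python
def build_ab_endpoint_config(config_name, variants, instance_type="ml.m5.large"):
    """Build create_endpoint_config arguments.

    variants maps a SageMaker model name to its relative traffic weight.
    """
    return {
        "EndpointConfigName": config_name,
        "ProductionVariants": [
            {
                "VariantName": f"variant-{i}",
                "ModelName": model_name,
                "InstanceType": instance_type,
                "InitialInstanceCount": 1,
                "InitialVariantWeight": float(weight),
            }
            for i, (model_name, weight) in enumerate(variants.items(), start=1)
        ],
    }


def traffic_shares(config):
    """Each variant receives its weight divided by the sum of all weights."""
    variants = config["ProductionVariants"]
    total = sum(v["InitialVariantWeight"] for v in variants)
    return {v["VariantName"]: v["InitialVariantWeight"] / total for v in variants}
```

With weights of 9 and 1, the first variant receives 90% of requests and the second 10%. The resulting dictionary would be passed to `create_endpoint_config` on a `boto3.client("sagemaker")` client.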

Moreover, Amazon SageMaker supports Edge Deployment, allowing users to deploy their models on edge devices and IoT devices. This brings the power of machine learning closer to the data source, enabling real-time inference and reducing latency. This feature is particularly useful in scenarios where low latency and offline capabilities are critical.

By providing seamless deployment options, integration with existing infrastructure, and support for edge deployment, Amazon SageMaker empowers organizations to efficiently host and serve their machine learning models, ensuring their availability and scalability in production environments.

Model Monitoring and Debugging

Monitoring and debugging machine learning models are essential to ensure their performance and reliability over time. Amazon SageMaker offers several features that enable users to monitor and debug their models effectively.

One of the key features is Amazon CloudWatch integration, which allows users to monitor the real-time performance and utilization of their deployed models. With CloudWatch, users can set up alarms and alerts to notify them of any anomalies or issues, ensuring proactive monitoring and timely action.
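As an example of such an alarm, the following sketch builds the arguments for CloudWatch's `put_metric_alarm` call on the `ModelLatency` metric of one endpoint variant. The alarm name, the threshold, and the evaluation window are arbitrary illustrative choices.

```python
def build_latency_alarm(endpoint_name, variant_name, threshold_ms=500):
    """Build put_metric_alarm arguments for sustained high model latency."""
    return {
        "AlarmName": f"{endpoint_name}-{variant_name}-high-latency",
        "Namespace": "AWS/SageMaker",
        "MetricName": "ModelLatency",
        "Dimensions": [
            {"Name": "EndpointName", "Value": endpoint_name},
            {"Name": "VariantName", "Value": variant_name},
        ],
        "Statistic": "Average",
        "Period": 60,            # evaluate one-minute averages
        "EvaluationPeriods": 5,  # require five consecutive breaching periods
        # ModelLatency is reported in microseconds, so convert from milliseconds.
        "Threshold": threshold_ms * 1000,
        "ComparisonOperator": "GreaterThanThreshold",
        "TreatMissingData": "notBreaching",
    }
```

The dictionary would be passed as keyword arguments to `put_metric_alarm` on a `boto3.client("cloudwatch")` client, usually together with an `AlarmActions` entry that names a notification target.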

Amazon SageMaker also provides SageMaker Model Monitor, which automatically compares the distribution of input data against a baseline and identifies inconsistencies or outliers. This helps detect data drift and signals when the model should be retrained or fine-tuned to maintain its accuracy and performance.

To facilitate a deeper understanding of model behavior, Amazon SageMaker offers Model Insights. This feature provides visualizations and insights into the model’s performance, making it easier for users to identify patterns, trends, and issues. With these insights, users can enhance the model’s accuracy and interpretability.

In addition, Amazon SageMaker provides model debugging capabilities. SageMaker Debugger captures tensors and metrics during training, while data capture on an endpoint records real-time requests and predictions. Analyzing this data helps users identify errors or unexpected behavior and fix issues during the training, deployment, and inference stages.
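Capturing live requests and predictions is switched on in the endpoint configuration. A minimal sketch of that setting follows; the S3 location and the 20% sampling rate are placeholder assumptions, and the helper name is hypothetical.

```python
def build_data_capture_config(s3_uri, sampling_percentage=20):
    """Build the DataCaptureConfig section of a create_endpoint_config request."""
    if not 0 <= sampling_percentage <= 100:
        raise ValueError("sampling_percentage must be between 0 and 100")
    return {
        "EnableCapture": True,
        "InitialSamplingPercentage": sampling_percentage,
        "DestinationS3Uri": s3_uri,
        # Record both the request payloads and the model's responses.
        "CaptureOptions": [{"CaptureMode": "Input"}, {"CaptureMode": "Output"}],
    }
```

Sampling a fraction of traffic rather than all of it is a common way to keep storage costs down while still collecting enough data to analyze.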

For advanced debugging needs, Amazon SageMaker provides TensorBoard Integration. TensorBoard is a powerful visualization tool that allows users to analyze the training process, track metrics, and visualize model performance. With this integration, users can easily debug and optimize their models by analyzing various aspects such as loss, gradients, and activations.

Furthermore, SageMaker Model Monitor can run on a recurring schedule, continuously checking the deployed model’s behavior and performance. Each run generates a detailed report, and deviations from the expected behavior can raise alerts. This enables organizations to quickly detect and rectify issues, supporting the reliability and effectiveness of their models.

With these monitoring and debugging features, Amazon SageMaker empowers organizations to proactively monitor their models, detect anomalies, and resolve issues efficiently. This ensures that the models perform optimally and deliver reliable predictions over time.

Model Approval and Deployment

Before deploying machine learning models into production, organizations often have approval processes and workflows in place to ensure that only high-quality and reliable models are deployed. Amazon SageMaker offers features to facilitate model approval and deployment, enabling organizations to streamline this process.

One of the key features is the Model Registry, which acts as a central repository for managing trained models and their associated metadata. The Model Registry allows users to version their models, track changes, and set access controls. This ensures proper governance and traceability throughout the model deployment lifecycle.

Amazon SageMaker also supports a model approval workflow through the Model Registry. Each model version carries an approval status (pending manual approval, approved, or rejected), and deployment pipelines can be set up to act only on approved versions. This ensures that models go through a review before being deployed into production. Multi-step approvals that involve several stakeholders can be built on top of this status, for example with a CI/CD pipeline, supporting quality control and compliance.
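In API terms, approval is a status on a registered model version. The sketch below builds an `update_model_package` request that sets the status and looks up the newest approved version in a model package group. The helper names are hypothetical, and the SageMaker client is passed in so the logic can be exercised without AWS access.

```python
VALID_STATUSES = {"Approved", "Rejected", "PendingManualApproval"}


def build_approval_update(model_package_arn, status, reason):
    """Build update_model_package arguments that record an approval decision."""
    if status not in VALID_STATUSES:
        raise ValueError(f"unknown approval status: {status}")
    return {
        "ModelPackageArn": model_package_arn,
        "ModelApprovalStatus": status,
        "ApprovalDescription": reason,
    }


def latest_approved(sm_client, group_name):
    """Return the ARN of the newest approved version in a group, or None."""
    response = sm_client.list_model_packages(
        ModelPackageGroupName=group_name,
        ModelApprovalStatus="Approved",
        SortBy="CreationTime",
        SortOrder="Descending",
        MaxResults=1,
    )
    packages = response["ModelPackageSummaryList"]
    return packages[0]["ModelPackageArn"] if packages else None
```

A deployment job that only ever deploys the result of `latest_approved` enforces the rule that unapproved versions never reach production.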

To aid in the model approval process, Amazon SageMaker Studio offers a visual interface for reviewing, deploying, and managing models, making it easier for users to move through the approval workflow and track the status of each model.

In addition to the approval workflow, Amazon SageMaker facilitates Continuous Integration and Continuous Deployment (CI/CD) for machine learning models. With integration with popular CI/CD tools like AWS CodePipeline, organizations can automate the deployment pipeline and ensure a smooth and efficient delivery of models into production.

Moreover, Amazon SageMaker supports Model Deployment through AWS Marketplace. This feature allows organizations to publish their models and make them available to other users through the AWS Marketplace. This not only expands the reach of the models but also provides a monetization opportunity for the model creators.

With these features, Amazon SageMaker simplifies the model approval and deployment process, ensuring that only approved and high-quality models are deployed into production environments. This facilitates efficient collaboration, compliance, and scalability in the deployment of machine learning models.

Model Versioning and Rollbacks

When working with machine learning models, it is important to keep track of different versions and have the ability to roll back to a previous version if needed. Amazon SageMaker offers a robust set of features to manage model versioning and rollbacks effectively.

With Amazon SageMaker, users can easily create Model Versions for their trained models. Each model version represents a snapshot of the model’s parameters, code, and configuration at a specific point in time. By versioning models, users can track the changes made during training and deployment and have a complete record of the model’s evolution.

When it comes to model deployment, Amazon SageMaker allows users to specify the desired Model Version to be deployed. This gives organizations the flexibility to deploy different versions of the model simultaneously and evaluate their performance in real-world scenarios.

If a newly deployed model version shows unexpected behavior or underperforms, Amazon SageMaker supports rollbacks. Users can roll back by updating the endpoint to a previous model version, reverting to a known, stable configuration. This is invaluable for mitigating risk and ensuring the reliability and accuracy of deployed models.

To facilitate seamless versioning and rollbacks, Amazon SageMaker provides Endpoint Configurations. With endpoint configurations, users can specify the model version associated with an endpoint and define the desired deployment settings. This decoupling of model versions from endpoints makes it easy to switch between different versions and maintain a high level of flexibility for deployment configurations.
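Because an endpoint simply points at an endpoint configuration, a manual rollback amounts to pointing it back at the previous one. A minimal sketch, assuming the name of the last known-good configuration is tracked elsewhere (the function name and arguments are hypothetical):

```python
def roll_back(sm_client, endpoint_name, previous_config_name):
    """Point the endpoint back at an earlier endpoint configuration.

    Returns True if an update was requested, False if the endpoint
    already uses the requested configuration.
    """
    current = sm_client.describe_endpoint(EndpointName=endpoint_name)[
        "EndpointConfigName"
    ]
    if current == previous_config_name:
        return False
    sm_client.update_endpoint(
        EndpointName=endpoint_name,
        EndpointConfigName=previous_config_name,
    )
    return True
```

Here `sm_client` would typically be `boto3.client("sagemaker")`. Keeping old endpoint configurations around instead of deleting them is what makes this kind of rollback possible.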

Additionally, Amazon SageMaker provides automatic rollbacks through its deployment guardrails. During a blue/green endpoint update, SageMaker watches the Amazon CloudWatch alarms specified for the deployment; if one of them fires, for example because the new version’s error rate or latency deviates from what is expected, traffic is automatically shifted back to the previous, known-good version. This helps maintain the reliability and stability of deployed models without manual intervention.
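Automatic rollback is configured as part of an endpoint update. The sketch below builds a `DeploymentConfig` for a canary-style blue/green update that rolls back when any of the named CloudWatch alarms fires. The alarm names, canary size, and wait times are illustrative assumptions, as is the helper itself.

```python
def build_canary_deployment(alarm_names, canary_percent=10, bake_seconds=300):
    """Build the DeploymentConfig argument for update_endpoint."""
    if not alarm_names:
        raise ValueError("at least one alarm is needed to trigger a rollback")
    # To my understanding the API rejects canary sizes above 50% of capacity.
    if not 1 <= canary_percent <= 50:
        raise ValueError("canary_percent must be between 1 and 50")
    return {
        "BlueGreenUpdatePolicy": {
            "TrafficRoutingConfiguration": {
                "Type": "CANARY",
                "CanarySize": {"Type": "CAPACITY_PERCENT", "Value": canary_percent},
                # How long the canary serves traffic before the rest is shifted.
                "WaitIntervalInSeconds": bake_seconds,
            },
            # Keep the old fleet briefly after the shift so a rollback stays fast.
            "TerminationWaitInSeconds": 300,
            "MaximumExecutionTimeoutInSeconds": 3600,
        },
        "AutoRollbackConfiguration": {
            "Alarms": [{"AlarmName": name} for name in alarm_names]
        },
    }
```

The result would be passed as `DeploymentConfig` to `update_endpoint`, alongside the endpoint name and the new endpoint configuration name.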

By incorporating model versioning and rollback features into the workflow, Amazon SageMaker empowers organizations to effectively manage different versions of their machine learning models. This helps ensure reproducibility, traceability, and the ability to quickly resolve any issues that may arise during model deployment.

Model Auditing and Explainability

As machine learning models play an increasingly important role in critical decision-making processes, there is a growing need for transparency, accountability, and model explainability. Amazon SageMaker offers a range of features that enable organizations to audit their models and gain insights into their decision-making process.

One of the key features provided by Amazon SageMaker is model bias detection, delivered by SageMaker Clarify. It helps organizations identify where the training data or the model may exhibit biased behavior, supporting fairness and helping to mitigate potentially discriminatory outcomes. By analyzing demographic and other input features, users can detect and measure bias before and after training and act on the results.
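One simple way to measure bias in a dataset is the difference in proportions of labels (DPL), one of the pre-training bias metrics documented for SageMaker Clarify. The sketch below computes it by hand on a list of records; the field names (`group`, `label`) and the function names are assumptions made for the example.

```python
def positive_rate(labels):
    """Share of records that carry the positive label (1)."""
    return sum(1 for y in labels if y == 1) / len(labels)


def difference_in_proportions(records, facet_key, disadvantaged_value, label_key="label"):
    """DPL: positive-label rate of the other group minus that of the disadvantaged group.

    A value near 0 suggests balanced labels; a large positive value means the
    disadvantaged group receives the positive label less often.
    """
    advantaged = [r[label_key] for r in records if r[facet_key] != disadvantaged_value]
    disadvantaged = [r[label_key] for r in records if r[facet_key] == disadvantaged_value]
    return positive_rate(advantaged) - positive_rate(disadvantaged)
```

For instance, if 75% of one group and 25% of the other have a positive label, the DPL is 0.5, which would normally warrant a closer look at the data.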

In addition, Amazon SageMaker offers Model Explainability capabilities. This feature helps users understand and interpret the factors that contribute to the model’s predictions and decisions. By providing insights into the model’s internal workings, users can gain confidence and trust in their model’s outputs and explain them to stakeholders and regulatory bodies.

With Amazon SageMaker’s Explainability Reports, users can generate detailed documentation that explains how the model arrives at its predictions. These reports capture information about the model’s input features, feature importance rankings, and the impact of each feature on the predictions. This helps users comply with regulatory requirements, understand potential biases, and facilitate transparency in decision-making.
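Feature attributions of this kind are commonly based on Shapley values, which SageMaker Clarify approximates with SHAP. For intuition, the sketch below computes exact Shapley values for a tiny model by averaging each feature's marginal contribution over every ordering of the features. This brute-force version is only practical for a handful of features and is not what Clarify runs; the function name and the toy model in the usage note are illustrative.

```python
from itertools import permutations


def shapley_values(predict, instance, baseline):
    """Exact Shapley attribution of predict(instance) relative to predict(baseline)."""
    n = len(instance)
    totals = [0.0] * n
    orderings = list(permutations(range(n)))
    for order in orderings:
        # Start from the baseline and switch features to their real values one by one.
        current = list(baseline)
        previous = predict(current)
        for i in order:
            current[i] = instance[i]
            value = predict(current)
            totals[i] += value - previous  # marginal contribution of feature i
            previous = value
    return [t / len(orderings) for t in totals]
```

For a linear model such as `2*x0 + 3*x1 - x2`, each attribution equals the coefficient times the feature's distance from the baseline, and the attributions add up to the difference between the prediction and the baseline prediction.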

To enhance model auditing, Amazon SageMaker provides Model Metrics and Model Performance Monitoring. Users can track key metrics such as accuracy, precision, recall, and F1 score to evaluate the overall performance of their deployed models. Performance monitoring enables organizations to detect any degradation in model performance and take corrective actions promptly.
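For reference, the four metrics named above can be computed from predictions and ground-truth labels as follows. This is a plain-Python sketch for binary classification, independent of any SageMaker API; the function name is arbitrary.

```python
def classification_metrics(y_true, y_pred):
    """Accuracy, precision, recall, and F1 for binary labels (1 = positive)."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == 1 and p == 1)
    tn = sum(1 for t, p in pairs if t == 0 and p == 0)
    fp = sum(1 for t, p in pairs if t == 0 and p == 1)
    fn = sum(1 for t, p in pairs if t == 1 and p == 0)
    accuracy = (tp + tn) / len(pairs)
    # Guard against division by zero when a class is never predicted or never present.
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
    return {"accuracy": accuracy, "precision": precision, "recall": recall, "f1": f1}
```

Tracking these values over time, rather than only at training time, is what reveals gradual degradation in a deployed model.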

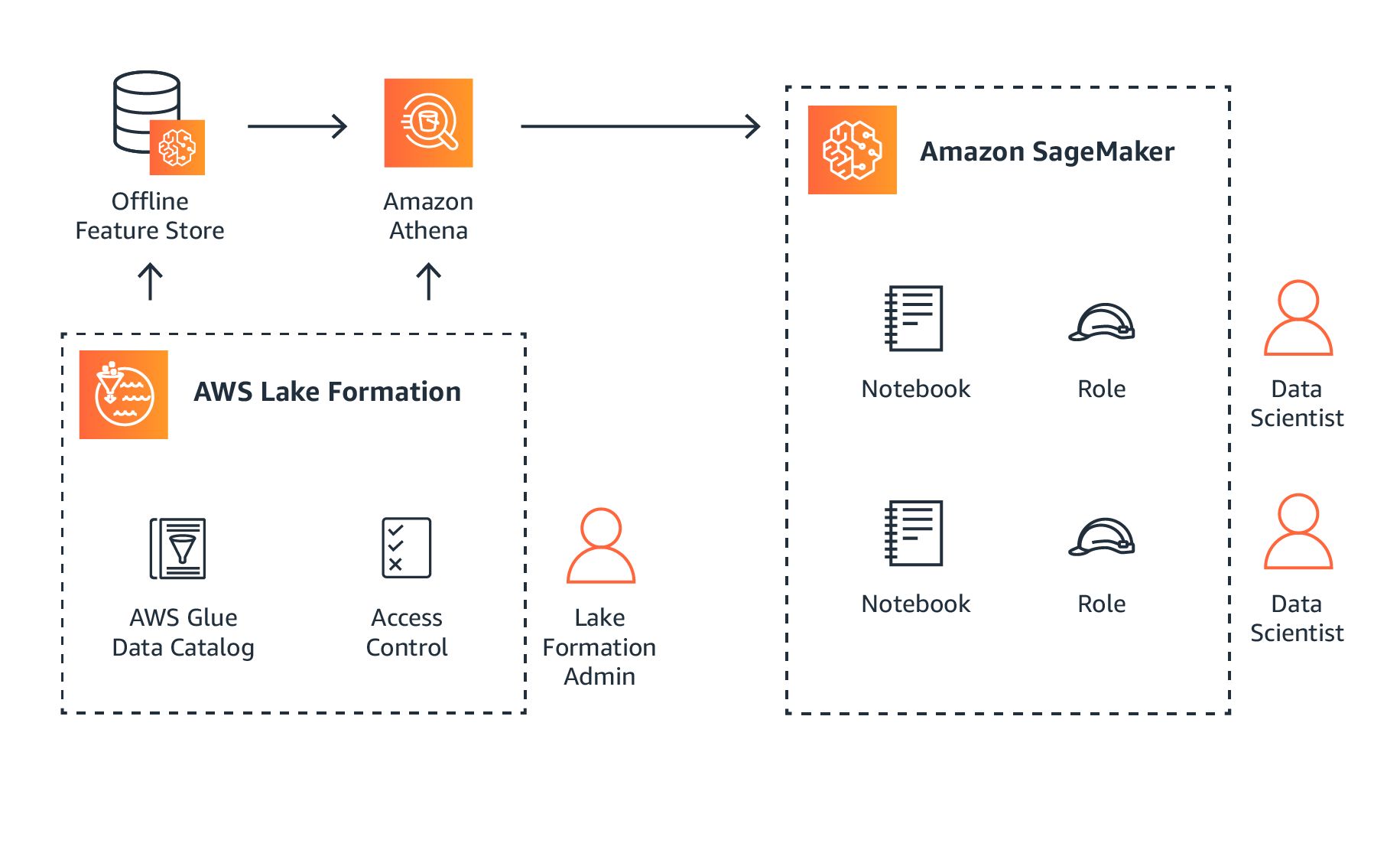

Furthermore, Amazon SageMaker provides its own feature store, SageMaker Feature Store, which registers its offline store in the AWS Glue Data Catalog and helps capture the lineage of features used in the model. This feature lineage information assists in auditing and compliance efforts, ensuring visibility into the data sources and transformations applied to train the model.

By offering model bias detection, explainability, performance monitoring, and feature lineage tracking, Amazon SageMaker empowers organizations to audit and justify the decisions made by their machine learning models. These features enable organizations to build and deploy models that are not only accurate but also fair and explainable.

Resource Management

Efficient resource management is crucial for optimizing costs and ensuring the scalability of machine learning workflows. With Amazon SageMaker, organizations have access to a range of features that enable effective management of resources.

Amazon SageMaker offers Automatic Model Tuning, which automates the process of hyperparameter optimization. By automatically searching through a defined hyperparameter space, this feature finds the optimal combination of hyperparameters that maximize model performance. This saves valuable time and resources that would otherwise be spent on manual hyperparameter tuning.
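SageMaker's tuner supports several search strategies, including random search and Bayesian optimization. To show the basic idea of searching a hyperparameter space, here is a small random-search sketch in plain Python. It is a stand-in for illustration, not the service's implementation, and the objective in the usage note is a made-up function.

```python
import random


def random_search(objective, space, trials=20, seed=0):
    """Sample hyperparameters uniformly and keep the best-scoring combination.

    space maps each hyperparameter name to a (low, high) range;
    objective takes a dict of sampled values and returns a score to maximize.
    """
    rng = random.Random(seed)
    best_params, best_score = None, float("-inf")
    for _ in range(trials):
        params = {name: rng.uniform(low, high) for name, (low, high) in space.items()}
        score = objective(params)
        if score > best_score:
            best_params, best_score = params, score
    return best_params, best_score
```

A call such as `random_search(lambda p: -(p["learning_rate"] - 0.1) ** 2, {"learning_rate": (0.0, 1.0)})` returns the sampled learning rate closest to 0.1. In a real tuning job each trial is a full training run, which is why automating the search saves so much effort.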

To manage and monitor the compute resources used during training and inference, Amazon SageMaker provides Resource Utilization Monitoring. Users can track CPU and GPU utilization, memory usage, and network traffic. This helps optimize resource allocation and identify any bottlenecks or inefficiencies in the machine learning workflow.

In addition, Amazon SageMaker offers Cost Allocation Tags, which enable users to allocate costs associated with Amazon SageMaker resources to different projects, teams, or departments. This feature allows organizations to accurately track and manage costs, improving cost efficiency and transparency.

For organizations with multiple teams working on different machine learning projects, Amazon SageMaker offers Multi-tenancy capabilities. With multi-tenancy, teams can securely share resources while maintaining data and model isolation. This reduces infrastructure costs and promotes collaboration and resource efficiency across the organization.

To further enhance resource management, Amazon SageMaker integrates with AWS Service Catalog. Service Catalog allows users to create and manage catalogs of approved AWS resources, promoting standardized and controlled resource provisioning. This feature ensures compliance with governance policies and improves resource visibility and management.

Moreover, Amazon SageMaker provides Auto Scaling capabilities, enabling the automatic scaling of compute resources based on workload demands. With auto scaling, organizations can efficiently allocate resources and handle sudden spikes in computational needs without manual intervention. This feature optimizes resource utilization and reduces costs by dynamically adjusting resources according to the workload.
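For endpoints, auto scaling is configured through Application Auto Scaling. The sketch below builds the two requests involved: registering the endpoint variant as a scalable target, and attaching a target-tracking policy based on invocations per instance. The capacity limits, target value, cooldowns, and policy name are illustrative assumptions.

```python
def build_scaling_requests(endpoint_name, variant_name, min_instances=1,
                           max_instances=4, invocations_per_instance=100.0):
    """Build arguments for register_scalable_target and put_scaling_policy."""
    common = {
        "ServiceNamespace": "sagemaker",
        "ResourceId": f"endpoint/{endpoint_name}/variant/{variant_name}",
        "ScalableDimension": "sagemaker:variant:DesiredInstanceCount",
    }
    target = {**common, "MinCapacity": min_instances, "MaxCapacity": max_instances}
    policy = {
        **common,
        "PolicyName": f"{endpoint_name}-{variant_name}-invocations",
        "PolicyType": "TargetTrackingScaling",
        "TargetTrackingScalingPolicyConfiguration": {
            # Add or remove instances to hold this many invocations per instance.
            "TargetValue": invocations_per_instance,
            "PredefinedMetricSpecification": {
                "PredefinedMetricType": "SageMakerVariantInvocationsPerInstance"
            },
            "ScaleInCooldown": 300,
            "ScaleOutCooldown": 60,
        },
    }
    return target, policy
```

Both dictionaries would be passed as keyword arguments to the corresponding methods of a `boto3.client("application-autoscaling")` client. A shorter scale-out cooldown than scale-in cooldown is a common choice so that capacity is added quickly and removed cautiously.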

By utilizing the resource management features offered by Amazon SageMaker, organizations can optimize costs, improve resource efficiency, and scale their machine learning workflows seamlessly. These features allow businesses to allocate their resources effectively and focus on developing and deploying high-quality machine learning models.

Cost Optimization

Cost optimization is a critical aspect of any machine learning project, and Amazon SageMaker provides several features to help organizations optimize their costs effectively.

One of the key features offered by Amazon SageMaker is Managed Spot Training. It runs training jobs on Spot Instances, which use spare AWS compute capacity at significantly reduced prices. Because Spot capacity can be interrupted, it suits training jobs that save checkpoints and can resume; real-time inference endpoints run on On-Demand instances instead. By using Spot Instances for training, organizations can achieve significant cost savings.
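Spot capacity is requested per training job. The sketch below builds the relevant portion of a `create_training_job` request and computes the realized savings from the training and billable seconds that `describe_training_job` reports. The checkpoint location and time limits are placeholder assumptions, and the helper names are hypothetical.

```python
def build_spot_settings(checkpoint_s3_uri, max_runtime_seconds=3600, max_wait_seconds=7200):
    """Build the Spot-related arguments of a create_training_job request."""
    # The wait limit covers both running time and time spent waiting for capacity.
    if max_wait_seconds < max_runtime_seconds:
        raise ValueError("max_wait_seconds must be at least max_runtime_seconds")
    return {
        "EnableManagedSpotTraining": True,
        "StoppingCondition": {
            "MaxRuntimeInSeconds": max_runtime_seconds,
            "MaxWaitTimeInSeconds": max_wait_seconds,
        },
        # Checkpoints let an interrupted job resume instead of starting over.
        "CheckpointConfig": {"S3Uri": checkpoint_s3_uri},
    }


def spot_savings_percent(training_seconds, billable_seconds):
    """Savings relative to paying for every second the job ran."""
    return (1 - billable_seconds / training_seconds) * 100
```

For example, a job that trained for 1,000 seconds but was billed for 250 saved 75%.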

To further optimize costs, Amazon SageMaker offers Managed Model Hosting with Auto Scaling. With managed hosting, organizations can automatically scale their deployed models based on the workload demand. This ensures that the right amount of compute resources are allocated at all times, eliminating unnecessary costs associated with underutilized resources.

Another cost optimization feature is Integration with AWS Cost Explorer. With this integration, users can visualize and analyze their Amazon SageMaker costs using Cost Explorer. This helps organizations identify cost trends, detect anomalies, and make informed decisions to optimize their machine learning spending.
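The same analysis can be scripted against the Cost Explorer API. The sketch below builds a `get_cost_and_usage` query that groups SageMaker spending by a cost allocation tag and sums the result per tag value. The tag key `project` and the service name string `Amazon SageMaker` are assumptions that should be checked against the values shown in your own account.

```python
def build_cost_query(start, end, tag_key="project"):
    """Build get_cost_and_usage arguments; dates are ISO strings (YYYY-MM-DD)."""
    return {
        "TimePeriod": {"Start": start, "End": end},
        "Granularity": "MONTHLY",
        "Metrics": ["UnblendedCost"],
        # Assumed service name; verify the exact string in Cost Explorer.
        "Filter": {"Dimensions": {"Key": "SERVICE", "Values": ["Amazon SageMaker"]}},
        "GroupBy": [{"Type": "TAG", "Key": tag_key}],
    }


def cost_by_group(response):
    """Sum the unblended cost of each group across all returned time periods."""
    totals = {}
    for period in response["ResultsByTime"]:
        for group in period["Groups"]:
            key = group["Keys"][0]
            # Amounts are returned as strings, so convert before adding.
            amount = float(group["Metrics"]["UnblendedCost"]["Amount"])
            totals[key] = totals.get(key, 0.0) + amount
    return totals
```

The query would be sent with `boto3.client("ce").get_cost_and_usage(**build_cost_query(...))`, and the response passed to `cost_by_group`.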

Furthermore, Amazon SageMaker offers SageMaker Savings Plans, which allow users to commit to a consistent amount of Amazon SageMaker usage, measured in dollars per hour, over 1 or 3 years. According to AWS, committing to a Savings Plan can save up to 64% on Amazon SageMaker costs compared to On-Demand pricing.

In addition, the Cost Allocation Tags described under Resource Management feed directly into cost optimization: once costs are broken down by project, team, or department, organizations can see where spending concentrates and make data-driven decisions to reduce it.

To help users estimate their costs before deploying machine learning models, AWS provides the AWS Pricing Calculator, which covers Amazon SageMaker. It produces a detailed estimate from inputs such as instance types, training time, and data transfer. This enables organizations to plan and budget their machine learning projects effectively.

Lastly, Amazon SageMaker integrates with various AWS services, such as AWS Budgets and AWS Cost Anomaly Detection, allowing users to set budget thresholds and receive alerts when costs exceed predefined limits. This proactive cost management feature helps organizations avoid unexpected expenses and optimize their machine learning spending.

By leveraging the cost optimization features provided by Amazon SageMaker, organizations can effectively manage and control their machine learning expenses. These features enable businesses to optimize costs without compromising on performance, ensuring the maximum return on investment for their machine learning projects.

Conclusion

Amazon SageMaker offers a comprehensive suite of features that empower organizations to effectively govern their machine learning models throughout the entire lifecycle. From data management and model hosting to auditing, explainability, and cost optimization, Amazon SageMaker provides a range of tools to address the challenges faced by organizations in the realm of machine learning.

The data management features provided by Amazon SageMaker, such as Data Wrangler and Data Quality Monitoring, enable organizations to preprocess, clean, and monitor their data, ensuring the accuracy and reliability of their machine learning models. Additionally, the model hosting and deployment features, including Endpoint creation, Bring Your Own Environment, and Lambda integration, simplify the process of deploying models into production environments, while offering flexibility and scalability.

Monitoring and debugging are critical aspects of model governance, and Amazon SageMaker provides features such as CloudWatch integration, SageMaker Model Monitor, and Model Insights to enable users to monitor, analyze, and debug their models effectively. Moreover, the model approval and deployment features, including the Model Registry, the model approval workflow, and CI/CD integration, streamline the model deployment process, ensuring only high-quality models make it to production.

Ensuring transparency and accountability, Amazon SageMaker offers model auditing and explainability features, allowing organizations to detect bias, explain model decisions, and monitor model performance. The resource management features, including Automatic Model Tuning, Resource Utilization Monitoring, and Multi-tenancy, optimize resource allocation, improve cost efficiency, and support collaboration across teams.

Cost optimization is an essential aspect of machine learning projects, and Amazon SageMaker provides features such as Spot Instances, Managed Model Hosting with Auto Scaling, and Savings Plans, enabling organizations to optimize their costs while maintaining high performance. The integration with AWS Cost Explorer, Cost Allocation Tags, and the cost estimator further help organizations track and manage their machine learning expenses effectively.

In conclusion, Amazon SageMaker offers a comprehensive set of features that empower organizations to govern their machine learning models effectively. With its extensive capabilities in data management, model hosting and deployment, monitoring, auditing, resource management, and cost optimization, Amazon SageMaker provides a robust platform that enables organizations to navigate the complexities of machine learning and drive successful outcomes.