Amazon Web Services (AWS) has unveiled SageMaker HyperPod, a purpose-built service designed to streamline the training and fine-tuning of large language models (LLMs). SageMaker has been a central part of AWS's machine learning strategy as its service for building, training, and deploying ML models, and with the rise of generative AI, Amazon is positioning it as the core product for simplifying and optimizing LLM training and fine-tuning.

Key Takeaway

SageMaker HyperPod, Amazon's new service for training and fine-tuning large language models (LLMs), can cut training time by up to 40%, according to Amazon. It creates distributed clusters of accelerated instances and provides tools for efficiently distributing models and data across them. Fail-safes keep a job running when hardware fails, and frequent checkpoints let users pause, analyze, and optimize training without starting over. Early users, such as Perplexity AI, report positive experiences with AWS's infrastructure for LLM development.

Introducing SageMaker HyperPod

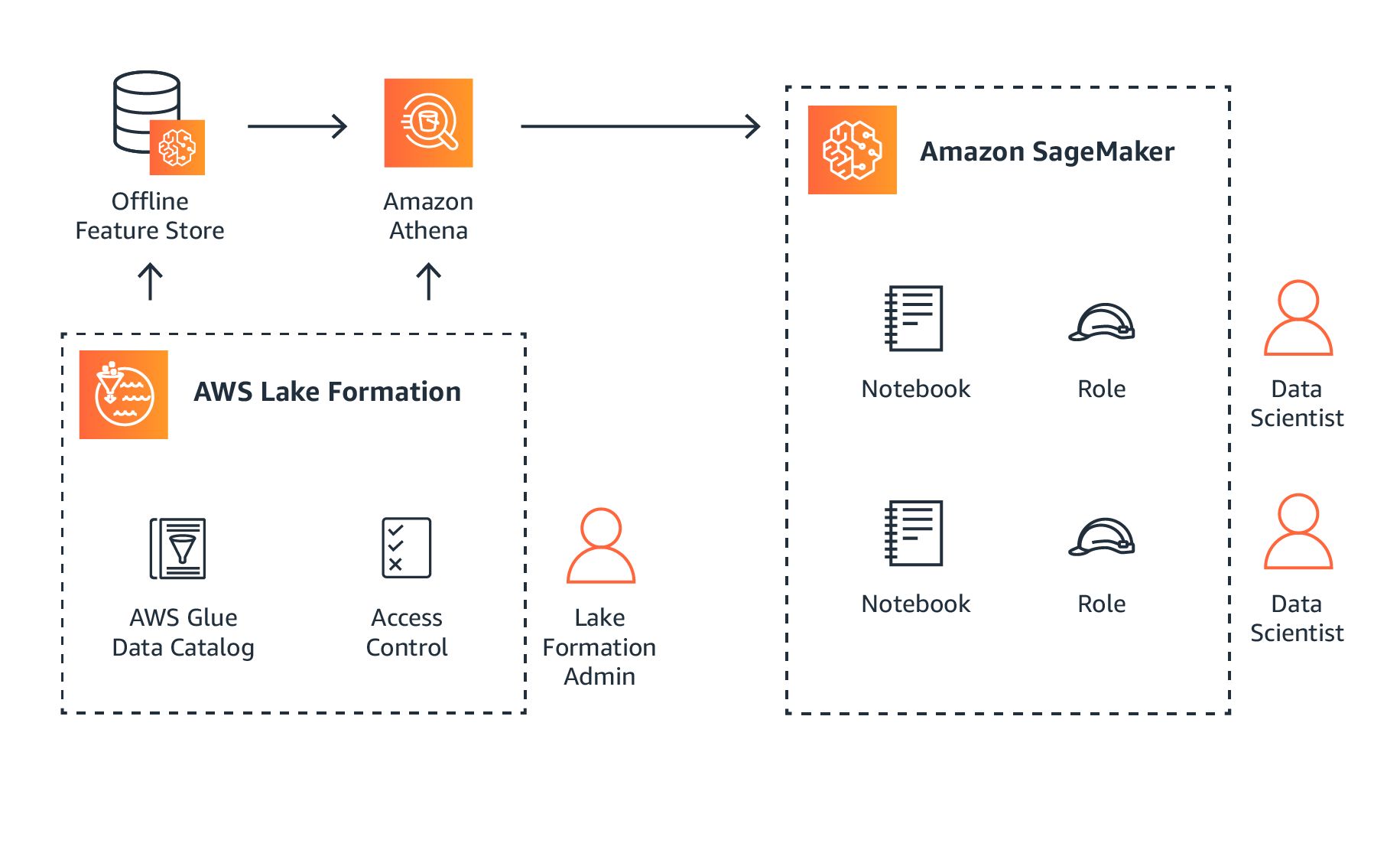

SageMaker HyperPod lets users create a distributed cluster of accelerated instances optimized for training. The service provides tools for distributing models and data across the cluster, which speeds up training. It also lets users save checkpoints frequently, so they can pause to analyze and optimize a run without starting over. Built-in fail-safes keep the entire training job from failing if a single GPU goes down.

Together, these features give ML teams a streamlined experience and effectively make the cluster self-healing. Amazon says they can reduce the time needed to train foundation models by up to 40%, which translates into lower costs and a faster time to market.
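The checkpoint-and-resume idea behind this kind of fault tolerance can be illustrated with a small, framework-free sketch. This is not HyperPod's API: `save_checkpoint`, `load_checkpoint`, and `train` are hypothetical helpers, the "model" is a single scalar, and the hardware fault is simulated with an exception. The point is only that a job which checkpoints every N steps loses at most N steps of work when a node fails, instead of restarting from step 0.

```python
import json
import os
import tempfile


def save_checkpoint(path, step, state):
    """Write training state atomically (hypothetical helper, not a HyperPod API)."""
    tmp_path = path + ".tmp"
    with open(tmp_path, "w") as f:
        json.dump({"step": step, "state": state}, f)
    # os.replace is atomic, so a crash mid-write never leaves a half-written checkpoint.
    os.replace(tmp_path, path)


def load_checkpoint(path):
    """Return (step, state) from the last checkpoint, or a fresh start if none exists."""
    if not os.path.exists(path):
        return 0, {"weight": 0.0}
    with open(path) as f:
        ckpt = json.load(f)
    return ckpt["step"], ckpt["state"]


def train(path, total_steps, ckpt_every, fail_at=None):
    """Toy training loop: each step adds 1.0 to a scalar 'weight'.

    fail_at is a hypothetical knob that simulates a hardware fault
    (for example, a GPU dropping out) just before that step runs.
    """
    step, state = load_checkpoint(path)  # resume wherever the last run left off
    while step < total_steps:
        if step == fail_at:
            raise RuntimeError(f"simulated node failure at step {step}")
        state["weight"] += 1.0  # stand-in for a real optimizer update
        step += 1
        if step % ckpt_every == 0:
            save_checkpoint(path, step, state)
    return step, state


if __name__ == "__main__":
    ckpt_path = os.path.join(tempfile.mkdtemp(), "ckpt.json")
    try:
        train(ckpt_path, total_steps=100, ckpt_every=10, fail_at=57)
    except RuntimeError as err:
        print(err)  # simulated node failure at step 57
    # The restarted job picks up from step 50, losing only 7 steps of work.
    print(load_checkpoint(ckpt_path)[0])  # 50
    print(train(ckpt_path, total_steps=100, ckpt_every=10))  # (100, {'weight': 100.0})
```

Checkpoint frequency is a trade-off: saving more often reduces the work lost after a fault but adds I/O overhead, which is why large-scale training systems invest in making checkpoints fast to write.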

Accelerated Training Process

Users can train models on Amazon's custom Trainium and Trainium 2 chips or on Nvidia GPU instances, including those equipped with H100 GPUs. Amazon claims that SageMaker HyperPod can reduce training time by up to 40%. The service builds on Amazon's earlier experience using SageMaker for LLM development: the Falcon 180B model, for instance, was trained on a SageMaker cluster of thousands of A100 GPUs. AWS drew on the lessons from those scaling efforts to build HyperPod specifically for training and fine-tuning LLMs.

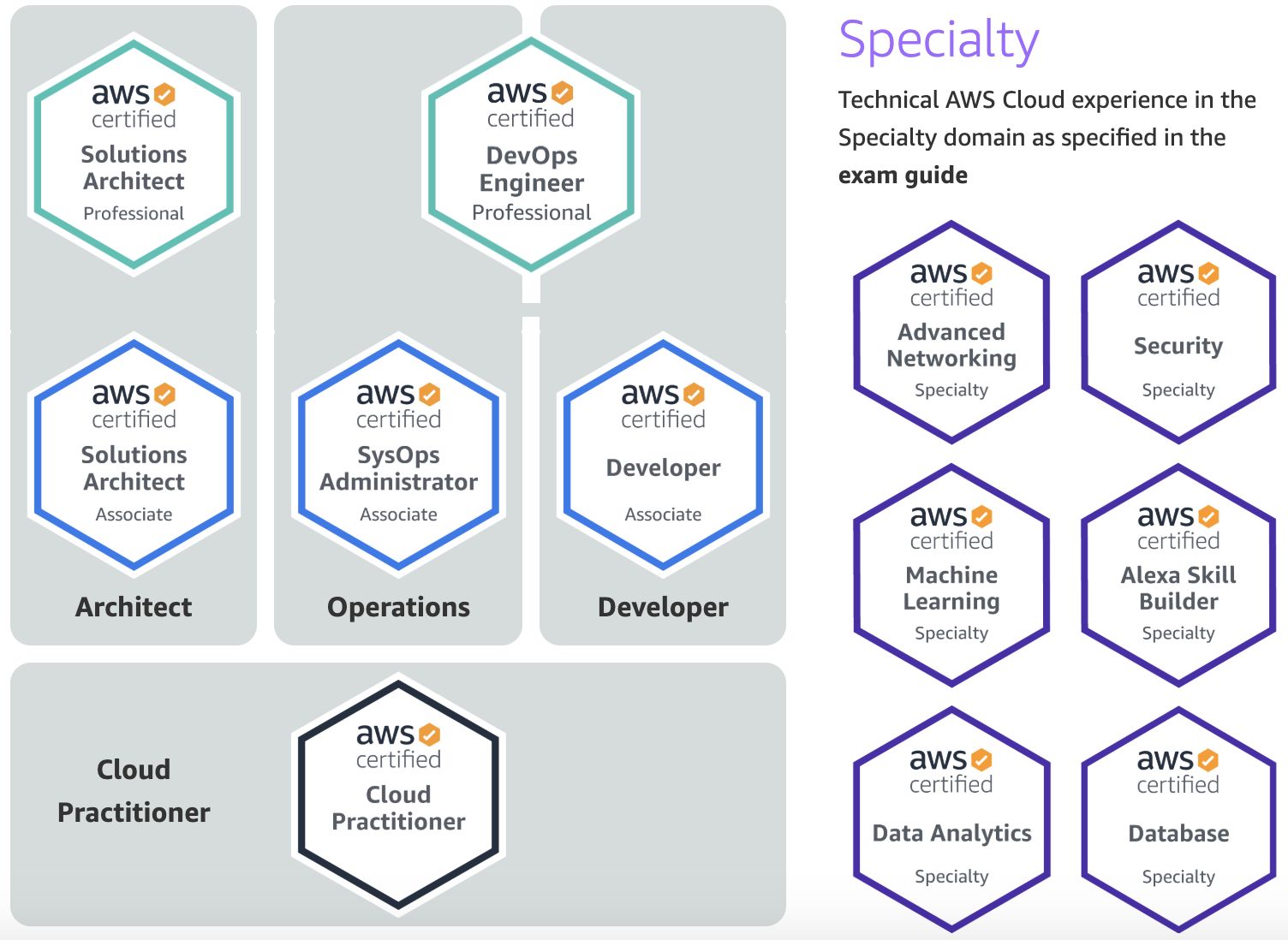

Positive Feedback from Early Users

During the private beta, Perplexity AI, a company building AI-powered search, tested SageMaker HyperPod. The Perplexity team was initially skeptical of AWS's infrastructure for large-model training but found it easy to get support and to access GPUs for its use case. The team also highlighted that the AWS HyperPod team had optimized the interconnects between Nvidia GPUs, enabling efficient communication of gradients and parameters across nodes.
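Gradient exchange across nodes is typically done with an all-reduce collective, and libraries such as Nvidia's NCCL implement ring-based variants of it. The sketch below simulates a ring all-reduce in plain Python to show the data flow. It illustrates the general technique only, not what AWS specifically tuned; `ring_allreduce` is a hypothetical helper, and Python lists stand in for per-GPU gradient buffers.

```python
def ring_allreduce(grads):
    """Average equal-length gradient vectors across n simulated workers.

    grads[i] is worker i's local gradient (a hypothetical stand-in for a GPU buffer).
    Returns one list per worker; after the call every worker holds the same average.
    """
    n = len(grads)
    length = len(grads[0])
    bufs = [list(g) for g in grads]
    # Split the vector into n contiguous chunks (sizes differ by at most one).
    bounds = [length * k // n for k in range(n + 1)]

    def chunk(c):
        return range(bounds[c], bounds[c + 1])

    def ring_pass(pick_chunk, combine):
        # Every worker i sends one chunk to its right-hand neighbour (i + 1) % n.
        # Messages are built first so all sends in a step happen "simultaneously".
        msgs = []
        for i in range(n):
            c = pick_chunk(i)
            msgs.append(((i + 1) % n, c, [bufs[i][j] for j in chunk(c)]))
        for dst, c, vals in msgs:
            for j, v in zip(chunk(c), vals):
                bufs[dst][j] = combine(bufs[dst][j], v)

    # Phase 1, reduce-scatter: after n - 1 steps worker i owns the full sum of chunk (i + 1) % n.
    for s in range(n - 1):
        ring_pass(lambda i: (i - s) % n, lambda old, new: old + new)
    # Phase 2, all-gather: circulate the finished chunks so every worker has all of them.
    for s in range(n - 1):
        ring_pass(lambda i: (i + 1 - s) % n, lambda old, new: new)

    return [[x / n for x in b] for b in bufs]


if __name__ == "__main__":
    local_grads = [[1.0, 2.0, 3.0, 4.0], [5.0, 6.0, 7.0, 8.0], [9.0, 10.0, 11.0, 12.0]]
    for worker, avg in enumerate(ring_allreduce(local_grads)):
        print(worker, avg)  # every worker prints [5.0, 6.0, 7.0, 8.0]
```

In a ring all-reduce each worker sends roughly 2(n-1)/n times the size of its gradient, so per-worker traffic stays nearly constant as the number of workers n grows, while the number of sequential steps, 2(n-1), does grow. That is why both interconnect bandwidth and latency matter at cluster scale.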