Introduction

Welcome to our article on “What is Noise in Data in Machine Learning?” In the world of machine learning, data is the lifeblood that fuels the development and training of intelligent models. However, not all data is created equal. It is essential to understand the concept of noise in data and its impact on the accuracy and reliability of machine learning models.

Noise in data refers to any irrelevant, redundant, or erroneous information that can adversely affect the performance of machine learning algorithms. It is like a sneaky intruder that corrupts the quality of the data, making it less accurate and less trustworthy.

As the name suggests, noise disrupts the signal or the meaningful information in the data. It can originate from various sources, such as human error during data collection, equipment malfunction, measurement inaccuracies, or even natural variations in the data. Noise is ubiquitous, and if not properly handled, can lead to incorrect predictions, inaccurate insights, and unreliable decisions based on machine learning models.

To dive deeper into the understanding of noise in data, we will explore its different sources and types, and how it impacts machine learning models. Furthermore, we will discuss some effective strategies to handle and mitigate noise in data to enhance the performance and robustness of machine learning systems.

Now, let’s explore the fascinating world of noise in data and unravel its mysteries to elevate our understanding of machine learning algorithms.

What is Noise in Data?

Noise in data refers to any unwanted or irrelevant information that contaminates the underlying signal, making it difficult to extract meaningful patterns or relationships. It is considered an unwanted variability that hinders the accuracy and reliability of data analysis and machine learning models.

Think of data as a symphony, where the signal represents the beautiful melody, and the noise represents the discordant notes that disrupt the harmony. Just as the discordant notes can distort the melody, noise in data can distort the patterns and relationships we seek to uncover.

In the context of machine learning, noise can manifest in various forms, such as outliers, inconsistencies, measurement errors, missing data, or even irrelevant features. It can occur during data collection, data preprocessing, or even through natural variations in the data.

It is essential to identify and quantify the amount of noise present in the data, as it directly affects the accuracy and performance of machine learning models. Too much noise can lead to erroneous predictions and unreliable insights. Noise also raises the risk of overfitting, where a flexible model fits the noise in the training data rather than the underlying signal and then performs poorly on new, unseen data.

By understanding the nature of noise in data and its potential sources, we can develop effective strategies to mitigate its impact and improve the overall quality and reliability of our machine learning models. This involves implementing various data preprocessing techniques, feature engineering approaches, and outlier detection methodologies.

Now that we have a solid grasp on what noise in data entails, let’s explore the different sources and types of noise, and how they can impact the performance of machine learning models.

Sources of Noise in Data

Noise in data can originate from various sources, each contributing to the overall degradation of data quality. It is important to identify and understand these sources to effectively mitigate their impact on machine learning models. Let’s explore some common sources of noise in data:

- Data Collection Errors: During the process of data collection, errors can occur due to human factors, equipment malfunctions, or inaccuracies in the measuring instruments. For example, a sensor measuring temperature may occasionally provide erroneous readings, introducing noise into the data.

- Data Entry Mistakes: When humans manually input data into a system, there is a possibility of typographical errors, misspellings, or incorrect data values. These mistakes can introduce noise and distort the accuracy of the dataset.

- Data Interference: In some cases, external factors can interfere with the data during its collection or transmission. This interference can be caused by electromagnetic radiation, electrical noise, or other environmental factors, leading to corrupted data.

- Irrelevant Features: Including irrelevant features in the dataset can introduce noise into the model training process. These features may not contribute to the target variable and can mislead the model, leading to reduced performance and accuracy.

- Measurement Inaccuracies: Inaccurate measuring devices or insufficient sampling rates can lead to measurement noise in the data. This type of noise can affect the precision and reliability of the collected data.

It is important to identify and address the sources of noise in data to ensure that the machine learning models are trained on high-quality, accurate data. By mitigating the impact of noise, we can improve the performance and reliability of our models. In the next section, we will explore the different types of noise that can be present in the data.

Types of Noise in Data

Noise in data can manifest in different forms, each with its own characteristics and impact on machine learning models. Understanding the different types of noise is crucial for developing appropriate strategies to handle them. Let’s explore the most common types of noise in data:

- Random Noise: Also known as stochastic noise, random noise refers to the unpredictable variations or fluctuations in the data. It can result from measurement errors, environmental factors, or inherent randomness in the system being observed. Random noise disrupts the underlying signal, making it challenging to discern meaningful patterns or relationships.

- Systematic Noise: Systematic noise, also known as bias or non-random noise, follows a specific pattern or trend. It is caused by consistent and repeatable factors that affect the data. Systematic noise can arise from equipment calibration issues, biases in data collection methods, or systematic errors in measurement instruments. Unlike random noise, systematic noise introduces a consistent bias in the data, which can impact the accuracy and reliability of machine learning models.

- Measurement Noise: Measurement noise is a type of noise that occurs due to errors in the measurement process itself. It can arise from limitations in the measuring instruments, specific conditions during data collection, or human errors in recording the measurements. Measurement noise can distort the accuracy of the data, leading to unreliable insights and predictions.

- Missing Data: Missing data occurs when certain observations or attributes are not recorded or are incomplete. This can happen due to various reasons, such as data entry errors, equipment failures, or subjects not providing the information. Missing data can introduce uncertainty and bias into the analysis and modeling process, making it crucial to handle it appropriately during data preprocessing.

- Outliers: Outliers are data points that deviate significantly from the rest of the data or from expected patterns. They can occur due to measurement errors, data entry mistakes, or genuinely extreme values in the underlying process being observed. Outliers can introduce noise into the data and impact the performance of machine learning models, as they can skew statistical measures and influence the learning process.

Identifying and addressing the different types of noise in data is essential for building robust machine learning models. By understanding the characteristics of each type of noise, we can implement appropriate techniques to handle and mitigate their impact on the models. In the next section, we will explore the implications of noise on machine learning models and the strategies to handle it effectively.
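
To make the difference between random and systematic noise concrete, here is a minimal sketch using only Python’s standard library. The temperature value, the offset, and the noise spread are hypothetical numbers chosen for illustration, not measurements from a real sensor.

```python
import random
import statistics

random.seed(0)  # fixed seed so the sketch is repeatable

TRUE_TEMPERATURE = 20.0  # hypothetical ground-truth value
OFFSET = 2.0             # hypothetical calibration error (systematic noise)
SIGMA = 1.0              # hypothetical spread of the random noise

# Random noise: each reading is off by an unpredictable, zero-mean amount.
random_readings = [TRUE_TEMPERATURE + random.gauss(0.0, SIGMA) for _ in range(1000)]

# Systematic noise: every reading is shifted by the same offset.
biased_readings = [TRUE_TEMPERATURE + OFFSET for _ in range(1000)]

random_mean_error = statistics.mean(random_readings) - TRUE_TEMPERATURE
biased_mean_error = statistics.mean(biased_readings) - TRUE_TEMPERATURE

print(f"mean error with random noise:     {random_mean_error:+.3f}")
print(f"mean error with systematic noise: {biased_mean_error:+.3f}")
```

Averaging many readings pulls the random error toward zero, while the systematic error stays at the full offset no matter how many readings are collected.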

Random Noise

Random noise, also known as stochastic noise, is an unpredictable variation or fluctuation that occurs in data. It is the result of inherent randomness, measurement errors, or environmental factors. Random noise can manifest in various forms, such as small fluctuations or irregularities in the data points.

One of the main challenges posed by random noise is its ability to obscure the underlying signal or pattern in the data. It introduces a level of uncertainty and can make it challenging to extract meaningful insights or relationships. Random noise can affect the accuracy and reliability of machine learning models by misguiding them to learn from the noise rather than the true underlying patterns.

It is important to distinguish between random noise and the variability that arises from genuine patterns or relationships in the data. Statistical techniques such as hypothesis testing and confidence intervals can help in differentiating between meaningful variability and random noise.

Handling random noise requires careful preprocessing of the data. Some common approaches include:

- Smoothing Techniques: Smoothing can help reduce the impact of random noise by applying filters or algorithms that average out the fluctuations. Techniques such as moving averages or Gaussian smoothing can be used to smooth out the data and extract the underlying signal.

- Feature Selection and Regularization: Selecting the most relevant features and applying regularization techniques, such as L1 or L2 regularization, can help reduce the impact of noise in the data. By focusing on the most informative features, the model can be more robust to random noise.

- Cross-Validation: Implementing cross-validation techniques, such as k-fold cross-validation, can help assess the model’s performance and generalizability. By evaluating the model’s performance on multiple subsets of the data, cross-validation helps identify if the model’s predictions are consistently affected by random noise.

- Ensemble Methods: Utilizing ensemble learning techniques, such as bagging or boosting, can help mitigate the impact of random noise by combining multiple models’ predictions. Ensemble methods take advantage of the diversity among models to reduce the influence of noisy data and improve the overall performance and robustness.

By employing these strategies, it is possible to reduce the impact of random noise and improve the accuracy and reliability of machine learning models. Although random noise is unavoidable in many real-world datasets, proper preprocessing techniques can address its effects and enable better predictions and insights.
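
The moving-average smoothing mentioned above can be sketched as follows. This is a minimal illustration under stated assumptions: the sine-wave signal, the noise level, and the window size of 9 are all hypothetical choices, and a real dataset would need its own window tuning.

```python
import math
import random


def moving_average(values, window=5):
    """Centered moving average; the window shrinks near the edges."""
    half = window // 2
    smoothed = []
    for i in range(len(values)):
        lo = max(0, i - half)
        hi = min(len(values), i + half + 1)
        smoothed.append(sum(values[lo:hi]) / (hi - lo))
    return smoothed


def mse(a, b):
    """Mean squared error between two equally long sequences."""
    return sum((x - y) ** 2 for x, y in zip(a, b)) / len(a)


random.seed(1)
# Hypothetical clean signal: a slow sine wave.
clean = [math.sin(2 * math.pi * t / 100) for t in range(200)]
# The same signal with zero-mean Gaussian noise added.
noisy = [c + random.gauss(0.0, 0.3) for c in clean]
smoothed = moving_average(noisy, window=9)

print("MSE noisy vs clean:   ", round(mse(noisy, clean), 4))
print("MSE smoothed vs clean:", round(mse(smoothed, clean), 4))
```

A wider window removes more noise but also blurs genuine rapid changes in the signal, so the window size is a trade-off rather than a fixed rule.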

Systematic Noise

Systematic noise, also known as bias or non-random noise, is a type of noise that follows a consistent pattern or trend. It is caused by factors that introduce a systematic deviation from the true underlying signal in the data. Unlike random noise, which is unpredictable and fluctuates, systematic noise introduces a fixed bias that affects the entire dataset.

Systematic noise can arise from various sources, such as calibration issues in measuring devices, biases in data collection methods, or even systematic errors in the measurement instruments. It can lead to a distortion of the true relationships and patterns in the data, impacting the accuracy and reliability of machine learning models.

One of the challenges posed by systematic noise is its potential to mislead machine learning models into learning incorrect relationships or associations. If the systematic noise is not properly accounted for, the models may generalize the bias and produce inaccurate predictions or insights.

To address systematic noise, it is important to identify its presence and apply appropriate techniques. Some common strategies to handle systematic noise include:

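- Data Normalization: Normalizing the data can help when a systematic offset or scale difference is tied to a known source, such as a particular instrument or collection site. Centering and scaling the features within each source removes constant offsets between sources, so that they do not disproportionately influence the model’s learning process. Scaling the whole dataset at once does not remove a bias that is shared by every observation.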

- Bias Correction: If the bias in the data is known or can be estimated, bias correction techniques can be applied. These techniques involve adjusting the data or model to remove the systematic bias and improve the accuracy of predictions.

- Feature Engineering: Carefully crafting and selecting features can help mitigate the impact of systematic noise. By incorporating relevant features and removing those that are influenced by the bias, the model can focus on capturing the true relationships in the data.

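- Cross-Validation: Implementing cross-validation techniques, such as stratified cross-validation, can help assess the model’s performance across different subsets of the data. Large differences between folds, particularly when the folds are split by data source, can indicate that systematic noise is influencing the model’s predictions. A bias shared by the entire dataset will not show up this way and needs an independent reference to detect.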

Handling systematic noise requires a comprehensive understanding of the data and the sources of bias. By applying appropriate preprocessing techniques and feature engineering methods, it is possible to mitigate the impact of systematic noise and improve the accuracy and reliability of machine learning models.
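
As a rough sketch of the bias correction idea above, suppose a sensor has been checked against a trusted reference a few times. The calibration pairs, the new readings, and the assumption that the bias is a constant offset are all hypothetical; real systematic errors may vary with the measured value and need a richer correction.

```python
import statistics

# Hypothetical calibration pairs: (sensor reading, trusted reference value).
calibration = [(21.9, 20.0), (27.1, 25.0), (32.0, 30.0), (17.0, 15.0)]

# Estimate the constant offset as the mean difference between reading and reference.
differences = [reading - reference for reading, reference in calibration]
offset = statistics.mean(differences)


def correct(reading, estimated_offset):
    """Remove the estimated constant bias from a single reading."""
    return reading - estimated_offset


new_readings = [24.0, 29.5, 19.2]  # hypothetical uncorrected readings
corrected = [round(correct(r, offset), 2) for r in new_readings]

print("estimated offset:", round(offset, 2))
print("corrected readings:", corrected)
```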

Measurement Noise

Measurement noise is a type of noise that occurs due to errors in the measurement process itself. It can stem from limitations in the measuring instruments, specific conditions during data collection, or human errors in recording the measurements. Measurement noise can introduce variability and inaccuracies in the collected data, affecting the overall quality and reliability.

One of the challenges posed by measurement noise is its potential to distort the true values of the measured variables. The noise can create deviations from the actual signal, leading to discrepancies between the observed measurements and the underlying ground truth.

Measurement noise can have a detrimental impact on machine learning models, as it can introduce uncertainties and inaccuracies in the learned relationships. It can mislead models into making incorrect predictions or attributing the variations to noise rather than the genuine patterns in the data.

To handle measurement noise effectively, it is important to employ appropriate preprocessing techniques and feature engineering strategies. Some common approaches to address measurement noise include:

- Filtering Techniques: Applying digital signal processing filters can help mitigate the impact of measurement noise. Techniques such as low-pass, high-pass, or band-pass filters can be used to remove or reduce specific frequency components associated with noise, retaining the useful signal.

- Feature Scaling: Scaling does not remove measurement noise, but it can limit how much that noise dominates a model. Techniques such as standardization or normalization bring the features to a similar scale, so that noise in a feature with large numeric values does not overwhelm scale-sensitive models, allowing them to focus on the inherent patterns.

- Improved Data Collection Protocols: Enhancing the data collection process by ensuring accurate calibration of instruments, proper training of personnel, and following standardized protocols can help minimize measurement noise. By reducing the variability introduced during data collection, the impact of measurement noise can be minimized.

- Ensemble Methods: Utilizing ensemble learning techniques, such as bagging or stacking, can help mitigate the impact of measurement noise. These methods involve training multiple models on different subsets of the data and combining their predictions. By aggregating the outputs of multiple models, the impact of noise can be reduced, improving the overall performance and robustness.

By utilizing these strategies, it is possible to mitigate the impact of measurement noise and improve the accuracy and reliability of machine learning models. Proper handling of measurement noise ensures that the models are trained on high-quality data, minimizing the influence of inaccuracies and uncertainties.
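
The low-pass filtering idea from the list above can be illustrated with a first-order filter (exponential smoothing), one of the simplest low-pass filters. This is a sketch under assumptions: the constant true value, the noise level, and the `alpha` setting are hypothetical, and signal-processing libraries offer more capable filter designs.

```python
import random
import statistics


def low_pass(values, alpha=0.2):
    """First-order low-pass filter (exponential smoothing).

    alpha lies in (0, 1]; smaller values filter more aggressively.
    """
    if not values:
        return []
    filtered = [values[0]]
    for x in values[1:]:
        # Blend the new reading with the previous filtered value.
        filtered.append(alpha * x + (1 - alpha) * filtered[-1])
    return filtered


random.seed(2)
true_value = 5.0  # hypothetical quantity being measured
readings = [true_value + random.gauss(0.0, 0.5) for _ in range(300)]
filtered = low_pass(readings, alpha=0.1)

# Skip the first 50 samples so the filter has settled before comparing spread.
raw_spread = statistics.pstdev(readings[50:])
filtered_spread = statistics.pstdev(filtered[50:])
print("spread of raw readings:     ", round(raw_spread, 3))
print("spread of filtered readings:", round(filtered_spread, 3))
```

The filter trades responsiveness for stability: a small `alpha` suppresses more noise but reacts more slowly when the measured quantity genuinely changes.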

Missing Data

Missing data refers to the absence of certain observations or attributes in a dataset. It can occur for various reasons, such as data entry errors, equipment failures, subjects not providing certain information, or simply because the data was not collected for specific variables. Dealing with missing data is essential in order to avoid biased or incomplete analyses and inaccurate machine learning models.

Missing data can present challenges in data analysis and modeling because it can lead to biased results, reduced statistical power, and compromised generalizability. It is important to handle missing data appropriately to ensure the validity and reliability of the analysis.

There are several common strategies for handling missing data:

- Complete Case Analysis: This approach involves analyzing only the cases or observations that have complete data for all variables of interest. While simple, this approach can lead to biased results if the missing data is not random, potentially excluding valuable information.

- Imputation Methods: Imputation techniques are used to fill in the missing values with estimated or imputed values. This allows for the inclusion of all cases in the analysis and reduces bias. Imputation methods can range from simple techniques, such as mean or median imputation, to more sophisticated methods, such as regression imputation or multiple imputation.

- Classification and Regression: A classification or regression model can be trained on the complete cases to predict the missing values of a variable from the other variables in the dataset. This approach can be useful when the missing data is related to the other variables in a predictable manner.

- Domain Knowledge: In some cases, domain knowledge can be utilized to infer or estimate missing values. This approach leverages the expertise of domain experts to make reasonable assumptions about the missing data based on contextual information.

- Handling Missing Indicators: Creating a binary indicator variable to flag missing values can be helpful in understanding the impact of missing data on the analysis. This indicator variable can be included as a predictor in the modeling process to account for the missingness mechanism.

Choosing the appropriate strategy to handle missing data depends on the nature and extent of the missingness, as well as the specific requirements of the analysis or modeling task. It is important to carefully consider the potential biases and assumptions introduced by the chosen method.

By carefully addressing missing data, we can ensure that machine learning models are trained on complete and accurate data, leading to more reliable and robust results.
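
Two of the strategies above, simple imputation and missing indicators, combine naturally. The following is a minimal sketch; the `ages` column is hypothetical, `None` is assumed to mark a missing entry, and median imputation is used only because it is the simplest option to show.

```python
import statistics


def impute_median_with_indicator(values):
    """Replace None with the median of the observed values and flag what was filled."""
    observed = [v for v in values if v is not None]
    fill = statistics.median(observed)
    imputed = [fill if v is None else v for v in values]
    was_missing = [1 if v is None else 0 for v in values]
    return imputed, was_missing


ages = [34, None, 29, 41, None, 38]  # hypothetical column with gaps
imputed_ages, age_missing = impute_median_with_indicator(ages)

print("imputed:  ", imputed_ages)
print("indicator:", age_missing)
```

Keeping the indicator alongside the imputed column lets a model learn whether the fact that a value was missing carries information of its own.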

Outliers

Outliers are data points that significantly deviate from the general pattern or distribution of the dataset. They can arise due to various reasons, such as measurement errors, data entry mistakes, or extreme values in the underlying process being observed. Outliers can have a significant impact on data analysis and machine learning models, as they can introduce noise and influence the results.

One of the challenges posed by outliers is their potential to distort statistical measures and relationships within the data. Outliers can skew the mean, standard deviation, or correlation coefficients, leading to misleading interpretations and inaccurate model predictions. They can also have a disproportionate influence on the parameters estimated by machine learning algorithms, which can lead to biased results.

Handling outliers is crucial to ensure the accuracy and reliability of the analysis. Some common strategies for dealing with outliers include:

- Visual Inspection: Visualizing the data through scatter plots, histograms, or box plots can help identify potential outliers. Visual inspection allows for a better understanding of the data distribution and provides insights into any extreme values.

- Statistical Methods: Applying statistical techniques, such as the Z-score or modified Z-score, can help in identifying outliers based on their deviation from the mean or median. Observations that fall outside a certain threshold can be flagged as potential outliers and further investigated.

- Trimming: Trimming involves removing the extreme values from the dataset. This approach effectively eliminates the influence of outliers on statistical measures and model fitting. However, it is important to carefully consider the impact of removing outliers and the potential loss of information.

- Winsorization: Winsorization replaces extreme values with more moderate values within a specified range, for example by capping them at a chosen percentile. This approach can help mitigate the impact of outliers while keeping the affected observations in the dataset.

- Robust Modeling Techniques: Utilizing robust modeling techniques, such as robust regression or robust estimators, can help reduce the influence of outliers on the model fitting process. These techniques assign lower weights to outliers, leading to more robust and reliable parameter estimates.

Choosing the most appropriate strategy for handling outliers depends on the specific characteristics of the dataset and the requirements of the analysis or modeling task. It is important to strike a balance between removing the influence of outliers and maintaining the integrity of the data.

By properly addressing outliers, we can ensure that machine learning models are trained on data that accurately represents the underlying patterns and relationships, leading to more reliable and accurate predictions and insights.
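
The modified Z-score and winsorization described above can be sketched together. The sample data is hypothetical. The constant 0.6745 and the cutoff of 3.5 are the values commonly used with the modified Z-score, and capping at the range of the unflagged points is an assumption made here for brevity; percentile-based caps are more usual in practice.

```python
import statistics


def modified_z_scores(values):
    """Modified Z-score based on the median and the median absolute deviation (MAD)."""
    med = statistics.median(values)
    mad = statistics.median([abs(v - med) for v in values])
    if mad == 0:
        raise ValueError("MAD is zero; the modified Z-score is undefined")
    return [0.6745 * (v - med) / mad for v in values]


def winsorize(values, lower, upper):
    """Cap every value to the interval [lower, upper]."""
    return [min(max(v, lower), upper) for v in values]


data = [10.1, 9.8, 10.3, 10.0, 9.9, 10.2, 25.0]  # hypothetical measurements
z_mod = modified_z_scores(data)

# Flag points whose modified Z-score exceeds the commonly used cutoff of 3.5.
outliers = [v for v, z in zip(data, z_mod) if abs(z) > 3.5]
inliers = [v for v, z in zip(data, z_mod) if abs(z) <= 3.5]

# Cap flagged values at the range spanned by the unflagged points.
capped = winsorize(data, lower=min(inliers), upper=max(inliers))

print("flagged outliers:", outliers)
print("winsorized data: ", capped)
```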

Impact of Noise on Machine Learning Models

Noise in data can have a significant impact on the performance and reliability of machine learning models. It introduces uncertainties, biases, and inaccuracies that can hinder the model’s ability to learn and make accurate predictions. Understanding the impact of noise is crucial in developing effective strategies to handle and mitigate its effects.

One of the main effects of noise on machine learning models is the degradation of predictive accuracy. Noise can introduce random variations in the data, making it difficult for the model to discern the true underlying patterns and relationships. This can lead to overfitting, where the model becomes overly complex and tailored to the noise in the training data, resulting in poor generalization to unseen data.
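
To illustrate how a model can fit noise rather than signal, here is a small sketch using nearest-neighbor averaging. The sine-shaped relationship, the noise level, and the choice of nearest neighbors are assumptions made for illustration. With `k=1` the model memorizes every noisy training point, while a larger `k` averages some of the noise away.

```python
import math
import random


def knn_predict(train, x, k):
    """Average the targets of the k training points closest to x."""
    nearest = sorted(train, key=lambda pair: abs(pair[0] - x))[:k]
    return sum(y for _, y in nearest) / k


def make_data(n):
    """Hypothetical data: y = sin(x) plus zero-mean Gaussian noise."""
    xs = [random.uniform(0.0, 2 * math.pi) for _ in range(n)]
    return [(x, math.sin(x) + random.gauss(0.0, 0.5)) for x in xs]


random.seed(3)
train = make_data(200)
test_xs = [random.uniform(0.0, 2 * math.pi) for _ in range(200)]


def error_vs_truth(k):
    """Mean squared error against the noise-free signal on unseen inputs."""
    return sum((knn_predict(train, x, k) - math.sin(x)) ** 2 for x in test_xs) / len(test_xs)


# With k=1 each training point is its own nearest neighbor, so training error is zero.
train_error_k1 = sum((knn_predict(train, x, 1) - y) ** 2 for x, y in train) / len(train)

print("training error, k=1:       ", train_error_k1)
print("error vs true signal, k=1: ", round(error_vs_truth(1), 4))
print("error vs true signal, k=15:", round(error_vs_truth(15), 4))
```

A perfect training score here is a warning sign rather than a success: the `k=1` model has reproduced the noise, and its error on unseen inputs is correspondingly higher.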

Noise can also introduce biases in the model’s predictions. Systematic noise or biases in the data can mislead the model, causing it to learn incorrect relationships or associations. This can lead to biased predictions and inaccurate insights. Additionally, measurement noise or missing data can introduce uncertainties and affect the overall reliability of the model’s results.

Another consequence of noise is the increased complexity of the learning task. When the data contains significant amounts of noise, the model needs to differentiate between signal and noise, making the learning process more challenging. It can lead to longer training times, a need for more complex models, and a higher risk of overfitting.

Furthermore, noise can impact the interpretability and explainability of machine learning models. When the data contains significant noise, it becomes difficult to interpret the model’s learned relationships and understand the factors driving its predictions. This can be problematic, especially in sensitive domains where interpretability is crucial for gaining trust and making informed decisions.

Overall, the impact of noise on machine learning models can be detrimental, leading to decreased accuracy, biased predictions, increased complexity, and reduced interpretability. Therefore, it is essential to implement strategies to handle and mitigate the effects of noise in data preprocessing, feature engineering, and model evaluation.

By effectively addressing noise, machine learning models can be trained on high-quality data, leading to improved performance, increased reliability, and more meaningful insights.

Strategies to Handle Noise in Data

Dealing with noise in data is essential to ensure the accuracy and reliability of machine learning models. By applying appropriate strategies, we can mitigate the impact of noise and improve the performance of our models. Let’s explore some effective strategies for handling noise in data:

- Data Preprocessing Techniques: Data preprocessing plays a crucial role in handling noise. Techniques like data cleaning, normalization, and standardization can help reduce the impact of noise and improve the quality and consistency of the data. Removing outliers, handling missing data, and correcting measurement errors are important steps to ensure the data is prepared for accurate modeling.

- Feature Selection and Engineering: Carefully selecting the most relevant features and engineering new features can help reduce the impact of noise. Removing irrelevant features can enhance the model’s ability to focus on the important patterns, while introducing informative features can strengthen the model’s predictive power.

- Outlier Detection and Removal: Identifying and removing outliers is crucial in handling noise. Outliers can introduce significant variation and bias in the data, disrupting the model’s learning process. Statistical techniques and visualizations can help in outlier detection, allowing for their removal or treatment.

- Imputation Methods for Missing Data: Missing data can introduce noise and bias into the analysis. Imputation techniques such as mean imputation, regression imputation, or multiple imputation can be applied to estimate missing values. This allows for the inclusion of cases with missing data, improving the representativeness of the dataset.

- Ensemble Learning Techniques: Ensemble learning, such as bagging or boosting, can help handle the impact of noise. By combining the predictions of multiple models, ensemble methods can reduce the influence of random noise and outliers, leading to more robust and accurate predictions.

It is important to note that the choice of strategy depends on the specific characteristics of the data and the modeling task at hand. Additionally, it is crucial to assess the performance and reliability of the models after applying noise mitigation techniques, using appropriate evaluation metrics and validation methods.

By implementing these strategies, we can effectively handle noise in data, improve the quality and robustness of our machine learning models, and enhance the accuracy of our predictions and insights.

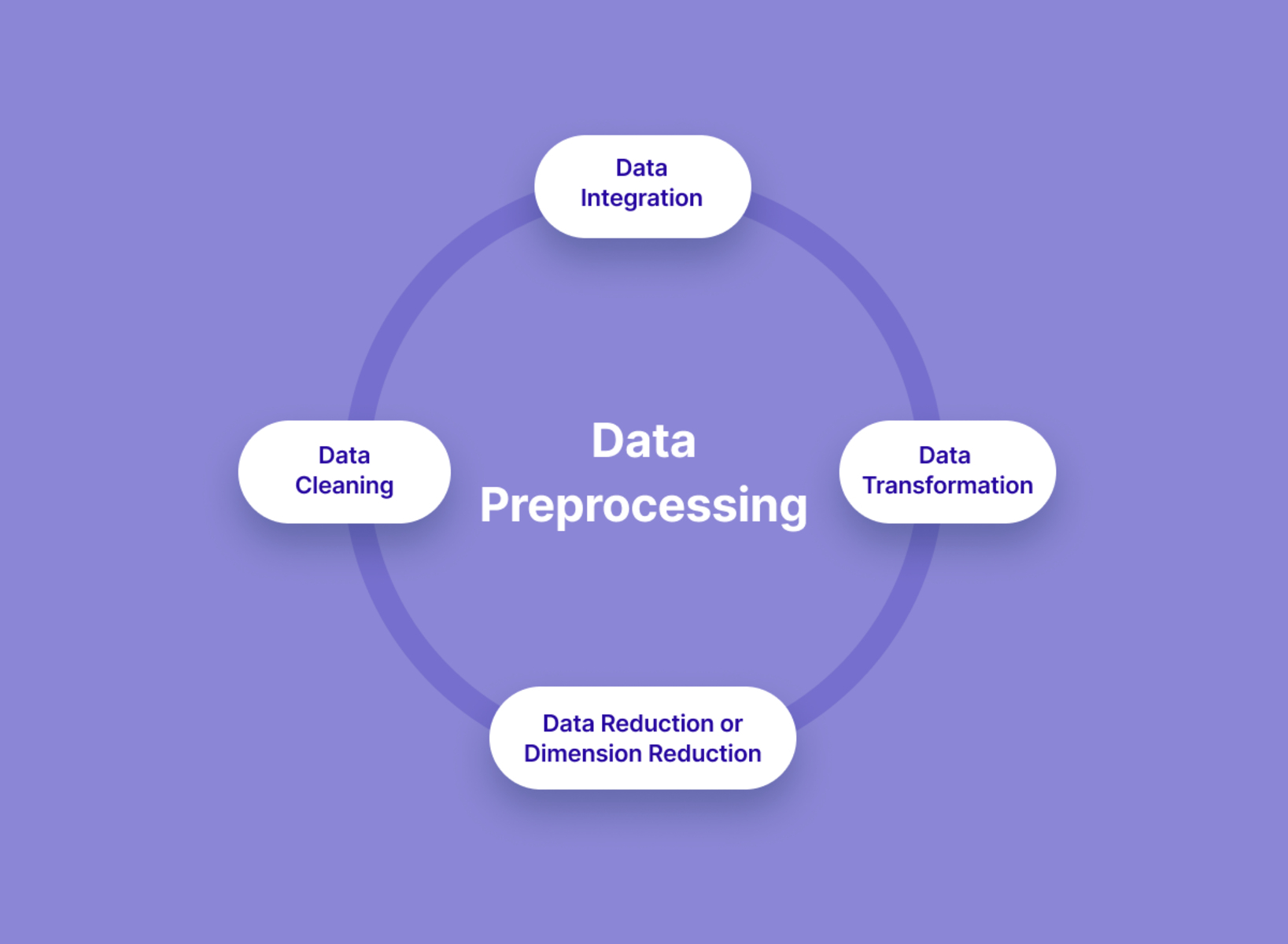

Data Preprocessing Techniques

Data preprocessing is a critical step in handling noise and improving the quality of the data for machine learning models. It involves transforming and preparing the data before feeding it into the model. By applying appropriate preprocessing techniques, we can minimize the impact of noise and enhance the accuracy and reliability of our models.

Let’s explore some common data preprocessing techniques that help handle noise:

- Data Cleaning: Data cleaning involves identifying and correcting errors, inconsistencies, and inaccuracies in the dataset. This step helps eliminate noisy data points that can negatively impact the model’s performance. Techniques such as removing duplicate records, correcting data entry errors, and dealing with inconsistent formatting are used to ensure the integrity of the data.

- Data Normalization: Normalizing the data helps bring the features to a standard range and scale. It eliminates the influence of different units or measurement scales, making the data more suitable for modeling. Common normalization techniques include min-max scaling or z-score standardization, which adjust the values to a common scale.

- Data Discretization: Discretization involves transforming continuous numerical variables into categorical ones. This technique can be useful in handling noise by reducing the impact of small fluctuations. Discretization can be done using methods such as equal-width binning or equal-frequency binning, grouping similar values into bins.

- Dimensionality Reduction: Dimensionality reduction techniques, such as Principal Component Analysis (PCA), help reduce the dimensionality of the dataset while retaining important information. By keeping the components that explain most of the variance, they can discard low-variance directions that are often dominated by noise. t-distributed Stochastic Neighbor Embedding (t-SNE) also reduces dimensionality, but it is used mainly for visualization rather than as a preprocessing step for modeling.

- Feature Scaling: Feature scaling involves transforming the features to a similar scale, so that no feature dominates model training simply because of its units. Scaling techniques such as min-max scaling or standardization are used to bring the features within a specific range or distribution. Scaling does not remove noise or outliers by itself, and min-max scaling in particular is sensitive to extreme values, so outliers are usually handled before scaling.

These data preprocessing techniques play a vital role in handling noise, improving the data quality, and ensuring proper model training. It is important to carefully select and apply these techniques based on the specific characteristics of the dataset and the requirements of the machine learning task.

By employing appropriate data preprocessing techniques, we can effectively handle noise, reduce bias, and enhance the accuracy and reliability of our machine learning models.
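
The min-max scaling and z-score standardization mentioned above can be sketched as follows. The height values are hypothetical. The last lines show, as a caution, how a single extreme value squeezes the remaining min-max scaled values toward zero.

```python
import statistics


def min_max_scale(values):
    """Rescale values linearly to the range [0, 1]."""
    lo, hi = min(values), max(values)
    if hi == lo:
        raise ValueError("all values are identical; min-max scaling is undefined")
    return [(v - lo) / (hi - lo) for v in values]


def standardize(values):
    """Z-score standardization: zero mean and unit (population) standard deviation."""
    mean = statistics.mean(values)
    std = statistics.pstdev(values)
    if std == 0:
        raise ValueError("zero variance; standardization is undefined")
    return [(v - mean) / std for v in values]


heights_cm = [150.0, 160.0, 170.0, 180.0, 190.0]  # hypothetical feature
scaled = min_max_scale(heights_cm)
z_scores = standardize(heights_cm)

# One extreme (possibly erroneous) value compresses every other scaled value.
with_outlier = min_max_scale([150.0, 160.0, 170.0, 180.0, 1900.0])

print("min-max scaled:", scaled)
print("z-scores:      ", [round(z, 3) for z in z_scores])
print("with outlier:  ", [round(v, 3) for v in with_outlier])
```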

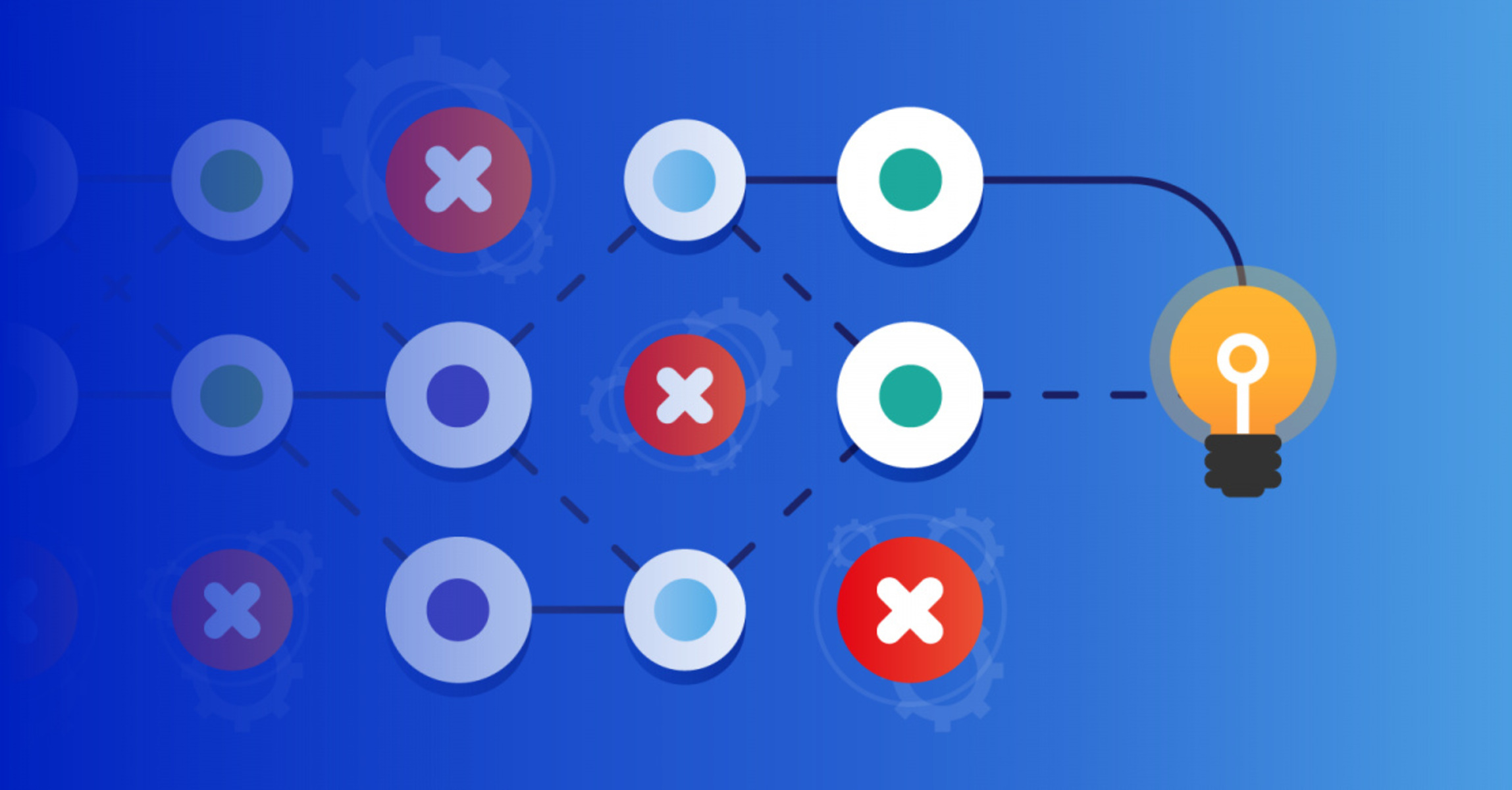

Feature Selection and Engineering

Feature selection and engineering are crucial steps in handling noise and improving the performance of machine learning models. By carefully selecting relevant features and creating new informative ones, we can reduce the impact of noise and enhance the model’s ability to capture the underlying patterns and relationships in the data.

Let’s explore some common techniques for feature selection and engineering:

- Feature Selection: Feature selection involves choosing a subset of features that are most relevant to the target variable or provide the most valuable information. By removing irrelevant or redundant features, we can reduce the noise introduced by irrelevant information. Techniques such as filter methods (e.g., correlation, mutual information), wrapper methods (e.g., recursive feature elimination), or embedded methods (e.g., Lasso regularization) can be used to select the most informative features.

- Feature Engineering: Feature engineering involves creating new features or transforming existing ones to enhance the information contained in the data. This process aims to capture meaningful patterns and relationships. Feature engineering techniques include polynomial features, interaction terms, transformations (e.g., logarithmic, square root), or domain-specific transformations. Adding informative features can help the model better distinguish signal from noise.

- Dimensionality Reduction: Dimensionality reduction techniques, such as Principal Component Analysis (PCA) or Singular Value Decomposition (SVD), can effectively handle noise by reducing the number of features while retaining important information. These techniques identify and combine the most informative features, reducing the impact of noise-induced variations.

- Domain Knowledge: Incorporating domain knowledge can help guide feature selection and engineering. Domain experts can identify relevant features based on their understanding of the problem and the possible relationships within the data. This can lead to more informed decisions and better handling of noise.

By applying feature selection and engineering techniques, we can improve the model’s ability to separate meaningful information from noise. Filtering out irrelevant features and creating informative ones can allow the model to focus on the most relevant aspects of the data, reducing overfitting and improving generalization capabilities.

It is important to carefully select and validate the features to ensure they enhance the model’s performance and are robust to noise. Additionally, monitoring the impact of feature engineering on performance metrics is crucial to determine if the introduced features are indeed improving the model’s accuracy.

By leveraging feature selection and engineering techniques, we can effectively handle noise, increase the model’s interpretability, and improve the overall performance and reliability of our machine learning models.

Outlier Detection and Removal

Outliers can significantly impact the performance and accuracy of machine learning models. Detecting and handling outliers is essential in order to reduce their influence on the data and improve the reliability of the models. Outlier detection involves identifying data points that deviate significantly from the bulk of the data or from expected patterns, while outlier removal aims to mitigate their effects.

Let’s explore some common techniques for outlier detection and removal:

- Visualization: Visualizing the data through scatter plots, histograms, or box plots can help identify potential outliers. Outliers often stand out as data points that fall far away from the majority of the data. Visual inspection allows for a preliminary understanding of the presence and influence of outliers.

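- Statistical Methods: Statistical methods, such as the Z-score or modified Z-score, can help identify outliers based on their deviation from the center of the data. The Z-score measures distance from the mean in units of standard deviation, and observations beyond a threshold (commonly 3 standard deviations) are flagged as potential outliers. The modified Z-score uses the median and the median absolute deviation (MAD) instead, which makes it less sensitive to the very outliers it is trying to detect; a common cutoff is 3.5.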

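- Percentiles and Range: Observations that fall outside a specified range, such as below the 1st or above the 99th percentile, can be considered potential outliers. A related rule flags values that lie more than 1.5 times the interquartile range (IQR) below the first quartile or above the third quartile. Note that a fixed percentile cutoff always flags the same fraction of the data, whether or not those points are truly anomalous.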

- Clustering: Clustering techniques, such as k-means or DBSCAN, can be used to identify outliers. With k-means, points that lie far from their nearest centroid are candidates; DBSCAN explicitly labels points in low-density regions as noise rather than assigning them to a cluster. Outliers may also form very small clusters of their own.

- Machine Learning Models: Machine learning algorithms, such as isolation forests or one-class SVM, can be trained specifically to detect outliers. These models learn the underlying patterns in the data and identify observations that do not conform to these patterns, marking them as potential outliers.
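
The statistical and range-based rules from the list above can be sketched in a few lines of NumPy. The function names, thresholds (3, 3.5, and 1.5 times the IQR), and sample values are illustrative assumptions, not fixed standards.

```python
import numpy as np

def zscore_outliers(values, threshold=3.0):
    """Flag points more than `threshold` standard deviations from the mean."""
    z = (values - values.mean()) / values.std()
    return np.abs(z) > threshold

def modified_zscore_outliers(values, threshold=3.5):
    """Flag points using the median and median absolute deviation (MAD)."""
    median = np.median(values)
    mad = np.median(np.abs(values - median))
    modified_z = 0.6745 * (values - median) / mad
    return np.abs(modified_z) > threshold

def iqr_outliers(values, k=1.5):
    """Flag points more than k * IQR outside the first or third quartile."""
    q1, q3 = np.percentile(values, [25, 75])
    iqr = q3 - q1
    return (values < q1 - k * iqr) | (values > q3 + k * iqr)

# Eight readings near 10 and one obviously extreme reading (made-up data).
values = np.array([10.1, 9.8, 10.3, 9.9, 10.0, 10.2, 9.7, 10.4, 25.0])

print("z-score flags:         ", np.flatnonzero(zscore_outliers(values)))
print("modified z-score flags:", np.flatnonzero(modified_zscore_outliers(values)))
print("IQR flags:             ", np.flatnonzero(iqr_outliers(values)))
```

In a sample this small, the single extreme value inflates the mean and standard deviation so much that its plain Z-score stays below 3, while the median-based rules still flag it. This is one reason robust statistics are often preferred when outliers are suspected. The modified Z-score as written also assumes the MAD is not zero.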

Once outliers are detected, we can decide whether to remove or handle them based on the specific characteristics of the data and the modeling task. Options include outright deletion of the outliers, Winsorization (capping extreme values at chosen percentiles so they are pulled back to the edge of the typical range), or adjusting the outliers to plausible values based on domain knowledge or neighboring observations.
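
Winsorization can be sketched as clipping values to a pair of percentiles. This is one common variant, and the function name `winsorize` and the 10th/90th percentile limits are assumptions chosen for this small example.

```python
import numpy as np

def winsorize(values, lower_pct=5, upper_pct=95):
    """Cap values below/above the given percentiles instead of deleting them."""
    lower, upper = np.percentile(values, [lower_pct, upper_pct])
    return np.clip(values, lower, upper)

# Made-up data with one extreme value at the end.
values = np.array([1.0, 2.0, 3.0, 4.0, 5.0, 6.0, 7.0, 8.0, 9.0, 100.0])
capped = winsorize(values, lower_pct=10, upper_pct=90)

print("original:", values)
print("capped:  ", capped)
```

Unlike deletion, this keeps every row in the dataset, which matters when the other columns of an outlying row still carry useful information.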

It is important to exercise caution when removing outliers, as their elimination can lead to the loss of valuable information or introduce bias. Proper documentation of the decision-making process and the rationale behind outlier removal is crucial for transparency and reproducibility.

By effectively detecting and handling outliers, we can reduce their impact on the data and improve the accuracy, robustness, and reliability of our machine learning models.

Imputation Methods for Missing Data

Missing data is a common problem that can introduce noise and bias into the analysis, making it crucial to handle it appropriately. Imputation techniques offer ways to estimate or fill in missing values, allowing for a more complete dataset and reducing the impact of missing data on machine learning models. These methods help to ensure that missing values are handled in a way that preserves the integrity and representativeness of the data.

Let’s explore some common imputation methods for handling missing data:

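- Mean or Median Imputation: In this simple technique, missing values are replaced with the mean or median value of the available data. This approach assumes that the missing values are roughly representative of the central tendency of the data. Mean imputation is more suitable for roughly symmetric data without extreme values, while median imputation is robust to outliers and skew. Both approaches shrink the variance of the imputed variable and can weaken its relationships with other variables, so they work best when only a small share of values is missing.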

- Regression Imputation: Regression imputation involves using regression models to predict missing values based on the observed data. A regression model is trained using the available data, and the model is then used to estimate the missing values. This method takes into account the relationships between variables and can provide more accurate imputations.

- Multiple Imputation: Multiple imputation generates several plausible values for each missing entry, taking into account the uncertainty associated with the missing values. The missing values are imputed multiple times, typically by drawing from a predictive model, which creates multiple completed versions of the dataset. Each version is analyzed separately and the results are pooled, so the final estimates reflect the uncertainty introduced by missing data.

- Hot Deck Imputation: Hot deck imputation assigns missing values by matching them with similar observations based on certain criteria (e.g., nearest neighbor). The missing value is replaced with a value from a donor observation that is most similar to the one with missing data. This method preserves the relationships in the data but does not rely on statistical modeling.

- Domain-Specific Imputation: Depending on the specific domain or field of study, domain knowledge can be used to impute missing values. For example, in healthcare, medical experts may provide informed estimates based on their expertise. This approach leverages the contextual understanding of the problem to ensure sensible imputations.
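
The difference between mean and median imputation from the list above can be seen on a tiny made-up table. The helper `impute_column_statistic` and the age/income values are assumptions for this sketch.

```python
import numpy as np

def impute_column_statistic(X, statistic="median"):
    """Fill NaNs in each column with that column's mean or median."""
    X = np.array(X, dtype=float)  # work on a copy so the input is untouched
    if statistic == "median":
        fill = np.nanmedian(X, axis=0)
    elif statistic == "mean":
        fill = np.nanmean(X, axis=0)
    else:
        raise ValueError("statistic must be 'mean' or 'median'")
    rows, cols = np.where(np.isnan(X))
    X[rows, cols] = fill[cols]
    return X

# Columns: age, income. The last row has an extreme income.
X = np.array([
    [25.0, 50_000.0],
    [32.0, np.nan],
    [np.nan, 62_000.0],
    [41.0, 58_000.0],
    [29.0, 1_000_000.0],
])

mean_filled = impute_column_statistic(X, "mean")
median_filled = impute_column_statistic(X, "median")

print("income imputed with mean:  ", mean_filled[1, 1])
print("income imputed with median:", median_filled[1, 1])
```

The extreme income drags the mean far above the typical value, while the median stays close to the bulk of the observations.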

It is important to note that choosing the appropriate imputation method depends on factors such as the nature of the missing data, the distribution of the variables, and the context of the problem. Additionally, it is crucial to evaluate the impact of imputation on the model’s performance and ensure that the imputed values are consistent with the statistical properties of the data.
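
Regression imputation, described in the list above, can be sketched with ordinary least squares. The example assumes a synthetic dataset with a single predictor and a linear relationship, and it keeps the true values only so that the imputations can be checked.

```python
import numpy as np

rng = np.random.default_rng(7)
n_samples = 100

# Synthetic data (an assumption for this sketch): y depends linearly on x.
x = rng.uniform(0, 10, size=n_samples)
y_complete = 2.0 * x + 1.0 + rng.normal(scale=0.1, size=n_samples)

# Hide 20 of the y values to simulate missing data.
y = y_complete.copy()
missing_idx = rng.choice(n_samples, size=20, replace=False)
y[missing_idx] = np.nan

# Fit y = slope * x + intercept using only the rows where y is observed.
observed = ~np.isnan(y)
design = np.column_stack([x[observed], np.ones(observed.sum())])
(slope, intercept), *_ = np.linalg.lstsq(design, y[observed], rcond=None)

# Predict the missing values from the fitted line.
y_imputed = y.copy()
y_imputed[~observed] = slope * x[~observed] + intercept

max_error = np.max(np.abs(y_imputed[~observed] - y_complete[~observed]))
print(f"fitted slope={slope:.3f}, intercept={intercept:.3f}")
print(f"largest imputation error: {max_error:.3f}")
```

Every imputed value lies exactly on the fitted line, so this approach understates the natural spread of the data. Multiple imputation addresses this by adding random variation to each draw.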

By applying suitable imputation methods, we can handle missing data effectively, reduce the bias introduced by missing values, and ensure the integrity and completeness of the dataset for machine learning models.

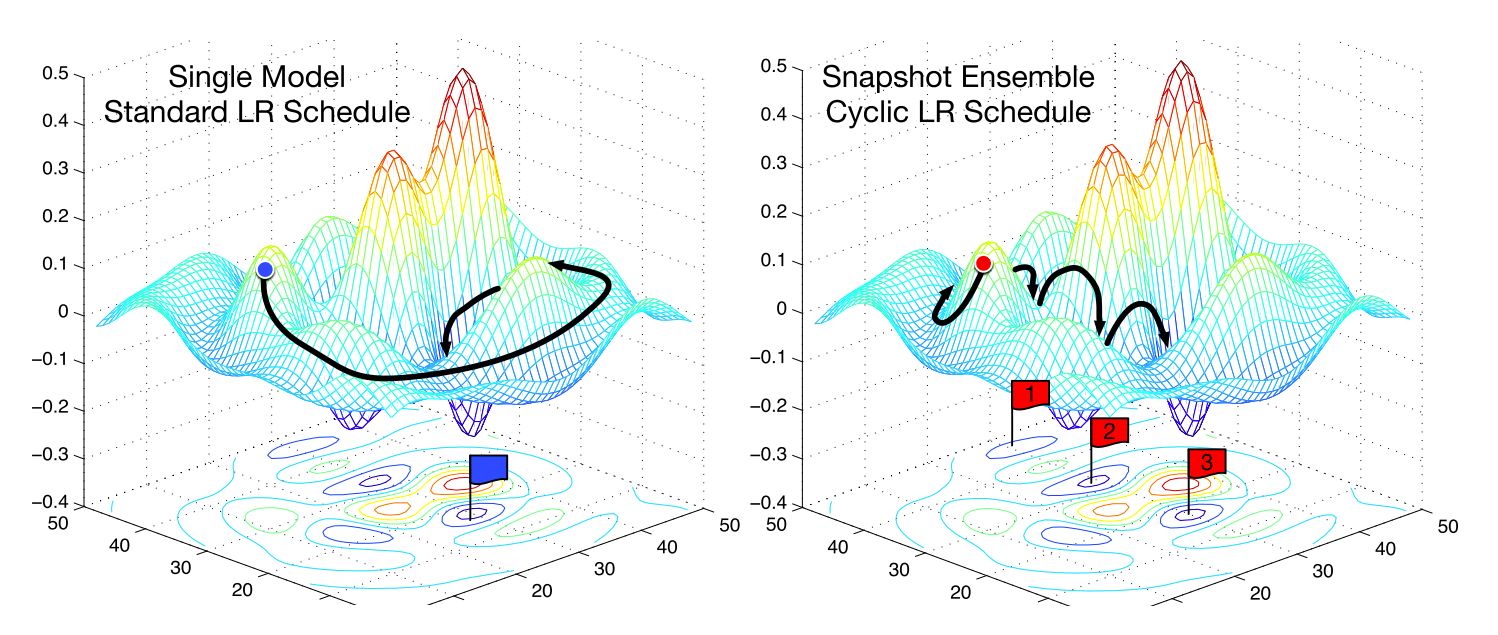

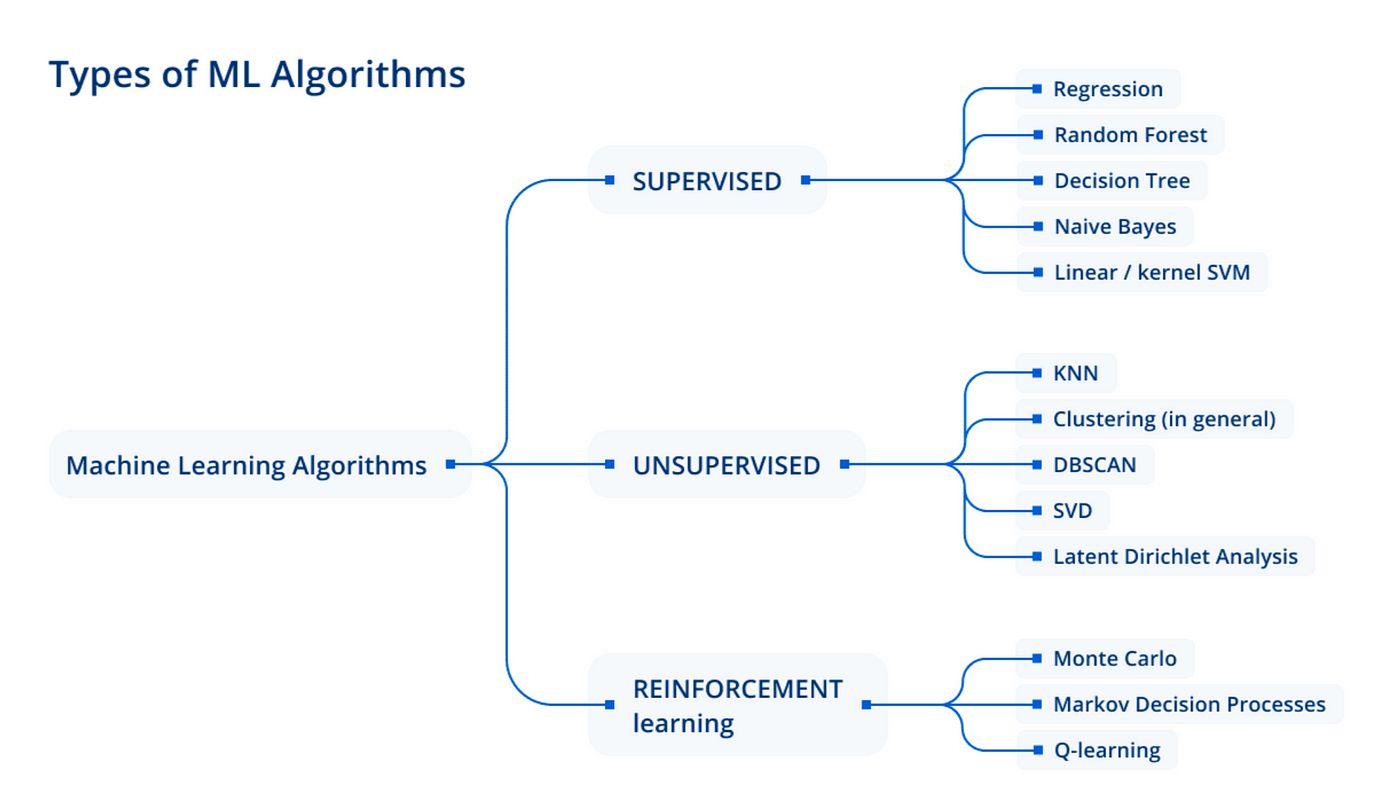

Ensemble Learning Techniques

Ensemble learning techniques are powerful approaches that leverage the collective wisdom of multiple models to improve the performance and robustness of machine learning models. These techniques are particularly beneficial for handling noise and reducing the impact of individual model errors or biases.

Let’s explore some common ensemble learning techniques:

- Bagging: Bagging, or bootstrap aggregating, involves training multiple models on different bootstrap samples of the data, each drawn with replacement from the training set. Each model learns independently, and their predictions are combined through voting or averaging. Bagging helps to reduce the impact of noise and overfitting because the errors of individual models tend to average out.

- Boosting: Boosting builds a sequence of models, where each subsequent model focuses on correcting the mistakes made by its predecessors. Some boosting algorithms do this by reweighting observations so that later models concentrate on misclassified or difficult instances; others, such as gradient boosting, fit each new model to the remaining errors of the current ensemble. This adaptive process can substantially improve performance, although the focus on hard instances means boosting can also fit noise if it is not regularized.

- Random Forests: Random Forests combine decision trees with the principles of bagging. Each tree is trained on a bootstrap sample of the data and considers only a random subset of features at each split, which decorrelates the trees. Their predictions are aggregated through voting for classification or averaging for regression. Individual trees still have high variance, but averaging many decorrelated trees reduces the variance of the ensemble, which makes Random Forests comparatively robust to noise and overfitting.

- Stacking: Stacking involves training multiple base models on the same data and then combining their predictions using a meta-model. The meta-model learns from the base models’ outputs, ideally out-of-fold predictions produced by cross-validation so that it does not simply reward base models that overfit, and provides the final prediction. Stacking can help to capture the strengths of different models and compensate for their weaknesses, leading to improved predictive performance.

- AdaBoost: AdaBoost, short for Adaptive Boosting, is a boosting algorithm that increases the weights of observations that earlier models misclassified, so subsequent models focus more on those instances. This often improves performance, but mislabeled points and outliers also keep gaining weight, so AdaBoost can be sensitive to label noise and should be validated carefully on noisy datasets.
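
Bagging, the first technique in the list above, can be sketched without any machine learning library. The example bags a deliberately high-variance base model (a 1-nearest-neighbor regressor) on synthetic noisy data; the function names, the sine-shaped target, and the choice of 50 bootstrap rounds are all assumptions made for illustration.

```python
import numpy as np

def predict_1nn(x_train, y_train, x_query):
    """Predict each query point with the target of its nearest training point."""
    nearest = np.abs(x_query[:, None] - x_train[None, :]).argmin(axis=1)
    return y_train[nearest]

def predict_bagged_1nn(x_train, y_train, x_query, n_models=50, seed=0):
    """Average 1-NN predictions over models fit on bootstrap samples."""
    rng = np.random.default_rng(seed)
    n = len(x_train)
    predictions = np.zeros((n_models, len(x_query)))
    for m in range(n_models):
        idx = rng.integers(0, n, size=n)  # bootstrap sample, drawn with replacement
        predictions[m] = predict_1nn(x_train[idx], y_train[idx], x_query)
    return predictions.mean(axis=0)

# Synthetic data: a smooth signal observed with random noise.
rng = np.random.default_rng(1)
x_train = rng.uniform(0, 2 * np.pi, size=200)
y_train = np.sin(x_train) + rng.normal(scale=0.5, size=200)

# Evaluate against the noise-free signal on an evenly spaced grid.
x_test = np.linspace(0, 2 * np.pi, 200)
y_true = np.sin(x_test)

mse_single = float(np.mean((predict_1nn(x_train, y_train, x_test) - y_true) ** 2))
mse_bagged = float(np.mean((predict_bagged_1nn(x_train, y_train, x_test) - y_true) ** 2))
print(f"single 1-NN MSE: {mse_single:.3f}")
print(f"bagged 1-NN MSE: {mse_bagged:.3f}")
```

A single 1-NN model copies the noise of whichever training point happens to be closest, whereas the bagged version averages over several nearby points and so tracks the underlying signal more closely. In practice, libraries such as scikit-learn provide ready-made bagging and Random Forest estimators, so a hand-written loop like this is mainly useful for understanding the mechanism.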

Ensemble learning techniques provide robustness and better generalization capabilities by reducing the impact of noise and error-inducing factors. By combining the predictions of multiple models, ensemble methods capture a more comprehensive understanding of the data, ultimately improving the accuracy and reliability of the models.

It is important to note that ensemble learning requires careful consideration and tuning of the models used, as well as evaluation of their performance and interaction. Additionally, choosing diverse models that have different sources of error can lead to a more effective ensemble.

By effectively employing ensemble learning techniques, we can handle noise, improve generalization, and enhance the overall performance and reliability of our machine learning models.

Conclusion

Noise in data poses a significant challenge in the field of machine learning. It can disrupt the underlying patterns, introduce uncertainties, and lead to inaccurate predictions and unreliable insights. Understanding the sources and types of noise is crucial in effectively handling and mitigating its impact.

Throughout this article, we explored the concept of noise in data and its various sources, including data collection errors, measurement inaccuracies, and missing data. We also discussed different types of noise such as random noise, systematic noise, and outliers. It is evident that noise can have a detrimental effect on machine learning models, including reduced predictive accuracy, biased results, increased complexity, and decreased interpretability.

To handle noise effectively, we outlined several strategies, including data preprocessing techniques, feature selection and engineering, outlier detection and removal, imputation methods for missing data, and ensemble learning techniques. These strategies provide valuable approaches to reduce the impact of noise and improve the accuracy and reliability of machine learning models.

It is important to note that different datasets and modeling tasks require tailored approaches when dealing with noise. The choice of strategies and techniques should be based on a thorough understanding of the data and the specific challenges posed by noise.

By implementing these strategies and techniques, we can enhance the quality of the data, improve the model’s ability to capture meaningful patterns, and increase the robustness and generalization capabilities of machine learning models. Handling noise is essential for developing models that can provide accurate predictions and reliable insights, enabling data-driven decision-making in various domains.

The field of machine learning continues to grow, and as we further our understanding of noise and develop more sophisticated methods to handle it, we can unlock the full potential of our data and maximize the performance of our machine learning models.