Introduction

One of the key aspects of a computer system is how the central processing unit (CPU) stores and retrieves data. To access and process data efficiently, the CPU relies on several components, each suited to a different kind of data.

In this article, we will explore the different components that store data being accessed by the CPU. These components play a crucial role in the overall performance and functionality of a computer system. Understanding how they work can help us appreciate the complexity behind the seemingly instantaneous retrieval of data.

From high-speed memory units to long-term storage devices, each component serves a specific purpose in the data storage hierarchy. By delving into each of these components, we can gain a better understanding of how data is managed in a computer system.

So, let’s dive into the world of data storage and uncover the secrets of these essential components that facilitate the smooth operation of our computers.

Cache Memory

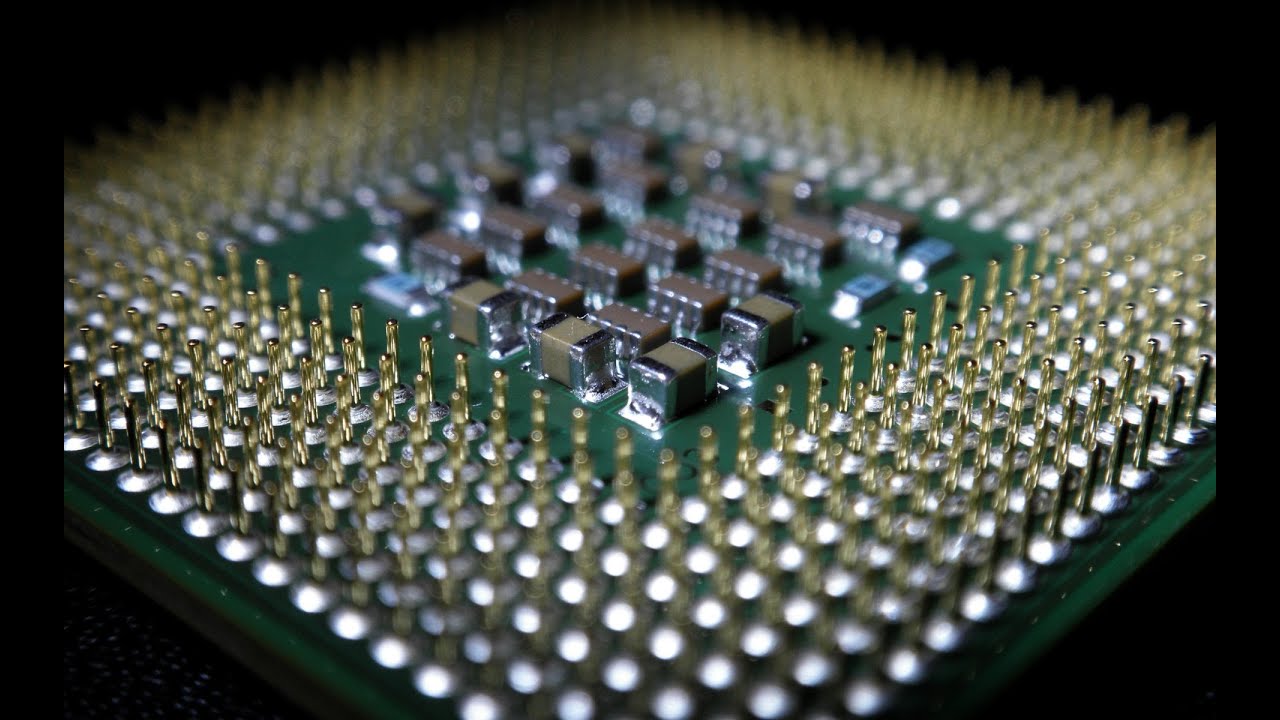

Near the top of the CPU’s data storage hierarchy, just below the registers, lies the cache memory. Cache memory is a small but very fast storage unit that holds frequently accessed data and instructions. It acts as a buffer between the CPU and the main memory (RAM), reducing the time taken to retrieve information and improving overall system performance.

Cache memory is divided into multiple levels, commonly referred to as L1, L2, and L3 caches. L1 cache is the closest to the CPU core and has the fastest access time but the smallest capacity, while L2 and L3 caches are progressively larger but slower. On many multi-core processors, the L3 cache is shared among the cores.

The primary purpose of cache memory is to reduce the number of memory accesses to the slower main memory. When the CPU needs to retrieve data or instructions, it first checks the cache memory. If the desired information is found within the cache, it is referred to as a cache hit, and the data is quickly retrieved. However, if the information is not present in the cache, it results in a cache miss, and the CPU has to access the main memory, which takes more time.
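
The hit and miss behavior described above can be sketched in a few lines of Python. This is a minimal illustration rather than a model of any real CPU: the `LRUCache` class, its four-entry capacity, the least-recently-used eviction policy, and the contents of `main_memory` are all assumptions made for the example.

```python
from collections import OrderedDict


class LRUCache:
    """A tiny fully associative cache with least-recently-used eviction.

    The eviction policy is an assumption for this sketch; real caches vary.
    """

    def __init__(self, capacity):
        self.capacity = capacity
        self.lines = OrderedDict()  # address -> value, least recently used first
        self.hits = 0
        self.misses = 0

    def read(self, address, main_memory):
        if address in self.lines:
            # Cache hit: the value is served without touching main memory.
            self.hits += 1
            self.lines.move_to_end(address)  # mark as most recently used
            return self.lines[address]
        # Cache miss: fetch from the slower main memory and keep a copy.
        self.misses += 1
        value = main_memory[address]
        self.lines[address] = value
        if len(self.lines) > self.capacity:
            self.lines.popitem(last=False)  # evict the least recently used entry
        return value


main_memory = {addr: addr * 10 for addr in range(16)}  # hypothetical contents
cache = LRUCache(capacity=4)
for addr in [0, 1, 2, 0, 1, 3, 0, 4, 0, 1]:
    cache.read(addr, main_memory)

print(cache.hits, cache.misses)  # prints: 5 5
```

Addresses 0 and 1 are reused often, so after their first (missing) access they are served from the cache, while each new address costs one trip to main memory.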

Cache memory operates on the principle of locality. Temporal locality means that recently used data and instructions are likely to be accessed again in the near future; spatial locality means that data stored near a recently accessed address is likely to be needed soon. By keeping recently used data and its neighbors in the cache, the CPU can quickly retrieve them without the need to access the slower main memory.
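
To see why locality matters, the sketch below simulates a small direct-mapped cache and compares access patterns. The function name `hit_rate`, the cache geometry (8 lines of 4 addresses each), and the three access patterns are assumptions chosen for illustration, not figures from real hardware.

```python
def hit_rate(addresses, num_lines=8, line_size=4):
    """Simulate a direct-mapped cache and return the fraction of hits."""
    tags = [None] * num_lines  # which memory block each cache line currently holds
    hits = 0
    for addr in addresses:
        block = addr // line_size   # memory block containing this address
        index = block % num_lines   # the single cache line this block maps to
        if tags[index] == block:
            hits += 1
        else:
            tags[index] = block     # miss: load the whole block into the line
    return hits / len(addresses)


sequential = list(range(64))           # neighboring addresses share a block
strided = [i * 4 for i in range(64)]   # every access lands in a new block
repeated = [0, 1, 2, 3] * 16           # the same few addresses, reused

print(hit_rate(sequential))  # 0.75
print(hit_rate(strided))     # 0.0
print(hit_rate(repeated))    # 0.984375
```

In this toy model, sequential access hits three times out of four because each miss brings in a block of four neighboring addresses, the strided pattern defeats the cache entirely, and the repeated pattern misses only once.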

Cache memory is an essential component of modern CPUs, greatly contributing to their speed and efficiency. Its presence allows for faster data access and improves the overall performance of the computer system.

Registers

Registers are small, high-speed storage units located directly within the CPU. They are used to store and manipulate data during the execution of instructions. Unlike cache memory, which stores frequently accessed data, registers store data that is actively being processed by the CPU.

Registers are the fastest form of data storage in a computer system. They operate at the same clock speed as the CPU, allowing for near-instantaneous access and manipulation of data. Registers are designed to hold small pieces of data, such as numerical values, memory addresses, and status flags.

One of the primary functions of registers is to store the operands and results of arithmetic and logical operations performed by the CPU. By keeping the data within registers, the CPU can perform calculations quickly and efficiently without the need to access the slower cache or main memory.

Registers also play a crucial role in the execution of instructions. The CPU fetches instructions from memory and stores them in an instruction register. The instruction register holds the current instruction being executed by the CPU, allowing it to decode and execute the instruction using the appropriate operands and data from other registers.

In addition to the general-purpose registers, CPUs may also have specialized registers, such as the program counter (PC) and the stack pointer (SP). The program counter keeps track of the memory address of the next instruction to be fetched, while the stack pointer points to the top of the stack in memory. These registers are vital for the control flow and memory management of a program.
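
The interplay between the program counter, the instruction register, and the general-purpose registers can be illustrated with a toy register machine. Everything here is hypothetical: the `run` function, the register names `R0` to `R2`, and the four opcodes are invented for this sketch and do not correspond to a real instruction set. The stack pointer is left out to keep the example short.

```python
def run(program):
    """Execute a list of (opcode, *operands) tuples on a toy register machine."""
    regs = {"R0": 0, "R1": 0, "R2": 0}  # general-purpose registers
    pc = 0                              # program counter: index of the next instruction
    while pc < len(program):
        ir = program[pc]                # fetch into the instruction register
        pc += 1                         # the PC now points at the following instruction
        op, *args = ir                  # decode
        if op == "LOAD":                # LOAD dest, constant
            regs[args[0]] = args[1]
        elif op == "ADD":               # ADD dest, src1, src2
            regs[args[0]] = regs[args[1]] + regs[args[2]]
        elif op == "JNZ":               # JNZ reg, target: jump if reg is non-zero
            if regs[args[0]] != 0:
                pc = args[1]
        elif op == "HALT":
            break
        else:
            raise ValueError(f"unknown opcode: {op}")
    return regs


# Sum 3 + 2 + 1 using a countdown loop.
program = [
    ("LOAD", "R0", 0),           # 0: accumulator
    ("LOAD", "R1", 3),           # 1: loop counter
    ("LOAD", "R2", -1),          # 2: constant used to decrement the counter
    ("ADD", "R0", "R0", "R1"),   # 3: accumulator += counter
    ("ADD", "R1", "R1", "R2"),   # 4: counter -= 1
    ("JNZ", "R1", 3),            # 5: repeat from instruction 3 while counter != 0
    ("HALT",),                   # 6
]

result = run(program)
print(result["R0"])  # prints: 6
```

All operands and results stay in registers for the whole loop, and the jump instruction works simply by overwriting the program counter.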

Registers play a critical role in the efficient execution of instructions and data manipulation within the CPU. Their high-speed operation ensures that the CPU can swiftly process data and perform computations, contributing to the overall speed and performance of the computer system.

Main Memory (RAM)

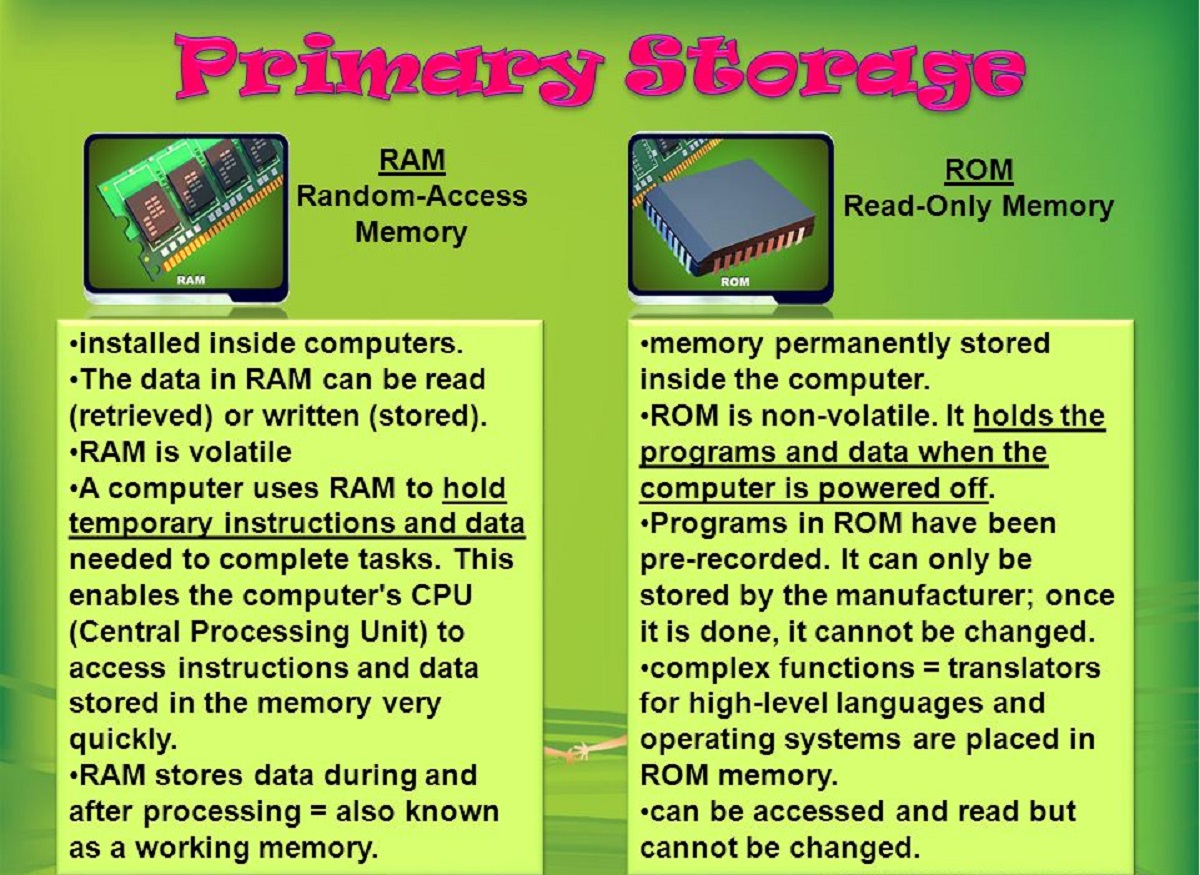

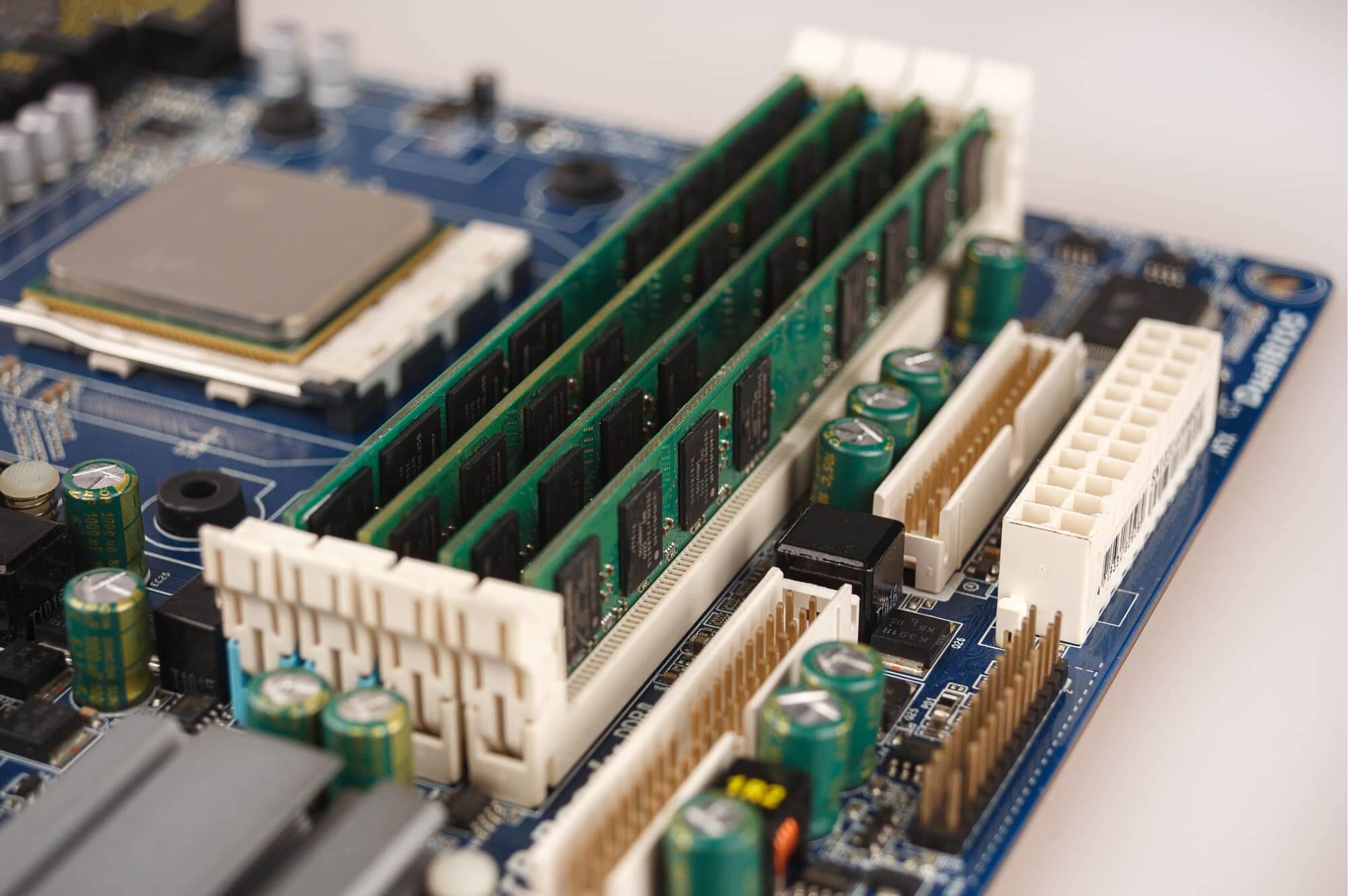

Main memory, also known as random access memory (RAM), is a key component of a computer system’s data storage hierarchy. It serves as a temporary storage space for data and instructions that are actively being accessed by the CPU.

RAM is responsible for holding the data and instructions needed by the CPU to perform calculations and carry out tasks. Unlike registers and cache memory, whose capacity is very limited, RAM is large enough to hold running programs together with the data they are working on.

When the CPU needs to access data that is not present in the registers or cache, it looks to the main memory. Any location in RAM can be read or written in roughly the same amount of time regardless of its address, which is what "random access" refers to, and this makes RAM much faster than long-term storage devices like hard disk drives or solid-state drives.

One of the key characteristics of RAM is its volatility. This means that data stored in RAM is lost when the computer is powered off or restarted. However, it offers the advantage of fast read and write speeds, making it ideal for temporary data storage and quick access by the CPU.

RAM is organized into memory modules, such as dual in-line memory modules (DIMMs), that are installed on the motherboard of a computer. The capacity of RAM can vary from a few gigabytes to several terabytes, depending on the requirements of the system.

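In addition to temporarily storing data and instructions for the CPU, RAM also plays a central role in virtual memory management. When physical RAM runs short, the operating system can move pages of data that have not been used recently from RAM to long-term storage, such as a hard drive, and bring them back when they are needed again. This lets programs use more memory than is physically installed, at the cost of slower access to the pages that were swapped out.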
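
A rough sketch of this swapping behavior is shown below. It counts page faults, that is, accesses to pages that are not currently in RAM and must be read from storage. The function `count_page_faults`, the first-in, first-out (FIFO) replacement policy, and the reference string are assumptions for illustration; real operating systems use more elaborate policies.

```python
from collections import deque


def count_page_faults(page_references, num_frames):
    """Count page faults for a sequence of page accesses using FIFO replacement."""
    frames = deque()   # pages currently resident in RAM, oldest first
    resident = set()   # same pages, kept in a set for fast membership tests
    faults = 0
    for page in page_references:
        if page in resident:
            continue                     # page already in RAM: no storage access
        faults += 1                      # page fault: load the page from storage
        if len(frames) == num_frames:
            evicted = frames.popleft()   # RAM is full: swap out the oldest page
            resident.remove(evicted)
        frames.append(page)
        resident.add(page)
    return faults


references = [1, 2, 3, 4, 1, 2, 5, 1, 2, 3, 4, 5]  # hypothetical access pattern

print(count_page_faults(references, num_frames=3))  # prints: 9
print(count_page_faults(references, num_frames=5))  # prints: 5
```

With five frames every page fits in RAM, so each of the five distinct pages faults only once; with three frames, pages are repeatedly swapped out and loaded again.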

Main memory, or RAM, provides the CPU with the necessary resources to efficiently access and process data. Its fast read and write speeds make it an essential component for the overall performance and responsiveness of a computer system.

Hard Disk Drive (HDD)

The hard disk drive (HDD) is a common long-term storage device in computer systems. It provides a large capacity for storing data and files that are not actively being accessed by the CPU. Unlike cache memory, registers, and RAM, the HDD offers a non-volatile storage option, meaning that data remains on the drive even when the computer is powered off.

The HDD consists of one or more spinning magnetic disks, called platters, that are coated with a magnetic material. Data is stored on these platters in the form of magnetic patterns. The read/write head, which is mounted on an actuator arm, accesses and manipulates the data by moving across the platters.

The CPU does not read the HDD directly. When data is not present in the registers, cache, or RAM, the operating system first loads it from the HDD into RAM, and the CPU then accesses it from there. Although far slower than registers, cache, and RAM, the HDD provides significantly larger storage capacity at a lower cost per unit of storage. It is commonly used for storing the operating system, software applications, documents, and multimedia files.

Because the HDD relies on mechanical components, such as the spinning platters and the moving actuator arm, it has slower read and write speeds compared to solid-state drives (SSD). However, it remains a popular choice due to its affordability and the ability to store large amounts of data.
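
The cost of these mechanical parts can be estimated with simple arithmetic. On average, the drive must wait half a rotation for the requested sector to pass under the head, and this wait is added to the time the actuator arm needs to reach the right track. The sketch below works this out; the function names and the 9 ms average seek time are assumptions for illustration, not the specification of a particular drive.

```python
def average_rotational_latency_ms(rpm):
    """Average wait for the target sector: half of one full rotation, in ms."""
    seconds_per_rotation = 60.0 / rpm
    return seconds_per_rotation / 2 * 1000


def average_access_time_ms(rpm, average_seek_ms):
    """Seek time plus average rotational latency (transfer time is ignored)."""
    return average_seek_ms + average_rotational_latency_ms(rpm)


print(round(average_rotational_latency_ms(7200), 2))  # prints: 4.17
print(round(average_rotational_latency_ms(5400), 2))  # prints: 5.56

# Assumed 9 ms average seek time, for illustration only.
print(round(average_access_time_ms(7200, average_seek_ms=9.0), 2))  # prints: 13.17
```

An access time measured in milliseconds is the main reason an HDD cannot match storage that has no moving parts.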

One of the downsides of HDDs is their susceptibility to physical damage and data loss in the event of mechanical failure. Fragmentation of files across the platters can also slow reads and writes over time, because the head has to move between scattered locations. These issues can be mitigated to some extent: defragmentation restores contiguous file layouts, error-correcting codes recover from minor read errors, and SMART (Self-Monitoring, Analysis, and Reporting Technology) reports drive health indicators that can warn of an impending failure.

Overall, the hard disk drive (HDD) is a reliable and cost-effective long-term storage option for computer systems. Its large storage capacity makes it suitable for storing a wide range of data and files, although it may be slower than other components in the data storage hierarchy.

Solid State Drive (SSD)

The solid-state drive (SSD) is a modern storage device that offers faster read and write speeds compared to traditional hard disk drives (HDD). SSDs have become increasingly popular due to their improved performance and reliability. Unlike HDDs, which use spinning magnetic disks, SSDs store data on flash memory chips.

SSDs are designed with no moving parts, which eliminates the mechanical limitations and fragility associated with HDDs. This results in faster access times, reduced power consumption, and improved durability. The absence of mechanical components also makes SSDs less susceptible to physical damage and data loss in case of rough handling or impact.

One of the main advantages of SSDs is their incredibly fast read and write speeds, which significantly improve the overall performance of a computer system. These faster speeds allow for quick boot times, rapid file transfers, and speedy application loading. Additionally, SSDs provide consistent performance even when accessing fragmented data, unlike HDDs, which can experience performance degradation.

While SSDs offer superior performance, they do come at a higher cost compared to HDDs for the same amount of storage capacity. However, advancements in technology have led to reductions in SSD prices over time, making them a more affordable option for both personal and professional use.

SSDs are available in various form factors, including 2.5-inch drives for desktops and laptops, M.2 drives for ultra-thin laptops and compact desktops, and PCIe add-in cards for high-performance gaming and professional applications. Many M.2 drives also connect over PCIe, so the form factor and the interface are separate choices. SSDs come in different storage capacities, ranging from a few hundred gigabytes to multiple terabytes.

With their impressive speed, reliability, and reduced power consumption, SSDs have become the go-to choice for many computer systems. Their ability to significantly improve the overall performance of a computer makes them highly sought after by gamers, content creators, and professionals who rely on fast and responsive systems.

Graphics Processing Unit (GPU)

The Graphics Processing Unit (GPU) is a specialized component in a computer system that is primarily responsible for rendering and displaying visual graphics. While CPUs handle general-purpose computing tasks, GPUs excel at parallel processing and performing complex calculations necessary for graphics rendering.

GPUs are designed with hundreds or even thousands of processing cores, which allow them to handle massive amounts of data simultaneously. They excel at tasks such as rendering 2D and 3D graphics, encoding and decoding media files, and running complex simulations. Their parallel processing capabilities enable them to perform these tasks more efficiently and faster than CPUs.

One of the main applications of GPUs is in gaming, where they are responsible for rendering realistic and immersive graphics in real-time. The GPU takes the instructions from the CPU, processes the data, and generates the images that are then displayed on the monitor. The more powerful the GPU, the higher the resolution and graphical detail that can be achieved in games.

In addition to gaming, GPUs are also widely used in fields such as computer-aided design (CAD), video editing, scientific research, and artificial intelligence (AI) applications. Tasks such as rendering high-resolution images, performing complex calculations, and training machine learning models benefit greatly from the parallel processing power of GPUs.

Modern GPUs are equipped with specialized hardware, such as ray-tracing units and tensor cores, which further extends what they can do. Ray tracing simulates how light interacts with objects in a scene, resulting in more accurate reflections, shadows, and lighting effects. Tensor cores, on the other hand, are specialized hardware units that accelerate deep learning workloads, enabling faster training and inference in AI applications.

GPUs can be integrated into the CPU package or the motherboard, or come as separate graphics cards that can be added to a computer system. Dedicated graphics cards typically offer more processing power and carry their own video memory (VRAM), providing a significant boost to graphics-intensive tasks.

The Graphics Processing Unit (GPU) is an essential component in any computer system that requires high-performance graphics rendering. Its parallel processing capabilities and specialized architecture enable it to handle complex graphical tasks efficiently, making it indispensable in gaming, design, video editing, scientific research, and AI applications.

Network Storage

Network storage refers to the storage of data on network-attached devices that are accessible to multiple computers and users over a network. It allows for centralized storage and sharing of data, enabling collaboration and easy access to files and resources across an organization or network.

Network storage solutions are typically in the form of network-attached storage (NAS) devices or storage area networks (SAN). NAS devices are dedicated file servers that provide storage space and file sharing capabilities to clients connected to the network. SAN, on the other hand, is a high-speed network dedicated to storage, allowing multiple servers to access shared storage resources.

Network storage offers several benefits for organizations and users. It allows for data centralization, which simplifies management, backup, and disaster recovery processes. Instead of storing files on separate local hard drives, users can store and access data from a centralized location, promoting better organization and data security.

With network storage, multiple users can access the same files simultaneously. This promotes collaboration and eliminates the need for file sharing via external storage devices or email attachments. Users can easily share documents, edit files together, and manage version control, increasing productivity and efficiency.

Network storage also provides scalability, as additional storage capacity can be added to the network as needed. This allows organizations to accommodate growing data needs without having to replace existing storage devices or disrupt operations.

In addition, network storage solutions often include features such as data deduplication, compression, and encryption. Data deduplication eliminates duplicate data, reducing storage requirements and improving efficiency. Compression reduces file size, optimizing storage space and speeding up data transfer over the network. Encryption ensures that data stored on network devices is secure and protected from unauthorized access.
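
The ideas behind deduplication and compression can be sketched with Python's standard `hashlib` and `zlib` modules. This is only an illustration of the principle: the `store_chunks` and `restore` helpers, the fixed 8-byte chunk size, and the sample data are assumptions for the example and do not reflect how any particular storage product implements these features.

```python
import hashlib
import zlib


def store_chunks(data, chunk_size=8):
    """Split data into fixed-size chunks and keep one copy of each unique chunk."""
    store = {}    # SHA-256 digest -> chunk bytes (each unique chunk stored once)
    recipe = []   # ordered digests needed to rebuild the original data
    for start in range(0, len(data), chunk_size):
        chunk = data[start:start + chunk_size]
        digest = hashlib.sha256(chunk).hexdigest()
        store.setdefault(digest, chunk)  # only the first copy is kept
        recipe.append(digest)
    return store, recipe


def restore(store, recipe):
    """Rebuild the original bytes from the chunk store and the recipe."""
    return b"".join(store[digest] for digest in recipe)


data = b"ABCDEFGH" * 100 + b"12345678" * 50  # highly repetitive sample data

# Deduplication: 150 chunks are referenced, but only 2 distinct chunks are stored.
store, recipe = store_chunks(data)
print(len(recipe), len(store))  # prints: 150 2

# Compression: the same data encoded in fewer bytes, fully recoverable.
compressed = zlib.compress(data)
print(len(compressed) < len(data))  # prints: True
```

Both techniques are lossless: `restore(store, recipe)` and `zlib.decompress(compressed)` each return the original bytes.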

Cloud storage services, such as Dropbox, Google Drive, and Microsoft OneDrive, can also be considered forms of network storage. These services allow users to store and access their data over the internet, providing convenience, accessibility, and data synchronization across multiple devices.

Overall, network storage is a crucial component in modern computer systems and organizations. It provides a centralized and scalable solution for data storage, sharing, and collaboration, enhancing productivity, data security, and efficiency in today’s interconnected world.