Introduction

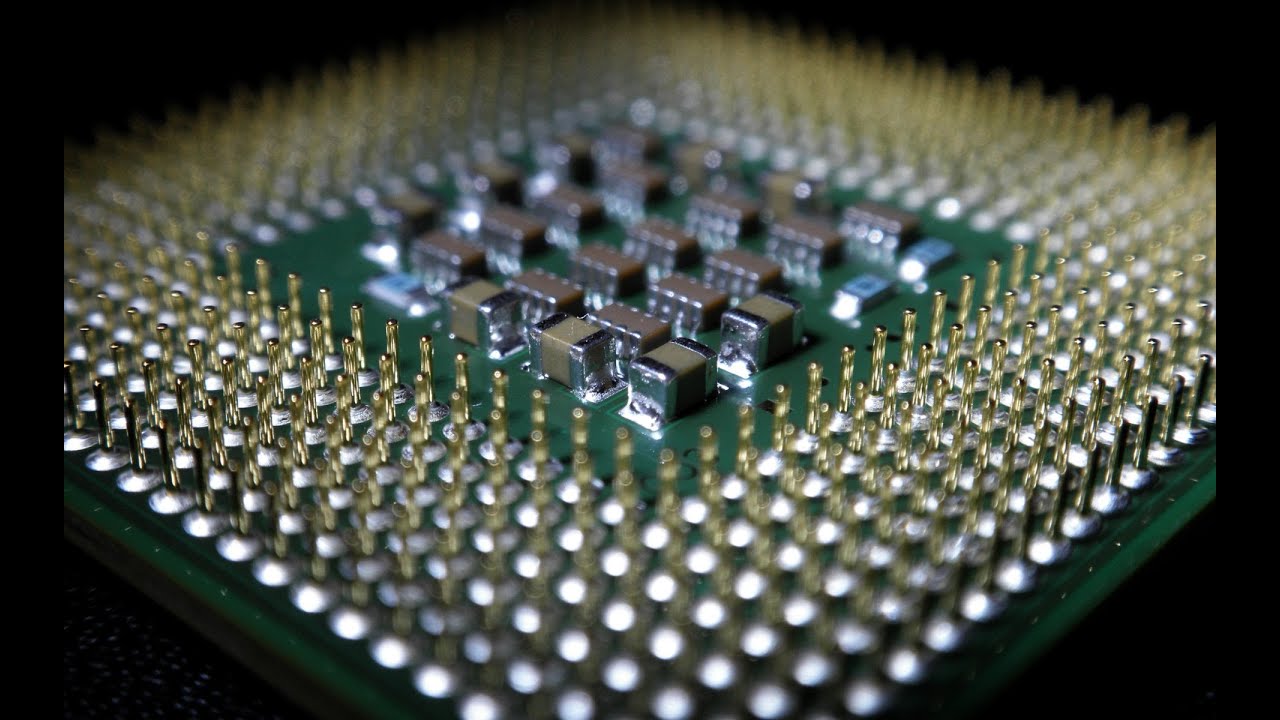

Computers have become an indispensable part of our lives, powering everything from our smartphones to complex data centers. At the heart of these technological marvels lies a crucial component known as the Central Processing Unit (CPU). The CPU is responsible for executing instructions and performing calculations, making it the brain of the computer.

The CPU is a complex semiconductor device that is made up of several interconnected components and circuits. Understanding the inner workings of a CPU can provide us with valuable insights into how computers function and process information.

In this article, we will explore the various components that make up a CPU and how they work together to carry out the tasks assigned to the computer. From transistors to logic gates, registers to cache memory, we will delve into the fascinating world of CPU architecture.

By the end of this article, you will have a clearer understanding of what goes on inside a CPU and how it enables your computer to perform various operations with speed and precision.

Transistors

Transistors are the building blocks of modern electronic devices, including CPUs. They are small electrical switches that control the flow of electric current within a circuit. Transistors can be in two states: on or off, representing the values of 1 or 0 in binary code, the language of computers.

Transistors are typically made from semiconducting materials, such as silicon or germanium, which have the ability to conduct electricity under certain conditions. The most common type of transistor found in CPUs is the Metal-Oxide-Semiconductor Field-Effect Transistor (MOSFET).

A MOSFET has three main terminals: a source, a drain, and a gate. When a voltage is applied to the gate, it creates an electric field that controls the flow of current between the source and the drain. By varying the voltage at the gate, the transistor can be switched on or off, allowing or blocking the flow of current.

In a CPU, millions or even billions of transistors are compactly integrated onto a single silicon chip. These transistors form the basis of the CPU’s ability to process and store data. A larger transistor count lets designers build more cores, wider execution units, and larger caches, which generally leads to higher processing power.

Transistor technology has evolved over the years to accommodate the increasing demand for faster and more efficient CPUs. Smaller transistors allow for denser packing on the chip, resulting in more powerful CPUs with lower power consumption. The ongoing development of transistor technology has been a key driving force in advancing computer capabilities.

While transistors serve as the fundamental building blocks of CPUs, it is the combination and coordination of numerous transistors working together that enables the CPU to carry out complex tasks. The next section will explore how these transistors are interconnected to perform logical operations.

Logic Gates

Logic gates are electronic circuits built using transistors that perform logical operations on binary inputs to produce a binary output. These gates form the backbone of digital logic, enabling computers to process and manipulate data.

There are several types of logic gates, each with its own unique functionality: AND, OR, NOT, NAND, NOR, XOR, and XNOR gates. These gates operate based on predefined truth tables that specify the output value for all possible input combinations.

The AND gate, for example, produces a HIGH output (1) only when all of its inputs are HIGH. The OR gate, on the other hand, produces a HIGH output if any of its inputs are HIGH. The NOT gate, also known as an inverter, produces the inverse of its input.
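The truth tables described above can be reproduced with a short Python sketch. It models each gate as a function on the bits 0 and 1; the function names (`and_gate`, `truth_table`, and so on) are chosen here purely for illustration.

```python
from itertools import product

# Each gate is modeled as a function on bits (0 or 1).
def and_gate(a, b):
    return a & b

def or_gate(a, b):
    return a | b

def not_gate(a):
    return 1 - a

def nand_gate(a, b):
    return not_gate(and_gate(a, b))

def nor_gate(a, b):
    return not_gate(or_gate(a, b))

def xor_gate(a, b):
    return a ^ b

def xnor_gate(a, b):
    return not_gate(xor_gate(a, b))

def truth_table(gate):
    """Return the gate's output for every combination of two input bits."""
    return {(a, b): gate(a, b) for a, b in product((0, 1), repeat=2)}

for gate in (and_gate, or_gate, nand_gate, nor_gate, xor_gate, xnor_gate):
    print(gate.__name__, truth_table(gate))
```

The AND row, for instance, maps only the input pair (1, 1) to 1, which matches the description of the AND gate above.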

Logic gates are implemented using transistors as switches. By carefully arranging the transistors and their connections, the desired logical operations can be achieved. The output of one gate can be connected to the input of another gate, allowing for complex combinations and sequences of logical operations.
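As a rough illustration of transistors acting as switches, the sketch below models an inverter and a NAND gate in the CMOS style, which pairs n-channel and p-channel MOSFETs. CMOS is an assumption here, since the article does not name a specific circuit family, and the helper names are hypothetical. Each transistor is reduced to a yes/no answer: does it conduct for this gate voltage?

```python
def nmos_conducts(gate):
    """An n-channel MOSFET conducts when its gate input is HIGH (1)."""
    return gate == 1

def pmos_conducts(gate):
    """A p-channel MOSFET conducts when its gate input is LOW (0)."""
    return gate == 0

def cmos_inverter(a):
    # One pMOS connects the output to the supply, one nMOS connects it to ground.
    pull_up = pmos_conducts(a)
    return 1 if pull_up else 0

def cmos_nand(a, b):
    # Pull-up network: two pMOS transistors in parallel (either one can drive HIGH).
    pull_up = pmos_conducts(a) or pmos_conducts(b)
    # Pull-down network: two nMOS transistors in series (both needed to drive LOW).
    pull_down = nmos_conducts(a) and nmos_conducts(b)
    # Exactly one network conducts for any input, so the output is well defined.
    return 1 if pull_up and not pull_down else 0

for a in (0, 1):
    for b in (0, 1):
        print(a, b, cmos_nand(a, b))
```

The model ignores voltages, timing, and physical effects; it only shows how series and parallel switch arrangements produce a logical function.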

In a CPU, logic gates are used to perform arithmetic calculations, logical comparisons, and control flow operations. Through the arrangement and interconnection of millions of logic gates, the CPU can execute complex instructions and algorithms.
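To make the link between gates and arithmetic concrete, here is a minimal sketch of a one-bit full adder built only from AND, OR, and XOR, chained into a ripple-carry adder. The 8-bit default width and the function names are assumptions for illustration; real CPUs typically use faster adder designs.

```python
def and_gate(a, b):
    return a & b

def or_gate(a, b):
    return a | b

def xor_gate(a, b):
    return a ^ b

def full_adder(a, b, carry_in):
    """Add three bits using only gates; return (sum_bit, carry_out)."""
    partial = xor_gate(a, b)
    sum_bit = xor_gate(partial, carry_in)
    carry_out = or_gate(and_gate(a, b), and_gate(partial, carry_in))
    return sum_bit, carry_out

def ripple_carry_add(x, y, width=8):
    """Add two unsigned integers bit by bit, passing the carry along."""
    carry = 0
    result = 0
    for i in range(width):
        a = (x >> i) & 1
        b = (y >> i) & 1
        sum_bit, carry = full_adder(a, b, carry)
        result |= sum_bit << i
    return result, carry

print(ripple_carry_add(5, 3))      # (8, 0)
print(ripple_carry_add(200, 100))  # (44, 1): 300 does not fit in 8 bits
```

The final carry of 1 in the second call signals that the true result exceeded the 8-bit width.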

Advancements in transistor technology, such as the shrinking of transistor sizes, have allowed for the integration of more logic gates on a single chip. This increased density has greatly enhanced CPU performance, enabling faster and more efficient computations.

The constant pursuit of smaller and more efficient logic gates has driven the evolution of CPU design. Manufacturers strive to develop new materials and techniques that push the boundaries of transistor technology, resulting in ever-improving CPUs with higher processing power.

Now that we understand the role of logic gates in a CPU, let’s explore another critical component: the Arithmetic Logic Unit (ALU).

ALU (Arithmetic Logic Unit)

The Arithmetic Logic Unit (ALU) is a vital component of a CPU responsible for performing arithmetic and logical operations on data. It is the heart of the CPU’s computational power and plays a crucial role in executing instructions.

The ALU is built primarily from logic gates and operates on operands held in registers. It can carry out basic arithmetic operations such as addition and subtraction on binary numbers; depending on the design, multiplication and division are handled by the ALU itself or by dedicated circuitry alongside it. Additionally, it can perform logical operations such as AND, OR, and XOR, as well as bitwise shifts.

When a program or instruction calls for a mathematical operation, the CPU uses the ALU to carry out the desired calculation. The ALU takes the input data from the CPU’s registers, performs the operation, and stores the result back into the registers.

For example, if the CPU is instructed to add two numbers, the ALU receives the operands from registers, performs the addition, and stores the result in another register. This result can then be used by subsequent instructions or stored in memory.
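The register-to-ALU-to-register flow can be sketched in a few lines of Python. This is a toy model, not a real design: the 8-bit width, the operation names, and the register names R0 to R2 are all assumptions made for the example.

```python
MASK = 0xFF  # assumption: an 8-bit ALU, so results wrap around at 256

def alu(op, a, b):
    """Apply one arithmetic or logical operation to two operands."""
    operations = {
        "ADD": lambda: a + b,
        "SUB": lambda: a - b,
        "AND": lambda: a & b,
        "OR": lambda: a | b,
        "XOR": lambda: a ^ b,
        "SHL": lambda: a << b,  # shift a left by b bit positions
    }
    return operations[op]() & MASK

# Hypothetical register file holding the operands and the result.
registers = {"R0": 12, "R1": 30, "R2": 0}

# "Add R0 and R1, store the result in R2."
registers["R2"] = alu("ADD", registers["R0"], registers["R1"])
print(registers["R2"])  # 42
```

Masking the result to 8 bits mimics the fixed width of hardware registers: subtracting 10 from 5, for example, wraps around to 251 rather than producing a negative number.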

The ALU’s ability to perform arithmetic operations quickly and accurately is paramount to the overall speed and efficiency of the CPU. Manufacturers continuously strive to optimize the design and performance of the ALU to improve the overall computational capabilities of CPUs.

Modern CPUs often contain several ALUs and other execution units, so multiple operations can be handled simultaneously or in rapid succession. This parallelism increases the overall processing speed and allows for efficient execution of complex algorithms.

Furthermore, the ALU includes additional circuitry for handling control signals, enabling it to perform conditional operations. For example, it can compare two values and determine if they are equal, less than, or greater than each other. This capability is essential for decision-making and branching within computer programs.
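One common way to implement such a comparison is to subtract one value from the other and inspect the resulting flags. The sketch below assumes unsigned 8-bit operands and uses illustrative flag names (`zero`, `borrow`); actual flag sets differ between architectures.

```python
def compare(a, b, width=8):
    """Compare two unsigned values by subtracting and examining flags."""
    mask = (1 << width) - 1
    a &= mask
    b &= mask
    diff = (a - b) & mask
    flags = {
        "zero": diff == 0,  # set when the operands are equal
        "borrow": a < b,    # set when the subtraction needed a borrow
    }
    if flags["zero"]:
        relation = "equal"
    elif flags["borrow"]:
        relation = "less"
    else:
        relation = "greater"
    return flags, relation

print(compare(7, 7)[1])  # equal
print(compare(3, 9)[1])  # less
print(compare(9, 3)[1])  # greater
```

A branch instruction can then test these flags to decide which instruction runs next.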

Overall, the ALU is a crucial component within a CPU, responsible for carrying out the calculations and logical operations necessary for the functioning of a computer. Its efficiency and capabilities directly impact the overall performance of the CPU and the speed at which computations are executed.

Now that we have explored the ALU, let’s examine another component that helps in the execution of instructions: the Control Unit.

Control Unit

The Control Unit is a crucial component of a CPU that coordinates and manages the execution of instructions. It plays a vital role in ensuring that the various components of the CPU work together harmoniously to carry out the desired operations.

The Control Unit receives instructions from memory and decodes them, determining the specific operation to be performed. It then orchestrates the flow of data between the different functional units of the CPU, such as the ALU, registers, and memory.

One of the key tasks of the Control Unit is to fetch instructions from memory and determine the sequence in which they need to be executed. It ensures that instructions are fetched, decoded, and executed in the correct order, following the program’s logical flow.
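The fetch, decode, and execute sequence can be illustrated with a very small interpreter. The instruction set below (LOAD, ADD, JMP, HALT) is invented for this sketch and does not correspond to any real CPU.

```python
def run(program):
    """Execute a list of instructions with a simple fetch-decode-execute loop."""
    registers = {"R0": 0, "R1": 0}
    pc = 0  # program counter: index of the next instruction
    while pc < len(program):
        instruction = program[pc]         # fetch
        opcode, *operands = instruction   # decode
        pc += 1                           # default: continue with the next one
        if opcode == "LOAD":              # execute
            register, value = operands
            registers[register] = value
        elif opcode == "ADD":
            destination, source = operands
            registers[destination] += registers[source]
        elif opcode == "JMP":
            pc = operands[0]              # change the flow of the program
        elif opcode == "HALT":
            break
        else:
            raise ValueError(f"unknown opcode: {opcode}")
    return registers

program = [
    ("LOAD", "R0", 5),
    ("LOAD", "R1", 7),
    ("JMP", 4),          # skip the next instruction
    ("LOAD", "R0", 99),  # never executed
    ("ADD", "R0", "R1"),
    ("HALT",),
]
print(run(program))  # {'R0': 12, 'R1': 7}
```

Instructions normally run in order, and the jump shows how the sequence can be redirected to follow the program's logical flow.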

Additionally, the Control Unit is responsible for managing the timing and synchronization of the CPU’s internal operations. It uses a clock signal to control the timing of various tasks, ensuring that they occur in a coordinated and synchronized manner. This synchronization is crucial for the proper functioning of the CPU and preventing data corruption or errors.

Another important function of the Control Unit is to manage the transfer of data between the CPU and external devices. It facilitates input and output operations, allowing the CPU to communicate with peripherals such as keyboards, displays, and storage devices.

The Control Unit also handles the fetching and storing of data in memory. It coordinates the retrieval of data from memory when needed for calculations and instructions, as well as the storing of results back into memory for future use or for output.

In many modern CPUs, parts of the Control Unit are implemented using microcode, which is low-level code that specifies how to carry out various operations step by step. This microcode is stored in a special area of memory within the CPU and allows for flexibility and reusability of the control logic.

Overall, the Control Unit acts as the “traffic controller” within the CPU, ensuring that instructions are executed correctly and data flows smoothly between the different components. Without the Control Unit’s coordination and management, the CPU would not be able to carry out instructions efficiently or accurately.

Now that we have explored the Control Unit, let’s move on to another important component: Registers.

Registers

Registers are small, high-speed storage units within a CPU that store data used for immediate processing. They are essential components in the functioning of a CPU, providing temporary storage and quick access to critical data during instruction execution.

Registers are built using flip-flops, which are electronic circuits capable of storing a single bit of information. Each register can store a specific amount of data, typically represented in binary form.
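A register can be pictured as a row of flip-flops, one per bit. The sketch below is a simplified software model with assumed class names (`DFlipFlop`, `Register`); it captures the storage behavior but not the electrical details or clock edges of real hardware.

```python
class DFlipFlop:
    """Stores a single bit; the stored value changes only when clocked."""

    def __init__(self):
        self.q = 0

    def clock(self, d):
        # On a clock pulse, capture the input bit d.
        self.q = d & 1
        return self.q

class Register:
    """A fixed-width register made of one flip-flop per bit."""

    def __init__(self, width=8):
        self.bits = [DFlipFlop() for _ in range(width)]

    def load(self, value):
        # Bit i of the value goes to flip-flop i; higher bits are dropped.
        for i, flip_flop in enumerate(self.bits):
            flip_flop.clock((value >> i) & 1)

    def read(self):
        return sum(flip_flop.q << i for i, flip_flop in enumerate(self.bits))

register = Register(width=8)
register.load(0b10110010)
print(register.read())  # 178
```

Because the register has only eight flip-flops, loading a value that needs more than eight bits keeps just the lowest eight.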

There are different types of registers in a CPU, each serving a specific purpose. The most common types of registers include:

- Instruction Register (IR): This register holds the current instruction being executed by the CPU. It stores the opcode, which defines the operation to be performed, as well as any associated operands.

- Program Counter (PC): The Program Counter keeps track of the memory address of the next instruction to be fetched and executed. It is normally incremented after each instruction, allowing the CPU to fetch instructions sequentially from memory; a jump or branch instruction loads it with a new address instead.

- General-Purpose Registers: These registers are used for storing intermediate results and operands during instruction execution. They provide quick access to data needed for arithmetic and logical operations.

- Status Register: The Status Register contains flags that indicate the outcome of previous operations, such as arithmetic overflow, zero result, or carry. These flags are used by the Control Unit to make decisions during program execution.

Registers play a vital role in improving the efficiency and speed of the CPU. Since they are located within the CPU, they can be accessed much faster than external memory. This allows for quicker data manipulation and reduces the need for frequent memory access.

Additionally, registers help to minimize bottlenecks in data transfer between different components of the CPU. Operations can be performed directly on data stored in registers, reducing the need for constant data movement to and from memory.

The number and size of registers vary depending on the CPU architecture. CPUs with a larger number of registers often have improved performance as they can store more data locally, reducing the need for frequent memory access.

Registers are a critical component in the execution of instructions within a CPU. They provide temporary storage and quick access to data, enabling the CPU to perform calculations and manipulate data efficiently. Without registers, the CPU would have to rely solely on external memory, significantly reducing its speed and performance.

Now that we have explored registers, let’s dive into another key component: Cache Memory.

Cache Memory

Cache memory is a type of high-speed memory that is located between the CPU and the main memory in a computer system. It serves as a temporary storage for frequently used data, providing quick access and reducing the need for accessing the slower main memory.

The purpose of cache memory is to bridge the speed gap between the fast CPU and the relatively slower main memory. It helps improve the overall performance of the computer system by reducing the time it takes to access data that is frequently used by the CPU.

Cache memory operates on the principle of locality of reference, which states that data that has been recently accessed, along with data stored near it, is likely to be accessed again in the near future. Cache memory takes advantage of this principle by storing copies of frequently accessed data from the main memory.

There are different levels of cache memory within a CPU, typically referred to as L1, L2, and sometimes even L3 caches. The L1 cache is the closest and fastest cache to the CPU, with the smallest capacity. The L2 cache is larger and slightly slower, while the L3 cache, if present, is larger still but slower compared to the L1 and L2 caches.

When the CPU needs to access data, it first checks the L1 cache. If the data is found there, referred to as a cache hit, it can be quickly retrieved. If the data is not present in the L1 cache, a cache miss, the CPU checks each subsequent level of cache in turn until it either finds the data or misses in the last level.

In the case of a cache miss, the CPU needs to retrieve the data from the main memory. This process takes longer due to the slower access speed of the main memory. However, once the data is retrieved, it is not only used by the CPU but also stored in a cache level (if available) for future faster access.
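The hit-and-miss behavior can be simulated with a small model. The sketch below assumes a single-level, direct-mapped cache, which is only one of several possible organizations, and the class name and sizes are made up for the example.

```python
class DirectMappedCache:
    """A tiny cache in which each memory address maps to exactly one line."""

    def __init__(self, num_lines, memory):
        self.num_lines = num_lines
        self.memory = memory               # stands in for slower main memory
        self.lines = [None] * num_lines    # each entry is (tag, value) or None
        self.hits = 0
        self.misses = 0

    def read(self, address):
        index = address % self.num_lines   # which cache line to look in
        tag = address // self.num_lines    # identifies which address is stored
        line = self.lines[index]
        if line is not None and line[0] == tag:
            self.hits += 1                 # cache hit: no memory access needed
            return line[1]
        self.misses += 1                   # cache miss: go to main memory
        value = self.memory[address]
        self.lines[index] = (tag, value)   # keep a copy for future accesses
        return value

memory = list(range(100, 164))             # 64 words of pretend main memory
cache = DirectMappedCache(num_lines=4, memory=memory)
for address in [0, 1, 0, 1, 4, 0]:
    cache.read(address)
print(cache.hits, cache.misses)  # 2 4
```

Addresses 0 and 4 compete for the same line in this model, so reading address 4 evicts address 0 and the final read of address 0 misses again.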

The use of cache memory greatly improves the CPU’s efficiency by reducing the latency associated with memory access. It allows the CPU to retrieve frequently used data quickly, thereby keeping the CPU busy with actual processing tasks rather than waiting for data from the main memory.
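The latency benefit can be estimated with the commonly used average memory access time relation: hit time plus miss rate times miss penalty. The figures in the example are hypothetical and chosen only to make the arithmetic easy to follow.

```python
def average_access_time_ns(hit_time_ns, miss_rate, miss_penalty_ns):
    """Average memory access time = hit time + miss rate * miss penalty."""
    return hit_time_ns + miss_rate * miss_penalty_ns

# Hypothetical figures: 1 ns cache hit, 100 ns extra for a main memory access.
with_cache = average_access_time_ns(1, 0.05, 100)  # 5% of accesses miss
without_cache = average_access_time_ns(1, 1.0, 100)  # every access misses
print(with_cache)     # 6.0
print(without_cache)  # 101.0
```

Under these assumed numbers, a cache that satisfies 95% of accesses cuts the average access time from about 101 ns to about 6 ns.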

Cache memory is a vital component of modern CPUs and is constantly evolving to meet the ever-increasing demands for faster and more efficient processing. Manufacturers continue to improve cache designs, increase cache sizes, and optimize cache management algorithms to further enhance CPU performance.

Now that we have explored cache memory, let’s move on to another crucial aspect: the clock.

Clock

The clock is a fundamental component of a CPU that provides synchronization and timing for the various operations within the computer system. It generates regular electronic pulses, known as clock cycles, which act as a metronome for the CPU’s internal processes.

Each clock cycle represents a fixed unit of time, typically measured in nanoseconds. The clock signal ensures that all components within the CPU start, process, and complete their operations in a coordinated manner, allowing for the orderly execution of instructions.

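Executing an instruction involves several steps: the CPU fetches the instruction, decodes it, carries out the necessary operations, and stores the result. Each step is paced by the clock, and a single instruction typically takes more than one clock cycle to complete. Many CPUs use pipelining to overlap these steps, so that a new instruction can begin while earlier ones are still in progress.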

The frequency of the clock, measured in Hertz (Hz), determines the number of clock cycles per second; a 3 GHz clock, for example, completes three billion cycles each second. All else being equal, a higher clock frequency means more instructions can be executed in a given amount of time, resulting in improved CPU performance.
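The relationship between frequency, cycle time, and run time can be worked through numerically. The sketch below uses the standard relation that execution time equals instruction count times average cycles per instruction divided by clock frequency; the workload figures are invented for illustration.

```python
def cycle_time_ns(frequency_hz):
    """Duration of one clock cycle in nanoseconds."""
    return 1e9 / frequency_hz

def execution_time_s(instruction_count, cycles_per_instruction, frequency_hz):
    """Estimated run time: total cycles divided by cycles per second."""
    return instruction_count * cycles_per_instruction / frequency_hz

print(cycle_time_ns(4e9))  # 0.25 (a 4 GHz clock ticks every 0.25 ns)

# Hypothetical workload: 2 billion instructions at 1.5 cycles each on average.
print(execution_time_s(2_000_000_000, 1.5, 4e9))  # 0.75
print(execution_time_s(2_000_000_000, 1.5, 2e9))  # 1.5
```

Halving the clock frequency doubles the estimated run time here, but so would doubling the average cycles per instruction, which is why clock speed alone does not determine performance.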

The clock speed is a critical factor in determining the overall speed of a CPU. However, it is worth noting that other factors can also impact performance, such as the efficiency of the CPU’s architecture, the number of cores, and the presence of cache memory.

Over the years, CPU clock speeds have significantly increased due to advances in semiconductor technology. However, there is a limit to how fast a CPU can operate before encountering issues such as excessive heat generation and power consumption.

To address these challenges, modern CPUs incorporate features such as dynamic frequency scaling, where the clock speed adjusts based on workload demands. This allows the CPU to operate at higher frequencies when needed, while reducing power consumption during periods of lower activity.

While the clock speed plays a crucial role in CPU performance, it is essential to consider other factors as well. The efficiency of the CPU’s architecture, the number and size of registers, the presence of cache memory, and the performance of other components all contribute to the overall processing power of the CPU.

The clock is an integral component that ensures the smooth and synchronized operation of a CPU. It provides a timekeeping mechanism that allows for the efficient execution of instructions and the coordination of various internal processes within the computer system.

Now that we have explored the role of the clock, let’s move on to another important aspect: Input/Output.

Input/Output

Input/Output (I/O) is a vital component of a computer system that enables communication between the CPU and external devices such as keyboards, mice, monitors, printers, and storage devices. It allows information to flow into and out of the computer system, facilitating the exchange of data and user interaction.

Input devices provide a means for users to input data and commands into the computer. Examples of input devices include keyboards, mice, touchscreens, scanners, and microphones. When you type on a keyboard or move the mouse, these devices send signals to the CPU, which interprets them and responds accordingly.

Output devices, on the other hand, provide a way for the computer to convey information to the user. Monitors, printers, speakers, and projectors are examples of output devices. They receive signals from the CPU and display or produce output in various forms, such as text, images, or sound.

Input and output operations involve the transmission of data between the CPU and the external devices. This data is typically transferred through channels called I/O ports. These ports are connected to the relevant devices, and the CPU communicates with them using specific protocols.

The operating system uses drivers, software programs that run on the CPU, to interface with the various input and output devices. These drivers provide a standard set of commands and functions that other software can use to interact with the devices. They ensure compatibility and enable efficient data exchange.

As technology has advanced, the types and capabilities of input and output devices have evolved. For example, traditional keyboards have been complemented by touchscreens and voice recognition systems. Monitors have transitioned from bulky CRT displays to sleek and energy-efficient LCD and LED screens.

Efficient I/O operations are crucial for the overall performance of a computer system. Slow or inefficient I/O can lead to bottlenecks and reduced system responsiveness. To optimize I/O performance, techniques such as buffering, caching, and parallel processing are employed.

In addition to external devices, I/O operations also involve the transfer of data between the CPU and secondary storage devices like hard drives, solid-state drives, and optical drives. These devices allow for long-term storage and retrieval of data, ensuring that it is not lost when the system is powered off.

The ability to seamlessly handle input and output operations is imperative for a computer system to interact with users, exchange data with external devices, and access and store information efficiently. A well-designed I/O subsystem contributes significantly to the overall user experience and system functionality.

Now that we have explored the role of input and output in a computer system, let’s move on to the final section of our article: the bus system.

Bus System

The bus system is a critical component of a computer that connects various hardware components, allowing them to communicate and exchange data with each other. It serves as a pathway for transmitting information between the CPU, memory, and peripherals, enabling the seamless flow of data throughout the system.

A bus consists of a set of conductive wires or traces that carry electrical signals. These wires transmit different types of data, such as addresses, control signals, and actual data, between components. The bus system utilizes a standardized protocol to ensure compatibility and efficient data transfer.

There are typically three types of buses in a computer system:

- Data bus: This bus carries actual data between the CPU, memory, and other devices. It is bi-directional, allowing for the transfer of data from the CPU to memory or from memory to the CPU.

- Address bus: The address bus is responsible for transmitting memory addresses. It allows the CPU to specify the location in memory where data is being read from or written to.

- Control bus: The control bus carries control signals that coordinate and regulate the operation of the different components within the system. It includes signals for memory read and write operations, interrupt requests, and clock synchronization.
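The width of the address bus limits how much memory can be addressed: with n address lines there are 2^n distinct addresses. The short sketch below computes this, assuming one byte per address (byte-addressable memory), which is common but not universal.

```python
def addressable_bytes(address_bits, bytes_per_location=1):
    """Number of bytes reachable with the given number of address lines."""
    return (2 ** address_bits) * bytes_per_location

print(addressable_bytes(16))  # 65536 (64 KiB)
print(addressable_bytes(32))  # 4294967296 (4 GiB)
```

Each additional address line doubles the number of locations the CPU can specify.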

The bus system facilitates the transfer of data by breaking it down into small chunks called words or bytes. These units of data are sent through the bus in parallel, meaning multiple bits can be transmitted simultaneously. The width of the bus, measured in bits, determines the maximum amount of data that can be transferred in a single bus cycle.

The effectiveness of the bus system is influenced by several factors, including bus width, clock speed, and the number of devices connected to the bus. A wider bus allows for the transfer of larger chunks of data, while a higher clock speed increases the rate at which data can be transferred.
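The effect of bus width and clock speed on throughput can be estimated with a simple calculation. The sketch assumes an idealized parallel bus that completes a fixed number of transfers per clock cycle with no protocol overhead; the function name and example figures are illustrative.

```python
def peak_bandwidth_bytes_per_s(width_bits, clock_hz, transfers_per_cycle=1):
    """Upper bound on bus throughput, ignoring any protocol overhead."""
    return (width_bits // 8) * clock_hz * transfers_per_cycle

# A 64-bit bus clocked at 100 MHz moves at most 800 million bytes per second.
print(peak_bandwidth_bytes_per_s(64, 100_000_000))  # 800000000

# Halving the width halves the peak; doubling the clock restores it.
print(peak_bandwidth_bytes_per_s(32, 100_000_000))  # 400000000
print(peak_bandwidth_bytes_per_s(32, 200_000_000))  # 800000000
```

Real buses fall short of this peak because some cycles are spent on addressing, arbitration between devices, and other control activity.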

Modern computer systems often employ advanced bus architectures, such as the Peripheral Component Interconnect (PCI) bus or the newer PCIe (PCI Express) bus. These buses offer faster data transfer rates, increased bandwidth, and better scalability to accommodate the demands of modern computing applications.

Efficient bus design is crucial for maximizing system performance and avoiding data bottlenecks. Techniques such as Direct Memory Access (DMA) allow devices to transfer data to and from memory directly, reducing the involvement of the CPU and improving overall system efficiency.

The bus system plays a pivotal role in connecting the various components of a computer and facilitating the seamless exchange of data. It ensures that instructions and data can be retrieved from memory, processed by the CPU, and sent to peripherals in an efficient, coordinated manner.

Now that we have explored the role of the bus system in a computer, we have covered the essential components that make up a CPU, along with the surrounding systems that contribute to its functioning.

Conclusion

The Central Processing Unit (CPU) is a complex and vital component of a computer system. It is responsible for executing instructions, performing calculations, and managing the overall operation of the system. Understanding the inner workings of a CPU can provide valuable insights into how computers function and process information.

In this article, we have explored the various components that make up a CPU, from transistors and logic gates to the Arithmetic Logic Unit (ALU), Control Unit, registers, cache memory, clock, input/output, and the bus system. Each of these components plays a crucial role in enabling the CPU to perform its tasks efficiently and effectively.

Transistors, as the building blocks of CPUs, control the flow of electric current within a circuit. Logic gates use interconnected transistors to perform logical and arithmetic operations. The ALU carries out calculations and logical operations, while the Control Unit coordinates and manages instruction execution.

Registers provide temporary storage and quick access to data, and cache memory bridges the speed gap between the CPU and main memory. The clock synchronizes the internal operations of the CPU, while the input/output system enables communication with external devices. The bus system connects the various components and facilitates data transfer.

By understanding the components of a CPU and how they work together, we gain insight into the remarkable capabilities of modern computers. The constant advancements in technology and design continue to push the boundaries of CPU performance, enabling faster, more efficient, and more powerful computational systems.

As technology continues to evolve, CPUs will become even more powerful and sophisticated, facilitating groundbreaking innovations in various industries. This understanding of CPU architecture provides us with a foundation to explore further topics in computer science and appreciate the incredible complexity and ingenuity behind the technology we rely on every day.

So, the next time you turn on your computer or use a smartphone, take a moment to appreciate the intricate design and remarkable capabilities of the CPU that brings these devices to life.