Introduction

The CPU, or Central Processing Unit, is often referred to as the brain of a computer. It is a vital component that performs the majority of the processing tasks in a computer system. From executing instructions to performing complex calculations, the CPU plays a crucial role in handling the various operations of the computer.

As technology has advanced, CPUs have become more powerful and capable of handling increasingly complex tasks. The constant evolution of CPUs has enabled computers to perform a wide range of functions, from running complex software applications to playing graphics-intensive video games.

In this article, we will explore the role of the CPU in a computer system and discuss its various components and functions. We will delve into concepts such as clock speed, cache memory, and instruction cycles to gain a better understanding of how the CPU processes information. Additionally, we will discuss the impact of factors such as interrupts and multithreading on the performance of the CPU.

Understanding the CPU and its functionalities is essential for both computer enthusiasts and everyday users. Whether you’re interested in building a computer, monitoring system performance, or simply curious about how your device operates, gaining knowledge about the CPU will give you valuable insights into the inner workings of a computer.

So, join us on this journey as we unravel the mysteries of the CPU and uncover the fascinating world of computer processing power.

Definition of CPU

The CPU, short for Central Processing Unit, is the primary component responsible for carrying out instructions and performing calculations in a computer system. It is often referred to as the brain of the computer as it controls and coordinates the various functions of the system.

The CPU acts as an intermediary between the computer’s hardware and software, enabling effective communication and execution of tasks. It interprets instructions from the computer’s memory and carries out the necessary operations to complete those instructions. These operations can include arithmetic calculations, data movement, logic comparisons, and more.

The CPU can be thought of as a complex electronic circuit made up of several components working together to process data. Its key components include the Arithmetic Logic Unit (ALU), which performs arithmetic and logical operations, the Control Unit (CU), which coordinates instructions and controls data flow, and various registers that temporarily store data and instructions.

While the CPU is a separate hardware component, it works closely with the computer’s memory, input/output devices, and other system components to facilitate seamless operation. It retrieves data and instructions from memory, performs calculations and operations on that data, and then sends the results back to memory or to an output device for further processing or displaying.

In essence, the CPU is responsible for the fundamental operations that make a computer function. It executes program instructions, manages data manipulation, and ensures the smooth functioning of the overall system. The performance of a CPU is determined by factors such as clock speed, cache memory, and the number of cores it possesses.

Overall, the CPU is an indispensable component of a computer system, providing the computing power necessary for running software applications, playing games, browsing the internet, and performing a vast array of tasks that we rely on computers for in our daily lives.

Components of CPU

The CPU (Central Processing Unit) consists of several key components that work together to handle the processing tasks of a computer system. These components include the Arithmetic Logic Unit (ALU), the Control Unit (CU), and various registers. Let’s explore each of these components in detail:

Arithmetic Logic Unit (ALU): The ALU is responsible for performing mathematical calculations and logical operations. It can perform tasks such as addition, subtraction, multiplication, division, and comparison operations like greater than or equal to, less than or equal to, etc. It operates on binary data, manipulating bits to perform the desired calculations.

Control Unit (CU): The Control Unit acts as the manager of the CPU. It coordinates the flow of data between the different components of the CPU and controls the execution of instructions. The CU fetches instructions from memory, decodes them, and sends signals to the ALU and other components to carry out the necessary operations.

Registers: Registers are small, high-speed memory units located within the CPU. They hold the data and instructions the CPU is currently working with, so that these can be accessed without going to main memory. The CPU has various types of registers, including special-purpose registers such as the instruction register (IR), program counter (PC), and stack pointer, as well as general-purpose registers that hold operands and intermediate results. Some simple designs also provide a dedicated accumulator and index registers.

These components work together in a coordinated manner to perform the processing tasks of the CPU. The ALU carries out the mathematical and logical operations, while the CU manages the control flow and ensures the correct execution of instructions. The registers provide temporary storage for data and instructions, allowing for fast access and manipulation by the CPU.

Besides these core components, the CPU is also connected to other critical components. The CPU communicates with the computer’s memory, input/output devices, and other peripheral devices via buses, allowing data to be transferred to and from these devices. The CPU interacts with the memory to fetch instructions and data, and it communicates with input/output devices to send or receive information.

Overall, the components of the CPU work in harmony to handle the processing tasks of a computer system. Their precise coordination and efficient operation are vital for the smooth functioning of the CPU and, consequently, the overall performance of the computer.

Arithmetic Logic Unit (ALU)

The Arithmetic Logic Unit (ALU) is a crucial component of the CPU (Central Processing Unit) that performs arithmetic and logical operations. It is responsible for carrying out mathematical calculations and executing logical comparisons required by the computer’s instructions.

The ALU operates on binary data, which means that it manipulates bits to perform calculations and comparisons. It can perform basic arithmetic operations, such as addition, subtraction, multiplication, and division, as well as logical operations like AND, OR, and NOT.

When the CPU receives an instruction that requires arithmetic or logical operations, the ALU is activated. It retrieves the necessary data from the registers or memory, performs the specified operation, and stores the result in a designated location.

The ALU functions through a combination of logic gates, which are electronic circuits that process binary signals. These gates, typically made up of transistors, carry out the necessary operations based on the input signals they receive. By combining multiple gates, the ALU can perform complex calculations and comparisons.
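To make the idea of combining gates concrete, here is a minimal sketch in Python of a full adder built from AND, OR, and XOR, chained into a ripple-carry adder. The function names and the 8-bit default width are assumptions chosen for illustration; real ALUs use faster adder designs, but the principle of composing simple gates is the same.

```python
def AND(a, b):
    return a & b

def OR(a, b):
    return a | b

def XOR(a, b):
    return a ^ b

def full_adder(a, b, carry_in):
    """Add three 1-bit inputs and return (sum_bit, carry_out)."""
    partial = XOR(a, b)
    sum_bit = XOR(partial, carry_in)
    carry_out = OR(AND(a, b), AND(partial, carry_in))
    return sum_bit, carry_out

def ripple_add(x, y, width=8):
    """Add two unsigned integers one bit at a time, like a chain of full adders."""
    result, carry = 0, 0
    for i in range(width):
        bit, carry = full_adder((x >> i) & 1, (y >> i) & 1, carry)
        result |= bit << i
    return result, carry  # carry is the bit that did not fit in `width` bits

print(ripple_add(5, 3))      # (8, 0)
print(ripple_add(200, 100))  # (44, 1): 300 does not fit in 8 bits
```

Each call to `full_adder` stands in for a handful of gates, and the loop stands in for wiring eight of them side by side.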

Furthermore, the ALU can also handle conditional operations. For example, it can perform comparisons, such as determining whether one value is greater than another. Based on the outcome of such comparisons, the ALU can set flags or trigger specific actions in the CPU control unit.
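The way an ALU produces both a result and a set of flags can be sketched as follows. This is a simplified model, not a description of any particular CPU: the operation names, the 8-bit width, and the three flags shown are assumptions made for the example.

```python
def alu(op, a, b=0, width=8):
    """Return (result, flags) for a small set of operations on unsigned values."""
    mask = (1 << width) - 1
    if op == "ADD":
        raw = a + b
    elif op == "SUB":
        raw = a - b
    elif op == "AND":
        raw = a & b
    elif op == "OR":
        raw = a | b
    elif op == "NOT":
        raw = ~a
    else:
        raise ValueError(f"unknown operation: {op}")

    result = raw & mask  # keep only the bits that fit in the register
    flags = {
        "zero": result == 0,
        # For ADD this means a carry out of the top bit, for SUB a borrow.
        "carry": op in ("ADD", "SUB") and not (0 <= raw <= mask),
        "negative": bool(result >> (width - 1)),  # top bit set
    }
    return result, flags

# A comparison can be done as a subtraction whose result is discarded:
_, flags = alu("SUB", 7, 7)
print(flags["zero"])   # True, so the two values are equal
_, flags = alu("SUB", 3, 5)
print(flags["carry"])  # True, a borrow occurred, so 3 < 5
```

The control unit can then test these flags to decide, for example, whether a conditional branch should be taken.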

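Modern CPUs incorporate ALUs with wide data paths, typically 64 bits, so that a whole 64-bit operand is processed in a single operation rather than in several narrower steps. Many also include several ALUs so that independent operations can proceed at the same time. Both improve the speed and efficiency of the CPU’s processing.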

Although the ALU is a fundamental component of the CPU, its performance can vary based on factors such as clock speed, the number of transistors, and the complexity of the operations it can handle. These factors ultimately determine the ALU’s processing capabilities and significantly impact the overall performance of the CPU.

In summary, the ALU is a critical component of the CPU responsible for executing arithmetic and logical operations. It performs calculations and comparisons on binary data, enabling the computer to carry out a wide range of tasks efficiently.

Control Unit (CU)

The Control Unit (CU) is a vital component of the CPU (Central Processing Unit) that orchestrates the execution of instructions and manages the flow of data within the computer system. It acts as the supervisor, ensuring that each instruction is carried out correctly and efficiently.

The primary role of the CU is to fetch instructions from memory and direct the necessary resources to execute them. It retrieves instructions sequentially, one by one, from the memory and sends signals to other components of the CPU to carry out the required operations.

When a new instruction is fetched, the CU decodes it, determining what specific operation or set of operations the CPU needs to perform. It then issues the appropriate control signals to the relevant components, enabling them to execute the instruction correctly. The CU ensures that instructions are executed in the correct order and that the data flow within the CPU is synchronized.

One critical task performed by the CU is managing the control flow of instructions. It determines the next instruction to be fetched from the program counter (PC), which holds the memory address of the next instruction to be executed. Normally the CU advances the PC to the instruction that follows the current one; when a jump or branch is taken, it loads the PC with the target address instead.

The CU also coordinates with the Arithmetic Logic Unit (ALU) to perform calculations and logical operations. It sends the necessary data and control signals to the ALU, indicating the desired operation to be executed. The CU then receives the result from the ALU and appropriately stores the outcome in memory or registers.

Interrupt handling is another essential responsibility of the CU. Interrupts are signals that break into the normal execution of instructions and require prompt attention, typically raised by an external event such as keyboard input or the completion of a disk operation. The CU pauses the current execution, saves the context, and transfers control to the code that handles the interrupt request before resuming the previous execution.

In modern CPUs, the CU is implemented with hardwired control logic, microcode, or a combination of the two. Microcode is a set of low-level instructions, stored inside the CPU, that breaks a machine instruction down into the sequence of elementary steps the hardware performs. It serves as an intermediary between the machine instructions a program contains and the hardware components of the CPU.

Overall, the Control Unit plays a critical role in managing the execution of instructions and the flow of data within the CPU. Its coordination of fetch, decode, and execute stages ensures the efficient operation of the CPU, allowing it to carry out a wide range of tasks accurately and reliably.

Registers

Registers are small, high-speed memory units within the CPU (Central Processing Unit) that temporarily store data and instructions needed for immediate access and manipulation. These registers play a vital role in enhancing the performance and efficiency of the CPU by providing quick access to frequently used data.

The CPU contains various types of registers, each serving a specific purpose in the execution of instructions and management of data.

Instruction Register (IR): The Instruction Register holds the current instruction being executed by the CPU. It temporarily stores the fetched instruction from the memory before it is decoded and executed by other components of the CPU.

Program Counter (PC): The Program Counter keeps track of the memory address of the next instruction to be fetched and executed. It is advanced as each instruction is processed, which produces sequential execution, and it is overwritten with a new address when a jump or branch is taken.

General-Purpose Registers: General-purpose registers are used to store intermediate results, variables, and operands during the execution of instructions. These registers can be utilized by the CPU to perform arithmetic calculations, logical operations, and data manipulation. The number and size of general-purpose registers may vary depending on the specific CPU architecture.

Accumulator: The Accumulator is a register that holds the result of the most recent arithmetic or logical operation performed by the CPU. It is commonly used in simple CPUs where all arithmetic operations involve the accumulator.

Index Registers: Index registers hold an offset or index that is added to a base address to form the address of an operand. They make it convenient to step through arrays and other data structures, because the program can change the index instead of rewriting the address in each instruction.

Stack Pointer: The Stack Pointer points to the top of the stack, which is a region of memory used for temporary storage during subroutine calls and interrupts. It facilitates the efficient management of function calls and context switching in a computer system.
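As an illustration of how a stack pointer is used, the sketch below models a small region of memory with a stack that grows towards lower addresses. The downward growth, the 16-word memory, and the class and method names are all assumptions for the example; conventions differ between architectures.

```python
class Stack:
    """A stack kept in a block of memory and tracked by a stack pointer (SP)."""

    def __init__(self, memory_size=16):
        self.memory = [0] * memory_size
        self.sp = memory_size  # empty stack: SP sits just past the last word

    def push(self, value):
        if self.sp == 0:
            raise OverflowError("stack overflow")
        self.sp -= 1                    # move SP down to the new top
        self.memory[self.sp] = value

    def pop(self):
        if self.sp == len(self.memory):
            raise IndexError("stack underflow")
        value = self.memory[self.sp]
        self.sp += 1                    # move SP back up
        return value

stack = Stack()
stack.push(0x1234)   # e.g. a return address saved by a subroutine call
stack.push(7)        # e.g. a register value saved by the subroutine
print(stack.sp)      # 14
print(stack.pop())   # 7
print(stack.pop())   # 4660, which is 0x1234
```

Values come back in the reverse of the order they were pushed, which is what lets nested subroutine calls return to the right place.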

Registers operate at extremely high speeds, making them ideal for storing data that needs to be accessed quickly. Accessing data from registers is faster than accessing data from the computer’s main memory, resulting in improved performance and responsiveness.

By utilizing registers, the CPU can reduce the number of memory access operations, minimizing the system’s overall latency and increasing the efficiency of data manipulation. This efficient data handling contributes to the faster execution of instructions and enhances the overall performance of the CPU.

In summary, registers in the CPU provide temporary storage for data and instructions, enabling fast access and manipulation during the execution of instructions. They serve crucial roles in managing instruction execution, storing intermediate results, and optimizing data access, contributing to the efficiency and speed of the CPU’s operations.

Clock Speed

Clock speed is an essential factor in determining the performance of a CPU. It refers to the number of clock cycles the CPU completes per second and is measured in hertz (Hz); modern CPUs run at several gigahertz (GHz), or billions of cycles per second.

The clock speed is the frequency at which the CPU’s internal clock generates electrical pulses to synchronize the operations of the various components within the CPU. Each pulse marks one clock cycle. How much work is completed per cycle depends on the design: a simple instruction may finish in one cycle, a complex one may take several, and many modern CPUs can complete more than one instruction per cycle.

All else being equal, a higher clock speed means that the CPU can execute more instructions within a given timeframe, resulting in faster processing. For example, a CPU with a clock speed of 3.0 GHz runs through three billion cycles per second, while a CPU with a clock speed of 2.0 GHz runs through two billion. If both complete the same number of instructions per cycle, the 3.0 GHz CPU executes 1.5 times as many instructions per second.

However, it is important to note that clock speed alone does not determine the overall performance of a CPU. Other factors, such as the CPU’s architecture, cache size, and the efficiency of its components, also play a significant role.
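A rough way to see how clock speed and design efficiency combine is to multiply the clock rate by the average number of instructions completed per cycle (IPC). The sketch below does exactly that; the IPC figures are invented for illustration and do not describe any real processor.

```python
def instructions_per_second(clock_hz, ipc):
    """Estimated throughput: cycles per second times instructions per cycle."""
    return clock_hz * ipc

def cycle_time_ns(clock_hz):
    """Duration of one clock cycle in nanoseconds."""
    return 1e9 / clock_hz

# Hypothetical CPU A: higher clock, one instruction per cycle on average.
cpu_a = instructions_per_second(3.0e9, ipc=1.0)
# Hypothetical CPU B: lower clock, but two instructions per cycle on average.
cpu_b = instructions_per_second(2.0e9, ipc=2.0)

print(cpu_a)                  # 3000000000.0
print(cpu_b)                  # 4000000000.0
print(cycle_time_ns(2.0e9))   # 0.5
```

In this made-up comparison the CPU with the lower clock speed comes out ahead, which is why clock speed is only meaningful alongside the other factors.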

In the past, increases in clock speed were the primary means of improving CPU performance. However, as higher clock speeds ran into practical limits on power consumption and heat dissipation, manufacturers shifted their focus towards alternative approaches to enhance performance.

Today, many CPUs implement technologies such as pipelining, superscalar execution, and multiple cores to improve performance without solely relying on clock speed. These advancements enable the CPU to execute instructions in parallel and handle multiple tasks simultaneously, resulting in improved overall efficiency.

Modern CPUs often have a base clock speed, which is the default speed at which the CPU operates, and they may also have a boost clock speed that allows for increased performance temporarily under certain conditions.

Overclocking is a practice where users increase the clock speed of their CPU beyond its default specifications to achieve better performance. However, this should be done with caution, as it can increase power consumption, generate more heat, and potentially impact the stability and lifespan of the CPU.

In summary, clock speed is a crucial parameter in determining the performance of a CPU. A higher clock speed means more cycles per second and, for the same design, more instructions executed in a given time. However, clock speed should be considered in conjunction with other factors, such as architecture and efficiency, to fully assess a CPU’s performance.

Cache Memory

Cache memory is a high-speed, temporary storage area located within the CPU (Central Processing Unit) that holds frequently accessed data and instructions. Its purpose is to provide faster access to data compared to the computer’s main memory, improving overall system performance.

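Cache memory operates on the principle of locality, which states that programs tend to access a relatively small portion of their data and instructions repeatedly. Data that was used recently is likely to be used again soon (temporal locality), and data stored near a recently used address is likely to be used next (spatial locality). By keeping this data in the cache, the CPU can retrieve it more quickly, reducing the need to fetch data from the slower main memory.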

Cache memory is organized in a hierarchy of levels, often referred to as L1, L2, and L3 cache. The L1 cache is the fastest and smallest, located nearest to the CPU cores, while the L3 cache is larger but slower and positioned farther away. This hierarchy allows for faster access to frequently used data and instructions, while still providing sufficient storage capacity.

When the CPU requires data, it first checks the cache memory. If the desired information is present in the cache, it is called a cache hit, and the data can be retrieved within a few clock cycles. This avoids the latency associated with accessing the main memory and significantly improves performance.

However, if the data or instruction is not found in the cache (known as a cache miss), the CPU must fetch it from the main memory. This process takes longer and introduces some performance delay, but once the data is fetched, it may be stored in the cache for future use, improving subsequent access times.
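The hit and miss behaviour described above can be sketched with a very small direct-mapped cache, one of the simplest cache organizations. The model tracks only which block each line holds, not the data itself, and the sizes (4 lines of 4 bytes) are assumptions picked to keep the example readable.

```python
class DirectMappedCache:
    """Toy cache: each memory block can live in exactly one cache line."""

    def __init__(self, num_lines=4, line_size=4):
        self.num_lines = num_lines
        self.line_size = line_size
        self.tags = [None] * num_lines  # which block each line currently holds
        self.hits = 0
        self.misses = 0

    def access(self, address):
        """Return True on a cache hit, False on a miss."""
        block = address // self.line_size   # block containing this address
        index = block % self.num_lines      # the line that block maps to
        tag = block // self.num_lines       # identifies the block within the line
        if self.tags[index] == tag:
            self.hits += 1
            return True
        self.tags[index] = tag              # miss: load the block, evicting the old one
        self.misses += 1
        return False

cache = DirectMappedCache()
pattern = [0, 1, 2, 3, 0, 16, 0]
print([cache.access(a) for a in pattern])
# [False, True, True, True, True, False, False]
print(cache.hits, cache.misses)   # 4 3
```

The first access to address 0 misses and loads the whole block, so addresses 1 to 3 hit. Address 16 maps to the same line and evicts that block, so the final access to address 0 misses again.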

The levels of the hierarchy also differ in how they are shared. The L1 cache is typically private to each CPU core and provides the fastest access times. The L2 cache is private to each core in many designs and shared between cores in others, while the larger and slower L3 cache is usually shared among all cores, allowing for effective utilization of resources.

Cache memory size is a critical factor in determining its effectiveness. A larger cache can hold more data and instructions, increasing the chances of a cache hit and reducing the number of cache misses. However, a larger cache generally takes longer to access and costs more chip area and power, which is why the level closest to the core is kept small.

Cache memory, along with other factors such as clock speed and architecture, contributes to the overall performance of a CPU. A well-designed cache hierarchy can significantly improve system performance by minimizing the time spent waiting for data to be fetched from the main memory.
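The trade-off between hit rate and access time is often summarized as an average memory access time: the time for a hit plus the miss rate multiplied by the extra time a miss costs. The sketch below applies that formula to two hypothetical caches; all the timings and miss rates are invented for illustration.

```python
def average_access_time(hit_time_ns, miss_rate, miss_penalty_ns):
    """Average time per memory access for a single cache level."""
    return hit_time_ns + miss_rate * miss_penalty_ns

# Hypothetical small cache: fast hits, but it misses more often.
small = average_access_time(hit_time_ns=1.0, miss_rate=0.10, miss_penalty_ns=100.0)
# Hypothetical large cache: slower hits, but fewer misses.
large = average_access_time(hit_time_ns=2.0, miss_rate=0.04, miss_penalty_ns=100.0)

print(round(small, 2), round(large, 2))
```

With these made-up numbers the small cache averages about 11 ns per access and the large one about 6 ns, so the larger cache wins despite its slower hits. With a different workload or a smaller miss penalty the result could go the other way.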

In summary, cache memory is a high-speed storage area within the CPU that holds frequently accessed data and instructions. By storing frequently used information close to the CPU cores, cache memory reduces the time spent waiting for data from the slower main memory, leading to improved performance and faster execution of instructions.

Instruction Cycle

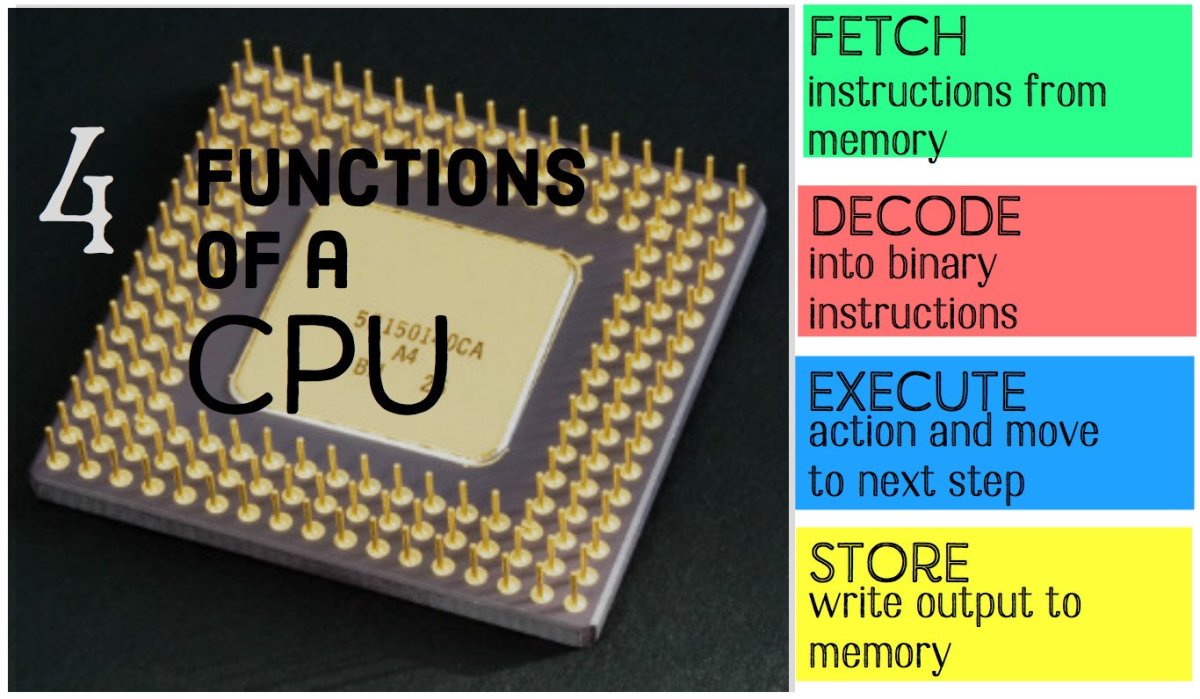

The instruction cycle, also known as the fetch-decode-execute cycle, is the sequence of steps that a CPU (Central Processing Unit) follows to execute a single instruction. It is a fundamental process that the CPU carries out repeatedly to process instructions and perform the required operations.

The instruction cycle consists of three primary stages: fetch, decode, and execute.

1. Fetch: In this stage, the CPU fetches the next instruction from memory. The memory address of the instruction is determined by the Program Counter (PC), which points to the location in memory where the next instruction is stored. The instruction is then loaded into the Instruction Register (IR) for subsequent processing.

2. Decode: Once the instruction is fetched, the CPU proceeds to decode it. The decode stage involves interpreting the instruction and determining the specific operation or set of operations that need to be performed. The control unit (CU) analyzes the opcode – the part of the instruction that specifies the operation – and generates the appropriate control signals to initiate the necessary actions.

3. Execute: After the instruction is decoded, the CPU enters the execute stage. In this stage, the CPU performs the operation specified by the instruction. This can involve tasks such as arithmetic calculations, data movement, logical comparisons, or fetching additional data from memory. The actual execution of the instruction may involve the ALU (Arithmetic Logic Unit), registers, and other components of the CPU, depending on the specific operation.

By the time the execution of the instruction is complete, the PC points to the next instruction, either the one that follows in memory or the target of a jump or branch, and the CPU is ready for the next iteration of the instruction cycle.

The instruction cycle continues repeatedly, fetching, decoding, and executing instructions in a sequential manner until the program execution is complete or interrupted by an external event.
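The cycle can be sketched as a loop in Python. The machine below is entirely hypothetical: it has a single accumulator, four made-up instructions (LOAD, ADD, JNZ, HALT), and it stores each instruction as an (opcode, operand) pair rather than as binary. It advances the PC during the fetch step, as many designs do.

```python
def run(program, max_steps=1000):
    """Execute a list of (opcode, operand) pairs and return the accumulator."""
    pc = 0    # program counter: index of the next instruction
    acc = 0   # accumulator: the only data register in this toy machine

    for _ in range(max_steps):
        # Fetch: copy the instruction at PC into the instruction register.
        ir = program[pc]
        pc += 1

        # Decode: split the instruction into its opcode and operand.
        opcode, operand = ir

        # Execute: carry out the operation the opcode names.
        if opcode == "LOAD":
            acc = operand
        elif opcode == "ADD":
            acc += operand
        elif opcode == "JNZ":       # jump to `operand` if acc is not zero
            if acc != 0:
                pc = operand
        elif opcode == "HALT":
            return acc
        else:
            raise ValueError(f"unknown opcode: {opcode}")
    raise RuntimeError("step limit reached without HALT")

print(run([("LOAD", 2), ("ADD", 3), ("HALT", 0)]))   # 5

# A countdown loop: subtract 1 until the accumulator reaches zero.
countdown = [("LOAD", 3), ("ADD", -1), ("JNZ", 1), ("HALT", 0)]
print(run(countdown))   # 0
```

The JNZ instruction shows how a branch works in this model: it simply overwrites the PC, so the next fetch comes from a different place.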

Interrupts are signals that can pause the current execution and divert the CPU’s attention to handle exceptional events. When an interrupt occurs, the CPU saves its current state, transfers control to a corresponding interrupt handler, and resumes execution from where it left off once the interrupt has been handled.

The instruction cycle is the foundation of the CPU’s operation, defining how instructions are processed and executed. Efficient instruction cycle timing and coordination are essential for optimal CPU performance, ensuring that instructions are executed accurately and efficiently.

In summary, the instruction cycle is a recurring set of operations that the CPU performs to process instructions. From fetching instructions to decoding and executing them, the instruction cycle is a critical process that enables the CPU to execute instructions and carry out the operations required by a program.

Fetch

The fetch stage is the first step in the instruction cycle, in which the CPU (Central Processing Unit) retrieves the next instruction from memory. It involves fetching the instruction from the memory address pointed to by the Program Counter (PC) and loading it into the Instruction Register (IR) for further processing.

The PC holds the memory address of the next instruction to be fetched and executed. At the beginning of the fetch stage, the CPU reads the value of the PC and sends it to the memory unit, requesting the instruction stored at that address.

Once the memory receives the request, it retrieves the instruction and sends it back to the CPU, which then loads it into the IR. The fetched instruction is usually a binary code that represents a particular operation or set of operations to be performed by the CPU.

After the instruction is loaded into the IR, the PC is incremented to point to the next memory address, preparing for the retrieval of the subsequent instruction in the next fetch cycle.

The fetch stage is critical for the proper execution of a program. It ensures that the CPU continually retrieves the next instruction in the correct sequence, enabling the program’s instructions to be executed in the desired order.

The efficiency of the fetch stage is influenced by various factors, including memory speed, cache performance, and instruction prefetching. Caches improve the fetch stage by keeping recently fetched instructions close to the core, while prefetching and branch prediction hardware try to anticipate which instructions will be needed next and load them in advance. Together these reduce the number of accesses to main memory and improve overall performance.

In modern CPUs, pipelining is often employed to enhance the efficiency of the fetch stage. Pipelining divides the instruction execution process into multiple stages, allowing different stages to operate simultaneously. While one instruction is being fetched, the previous instruction is undergoing decoding and the instruction before that is being executed. This overlapping of stages helps improve the CPU’s overall throughput.
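The benefit of this overlap can be estimated with a little arithmetic. The sketch below assumes an idealized three-stage pipeline in which every stage takes exactly one cycle and nothing ever stalls; real pipelines have more stages and do stall, so treat the numbers as an upper bound on the gain.

```python
def cycles_unpipelined(n_instructions, n_stages):
    """Each instruction passes through every stage before the next one starts."""
    return n_instructions * n_stages

def cycles_pipelined(n_instructions, n_stages):
    """The first instruction fills the pipeline, then one finishes per cycle."""
    if n_instructions == 0:
        return 0
    return n_stages + (n_instructions - 1)

def pipeline_schedule(n_instructions, stages=("fetch", "decode", "execute")):
    """For each cycle, report which instruction number occupies each stage."""
    rows = []
    for cycle in range(cycles_pipelined(n_instructions, len(stages))):
        row = {}
        for position, name in enumerate(stages):
            instruction = cycle - position
            if 0 <= instruction < n_instructions:
                row[name] = instruction
        rows.append(row)
    return rows

print(cycles_unpipelined(5, 3), cycles_pipelined(5, 3))   # 15 7
for cycle, row in enumerate(pipeline_schedule(3)):
    print(cycle, row)
```

In cycle 2 of the printed schedule all three stages are busy at once: instruction 2 is being fetched, instruction 1 decoded, and instruction 0 executed.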

It is worth noting that fetching instructions from memory introduces a delay, as memory is slower than the CPU. This delay, often referred to as memory latency, can impact the overall performance of the CPU. Techniques like caching and prefetching are employed to minimize the impact of memory latency on the fetch stage.

In summary, the fetch stage is the initial step in the instruction cycle, where the CPU retrieves the next instruction from memory. It involves fetching the instruction pointed to by the PC and loading it into the IR. An efficient fetch stage, aided by caches and prefetching, ensures a smooth execution of program instructions and contributes to overall CPU performance.

Decode

The decode stage is an important step in the instruction cycle of a CPU (Central Processing Unit) where the fetched instruction is decoded and its meaning is determined. In this stage, the CPU analyzes the instruction to identify the specific operation or set of operations it represents.

After the fetch stage, the instruction is stored in the Instruction Register (IR). The instruction stored in the IR is in a machine-readable format, typically a binary code. The decode stage is responsible for interpreting this binary code and extracting relevant information about the instruction.

During the decode stage, the control unit (CU) of the CPU examines the opcode, which is the part of the instruction that specifies the operation to be performed. The CU decodes the opcode to determine the type of instruction and the specific action it requires.

The decode stage provides the necessary information for the CPU to execute the instruction correctly. It determines the appropriate control signals that need to be generated to initiate the desired actions, such as arithmetic calculations, data movement, or logical comparisons.

Depending on the architecture of the CPU and the instruction set it supports, decoding may be simple or may take several steps, for example when instructions vary in length or are broken down into smaller internal operations.

For example, in a simple instruction set, an instruction might consist of little more than an opcode for a basic arithmetic operation or a data movement. In a more complex instruction set, the instruction contains additional fields alongside the opcode that provide information about register locations, addressing modes, or immediate values.
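Extracting such fields from a binary instruction comes down to shifting and masking. The sketch below decodes a made-up 16-bit format with a 4-bit opcode, a 4-bit register number, and an 8-bit immediate value. The layout and the opcode table are assumptions for illustration and do not correspond to any real instruction set.

```python
# Hypothetical opcode table for the example format.
OPCODES = {0x1: "LOAD", 0x2: "ADD", 0x3: "STORE"}

def decode(word):
    """Split a 16-bit instruction word into (operation, register, immediate).

    Assumed layout, from the most significant bit down:
      bits 15-12: opcode, bits 11-8: register number, bits 7-0: immediate
    """
    opcode = (word >> 12) & 0xF
    register = (word >> 8) & 0xF
    immediate = word & 0xFF
    return OPCODES.get(opcode, "UNKNOWN"), register, immediate

print(decode(0x2305))   # ('ADD', 3, 5)
print(decode(0x1A7F))   # ('LOAD', 10, 127)
print(decode(0xF000))   # ('UNKNOWN', 0, 0)
```

In hardware the same selection is done by wiring specific bits of the instruction register to the control logic, so no shifting takes any time.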

The decode stage ensures that the CPU understands the instruction and prepares the subsequent stages for executing it. It sets up the appropriate pathways and activates the necessary components within the CPU to carry out the required operation.

In addition to decoding the instructions, the decode stage may also perform other important tasks, such as checking for errors or ensuring that the operands and memory locations required by the instruction are accessible and valid.

The efficiency of the decode stage can impact the overall performance of the CPU. Complex instruction decoding or the presence of multi-step decoding processes can introduce additional latency and affect the CPU’s ability to execute instructions quickly.

To maximize efficiency, modern CPUs often employ advanced techniques like pipelining, where the decoding stage overlaps with other stages of the instruction cycle. This allows the CPU to simultaneously decode one instruction while executing a previously decoded instruction, improving overall throughput.

In summary, the decode stage is a critical step in the instruction cycle where the CPU interprets the fetched instruction and determines its meaning. By analyzing the opcode and extracting relevant information, the CPU prepares itself for the appropriate execution of the instruction in the subsequent stages.

Execute

The execute stage is a crucial step in the instruction cycle of a CPU (Central Processing Unit) where the actual operation specified by the decoded instruction is performed. In this stage, the CPU carries out the calculations, data movements, or logical comparisons necessary to execute the instruction.

After the instruction has been fetched and decoded, the CPU proceeds to the execute stage, where it performs the operation specified by the instruction. The specific action to be taken depends on the type of instruction and the operation it represents.

In the execute stage, the CPU coordinates the various components involved in carrying out the instruction. This includes interacting with the registers, the Arithmetic Logic Unit (ALU), and other functional units within the CPU.

If the instruction involves arithmetic calculations, such as addition, subtraction, multiplication, or division, the execution is performed by the ALU. The ALU receives the necessary operands from the registers or memory and performs the required operation. The result of the calculation is typically stored in a register or memory location.

In the case of data movement instructions, the execute stage involves transferring data between registers, memory, or I/O devices. The CPU fetches the data from the source location and moves it to the destination location, ensuring the data is correctly stored or transferred as specified by the instruction.

For instructions involving logical comparisons, such as equality checks or Boolean operations, the execute stage evaluates the comparison or condition and sets flags or updates status registers accordingly. These flags or status registers can be later used to control the flow of program execution or make decisions within the program.

During the execute stage, the CPU may also access memory to fetch additional data or instructions needed to complete the operation. If the required data or instructions are not already in the cache, the CPU retrieves them from the main memory, incurring additional latency compared to data access from the registers or cache.

Multiple instructions may be executing simultaneously in a pipelined CPU, where each stage operates concurrently with different instructions in different stages. This overlap of stages helps maximize the CPU’s utilization and improve overall performance.

The efficiency of the execute stage, along with the entire instruction cycle, is a significant factor in determining the CPU’s performance. Factors like clock speed, cache performance, pipeline depth, and the number of execution units can influence the speed and effectiveness of instruction execution.

In summary, the execute stage of the instruction cycle is when the CPU performs the operation specified by the decoded instruction. Whether it involves arithmetic calculations, data movement, logical comparisons, or other actions, the CPU utilizes its components to execute the instruction and carry out the desired operation.

Interrupts

Interrupts are signals that temporarily halt the normal execution of a CPU (Central Processing Unit) and divert its attention to handle special events or conditions. They serve as a means of transferring control from regular program flow to handle important tasks or events that require immediate attention.

Interrupts can be triggered by a variety of factors, including hardware events such as I/O device requests, timer or clock signals, and external events like hardware malfunctions or user inputs. When an interrupt occurs, the CPU suspends the current execution, saves its state, and transfers control to an interrupt handler or service routine specifically designed to handle the particular interrupt.

Interrupt handling provides several benefits. First, it allows the CPU to quickly respond to time-critical events without waiting for regular program execution to reach a particular point. This is especially important for real-time systems that require immediate responses to external events, such as control systems or data acquisition systems.

Second, interrupts enable asynchronous processing. Instead of repeatedly checking each device to see whether it needs attention, the CPU can continue with other work and is notified when an event occurs. It can then service critical tasks as soon as they arise without having to wait for the completion of other ongoing tasks.

Interrupt handling involves several steps. When an interrupt occurs, the CPU saves its current state by preserving the program counter (PC) and other important registers, ensuring that the interrupted program can be resumed later without loss of information.

Next, the CPU transfers control to the appropriate interrupt handler or service routine. This routine is responsible for processing the specific event associated with the interrupt. It may involve tasks such as data transfer, data processing, error handling, or other operations necessary to handle the interrupt.

Once the interrupt handler completes its tasks, the CPU restores the saved state and resumes the interrupted program’s execution at the point where it was interrupted. This seamless transition allows the CPU to continue its regular program execution without disruption.
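The save, handle, and restore steps above can be sketched in a toy simulation. This is not how any real CPU or operating system implements interrupts; the ToyCPU class, its single accumulator register, and the handler names are hypothetical and exist only to make the sequence visible.

```python
class ToyCPU:
    """A toy model with a program counter (pc) and one register (acc)."""

    def __init__(self):
        self.pc = 0
        self.acc = 0
        self.log = []
        self.handlers = {}  # interrupt number -> handler function
        self.pending = []   # interrupts raised but not yet serviced

    def register_handler(self, irq, handler):
        self.handlers[irq] = handler

    def raise_interrupt(self, irq):
        self.pending.append(irq)

    def _service(self, irq):
        saved_state = (self.pc, self.acc)  # step 1: save the current state
        self.handlers[irq](self)           # step 2: transfer control to the handler
        self.pc, self.acc = saved_state    # step 3: restore state and resume

    def run(self, program):
        while self.pc < len(program):
            # Pending interrupts are checked between instructions.
            while self.pending:
                self._service(self.pending.pop(0))
            program[self.pc](self)
            self.pc += 1


def add_one(cpu):
    cpu.acc += 1


def add_one_with_device_event(cpu):
    cpu.acc += 1
    cpu.raise_interrupt(1)  # stands in for an external device signalling the CPU


def device_handler(cpu):
    cpu.log.append(f"irq 1 serviced at pc={cpu.pc}")
    cpu.acc = -999  # the handler may overwrite registers for its own work
    cpu.pc = 12345


cpu = ToyCPU()
cpu.register_handler(1, device_handler)
cpu.run([add_one, add_one_with_device_event, add_one])
```

Even though the handler overwrites both the register and the program counter, the interrupted program finishes with the accumulator at 3, because the saved state is restored before execution resumes.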

Modern CPUs often support multiple levels of interrupt priorities. This means that some interrupts take precedence over others, allowing critical tasks to be serviced first. Interrupt priorities help ensure that important events are promptly addressed, even in scenarios where multiple interrupts occur simultaneously.
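Priority ordering can be illustrated with a small queue. The sketch below assumes that a lower number means a higher priority and that interrupts of equal priority are serviced in arrival order; both conventions, along with the class and interrupt names, are assumptions made for this example rather than a description of any particular interrupt controller.

```python
import heapq


class InterruptQueue:
    """Hands out pending interrupts highest priority first (lower number = more urgent)."""

    def __init__(self):
        self._heap = []
        self._arrival = 0  # tie-breaker so equal priorities keep arrival order

    def raise_interrupt(self, priority, name):
        heapq.heappush(self._heap, (priority, self._arrival, name))
        self._arrival += 1

    def next_interrupt(self):
        """Return the name of the most urgent pending interrupt, or None if there is none."""
        if not self._heap:
            return None
        _, _, name = heapq.heappop(self._heap)
        return name

    def drain(self):
        """Service everything that is pending and return the order of service."""
        order = []
        while self._heap:
            order.append(self.next_interrupt())
        return order


queue = InterruptQueue()
queue.raise_interrupt(2, "disk")
queue.raise_interrupt(0, "power_fail")
queue.raise_interrupt(1, "timer")
queue.raise_interrupt(1, "keyboard")
service_order = queue.drain()
```

Here the power failure is serviced first even though the disk interrupt arrived earlier, and the two priority-1 interrupts keep their arrival order.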

Interrupt handling is a crucial aspect of operating system functionality, as it enables the OS to efficiently manage external devices, handle exceptional events, and provide essential services to running programs. Interrupts play a pivotal role in achieving efficient multitasking, where the CPU can switch between multiple tasks and allocate resources effectively.

In summary, interrupts are signals that temporarily interrupt the normal execution of the CPU to handle crucial tasks, events, or conditions. By allowing asynchronous processing and prioritizing critical events, interrupts play a vital role in efficient multitasking and provide the CPU with the ability to quickly respond to important events in real-time systems.

Multithreading

Multithreading is a technique that allows a CPU (Central Processing Unit) to execute multiple threads concurrently within a single process. Threads are independent sequences of instructions that can be scheduled and executed independently by the CPU. Multithreading enables improved utilization of CPU resources, increased system responsiveness, and enhanced overall performance.

In a single-threaded environment, the CPU can only execute one instruction stream at a time, typically resulting in idle periods when the CPU waits for I/O operations or other delays. Multithreading addresses this limitation by allowing the CPU to switch between different threads, effectively hiding the latency of certain operations and keeping the CPU busy by executing other instructions.

There are two main types of multithreading: hardware multithreading and software multithreading.

Hardware Multithreading: Hardware multithreading is a feature provided by certain CPUs that allows for concurrent execution of multiple threads within a single CPU core. Its most common form is simultaneous multithreading (SMT). The core keeps a separate copy of the architectural state, such as the registers and program counter, for each thread, while the threads share the core’s execution units and caches. Instructions from different threads can therefore fill execution resources that a single thread would leave idle.

Software Multithreading: Software multithreading is a programming technique that involves explicitly dividing a program into multiple threads that can be executed concurrently on the CPU. The operating system or the application itself manages the scheduling and execution of these threads, alternating between them based on priority, fairness, or other scheduling algorithms. Software multithreading allows for concurrent execution even on CPUs that do not support hardware multithreading: on a single core the threads take turns in short time slices, while on multiple cores they can run in parallel.

Multithreading offers several advantages. First, it improves system responsiveness by allowing multiple tasks to progress simultaneously. This is particularly important in scenarios where one thread is waiting for I/O operations while other threads can continue executing, making the system appear more responsive to users.

Second, multithreading enhances CPU resource utilization by utilizing idle cycles. When one thread encounters a delay, such as waiting for data, another thread can be scheduled, effectively utilizing the CPU during that idle period and increasing overall throughput.

Additionally, multithreading enables better performance scaling on multi-core CPUs. Threads can be distributed across cores so that they run in parallel, and on cores that support hardware multithreading each core can run more than one thread, making fuller use of the available resources.
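The latency-hiding benefit described above can be sketched with Python’s standard threading module. In this minimal example, time.sleep stands in for a real I/O wait, and the function names and delay values are made up for illustration.

```python
import threading
import time


def simulated_io(name, delay_s, results):
    """Pretend to wait on a disk or network, then record completion."""
    time.sleep(delay_s)  # the thread is idle here, so another thread can run
    results.append(name)


def run_sequential(names, delay_s):
    """Run the tasks one after another; the waits add up."""
    results = []
    start = time.perf_counter()
    for name in names:
        simulated_io(name, delay_s, results)
    return results, time.perf_counter() - start


def run_threaded(names, delay_s):
    """Run each task in its own thread; the waits overlap."""
    results = []
    threads = [
        threading.Thread(target=simulated_io, args=(name, delay_s, results))
        for name in names
    ]
    start = time.perf_counter()
    for thread in threads:
        thread.start()
    for thread in threads:
        thread.join()
    return results, time.perf_counter() - start
```

With four tasks that each wait 0.05 seconds, the sequential version takes at least 0.2 seconds, while the threaded version finishes in roughly the time of a single wait. The gain comes from overlapping idle periods, so it applies to tasks that spend their time waiting rather than computing.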

However, multithreading does introduce its challenges. Coordinating access to shared data between threads, known as thread synchronization, becomes crucial to avoid data conflicts and maintain consistency. Proper synchronization mechanisms, such as locks, semaphores, or atomic operations, are required to ensure that threads read and update shared data correctly.
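A lock is the simplest of these mechanisms to demonstrate. The sketch below uses Python’s threading.Lock to protect a shared counter; the Counter class and run_workers function are hypothetical names introduced for this example.

```python
import threading


class Counter:
    """A shared counter whose updates are protected by a lock."""

    def __init__(self):
        self.value = 0
        self._lock = threading.Lock()

    def increment(self):
        # Reading, adding, and writing back must happen as one unit;
        # the lock lets only one thread do this at a time.
        with self._lock:
            self.value += 1


def run_workers(num_threads, increments_per_thread):
    """Start several threads that all increment the same counter and return the final value."""
    counter = Counter()

    def worker():
        for _ in range(increments_per_thread):
            counter.increment()

    threads = [threading.Thread(target=worker) for _ in range(num_threads)]
    for thread in threads:
        thread.start()
    for thread in threads:
        thread.join()
    return counter.value
```

With the lock in place, run_workers(4, 10000) returns 40000. Without it, two threads could read the same old value and each write back the same result, so some increments could be lost.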

In summary, multithreading is a technique that allows for concurrent execution of multiple threads within a single CPU. It improves system responsiveness, increases CPU resource utilization, and facilitates better performance scaling on multi-core CPUs. While it introduces challenges related to thread synchronization, multithreading remains a powerful approach for enhancing performance and efficiency in modern computing systems.

CPU Performance

CPU (Central Processing Unit) performance refers to the speed and efficiency at which the CPU can execute instructions and carry out tasks. It is a critical factor in determining the overall performance and responsiveness of a computer system.

Various factors contribute to CPU performance, including clock speed, cache memory, number of cores, architecture, and instruction set. Let’s explore some of these factors in more detail:

Clock Speed: Clock speed, measured in hertz (Hz, or cycles per second) and usually quoted in gigahertz (GHz), is the number of clock cycles the CPU completes each second. A higher clock speed generally results in faster processing, because the CPU steps through its work more times per second. However, the number of instructions completed per cycle differs between CPU designs, so clock speed alone does not indicate overall CPU performance.

Cache Memory: Cache memory is a high-speed memory built directly into the CPU that stores frequently accessed data and instructions. A larger cache with better hit rates can improve performance by reducing the time needed to fetch data from the slower main memory. It helps mitigate the impact of memory latency and improves the CPU’s ability to access frequently used information quickly.

Number of Cores: CPUs can have multiple cores, allowing them to handle multiple tasks concurrently. Each core can independently execute instructions, enabling parallel processing. This enhances multitasking capability and improves performance, particularly in scenarios where multiple applications or processes are running simultaneously.

Architecture: CPU architecture refers to the organization and design principles of the CPU’s internal components. Different CPU architectures have varying performance characteristics, such as instruction execution efficiency, throughput, and power consumption. Advanced architectural features, like superscalar execution or branch prediction, can significantly impact CPU performance.

Instruction Set: The instruction set architecture (ISA) defines the set of instructions supported by the CPU. The complexity and efficiency of the instruction set can affect the CPU’s ability to handle various tasks efficiently. Instruction set extensions such as SIMD (Single Instruction, Multiple Data), which apply one operation to several data elements at once, can greatly enhance performance for specific types of computations, such as multimedia or scientific calculations.
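The effect of cache hit rates mentioned above can be made concrete with the standard average memory access time formula: hit time plus miss rate times miss penalty. The sketch below is a rough single-level model, and the latency figures in the usage lines are invented round numbers, not measurements of any real CPU.

```python
def average_access_time_ns(hit_time_ns, miss_rate, miss_penalty_ns):
    """Average memory access time for one cache level in front of main memory.

    hit_time_ns:     time to read from the cache
    miss_rate:       fraction of accesses not found in the cache (0.0 to 1.0)
    miss_penalty_ns: extra time to fetch from main memory on a miss
    """
    if not 0.0 <= miss_rate <= 1.0:
        raise ValueError("miss_rate must be between 0 and 1")
    return hit_time_ns + miss_rate * miss_penalty_ns


# Hypothetical figures: 1 ns cache hit, 100 ns extra for a trip to main memory.
small_cache = average_access_time_ns(1.0, 0.10, 100.0)  # 10% of accesses miss
large_cache = average_access_time_ns(1.0, 0.02, 100.0)  # 2% of accesses miss
```

Under these assumed numbers, cutting the miss rate from 10% to 2% lowers the average access time from about 11 ns to about 3 ns, which is why a better hit rate matters so much.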

It is important to note that CPU performance cannot be evaluated solely based on one factor. The interaction and balance between multiple factors, including clock speed, cache size, core count, architecture, and instruction set, determine the overall performance of the CPU and its suitability for different applications or workloads.
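One common way to see this interaction is the basic execution time relation: time equals instruction count times average cycles per instruction, divided by clock rate. The sketch below applies it to two made-up CPUs; the names and figures are hypothetical and chosen only to show that a higher clock speed does not guarantee a shorter run time.

```python
def execution_time_seconds(instruction_count, cycles_per_instruction, clock_hz):
    """Time to run a program: total cycles needed divided by cycles available per second."""
    if clock_hz <= 0:
        raise ValueError("clock_hz must be positive")
    return instruction_count * cycles_per_instruction / clock_hz


program_instructions = 1_000_000_000  # a hypothetical program of one billion instructions

# Hypothetical CPU A: higher clock, but averages 2 cycles per instruction.
time_a = execution_time_seconds(program_instructions, 2.0, 4.0e9)

# Hypothetical CPU B: lower clock, but averages 1 cycle per instruction.
time_b = execution_time_seconds(program_instructions, 1.0, 3.0e9)
```

In this example the 4 GHz CPU A needs 0.5 seconds, while the 3 GHz CPU B needs about 0.33 seconds, because B’s architecture gets more work done in each cycle.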

Manufacturers strive to improve CPU performance by innovating in areas like shrinking transistor sizes, improving efficiency, and introducing architectural enhancements. Higher performance CPUs are indispensable for demanding tasks like gaming, video editing, 3D rendering, scientific simulations, and data analysis, where faster and more efficient processing can significantly reduce task completion times.

However, it’s important to recognize that CPU performance is only one aspect of overall system performance. The CPU works in tandem with other components, such as memory, storage, and the operating system, to deliver efficient and responsive computing experiences.

In summary, CPU performance encompasses various factors, including clock speed, cache memory, core count, architecture, and instruction set. A well-balanced combination of these factors contributes to the overall speed and efficiency of a CPU, enabling it to execute instructions quickly and handle demanding tasks effectively.

Conclusion

The CPU (Central Processing Unit) is the brain of a computer system, responsible for executing instructions and performing calculations. It consists of various components, including the Arithmetic Logic Unit (ALU), Control Unit (CU), registers, and cache memory, which work together to process data and handle the operations of the computer.

Understanding the components and functions of the CPU provides insights into the inner workings of a computer. We have explored the crucial components of the CPU, such as the ALU, responsible for arithmetic and logical operations, and the CU, which coordinates instruction execution. Additionally, we delved into registers, which provide temporary storage, and cache memory, which enhances data access speed.

Key concepts like clock speed, instruction cycles, interrupts, and multithreading have been discussed, highlighting their impact on CPU performance. Clock speed sets the pace at which the CPU steps through its work, while the instruction cycle defines the sequence of fetch, decode, and execute stages. Interrupts let the CPU respond promptly to events, and multithreading allows several threads to make progress concurrently.

It is important to note that CPU performance is influenced by various factors beyond clock speed, such as cache size, core count, architecture, and the instruction set. These factors collectively contribute to the CPU’s ability to handle tasks efficiently and improve overall system performance.

As technology continues to advance, CPUs become more powerful, allowing for faster and more efficient processing. However, optimal CPU performance requires a balance among various components and factors, aligned with the specific needs and demands of different applications and workloads.

By gaining knowledge about the CPU and its functionalities, individuals can make informed decisions while choosing a CPU for their computing needs. It enables computer enthusiasts and everyday users to understand the capabilities and limitations of their systems, optimize software performance, and appreciate the role of the CPU in the seamless operation of their devices.

Overall, the CPU is a key component that drives the performance and functionality of a computer system. Its efficient operation, utilizing powerful components, intelligent architecture, and advanced features, ensures swift and accurate execution of instructions, contributing to enhanced productivity and a superior user experience.