Introduction

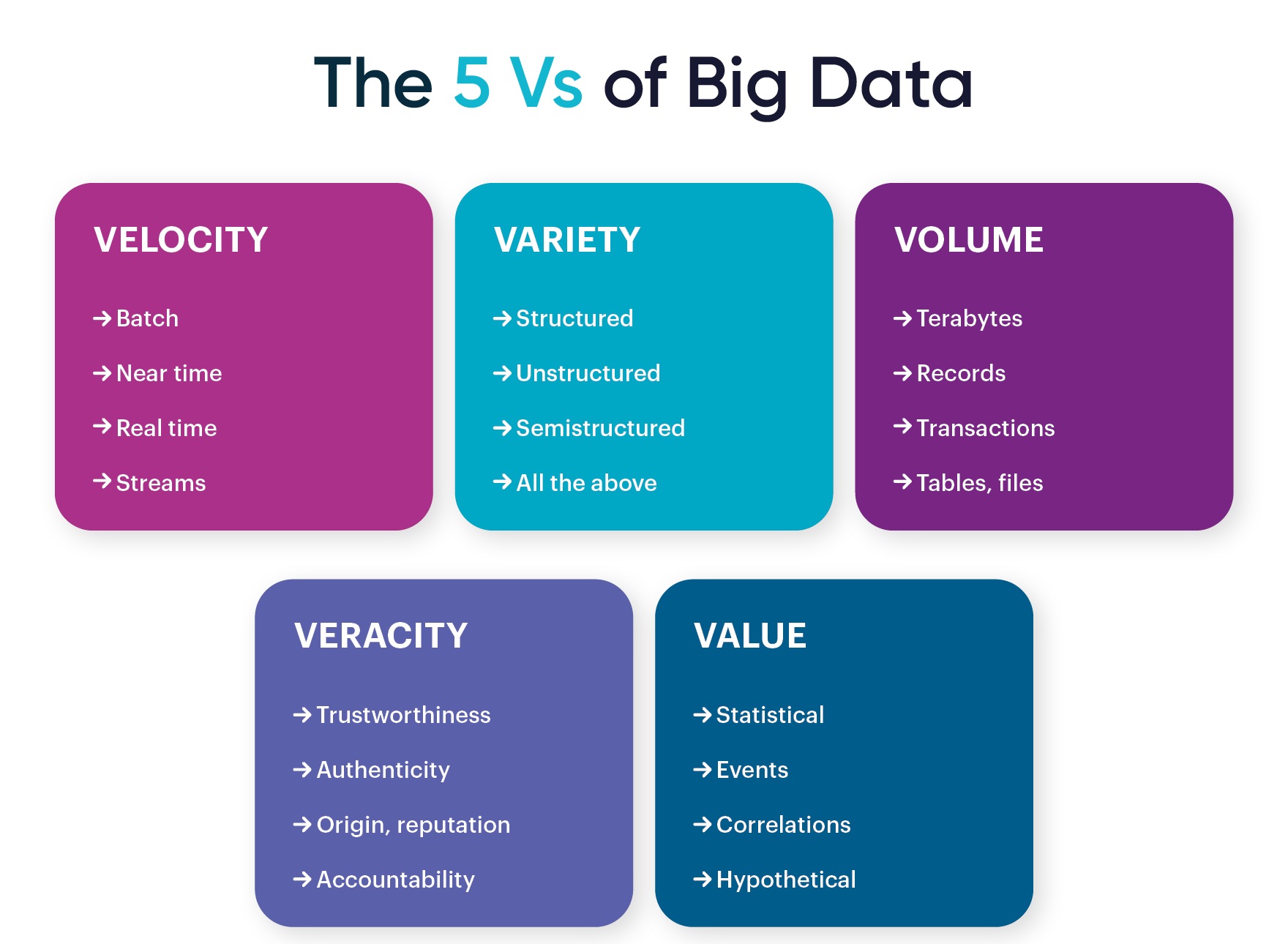

When it comes to understanding and harnessing the power of big data, it’s essential to consider the five V’s that define its characteristics. These five V’s – volume, velocity, variety, veracity, and value – provide a framework for analyzing and making sense of the massive amounts of data generated in today’s digital age.

With the increasing digitization of business processes and the advent of interconnected devices, organizations are faced with an unprecedented amount of data. This data explosion presents both opportunities and challenges. To effectively leverage big data, companies need to understand its unique properties, which are encapsulated in the five V’s.

In this article, we will explore each of the five V’s of big data, examining what they mean and why they are crucial to unlocking the full potential of data-driven insights. By understanding these V’s, businesses can develop strategies and infrastructure to capture, store, process, and analyze data effectively, ultimately leading to better decision-making and improved operational efficiency.

Volume

Volume refers to the sheer scale of data that organizations collect and manage. As digital data grows exponentially, many organizations now handle data sets measured in terabytes or even petabytes. Traditional data storage systems and tools, typically designed to run on a single server, are often unable to handle data at this scale.

Big data technologies, such as cloud storage and distributed processing frameworks like Hadoop, have emerged to address this challenge. Rather than relying on one ever-larger machine, they split both the data and the computation across clusters of machines, so capacity can grow by adding more nodes. This makes them scalable and cost-effective for storing and processing vast volumes of data, and it allows organizations to capture and analyze data from multiple sources, including structured and unstructured data.
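The split-then-combine idea behind frameworks such as Hadoop can be illustrated with a toy word count. This is a minimal, single-process sketch of the MapReduce pattern, not Hadoop's API; the chunk contents and the function names `map_chunk` and `combine` are hypothetical.

```python
from collections import Counter
from functools import reduce

# Hypothetical input: each string stands in for one block of a large file
# that a distributed file system would spread across many machines.
chunks = [
    "big data needs big storage",
    "data arrives fast",
    "storage scales out",
]


def map_chunk(chunk: str) -> Counter:
    """Map step: count the words in one chunk, independently of all others."""
    return Counter(chunk.split())


def combine(left: Counter, right: Counter) -> Counter:
    """Reduce step: merge two partial counts into one."""
    return left + right


# On a real cluster each map_chunk call could run on a different node;
# in this sketch they simply run one after another in a single process.
partial_counts = [map_chunk(chunk) for chunk in chunks]
word_counts = reduce(combine, partial_counts, Counter())

print(word_counts.most_common(3))
```

Because each chunk is counted independently, the map step can run in parallel on as many machines as there are chunks; only the small partial results need to be brought together at the end.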

The volume of data presents both opportunities and challenges. On the one hand, organizations that can effectively harness and analyze large volumes of data gain valuable insights and competitive advantages. They can detect patterns, identify trends, and make informed decisions based on data-driven insights. On the other hand, managing such enormous data sets requires robust infrastructure and sophisticated data management techniques.

By taking advantage of big data technologies and adopting scalable storage solutions, organizations can store and process vast amounts of data efficiently. This enables them to uncover hidden relationships and discover actionable insights that can drive innovation, enhance customer experiences, and improve business operations.

Velocity

Velocity refers to the speed at which data is generated, processed, and analyzed. In today’s fast-paced digital landscape, data is being produced at an unprecedented rate. From social media updates and online transactions to sensor data from IoT devices, organizations are inundated with a constant stream of real-time data.

The rapid pace of data generation presents unique challenges and opportunities for businesses. To extract meaningful insights, organizations need to be able to capture and analyze data in real time or near real time. This requires agile and responsive data processing systems that can handle the velocity of incoming data.

Traditional batch processing, which collects data over a period of time and processes it in one large job, introduces delays that are too long for many high-velocity use cases. Instead, organizations are turning to technologies like stream processing and complex event processing (CEP), which analyze data continuously as it arrives. These tools allow organizations to detect patterns, anomalies, and trends as they occur, enabling immediate action and decision-making.
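The difference from batch processing can be sketched in a few lines: each reading is checked the moment it arrives, against a short sliding window of recent values, instead of waiting for a full batch. This is a simplified illustration assuming a single numeric stream and a naive "more than twice the recent average" rule; the names `detect_spikes` and `sensor_feed` and the threshold values are hypothetical. Real stream-processing engines also deal with concerns such as fault tolerance and out-of-order events.

```python
from collections import deque
from typing import Iterable, Iterator, Tuple


def detect_spikes(
    readings: Iterable[float], window_size: int = 5, factor: float = 2.0
) -> Iterator[Tuple[int, float]]:
    """Yield (position, value) for each reading greater than `factor` times
    the average of the previous `window_size` readings.

    Readings are examined one at a time as they arrive, keeping only a small,
    fixed-size window of recent history in memory.
    """
    window = deque(maxlen=window_size)  # the oldest value drops out automatically
    for position, value in enumerate(readings):
        if len(window) == window_size:
            average = sum(window) / window_size
            if value > factor * average:
                yield position, value
        window.append(value)


def sensor_feed() -> Iterator[float]:
    """Hypothetical sensor stream: a generator stands in for a live feed."""
    yield from [10.0, 11.0, 9.5, 10.5, 10.0, 35.0, 10.2, 9.8]


for position, value in detect_spikes(sensor_feed()):
    print(f"spike at reading {position}: {value}")
```

Because `detect_spikes` is itself a generator, alerts are produced while the feed is still being consumed, which is the property that distinguishes stream processing from running a job over stored data.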

By acting on data as it arrives, organizations can gain a competitive edge. Real-time analytics help businesses respond quickly to market changes, customer preferences, and emerging trends. For example, in the retail industry, real-time data allows companies to personalize customer experiences, optimize inventory levels, and make targeted marketing interventions.

However, managing the velocity of data can be challenging. It requires robust data ingestion, processing, and storage infrastructure to handle the constant influx of data. Additionally, organizations need to ensure data quality and integrity while operating at high speeds. Adopting scalable, high-performance technologies and establishing data governance practices are essential to managing data velocity effectively.

Variety

Variety refers to the diverse types and formats of data that organizations encounter. In the past, most data was structured and fit neatly into predefined databases. However, with the advent of social media, sensors, and other sources, data is now available in a wide range of formats, including structured, semi-structured, and unstructured data.

Structured data is organized according to a predefined schema, such as the rows and columns of a relational database table. Semi-structured data, on the other hand, has some organizing structure, such as tags or key-value pairs, but does not conform to a rigid schema; examples include XML documents and JSON files. Finally, unstructured data has no predefined structure; examples include text documents, images, videos, and social media posts.

The variety of data presents both challenges and opportunities for organizations. On the one hand, unstructured and semi-structured data contain valuable insights that can help organizations understand customer behavior, market trends, and sentiment. By analyzing this data, businesses can gain a deeper understanding of their customers and make more informed decisions.

However, managing and analyzing diverse data types can be complex. Traditional systems and tools, such as relational databases that expect every record to match a fixed schema, were not designed to handle the variety and volume of data that organizations encounter today. Data integration and preprocessing therefore become critical for transforming and harmonizing data from different sources and formats into a consistent form.
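Harmonization usually means mapping each source onto one common schema. The sketch below assumes a hypothetical three-field customer schema (`id`, `name`, `country`) and normalizes a structured CSV export and semi-structured JSON events into it using only Python's standard library; the field names and sample records are invented for illustration.

```python
import csv
import io
import json
from typing import Dict, List, Union

# Hypothetical common schema shared by all sources.
Record = Dict[str, Union[int, str, None]]

# Hypothetical inputs: similar customer data arriving in two different formats.
csv_export = "customer_id,name,country\n101,Ana Silva,PT\n102,Ben Okafor,NG\n"
json_events = (
    '[{"user": {"id": "103", "full_name": "Chen Wei"}, "geo": "CN"},'
    ' {"user": {"id": "104", "full_name": "Dana Levi"}}]'
)


def from_csv(text: str) -> List[Record]:
    """Structured source: every row has the same named columns."""
    return [
        {"id": int(row["customer_id"]), "name": row["name"], "country": row["country"]}
        for row in csv.DictReader(io.StringIO(text))
    ]


def from_json(text: str) -> List[Record]:
    """Semi-structured source: fields are nested, and some may be missing."""
    return [
        {
            "id": int(event["user"]["id"]),
            "name": event["user"]["full_name"],
            "country": event.get("geo"),  # None when the source omits the field
        }
        for event in json.loads(text)
    ]


customers = from_csv(csv_export) + from_json(json_events)
for customer in customers:
    print(customer)
```

Keeping one small adapter function per source means a new format only needs a new adapter, while everything downstream keeps working with the same record shape.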

To tackle the variety of data, organizations need to employ specialized tools and techniques. Data lakes, which store data in its raw format, offer flexibility and agility in handling diverse data types. Advanced analytics techniques, such as natural language processing (NLP) and image recognition, enable organizations to extract valuable insights from unstructured data.

By effectively managing the variety of data, organizations can unlock new opportunities for innovation, gain deeper insights, and improve decision-making. The ability to harness diverse data types is especially valuable in fields such as healthcare, social media analysis, and customer sentiment analysis.

Veracity

Veracity refers to the reliability and accuracy of data. In the era of big data, organizations encounter a wide range of data from multiple sources, and not all of it can be guaranteed to be accurate or trustworthy. Veracity is crucial because making data-driven decisions based on inaccurate or unreliable information can lead to poor outcomes.

Data veracity encompasses several dimensions. Firstly, it includes the integrity and accuracy of the data itself. Organizations must ensure that the data they collect is free from errors, inconsistencies, and biases. This requires implementing robust data quality processes, including data validation, cleansing, and verification.
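A basic validation and cleansing pass might look like the following sketch. It assumes hypothetical order records with `order_id`, `quantity`, and `email` fields and applies a few common checks: normalizing text, checking types and ranges, and removing duplicates. Rejected records are kept with a reason rather than silently dropped, so they can be reviewed later.

```python
from typing import Dict, List, Tuple

# Hypothetical raw records, e.g. order data collected from several systems.
raw_orders = [
    {"order_id": "A1", "quantity": "3", "email": " ana@example.com "},
    {"order_id": "A2", "quantity": "-1", "email": "ben@example.com"},
    {"order_id": "A1", "quantity": "3", "email": "ana@example.com"},  # duplicate
    {"order_id": "A3", "quantity": "two", "email": "chen@example.com"},
    {"order_id": "A4", "quantity": "5", "email": ""},
]


def validate_and_clean(
    records: List[Dict[str, str]],
) -> Tuple[List[dict], List[Tuple[dict, str]]]:
    """Return (clean_records, rejected), where each rejection carries a reason."""
    clean: List[dict] = []
    rejected: List[Tuple[dict, str]] = []
    seen_ids = set()
    for record in records:
        email = record.get("email", "").strip().lower()  # cleansing: normalize text
        if not email or "@" not in email:
            rejected.append((record, "missing or malformed email"))
            continue
        try:
            quantity = int(record["quantity"])  # validation: type check
        except ValueError:
            rejected.append((record, "quantity is not an integer"))
            continue
        if quantity <= 0:  # validation: range check
            rejected.append((record, "quantity must be positive"))
            continue
        if record["order_id"] in seen_ids:  # cleansing: remove duplicates
            rejected.append((record, "duplicate order_id"))
            continue
        seen_ids.add(record["order_id"])
        clean.append({"order_id": record["order_id"], "quantity": quantity, "email": email})
    return clean, rejected


clean, rejected = validate_and_clean(raw_orders)
print(clean)
for record, reason in rejected:
    print(record["order_id"], "->", reason)
```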

Secondly, veracity also relates to the trustworthiness and credibility of the data sources. Not all data sources are created equal, and organizations must assess the reliability and reputation of the sources from which they obtain data. Reliable sources with a proven track record of accuracy and security are more likely to provide trustworthy data.

Moreover, veracity encompasses the governance and control of data. Organizations need to establish data governance practices that ensure the proper handling, access, and sharing of data. This includes implementing security measures to protect sensitive data from unauthorized access and ensuring compliance with relevant regulations and data protection laws.

Veracity is a significant challenge for organizations, because the accuracy and reliability of data can vary widely from source to source. However, by implementing data management and governance processes, organizations can improve data veracity. Advanced analytics techniques, such as data validation algorithms and anomaly detection, can also help identify and mitigate potential data quality issues.
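As one illustration of anomaly detection on a batch of values, the sketch below flags outliers with the modified z-score, which is based on the median and the median absolute deviation (MAD) instead of the mean and standard deviation. The commonly cited cutoff of 3.5 and the sample daily totals are assumptions for the example; `find_outliers` is a hypothetical helper, not part of any library.

```python
import statistics
from typing import List, Sequence


def find_outliers(values: Sequence[float], threshold: float = 3.5) -> List[int]:
    """Return the indices of values whose modified z-score exceeds `threshold`.

    The median and MAD are barely affected by a few extreme values, so an
    outlier cannot hide itself by inflating the measure of spread.
    """
    median = statistics.median(values)
    mad = statistics.median(abs(v - median) for v in values)
    if mad == 0:
        # At least half the values equal the median; treat any other value as unusual.
        return [i for i, v in enumerate(values) if v != median]
    return [
        i for i, v in enumerate(values)
        if 0.6745 * abs(v - median) / mad > threshold
    ]


# Hypothetical daily transaction totals with one suspicious entry.
daily_totals = [120, 118, 125, 122, 119, 121, 980, 117, 123]
print(find_outliers(daily_totals))
```

A flagged value is not necessarily wrong; it is a candidate for review, which is why this kind of check complements, rather than replaces, the validation rules described earlier.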

Ensuring veracity is essential because reliable and accurate data is the foundation for making informed decisions and deriving meaningful insights. By addressing data veracity, organizations can enhance their data-driven decision-making capabilities and build trust in their data assets.

Value

Value is the ultimate goal of analyzing and leveraging big data. It refers to the benefits and insights that organizations gain from their data-driven efforts. While the sheer volume, velocity, variety, and veracity of data may seem overwhelming, the real value lies in extracting meaningful insights that lead to actionable outcomes.

Extracting value from big data requires a combination of sophisticated analytics, domain expertise, and business acumen. By analyzing large and diverse data sets, organizations can uncover hidden patterns, correlations, and trends that provide valuable insights into customer behavior, market dynamics, and operational efficiency.

One of the primary sources of value from big data is the ability to make data-driven decisions. Access to timely and accurate insights allows organizations to optimize operations, streamline processes, and identify new growth opportunities. For example, retailers can use data analytics to optimize inventory levels, improve demand forecasting, and personalize customer experiences.
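Demand forecasting methods range from simple statistical techniques to machine learning models. As a minimal illustration of the simple end, the sketch below applies simple exponential smoothing to hypothetical weekly sales figures; the data and the smoothing factor `alpha` are invented, and practical retail forecasts often also model trend and seasonality.

```python
from typing import Sequence


def exponential_smoothing_forecast(demand: Sequence[float], alpha: float = 0.5) -> float:
    """Return a one-step-ahead forecast using simple exponential smoothing.

    Each observation updates the smoothed level as
        level = alpha * observation + (1 - alpha) * previous_level,
    so recent periods weigh more than older ones. alpha must be in (0, 1].
    """
    if not demand:
        raise ValueError("need at least one observation")
    if not 0 < alpha <= 1:
        raise ValueError("alpha must be in (0, 1]")
    level = demand[0]  # initialize the level with the first observation
    for observation in demand[1:]:
        level = alpha * observation + (1 - alpha) * level
    return level


weekly_units = [100, 120, 110, 130]  # hypothetical units sold per week
print(exponential_smoothing_forecast(weekly_units, alpha=0.5))
```

A larger `alpha` makes the forecast react faster to recent changes; with `alpha=1.0` it simply repeats the most recent week.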

In addition to operational improvements, big data also enables organizations to innovate and develop new products and services. By leveraging customer data and market insights, companies can identify unmet needs, spot emerging trends, and create innovative solutions that meet customer demands.

Furthermore, big data analytics can strengthen customer relationships by providing personalized experiences and tailored recommendations. By understanding customer preferences, organizations can anticipate needs, deliver targeted marketing campaigns, and improve customer satisfaction and loyalty.

The value of big data extends beyond individual organizations. Aggregated and anonymized data can be used for social good, such as public health surveillance, environmental monitoring, and urban planning. By sharing data and insights, organizations can contribute to collective knowledge and drive positive societal impact.
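Aggregation is one common building block for sharing data more safely. The sketch below uses hypothetical person IDs and districts: it discards identifiers, counts records per district, and suppresses any count below a threshold (small-cell suppression). The threshold of 5 is an illustrative assumption, and suppression on its own does not guarantee anonymity.

```python
from collections import Counter
from typing import Dict, Iterable, Optional, Tuple


def aggregate_with_suppression(
    records: Iterable[Tuple[str, str]], min_count: int = 5
) -> Dict[str, Optional[int]]:
    """Count records per district, dropping identifiers and hiding small groups.

    Districts with fewer than `min_count` records are reported as None
    (suppressed), because very small counts can point to specific people.
    """
    counts = Counter(district for _person_id, district in records)  # IDs are discarded here
    return {
        district: (n if n >= min_count else None)
        for district, n in counts.items()
    }


# Hypothetical visit records: (person_id, district).
visits = (
    [(f"p{i}", "North") for i in range(6)]
    + [(f"p{i}", "South") for i in range(6, 11)]
    + [("p11", "East"), ("p12", "East")]
)
print(aggregate_with_suppression(visits))
```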

Realizing the value of big data requires a data-driven culture that embraces innovation, continuous learning, and collaboration. It also necessitates the adoption of advanced analytics tools and technologies, along with a robust data infrastructure capable of handling and processing large data volumes.

In summary, the value of big data lies in the insights and outcomes it generates. By leveraging the volume, velocity, variety, and veracity of data effectively, organizations can make smarter decisions, drive innovation, enhance customer experiences, and contribute to societal well-being.