The people at Amazon are at it again! Do they just keep on getting better and better, or worse?

The tech company has been in the news lately, but for all the wrong reasons. It recently announced a major improvement by its AI experts to its facial recognition program, “Rekognition”: the software can now detect fear as an emotion.

Amazon Rekognition: How Does It Work?

Facial recognition technology has been around for decades, but it is now far more widely used. It has moved beyond simply identifying a person in an image or video to applications such as identity authentication and personalized photo apps.

And then, Amazon comes in to up the ante.

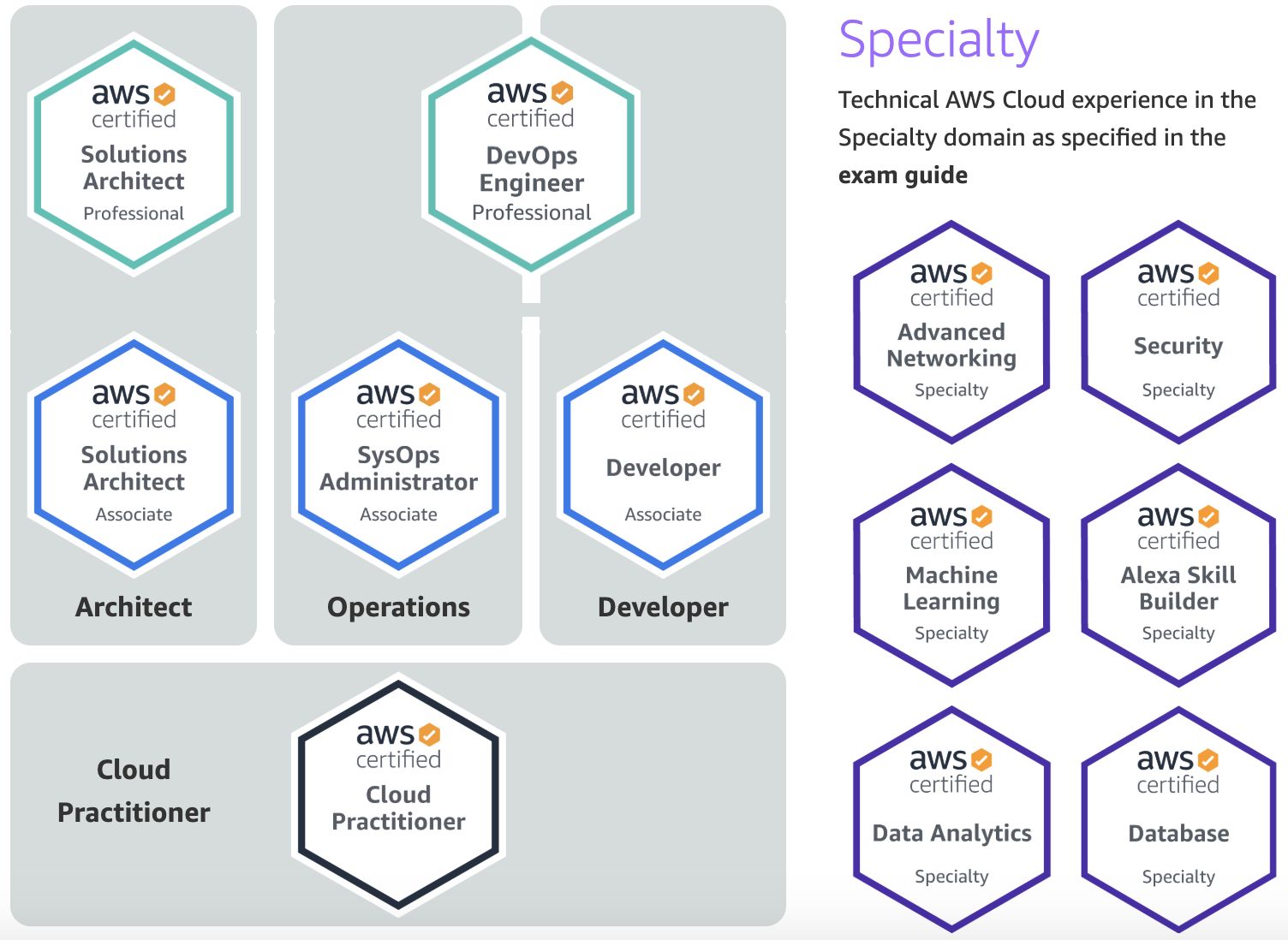

Initially released in 2016, Rekognition is one of the many services the company offers under Amazon Web Services (AWS). It’s a cloud-based computer vision platform available to developers.

Used for facial recognition, it can identify and predict various expressions and emotions from pictures of people’s faces. As explained on its webpage, the software works when one provides “an image or video to the Amazon Rekognition API, and the service can identify objects, people, text, scenes, and activities.”

Aside from detecting inappropriate content, Amazon adds that the program can also detect, analyze, and compare faces for a wide variety of use cases, including user verification, cataloging, people counting, and public safety.
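For developers curious what “providing an image to the API” might look like, here is a minimal sketch in Python using the AWS SDK (`boto3`) and its `detect_faces` call. The helper names `top_emotion` and `detect_emotions`, the default region, and the sample response further down are assumptions made for illustration; they do not come from Amazon’s announcement, and running the live call would require an AWS account and credentials.

```python
from typing import Optional, Tuple


def top_emotion(face_detail: dict) -> Optional[Tuple[str, float]]:
    """Return the (type, confidence) pair with the highest confidence, if any.

    `face_detail` is expected to look like one entry of the "FaceDetails"
    list in a detect_faces response.
    """
    emotions = face_detail.get("Emotions", [])
    if not emotions:
        return None
    best = max(emotions, key=lambda e: e["Confidence"])
    return best["Type"], best["Confidence"]


def detect_emotions(image_path: str, region: str = "us-east-1") -> list:
    """Send a local image to Rekognition and list the top emotion per face.

    Hypothetical helper: the region default is an arbitrary choice.
    """
    # Imported here so the parsing helper above can be tried without AWS set up.
    import boto3

    client = boto3.client("rekognition", region_name=region)
    with open(image_path, "rb") as image_file:
        response = client.detect_faces(
            Image={"Bytes": image_file.read()},
            Attributes=["ALL"],  # request the full attribute set, emotions included
        )
    return [top_emotion(face) for face in response["FaceDetails"]]


# A made-up face entry, shaped like the service's response, to show the parsing step.
sample_face = {
    "Emotions": [
        {"Type": "CALM", "Confidence": 12.5},
        {"Type": "FEAR", "Confidence": 81.0},
    ]
}
print(top_emotion(sample_face))
```

Note that the service returns a confidence score for each emotion label rather than a single verdict, so any “detected fear” result is really the label the model scored highest for that face.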

Rekognition Can Now Detect Fear Based on Facial Analysis

Amazon has since met with controversy over its recent updates to the software. It claims to have improved both the accuracy and functionality of Rekognition’s facial analysis features, such as gender identification, emotion detection, and age range estimation.

In a blog post released by the company, Amazon claims that Rekognition has “improved accuracy for emotion detection”. Now, it can even detect the emotion “fear”. This latest announcement struck a nerve with many groups in the United States.

Human versus Robot

It’s no secret that research and development in the field of artificial intelligence have progressed by leaps and bounds. These innovations are now shaping and influencing the present way of life. Some experts, however, are not convinced, at least when it comes to facial recognition software.

UC Berkeley researchers published a study in February stating that “programs offered by tech companies generally analyze each face in isolation.” They added that “for a person to accurately read someone else’s emotions in a video requires paying attention to not just their face but also their body language and surroundings.”

They also noted that while there are scientific links and correlations between emotions and facial expressions, the way people communicate them still varies across cultures and situations. A single expression may convey more than one emotion. “It is not possible to confidently infer happiness from a smile, anger from a scowl, or sadness from a frown, as much of current technology tries to do when applying what are mistakenly believed to be scientific facts,” experts say.

Issues and Concerns

With the promotion of Amazon Rekognition came a wave of criticism over its possible use for mass surveillance and invasion of privacy.

Various activist groups oppose its sale to the police and other law enforcement agencies. Last year, a reported sales pitch by Amazon to Immigration and Customs Enforcement (ICE) drew backlash from human rights advocates and the tech company’s own employees. Audrey Sasson, Executive Director of Jews For Racial and Economic Justice, said: “Amazon provides the technological backbone for the brutal deportation and detention machine that is already terrorizing immigrant communities, and now Amazon is giving ICE tools to use the terror the agency already inflicts to help agents round people up and put them in concentration camps.”

The American Civil Liberties Union (ACLU) also ran a test of its own. Using Rekognition, it compared photos of members of Congress against a database of mugshots. The software returned 28 false matches, which disproportionately involved people of color, including African-American and Latino lawmakers.

It seems that the criticism heading Amazon’s way is warranted. Will the company opt for corrective improvements? Or is Rekognition the new Big Brother?