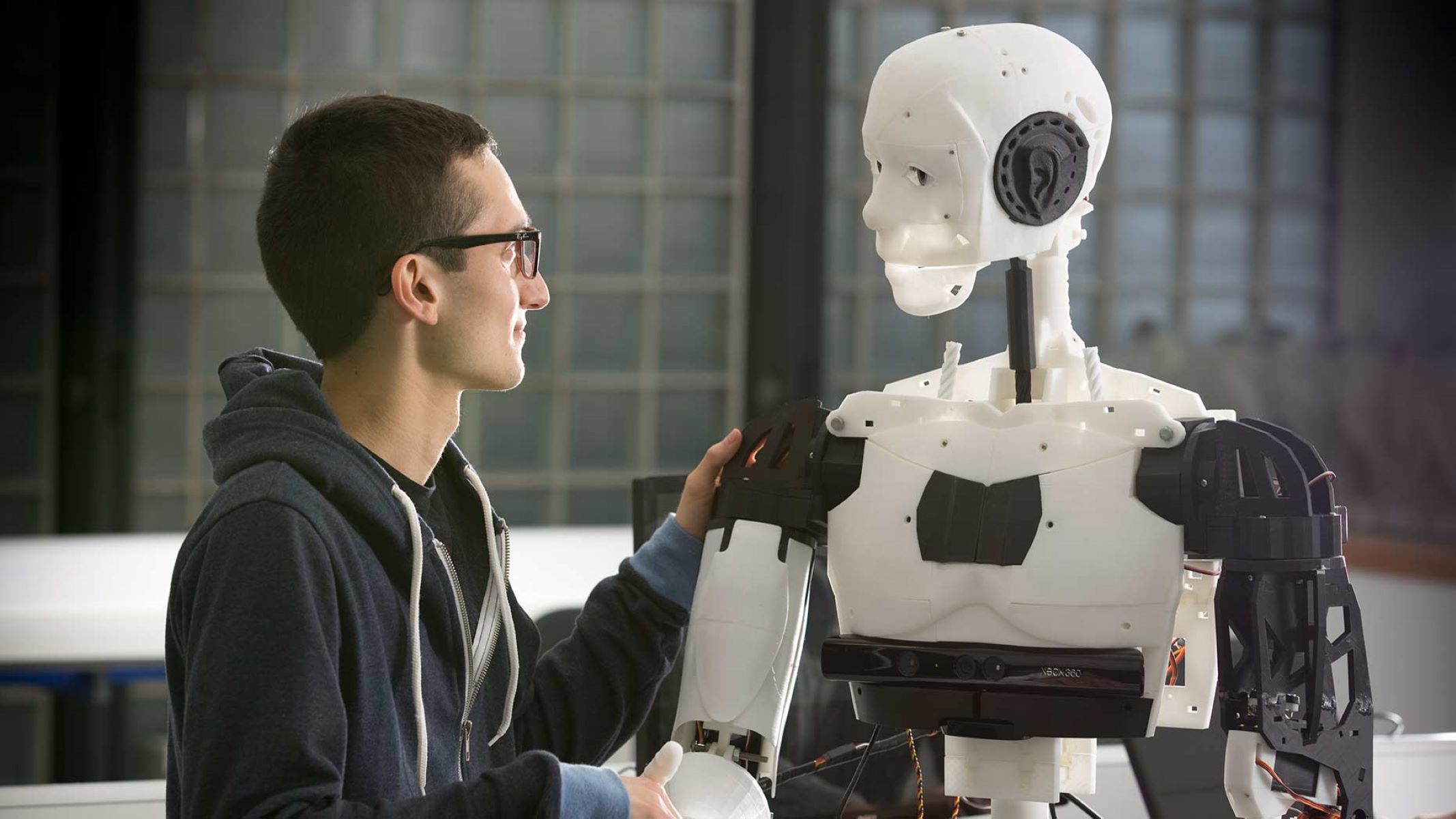

The Toyota Research Institute (TRI) has introduced a method that it says can teach a robot a new skill overnight. If the approach scales, it could make robots much faster to train and more adaptable than today's machines.

Key Takeaway

TRI has developed a method for teaching robots new skills overnight. The approach needs only dozens of diverse training cases, far fewer than machine learning has traditionally required. It combines traditional robot learning techniques with diffusion models, with the aim of letting robots adapt to diverse environments and operate in unstructured settings. TRI’s focus on “general purpose” robots that can learn and adapt to new tasks marks a shift away from the single-purpose machines that dominate robotics today.

Fast Learning with Fewer Training Cases

TRI CEO and Chief Scientist Gill Pratt expressed his excitement about how quickly the new method works. Machine learning has traditionally required millions of training cases, which is impractical for physical tasks: machines often broke down before reaching even 10,000 training cases. The TRI system needs only dozens of diverse training cases, which makes teaching a new skill far faster and more efficient.

The system developed by TRI combines traditional robot learning techniques with diffusion models, the same class of model used in generative AI. Using this method, TRI has trained robots on 60 different skills so far, and counting.

Adapting to Diverse Environments

An important advantage of this new method is its ability to program skills that can function in diverse settings. Robots often struggle to operate in unstructured environments, and this method aims to address that challenge. Whether it’s navigating a warehouse, a road, or a house, the goal is for robots to adapt to changes and function seamlessly in different scenarios.

TRI’s focus extends beyond industrial applications. They are also dedicated to developing systems that can assist elderly individuals in living independently. By creating robots that can operate in various environments and navigate unpredictable scenarios, TRI aims to enhance the quality of life for older populations.

General Purpose Robots and Future Developments

TRI is working toward “general purpose” robots that can learn and adapt to new tasks. This is a significant shift from traditional single-purpose robots, which are limited to specific functions. General-purpose robots are still a work in progress, but TRI’s research offers promising insight into how they might be developed.

The process starts with teleoperation, a common tool in robot learning, in which a human operator remotely guides the robot through a task repeatedly. The system incorporates all available data from these demonstrations, including vision and force feedback, to build a model of the task. Force feedback is particularly important for tasks in which the robot must hold and manipulate objects correctly.
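To make the data-collection step more concrete, here is a minimal sketch of how teleoperated demonstrations that record both vision and force could be sliced into training examples. This is not TRI's data format: the `Timestep` schema, the short feature vectors standing in for camera images, and the `obs_horizon`/`action_horizon` parameters are all hypothetical, chosen only for illustration.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass
class Timestep:
    """One teleoperated control step (hypothetical schema)."""
    image_features: List[float]  # stand-in for a camera embedding
    wrench: List[float]          # force (x, y, z) and torque (x, y, z) from a wrist sensor
    action: List[float]          # command sent by the human operator


def make_training_pairs(
    demo: List[Timestep], obs_horizon: int, action_horizon: int
) -> List[Tuple[List[float], List[List[float]]]]:
    """Slice one demonstration into (observation, future-action-chunk) pairs.

    The observation concatenates vision and force readings from the last
    `obs_horizon` steps; the target is the next `action_horizon` actions.
    """
    pairs = []
    for t in range(obs_horizon - 1, len(demo) - action_horizon + 1):
        obs: List[float] = []
        for step in demo[t - obs_horizon + 1 : t + 1]:
            obs.extend(step.image_features)
            obs.extend(step.wrench)
        chunk = [step.action for step in demo[t : t + action_horizon]]
        pairs.append((obs, chunk))
    return pairs


# Usage: a synthetic 10-step demo with 4 image features, 6 wrench values, 2-D actions.
demo = [Timestep([0.1 * i] * 4, [0.0] * 6, [float(i), 0.0]) for i in range(10)]
pairs = make_training_pairs(demo, obs_horizon=2, action_horizon=4)
print(len(pairs), len(pairs[0][0]), len(pairs[0][1]))  # 6 20 4
```

Keeping force readings next to the visual features in every observation is one simple way to let a learned policy use both signals; a real system would likely encode images with a neural network rather than store raw feature lists.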

Once the demonstrations are collected, the system’s neural networks train overnight, so the robot can perform the new skill by the next morning. The underlying technique, Diffusion Policy, generates behavior with a denoising diffusion process: starting from random noise, the network iteratively refines it into a sequence of robot actions, conditioned on what the robot observes.
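The denoising idea can be illustrated with a generic DDPM-style sampling loop that turns random noise into a short action vector. This is a sketch under stated assumptions, not TRI's implementation: the linear noise schedule, the 50 steps, and `oracle_noise_predictor` are all hypothetical choices. In particular, the oracle stands in for the trained network and cheats by knowing the demonstrated action, so the loop's mechanics can be shown without a trained model.

```python
import math
import random
from typing import Callable, List

Vector = List[float]


def linear_beta_schedule(num_steps: int, beta_start: float = 1e-4, beta_end: float = 0.02) -> List[float]:
    """Noise variances that grow linearly across the diffusion steps."""
    step = (beta_end - beta_start) / (num_steps - 1)
    return [beta_start + i * step for i in range(num_steps)]


def alpha_bars_from(betas: List[float]) -> List[float]:
    """Cumulative products of (1 - beta_t)."""
    out, running = [], 1.0
    for b in betas:
        running *= 1.0 - b
        out.append(running)
    return out


def sample_actions(
    predict_noise: Callable[[Vector, int, Vector], Vector],
    observation: Vector,
    action_dim: int,
    betas: List[float],
    rng: random.Random,
) -> Vector:
    """Reverse diffusion: start from Gaussian noise and denoise step by step."""
    alpha_bars = alpha_bars_from(betas)
    x = [rng.gauss(0.0, 1.0) for _ in range(action_dim)]
    for t in reversed(range(len(betas))):
        eps = predict_noise(x, t, observation)
        coef = betas[t] / math.sqrt(1.0 - alpha_bars[t])
        mean = [(xi - coef * ei) / math.sqrt(1.0 - betas[t]) for xi, ei in zip(x, eps)]
        if t > 0:
            sigma = math.sqrt(betas[t])  # simple variance choice: sigma_t^2 = beta_t
            x = [m + sigma * rng.gauss(0.0, 1.0) for m in mean]
        else:
            x = mean  # no noise is added on the final step
    return x


def oracle_noise_predictor(target: Vector, alpha_bars: List[float]):
    """Hypothetical stand-in for a trained network: returns the exact noise
    separating x_t from `target`, ignoring the observation."""
    def predict(x: Vector, t: int, observation: Vector) -> Vector:
        s, n = math.sqrt(alpha_bars[t]), math.sqrt(1.0 - alpha_bars[t])
        return [(xi - s * yi) / n for xi, yi in zip(x, target)]
    return predict


# Usage: denoise toward a hypothetical 3-D action.
betas = linear_beta_schedule(50)
target_action = [0.3, -0.2, 0.5]
predictor = oracle_noise_predictor(target_action, alpha_bars_from(betas))
action = sample_actions(predictor, observation=[], action_dim=3, betas=betas, rng=random.Random(0))
print([round(a, 3) for a in action])  # [0.3, -0.2, 0.5]
```

With a real policy, `predict_noise` would be a neural network trained on demonstration data to predict the added noise from the noisy actions, the step index, and the observation; the sampling loop itself would stay the same.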

Future Prospects and Scaling

TRI’s ultimate goal is to create more capable and adaptive robots that can interact with novel objects in new settings. The idea is that by stringing together smaller behaviors, these robots could carry out complex tasks. As part of its roadmap, TRI plans to build Large Behavior Models to extend what robots can learn.

Gill Pratt and Boston Dynamics AI Institute Executive Director Marc Raibert will be discussing these breakthroughs and more at Disrupt’s Hardware Stage on Thursday. Their dialogue will shed further light on TRI’s advancements and the future of robot learning.