Spurred by the growing threat of deepfakes, the Federal Trade Commission (FTC) is moving to modify an existing rule that prohibits the impersonation of businesses and government agencies. The proposed change would extend the rule to cover the impersonation of individuals, with the aim of curbing the rise in deepfake scams.

Key Takeaway

The FTC is proposing to extend its existing impersonation rule so that it also covers scams that impersonate individuals, aiming to protect consumers from the growing threat of AI-enabled fraud. The move responds to rising public concern and aligns with other federal efforts to address deepfakes.

FTC’s Proposed Expansion

The FTC’s proposed expansion of the rule seeks to address the use of AI tools by fraudsters to impersonate individuals with alarming accuracy and on a larger scale. FTC Chair Lina Khan emphasized the urgency of protecting Americans from impersonator fraud, especially with the proliferation of voice cloning and other AI-driven scams. The potential revisions to the rule could significantly strengthen the FTC’s ability to tackle AI-enabled scams that involve impersonating individuals.

Public Concern and Government Response

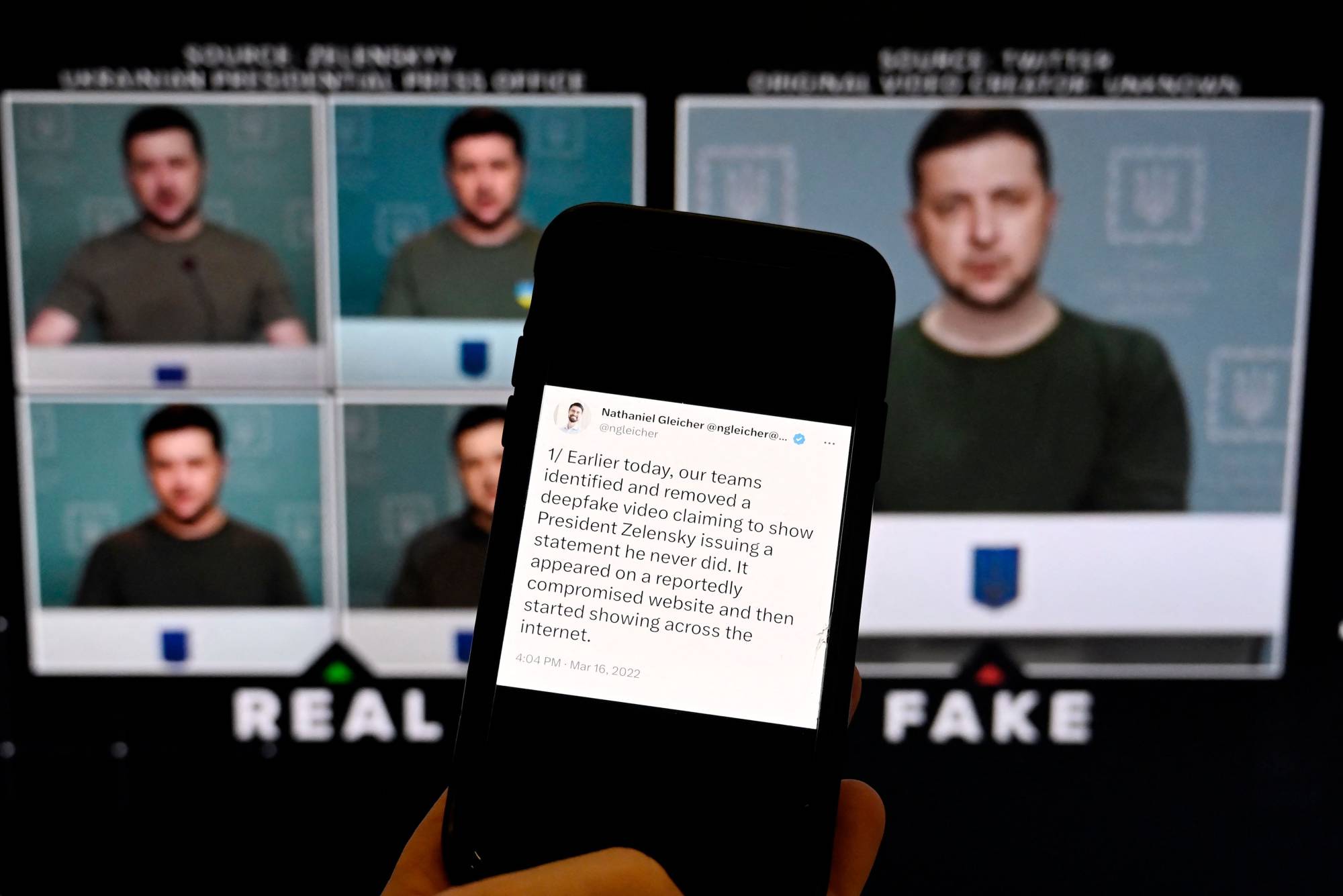

A recent poll revealed that a significant majority of Americans are deeply concerned about the spread of misleading video and audio deepfakes. Additionally, there is growing apprehension about the potential impact of AI tools on the dissemination of false information during the upcoming 2024 U.S. election cycle.

The FTC’s move aligns with the Federal Communications Commission’s (FCC) efforts to combat deepfakes, particularly AI-voiced robocalls. Together, these regulatory actions make up the current federal response to the challenges posed by deepfakes and related technologies.

Legal Landscape and State Initiatives

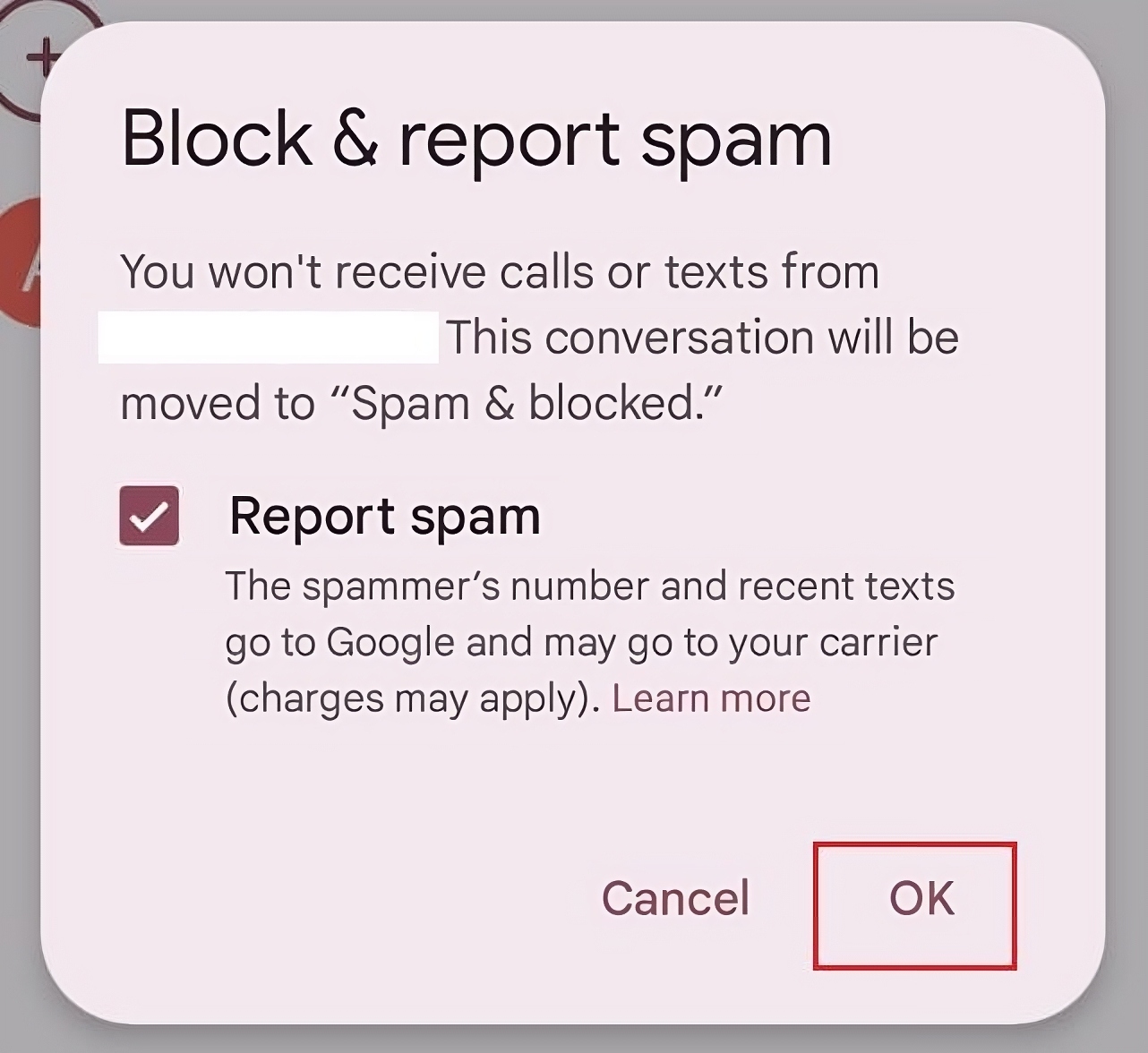

While there is no federal law specifically targeting deepfakes, some high-profile individuals have sought recourse through existing legal avenues such as copyright law and likeness rights. However, these approaches can be complex and time-consuming.

At the state level, several states have enacted laws criminalizing deepfakes, most of them focused on non-consensual pornography. As deepfake-generating tools become more sophisticated, these laws are expected to expand to cover a broader range of deepfakes, including those used in political campaigning.