Introduction

Tokenization is a fundamental data security technique, used most heavily in payment processing and other systems that handle sensitive information. As businesses work to protect customer data from breaches and fraud, understanding how tokenization works, and how it can fail, becomes crucial.

Tokenization refers to the process of replacing sensitive data, such as credit card numbers or personal identification information, with unique identification symbols called tokens. These tokens serve as placeholders for the original data and do not carry any intrinsic value. The purpose of tokenization is twofold: to enhance security by minimizing the risk of data exposure and to simplify the handling of sensitive information.

Tokenization works by generating a random token for each sensitive data element and recording the association between the token and the original data in a secure database or system, often called a token vault. When a transaction or data query is initiated, downstream systems pass the token around instead of the sensitive value, and only the tokenization system can resolve it back to the original. This way, even if an attacker gains unauthorized access to the tokens, they are of no use without access to the mapping and the original data, which reside in a separate secure location.

Although tokenization is a widely adopted security measure, it is not infallible. Tokenization failure can occur due to various reasons, and understanding these factors is crucial for organizations seeking to protect their sensitive data effectively. In this article, we will explore some common and uncommon reasons for tokenization failure and provide insights on how to fix such issues. By adhering to best practices, businesses can minimize the risk of tokenization failure and ensure the security of their customers’ data.

What is tokenization?

Tokenization is a data security technique that involves the substitution of sensitive information with non-sensitive placeholders called tokens. It is a widely adopted method for protecting sensitive data, particularly in the context of payment processing, where credit card numbers and other personal information need to be safeguarded.

Tokenization works by generating a random token for each sensitive data element, which serves as a reference to the original data without revealing any sensitive information. These tokens are unique and have no meaning or value outside of their association with the corresponding sensitive data.

The process of tokenization starts by identifying the sensitive data that needs to be protected, such as credit card numbers, social security numbers, or bank account details. Instead of storing this information in its original form, tokenization replaces it with tokens that have no direct correlation to the original data.

For example, let’s consider a credit card number, such as “1234-5678-9012-3456.” During the tokenization process, this number would be replaced with a random token, such as “1A3B5C2D6E4F8G9H.” The token is then securely stored in a database or system, while the original credit card number is retained in a separate location where it is encrypted and protected.
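As a minimal sketch of the replacement described above, the snippet below generates a random 16-character token for the sample card number. The function name `generate_token` and the hexadecimal token format are illustrative assumptions, not part of any particular tokenization product.

```python
import secrets


def generate_token(num_bytes: int = 8) -> str:
    """Return a random hex token (hypothetical helper).

    The token is drawn from the operating system's secure random source,
    so it has no mathematical relationship to the value it stands in for.
    """
    return secrets.token_hex(num_bytes).upper()


card_number = "1234-5678-9012-3456"  # sample value from the text
token = generate_token()             # 16 hex characters, different on every run
print(f"{card_number} -> {token}")
```

Note that the card number is never passed to `generate_token`: the link between the two exists only in whatever mapping the tokenization system stores.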

When a transaction or data query is initiated, the system retrieves the associated token and uses it to facilitate the process. The token acts as a reference point to access the original data securely. This way, even if a token is intercepted or compromised, it holds no meaning without the underlying sensitive information that remains securely stored.

Tokenization is different from encryption, where sensitive data is transformed using a mathematical algorithm and can be recovered by anyone who holds the decryption key. A randomly generated token has no mathematical relationship to the original data, so there is nothing to decrypt: the only way to obtain the original value is to look the token up in the secure mapping, which is why access to that mapping must be tightly controlled.

The primary objective of tokenization is to enhance data security and reduce the risk of data exposure in the event of a breach. By utilizing tokens in place of sensitive information, businesses can minimize the impact of potential security incidents and protect the confidentiality and integrity of their customers’ data.

How does tokenization work?

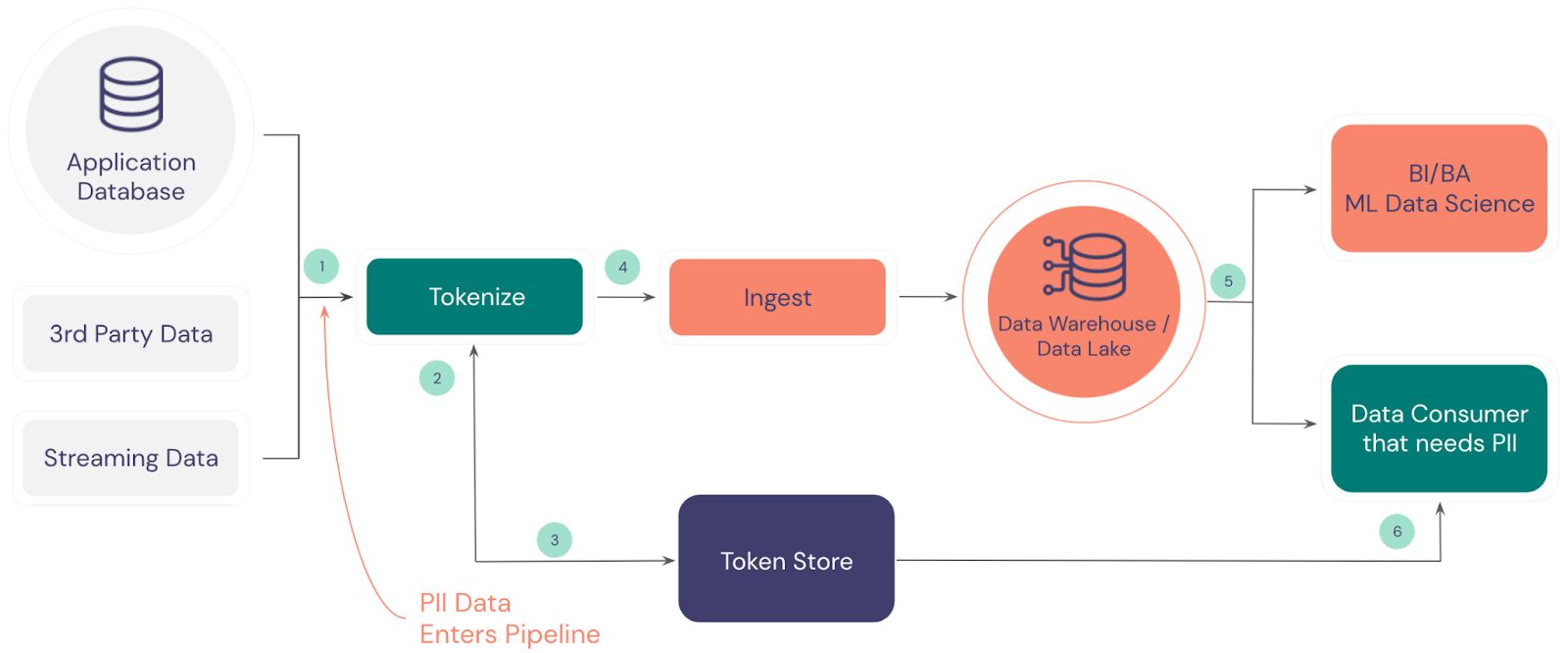

Tokenization involves a systematic process that replaces sensitive data with tokens, providing a secure and efficient method for handling and storing confidential information. Let’s explore how tokenization works in more detail:

1. Data identification: The first step in tokenization is identifying the sensitive data that needs to be protected, such as credit card numbers, social security numbers, or personal identification information.

2. Token generation: Once the sensitive data is identified, a tokenization system generates a random token for each data element. These tokens are unique and have no intrinsic meaning or value.

3. Token association: The generated tokens are then associated with their respective sensitive data in a secure database or system. This association enables the retrieval of the original data when needed.

4. Token use: When a transaction or data query is initiated, the system passes the token in place of the sensitive value. The token acts as a reference that authorized components can resolve to the original data when necessary.

5. Data handling: During data handling, the token replaces the sensitive information, ensuring that only the tokenized data is exposed or transmitted. This minimizes the risk of data exposure and protects the confidentiality of the actual sensitive information.

6. Secure storage: The original sensitive data is securely stored in a separate location, often encrypted, ensuring that it remains protected even if the tokens are compromised or intercepted.

7. Reverse mapping: When there is a need to retrieve the original data, the tokenization system uses a secure reverse mapping process to link the token back to the associated sensitive information. This mapping is done within a secure and controlled environment.

8. Transmission and processing: During transmission or processing, only the tokenized data is used, minimizing the risk of exposing sensitive information. This allows for secure data exchange and streamlined business operations.

9. Token validation: Anytime a token is received, it undergoes validation to ensure its integrity and authenticity. This validation process helps prevent unauthorized tampering or token substitution.

10. Data destruction: In certain cases, when the original sensitive data is no longer required, it can be securely destroyed, leaving only the tokens for future reference.

Overall, tokenization enables secure data handling, reduces the risk of data breaches, and simplifies the process of managing sensitive information. By separating the sensitive data from the tokens and ensuring their secure storage, businesses can enhance data security and maintain customer trust.
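The steps above can be sketched as a small in-memory token vault. This is an illustration under stated assumptions, not a production design: the class name `TokenVault` and its methods are hypothetical, and a real vault would keep the original values encrypted in a separately secured store rather than in a Python dictionary.

```python
import secrets
from typing import Dict, Optional


class TokenVault:
    """In-memory stand-in for a secured token vault (hypothetical)."""

    def __init__(self) -> None:
        # token -> original value (None once the original has been destroyed)
        self._token_to_value: Dict[str, Optional[str]] = {}
        # original value -> token, so one value always maps to one token
        self._value_to_token: Dict[str, str] = {}

    def tokenize(self, value: str) -> str:
        """Token generation and association (steps 2 and 3)."""
        if value in self._value_to_token:
            return self._value_to_token[value]
        token = secrets.token_urlsafe(16)
        while token in self._token_to_value:  # guard against a collision
            token = secrets.token_urlsafe(16)
        self._token_to_value[token] = value
        self._value_to_token[value] = token
        return token

    def detokenize(self, token: str) -> str:
        """Validation and reverse mapping (steps 7 and 9)."""
        if token not in self._token_to_value:
            raise KeyError("unknown token")
        value = self._token_to_value[token]
        if value is None:
            raise LookupError("original data was destroyed")
        return value

    def destroy_original(self, token: str) -> None:
        """Data destruction (step 10): drop the value, keep the token."""
        value = self._token_to_value[token]
        if value is not None:
            del self._value_to_token[value]
            self._token_to_value[token] = None


vault = TokenVault()
token = vault.tokenize("1234-5678-9012-3456")
original = vault.detokenize(token)  # only the vault can resolve the token
```

Everything outside the vault handles only `token`; the reverse mapping in `detokenize` is the single place where the original value reappears, which is why that call is the one to protect most carefully.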

Common reasons for tokenization failure

While tokenization is an effective method for protecting sensitive information, it is not immune to potential failures. Understanding the common reasons for tokenization failure is essential for organizations to identify vulnerabilities and implement necessary safeguards. Here are some common reasons for tokenization failure:

1. Misconfiguration: Improper configuration of the tokenization system can lead to vulnerabilities. This can include issues such as using weak encryption algorithms, incorrect token generation methods, or inadequate access controls. Misconfiguration can weaken the effectiveness of the tokenization process and increase the risk of data exposure.

2. Inadequate key management: Effective tokenization relies on robust key management practices. Tokens themselves are typically random values rather than ciphertext, but the original data held in the secure store is usually encrypted, and if those encryption keys are not properly protected, the sensitive data behind the tokens can be compromised. Inadequate key management can include storing encryption keys in insecure locations or failing to regularly rotate and update the keys.

3. Weak token generation: The strength and randomness of the tokens play a crucial role in the effectiveness of tokenization. If the token generation process is weak or predictable, it becomes easier for attackers to guess or reverse-engineer the tokens to obtain the original data. Weak token generation algorithms or insufficient entropy sources can lead to tokenization failure.

4. Lack of logging and monitoring: Monitoring and logging tokenization activities are vital for detecting and responding to potential threats or breaches. Without proper logging and monitoring mechanisms, it becomes challenging to identify suspicious activities or unauthorized access attempts. Lack of visibility into token usage can result in delayed response and tokenization failure.

5. Insider threats: Internal employees with privileged access to the tokenization system pose a potential risk. Unauthorized or malicious actions by insiders can compromise the security of the tokenized data. Insufficient controls surrounding access management and monitoring can leave organizations vulnerable to internal attacks and tokenization failure.

6. Integration issues: Tokenization relies on seamless integration with various systems and processes within an organization. Incompatibilities, configuration errors, or integration failures can lead to issues such as incomplete tokenization or inconsistent tokenization practices. This can result in tokenization failure, where some sensitive data remains unprotected or inaccessible.

7. Lack of regular updates and maintenance: Tokenization systems require regular updates and maintenance to address emerging security vulnerabilities and stay in line with industry best practices. Failure to apply patches, updates, or security fixes can leave the system exposed to known vulnerabilities, increasing the risk of tokenization failure.

By understanding and addressing these common reasons for tokenization failure, organizations can enhance their data security measures and prevent potential breaches. Implementing robust configuration practices, secure key management, and regular monitoring can greatly reduce the risk of tokenization failure and ensure the effectiveness of data protection strategies.
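To make the weak token generation problem concrete, the sketch below contrasts a predictable generator with one backed by the operating system's secure random source. Both function names are hypothetical, and the seeded generator is shown only as an anti-pattern.

```python
import random
import secrets

HEX_DIGITS = "0123456789ABCDEF"


def weak_token(seed: int) -> str:
    """Anti-pattern: a seeded, non-cryptographic generator.

    Anyone who learns or guesses the seed can reproduce every token.
    """
    rng = random.Random(seed)
    return "".join(rng.choice(HEX_DIGITS) for _ in range(16))


def strong_token() -> str:
    """Preferred: 8 random bytes (64 bits) from the OS secure random source."""
    return secrets.token_hex(8).upper()


# The same seed always yields the same "random" token.
print(weak_token(42) == weak_token(42))
# Two secure tokens are, for practical purposes, never equal.
print(strong_token() == strong_token())
```

The weakness is not the token format, which is identical in both cases, but the source of randomness behind it.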

Uncommon reasons for tokenization failure

While common reasons for tokenization failure are well-known and often addressed, there are also uncommon factors that can contribute to the failure of tokenization systems. Organizations should be aware of these less common reasons to ensure a comprehensive approach to data security. Here are some uncommon reasons for tokenization failure:

1. Weak or compromised tokenization infrastructure: In some cases, the tokenization infrastructure itself may be weak or compromised. This can happen if the tokenization system is implemented using outdated or vulnerable technologies, or if the infrastructure is not properly secured. Attackers may exploit weaknesses in the infrastructure to gain unauthorized access to tokens and sensitive data.

2. Deteriorated safeguards over time: Tokenization systems should undergo regular assessments and tests to ensure their effectiveness. However, without proper ongoing maintenance and monitoring, safeguards may deteriorate over time. This can result in unnoticed vulnerabilities, configuration drift, or outdated security controls, ultimately leading to tokenization failure.

3. Improper handling of revoked or expired tokens: Tokens associated with revoked or expired sensitive data should be rendered invalid or removed from the system. However, improper handling of revoked or expired tokens can inadvertently allow their reuse or association with new data. This can compromise the security and integrity of the tokenization process.

4. Third-party vulnerabilities: Organizations often rely on third-party providers to handle tokenization processes. However, if these third-party vendors have vulnerabilities in their systems, it can lead to tokenization failure. Improper security measures, lack of vulnerability management, or compromised third-party infrastructure can expose tokens and compromise the security of the sensitive data.

5. Inadequate disaster recovery strategies: Tokenization systems should have robust disaster recovery strategies in place to ensure business continuity in the event of a system failure or data loss. However, organizations may overlook the need for effective backup and recovery plans, leaving the tokenization system at risk. Failure to recover tokens or restore systems in a timely manner can result in tokenization failure.

6. Lack of awareness and training: Human error can contribute to tokenization failure. The lack of awareness and training among employees regarding tokenization processes, data handling, and security best practices can lead to mistakes that compromise the effectiveness of tokenization. Proper education and training programs are necessary to ensure everyone understands their roles and responsibilities in maintaining the security of the tokenization system.

7. Regulatory compliance issues: Organizations must comply with industry and regional regulations regarding data protection and privacy. A tokenization deployment that does not meet these requirements has failed in practice even if no data has been exposed, because non-compliance can lead to legal repercussions and penalties. It is crucial for organizations to stay updated with relevant regulations and ensure their tokenization practices align with the required standards and guidelines.

By considering these uncommon reasons for tokenization failure, organizations can strengthen their data security measures and address potential vulnerabilities. This comprehensive approach to tokenization helps ensure the integrity and effectiveness of data protection strategies and safeguards against unforeseen risks or threats.
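The revoked or expired token case lends itself to a short illustration. The sketch below assumes a hypothetical `ExpiringVault` that stores an expiry time and a revocation flag with each token and checks both before returning the original value; the `now` parameter exists only to make the behavior easy to demonstrate.

```python
import secrets
import time
from dataclasses import dataclass
from typing import Dict, Optional


@dataclass
class TokenRecord:
    value: str
    expires_at: float      # Unix timestamp after which the token is invalid
    revoked: bool = False


class ExpiringVault:
    """Hypothetical vault that refuses revoked or expired tokens."""

    def __init__(self) -> None:
        self._records: Dict[str, TokenRecord] = {}

    def tokenize(self, value: str, ttl_seconds: float,
                 now: Optional[float] = None) -> str:
        now = time.time() if now is None else now
        token = secrets.token_urlsafe(16)
        self._records[token] = TokenRecord(value, now + ttl_seconds)
        return token

    def revoke(self, token: str) -> None:
        # Keep the record so the token can never be silently reissued.
        self._records[token].revoked = True

    def detokenize(self, token: str, now: Optional[float] = None) -> str:
        now = time.time() if now is None else now
        record = self._records.get(token)
        if record is None:
            raise KeyError("unknown token")
        if record.revoked:
            raise PermissionError("token revoked")
        if now >= record.expires_at:
            raise PermissionError("token expired")
        return record.value
```

Keeping the record of a revoked token, rather than deleting it, is one way to avoid the reuse problem described in item 3: the token string stays reserved and every later lookup fails explicitly.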

How to fix tokenization failure

Addressing tokenization failures promptly and effectively is crucial to maintaining data security and protecting sensitive information. When tokenization failure occurs, organizations should take immediate action to resolve the issues and strengthen their data protection strategies. Here are some steps to fix tokenization failure:

1. Identify the root cause: The first step in fixing tokenization failure is to identify the root cause. Conduct a thorough analysis of the system, configuration settings, and associated processes to determine what went wrong. This may involve reviewing logs, conducting vulnerability assessments, and analyzing system behaviors to pinpoint the underlying issues.

2. Update and secure the infrastructure: If the tokenization infrastructure is compromised or outdated, it is essential to update and secure it. This may involve patching vulnerabilities, upgrading software components, or implementing additional safeguards to strengthen the infrastructure’s security posture. Engaging with relevant vendors or third-party providers can help address any weaknesses in the infrastructure.

3. Enhance key management practices: Proper key management is crucial for effective tokenization. Strengthen key management practices by implementing secure measures, such as secure storage, rotation, and access controls. Regularly review and update encryption keys to minimize the risk of unauthorized access and maintain the integrity of the encryption process.

4. Implement necessary configuration changes: Review the tokenization system’s configuration settings. Ensure that the system is appropriately configured, including the token generation algorithms, encryption algorithms, access controls, and logging mechanisms. Make any necessary changes or adjustments to improve the system’s security and functionality.

5. Conduct employee training and awareness programs: Human error can contribute to tokenization failure. Conduct regular training and awareness programs to educate employees on data handling best practices, security protocols, and the importance of proper tokenization processes. This helps minimize the risk of human-induced errors and strengthens the overall data security culture within the organization.

6. Strengthen monitoring and auditing: Implement robust monitoring and auditing mechanisms to detect any potential tokenization failures or suspicious activities. Regularly review logs and monitor system activities to identify anomalies or unauthorized access attempts. This proactive approach helps detect and address tokenization failure promptly and prevent further data breaches or compromises.

7. Review and adhere to regulatory requirements: Ensure compliance with industry and regional regulations regarding data protection and privacy. Review and update tokenization practices to align with the required standards and guidelines. This helps mitigate legal risks and ensures data protection practices meet the necessary compliance requirements.

8. Perform regular assessments and tests: Regularly assess and test the tokenization system to identify any vulnerabilities or shortcomings. Conduct penetration testing, vulnerability assessments, or security audits to uncover potential weaknesses and address them before they can be exploited. Ongoing maintenance and testing help maintain the effectiveness of tokenization and minimize the risk of future failures.

By following these steps, organizations can effectively address tokenization failures and strengthen their data security measures. It is important to take a proactive approach to fix tokenization failure promptly, as it helps maintain customer trust, protects sensitive information, and safeguards the organization against potential data breaches.
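As one possible shape for the log review mentioned in steps 1 and 6, the sketch below counts failed detokenization attempts per client and flags any client that exceeds a threshold. The event fields (`client`, `action`, `success`) and the threshold are assumptions made for illustration; real audit logs will have their own schema.

```python
from collections import Counter
from typing import Dict, Iterable, List


def flag_suspicious_clients(events: Iterable[Dict],
                            max_failures: int = 3) -> List[str]:
    """Return clients with more than max_failures failed detokenize calls."""
    failures = Counter(
        event["client"]
        for event in events
        if event["action"] == "detokenize" and not event["success"]
    )
    return sorted(c for c, count in failures.items() if count > max_failures)


# Hypothetical audit log entries.
audit_log = (
    [{"client": "svc-a", "action": "detokenize", "success": False}] * 4
    + [{"client": "svc-b", "action": "detokenize", "success": False}]
    + [{"client": "svc-b", "action": "detokenize", "success": True}]
)
suspicious = flag_suspicious_clients(audit_log)
```

A check like this is only a starting point for root cause analysis, since a burst of failures can come from a misconfigured integration as easily as from an attacker.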

Best practices to avoid tokenization failure

To prevent tokenization failure and ensure the secure handling of sensitive information, organizations should adhere to best practices that enhance the effectiveness and reliability of tokenization processes. By following these practices, businesses can minimize the risk of data breaches and maintain the integrity of their data security strategies. Here are some best practices to avoid tokenization failure:

1. Choose a reputable tokenization solution: Selecting a reliable and reputable tokenization solution is crucial. Conduct thorough research and choose a solution that has a proven track record in the industry. Consider factors such as security features, encryption algorithms used, and the solution provider’s reputation to ensure the effectiveness of tokenization.

2. Implement strong encryption: Ensure that strong, industry-standard encryption algorithms protect the original sensitive data wherever it is stored and transmitted. Strong encryption reduces the possibility that an attacker who reaches the secure store can read the data behind the tokens, and it enhances the security of the entire tokenization system.

3. Secure key management: Establish robust key management practices to safeguard encryption keys. Protect the keys through encryption, secure storage, and strict access controls. Regularly rotate and update the encryption keys to maintain their integrity and protect against potential key compromise.

4. Regularly review and update configurations: Review and update the tokenization system’s configurations periodically. Ensure that token generation algorithms, encryption algorithms, and other settings are set up correctly and align with the latest security guidelines. Regular configuration reviews help maintain the security and effectiveness of the tokenization process.

5. Implement access controls: Employ strong access control measures to restrict access to sensitive data and tokenization systems. Implement role-based access controls (RBAC), multi-factor authentication (MFA), and least privilege principles. Limit access to only authorized individuals who need it to perform their specific duties.

6. Conduct regular security assessments: Regularly assess the security of the tokenization system through penetration testing, vulnerability assessments, and security audits. These assessments help identify any weaknesses or vulnerabilities in the system and allow for timely remediation before they can be exploited.

7. Maintain thorough logging and monitoring: Implement comprehensive logging and monitoring mechanisms to track and analyze system activities and detect any suspicious activities or unauthorized access attempts. Regularly review logs for anomalies and establish real-time alerting systems for immediate response to potential security incidents.

8. Provide continuous employee training: Educate employees about the importance of data security and the best practices for handling sensitive information. Offer regular training sessions to keep employees updated on the latest security protocols, data handling procedures, and their responsibilities in maintaining data security.

9. Stay compliant with regulations: Adhere to industry and regional regulations related to data protection and privacy. Familiarize yourself with relevant compliance requirements and ensure that your tokenization practices meet those standards. Regularly review and update your practices to align with changing regulations.

10. Regularly update and patch systems: Apply regular software updates, security patches, and fixes to the tokenization system and associated infrastructure. Keep the system up to date with the latest security enhancements and address any known vulnerabilities promptly to prevent exploitation.

By following these best practices, organizations can establish a robust tokenization framework that minimizes the risk of tokenization failure and ensures the secure handling of sensitive data. Implementing these practices helps businesses maintain customer trust, comply with regulations, and protect their critical information assets.
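The access control practice (item 5) can be illustrated with a small role-based check in which only explicitly listed roles may detokenize. The role names and the permission table are hypothetical; in a real deployment they would come from the organization's identity and access management system.

```python
from typing import Dict, Set

# Hypothetical role table: least privilege means most roles cannot detokenize.
ROLE_PERMISSIONS: Dict[str, Set[str]] = {
    "payments_service": {"tokenize", "detokenize"},
    "analytics": {"tokenize"},
}


def is_allowed(role: str, action: str) -> bool:
    """Unknown roles get no permissions (deny by default)."""
    return action in ROLE_PERMISSIONS.get(role, set())


def authorize(role: str, action: str) -> None:
    """Raise PermissionError unless the role may perform the action."""
    if not is_allowed(role, action):
        raise PermissionError(f"role {role!r} may not {action}")


authorize("payments_service", "detokenize")  # permitted, returns None
```

Calling `authorize` before every detokenization request keeps the decision in one place, which also gives logging and monitoring a single point to record who asked for the original data.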

Conclusion

Tokenization is a vital data security technique that helps protect sensitive information, such as credit card numbers and personal identification data. By replacing sensitive data with unique tokens, tokenization minimizes the risk of data exposure and simplifies the handling of confidential information.

In this article, we discussed the concept of tokenization, how it works, and explored common and uncommon reasons for tokenization failure. We also provided insights on fixing tokenization failures and outlined best practices to avoid such failures altogether.

To avoid tokenization failure, organizations should implement a reputable tokenization solution, employ strong encryption, and maintain robust key management practices. Regularly reviewing and updating configurations, implementing access controls, and conducting security assessments are crucial for maintaining data security. Furthermore, organizations must continuously educate and train employees, adhere to compliance regulations, and keep systems up to date with software patches.

By following these best practices and promptly addressing tokenization failures, businesses can enhance data security, protect sensitive information, and maintain customer trust. Tokenization, when implemented effectively, can be a powerful tool in safeguarding data and minimizing the risk of data breaches.

Remember, tokenization is one piece of the larger data security puzzle. It should be used in conjunction with other security measures to create a comprehensive and layered approach to protect sensitive data. With a robust and well-implemented tokenization strategy, organizations can effectively mitigate the risks associated with handling sensitive information.