Introduction

Welcome to the world of machine learning, where artificial intelligence meets data analysis and prediction. In today’s digital age, machine learning has become a powerful tool for businesses and individuals alike, revolutionizing the way we understand and utilize data. Whether you are a developer, an entrepreneur, or simply someone curious about this exciting technology, this article will guide you through the process of building a machine learning app from scratch.

Machine learning is a subset of artificial intelligence that focuses on training computer systems to learn and make predictions or decisions without being explicitly programmed. By analyzing large datasets, machine learning algorithms can identify patterns, make predictions, and find hidden insights that humans may not be able to discern. This capability has paved the way for various applications such as recommendation systems, fraud detection, image and speech recognition, and much more.

In this article, we will explore the basics of machine learning and guide you through the process of building your own machine learning app. We will discuss how to gather data, prepare and preprocess it, choose the right algorithm, train and evaluate the model, and ultimately implement it in an application.

Building a machine learning app requires a combination of technical skills, algorithmic understanding, and creativity. Whether you are a beginner or have some experience, this article will provide you with the necessary knowledge and guidance to embark on your machine learning journey.

So get ready to dive into the exciting world of machine learning and discover how you can harness its power to build your own intelligent applications!

What is Machine Learning?

Machine learning is a subfield of artificial intelligence (AI) that focuses on developing algorithms and techniques that allow computers to learn and improve from experience without being explicitly programmed. Instead of following pre-defined rules, machine learning systems use data to automatically discover patterns, make predictions, and solve complex problems.

At their core, machine learning algorithms are designed to recognize patterns and extract useful insights from large datasets. By analyzing the data, these algorithms can detect trends, identify correlations, and make predictions or decisions based on the patterns they uncover.

There are several types of machine learning approaches:

- Supervised Learning: In this approach, the machine learning algorithm is trained using labeled data, where the desired output is known. The algorithm learns to map input data to the correct output based on the provided labels. For example, a supervised learning algorithm can be trained to recognize handwritten digits by using a dataset where each image is labeled with the corresponding digit.

- Unsupervised Learning: Unlike supervised learning, unsupervised learning algorithms don’t have labeled data to learn from. Instead, they analyze the input data and try to find patterns or clusters without any predefined categories. This type of learning is useful for uncovering hidden structures or relationships in unstructured data. For example, an unsupervised learning algorithm can be used to segment customers into different groups based on their purchasing behaviors.

- Reinforcement Learning: Reinforcement learning is an interactive process where an agent learns to make decisions or take actions in an environment to maximize a reward signal. The agent explores the environment, receives feedback, and adjusts its actions accordingly. This type of learning is often used for tasks that involve sequential decision making, such as playing games or controlling robotic systems.

Machine learning has a wide range of applications across various industries. It is used in predictive analytics for forecasting sales, customer behavior, or stock market trends. It is also deployed in recommendation systems, where algorithms learn from user preferences to suggest personalized products or content. Additionally, machine learning is used in image and speech recognition systems, natural language processing, fraud detection, autonomous vehicles, and many other areas.

As the field of machine learning continues to advance, more complex algorithms and techniques are being developed to tackle even more challenging problems. From deep learning and neural networks to reinforcement learning and generative models, machine learning is continuously evolving and shaping the future of AI.

Understanding the Basics

Before diving into building a machine learning app, it’s important to grasp the foundational concepts and terminology used in this field. Understanding the basics will lay a solid groundwork for your journey into machine learning.

Data: At the heart of any machine learning project is data. Data serves as the fuel that drives the learning process. It can come in various forms, such as structured data (tabular data with defined columns and rows), unstructured data (images, text, audio), or even a combination of both. High-quality, representative, and well-labeled data is crucial for training accurate and reliable machine learning models.

Features: In machine learning, features refer to the individual measurable properties or characteristics of the data. Features are used to represent the input variables or attributes of the problem being solved. For example, in a spam detection system, features can include the presence of certain words, the length of the email, or the sender’s address. Selecting relevant and informative features is important for the effectiveness of the model.

Labels: Labels, also known as targets or outputs, are the desired outputs that the machine learning algorithm aims to predict or classify. In supervised learning, the training dataset is labeled, meaning that each sample in the dataset is paired with its corresponding label. In an email spam detection scenario, the labels would indicate whether an email is spam (1) or not spam (0).
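To make features and labels concrete, here is a minimal sketch for the spam example. The keyword list, the feature names, and the `example-freemail.test` domain are assumptions made up for illustration, not a recommended feature set.

```python
# Hypothetical keyword list, chosen only for illustration.
SPAM_WORDS = ("free", "winner", "prize")

def extract_features(subject: str, body: str, sender: str) -> dict:
    """Turn one raw email into a dictionary of numeric features."""
    text = f"{subject} {body}".lower()
    return {
        # How many times any of the keywords appears in the subject or body.
        "spam_word_count": sum(text.count(word) for word in SPAM_WORDS),
        # Length of the body in characters.
        "length": len(body),
        # 1 if the sender uses the (made-up) free mail domain, else 0.
        "sender_is_free_mail": int(sender.lower().endswith("@example-freemail.test")),
    }

emails = [
    ("You are a winner", "Claim your free prize now", "promo@example-freemail.test"),
    ("Meeting notes", "Agenda attached for Monday", "alice@example.com"),
]
labels = [1, 0]  # 1 = spam, 0 = not spam, one label per email

features = [extract_features(*email) for email in emails]
```

Each entry in `features` is the model input for one email, and the entry at the same position in `labels` is the output the model should learn to predict.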

Model: A model is a mathematical representation of the problem at hand and how inputs relate to outputs. In machine learning, a model is created using a specific algorithm and trained on data. The trained model can then be used to make predictions or decisions on new, unseen data. Models can vary in complexity, from simple linear regression to complex neural networks.

Training: Training a machine learning model involves feeding the algorithm with data (labeled data, in the supervised case) and allowing it to learn the underlying patterns and relationships. During training, the model adjusts its parameters to minimize the difference between its predictions and the true labels in the training data. The goal is to find a set of parameters that not only fits the training data but also generalizes well to unseen data.

Evaluation: To assess the performance of a trained model, it needs to be evaluated using a separate set of data called the validation or test set. This evaluation helps determine how well the model generalizes to new, unseen data. Common evaluation metrics include accuracy, precision, recall, and F1 score, depending on the specific problem and model type.

Overfitting and Underfitting: Overfitting occurs when a model performs extremely well on the training data but fails to generalize to unseen data. This happens when the model has learned to memorize the training examples instead of capturing the underlying patterns. Underfitting, on the other hand, occurs when a model is too simplistic and fails to capture the complexity of the problem. Balancing between overfitting and underfitting is a critical part of building an effective machine learning model.

Cross-Validation: Cross-validation is a technique used to assess the performance of a model and detect overfitting. It involves splitting the dataset into multiple subsets (folds), then repeatedly training the model on all but one fold and evaluating it on the fold that was held out. Averaging the results across the folds gives a more reliable estimate of the model’s overall performance than a single split.
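The fold mechanics can be sketched in plain Python. This is a simplified illustration: the function names are hypothetical, `score_fold` stands in for whatever train-and-evaluate routine you use, and in practice the data is usually shuffled before it is split.

```python
def k_fold_indices(n_samples: int, k: int):
    """Yield (train_indices, validation_indices) for each of k folds."""
    if not 2 <= k <= n_samples:
        raise ValueError("k must be between 2 and n_samples")
    indices = list(range(n_samples))
    # Spread the remainder so that fold sizes differ by at most one.
    fold_sizes = [n_samples // k + (1 if i < n_samples % k else 0) for i in range(k)]
    start = 0
    for size in fold_sizes:
        validation = indices[start:start + size]
        train = indices[:start] + indices[start + size:]
        yield train, validation
        start += size

def cross_validate(n_samples: int, k: int, score_fold):
    """Average a caller-supplied score over all k folds."""
    scores = [score_fold(train, val) for train, val in k_fold_indices(n_samples, k)]
    return sum(scores) / len(scores)
```

Every sample lands in the validation fold exactly once, so the averaged score uses all of the data for evaluation without ever scoring a sample the model was trained on in that round.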

By familiarizing yourself with these core concepts, you will have a solid foundation to explore and experiment with different machine learning algorithms and techniques. Now let’s move on to the next step: gathering and preparing the data.

Gathering Data

Gathering high-quality, relevant data is a crucial step in building a machine learning app. The success of your model heavily depends on the quality and representativeness of the data you use for training. Here are some steps to consider when gathering data:

Define Your Objective: Clearly define the problem you want to solve with your machine learning app. Identifying the specific task and desired outcome will help you determine the type of data you need to gather.

Data Sources: Look for reliable and diverse sources to gather your data. Depending on your problem domain, data sources can include public datasets, proprietary data, APIs, web scraping, or user-generated data. Ensure that you have the necessary permissions and rights to use the data.

Data Labels: If you’re working on supervised learning, you’ll need labeled data to train your model. Labeling data involves assigning the correct outputs or categories to each input sample. This can be a time-consuming process, and you may need to use manual labeling or crowdsourcing platforms to get high-quality labels.

Data Size: The amount of data you gather is also important. In general, more data can lead to better model performance, but there is a point of diminishing returns. Collecting a balanced dataset with a sufficient number of samples for each class or category is important to avoid bias and improve model accuracy.

Data Preprocessing: Depending on the nature of your data, you may need to preprocess it before using it to train your model. This can involve tasks such as cleaning the data by removing duplicates or outliers, handling missing values, normalizing numerical features, or encoding categorical variables.

Data Privacy and Ethics: It’s essential to respect privacy regulations and ethical considerations when gathering data. Ensure that you conform to data protection laws and maintain the privacy and confidentiality of any personal or sensitive information.

Data Storage and Management: Establish a data storage and management system that allows you to securely store and access your data. This system should provide efficient data retrieval and support versioning, backup, and collaboration to ensure the integrity and availability of your data.

Gathering data is a continuous process, and it’s important to regularly update and expand your dataset to improve your models over time. Be prepared to adapt and refine your data collection strategies as you gain insights and feedback from your machine learning experiments.

Once you have gathered your data, you’re ready to move on to the next step: preparing it so that it is suitable for training your machine learning model.

Preparing the Data

Preparing the data is a crucial step in building a successful machine learning app. Data preparation involves transforming and organizing the data in a way that allows the machine learning algorithm to effectively learn and make accurate predictions. Here are some key steps to consider when preparing your data:

Data Cleaning: Clean the data by removing any irrelevant or noisy information that could negatively impact the performance of your model. This includes handling missing values, handling outliers, and addressing any inconsistencies or errors in the data.
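As one possible illustration of cleaning, the sketch below drops exact duplicate rows and fills missing numeric values with the median. The row format (a list of dictionaries) and the function name are assumptions; median imputation is only one of several reasonable strategies.

```python
from statistics import median

def clean_rows(rows, numeric_key):
    """Drop exact duplicate rows, then fill missing numeric values with the median."""
    seen = set()
    unique_rows = []
    for row in rows:
        # A hashable fingerprint of the row, independent of key order.
        fingerprint = tuple(sorted(row.items()))
        if fingerprint not in seen:
            seen.add(fingerprint)
            unique_rows.append(dict(row))  # copy so the input is not modified
    # Median of the values that are present (raises if none are present).
    known = [r[numeric_key] for r in unique_rows if r[numeric_key] is not None]
    fill_value = median(known)
    for r in unique_rows:
        if r[numeric_key] is None:
            r[numeric_key] = fill_value
    return unique_rows

rows = [
    {"age": 30, "city": "Oslo"},
    {"age": 30, "city": "Oslo"},    # duplicate
    {"age": None, "city": "Rome"},  # missing value
    {"age": 50, "city": "Lima"},
]
cleaned = clean_rows(rows, "age")
```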

Data Transformation: Transform the data into a suitable format that can be fed into the machine learning algorithm. This can involve feature extraction, where you derive new features from the existing ones to enhance the predictive power of the model. It can also include scaling and normalization, which ensure that all features are on a similar scale to prevent any feature from dominating the learning process.
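Scaling can be sketched with standardization, which rescales a feature to zero mean and unit standard deviation. The function names are hypothetical. The sketch assumes the statistics are learned from the training data only and then reused unchanged for validation, test, and production data.

```python
from statistics import mean, pstdev

def fit_standardizer(values):
    """Learn the mean and standard deviation from training values only."""
    mu = mean(values)
    sigma = pstdev(values)
    if sigma == 0:
        sigma = 1.0  # constant feature: avoid dividing by zero
    return mu, sigma

def standardize(values, mu, sigma):
    """Rescale values using previously fitted statistics."""
    return [(v - mu) / sigma for v in values]

train_values = [10.0, 20.0, 30.0]
mu, sigma = fit_standardizer(train_values)
scaled_train = standardize(train_values, mu, sigma)
scaled_new = standardize([25.0], mu, sigma)  # reuse the training statistics
```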

Feature Encoding: If your data includes categorical variables, you will need to encode them into a numerical format that the algorithm can work with. This can be done using techniques such as one-hot encoding or label encoding, depending on the nature of the categorical data and the requirements of the algorithm.
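One-hot encoding can be illustrated in a few lines. This is a minimal sketch with hypothetical function names. Mapping unseen categories to an all-zero vector is a design choice made here for simplicity, not the only option.

```python
def fit_categories(values):
    """Record the categories seen in the training data, in a stable order."""
    return sorted(set(values))

def one_hot(value, categories):
    """Encode one value as a 0/1 vector; unseen values map to all zeros."""
    return [1 if value == category else 0 for category in categories]

categories = fit_categories(["red", "green", "red", "blue"])
encoded_green = one_hot("green", categories)
encoded_unseen = one_hot("purple", categories)
```

Label encoding would instead map each category to a single integer, which is more compact but implies an ordering that some algorithms will treat as meaningful.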

Feature Selection: Select the most relevant and informative features from your dataset. Removing redundant or irrelevant features can simplify the model and improve its performance by reducing overfitting and increasing interpretability.

Data Split: Split your dataset into training, validation, and testing sets. The training set is used to train the model, the validation set is used to tune hyperparameters and evaluate model performance, and the testing set is used to assess the final performance of the trained model on unseen data.
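A three-way split might look like the following sketch. The 70/15/15 proportions and the function name are assumptions chosen for illustration. The fixed seed only serves to make the split reproducible.

```python
import random

def train_val_test_split(samples, val_fraction=0.15, test_fraction=0.15, seed=0):
    """Shuffle the samples and cut them into training, validation, and test sets."""
    shuffled = list(samples)
    random.Random(seed).shuffle(shuffled)  # seeded for reproducibility
    n_test = round(len(shuffled) * test_fraction)
    n_val = round(len(shuffled) * val_fraction)
    test = shuffled[:n_test]
    val = shuffled[n_test:n_test + n_val]
    train = shuffled[n_test + n_val:]
    return train, val, test

train, val, test = train_val_test_split(range(100))
```

A purely random split like this assumes the samples are independent. Time series or grouped data generally need a split that respects time order or group boundaries.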

Data Augmentation: In some cases, you may need to augment your dataset by creating additional synthetic samples. This can be done through techniques such as image rotation, flipping, or adding noise, which can help increase the diversity of your data and improve the generalization capabilities of your model.

Data Balancing: If your dataset is imbalanced, meaning that one class or category has significantly fewer samples than others, you may need to balance the data to prevent the model from being biased towards the majority class. This can involve oversampling the minority class, undersampling the majority class, or using more advanced techniques such as SMOTE (Synthetic Minority Over-sampling Technique). Resample only the training set, so that the validation and testing sets still reflect the real class distribution.
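The simplest of these options, random oversampling, can be sketched as follows. Note that this is plain duplication of existing minority samples, not SMOTE, which synthesizes new points. The function name is hypothetical.

```python
import random
from collections import Counter

def random_oversample(samples, labels, seed=0):
    """Duplicate randomly chosen samples until every class matches the largest one."""
    rng = random.Random(seed)
    counts = Counter(labels)
    target = max(counts.values())
    out_samples, out_labels = list(samples), list(labels)
    for label, count in counts.items():
        # All original samples that belong to this class.
        pool = [s for s, y in zip(samples, labels) if y == label]
        for _ in range(target - count):
            out_samples.append(rng.choice(pool))
            out_labels.append(label)
    return out_samples, out_labels

samples = list(range(10))
labels = [0] * 8 + [1] * 2  # class 1 is the minority
balanced_samples, balanced_labels = random_oversample(samples, labels)
```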

By preparing your data carefully, you can ensure that your machine learning model receives clean, organized, and relevant input, leading to improved performance and more accurate predictions. Once the data is prepared, you’re ready to move on to selecting the right algorithm for your machine learning app.

Choosing the Right Algorithm

Choosing the right algorithm is a crucial step in building a successful machine learning app. The algorithm you select will depend on the nature of your problem, the type of data you have, and the desired outcome you want to achieve. Here are some key considerations to keep in mind when choosing the right algorithm:

Problem Type: Determine the type of problem you are trying to solve. The problem can be classification, regression, clustering, or even recommendation. Each problem type requires a specific type of algorithm that is tailored to the problem’s characteristics and goals.

Data Complexity: Assess the complexity of your data. Does it have a linear or non-linear relationship? Are there clear boundaries between classes or categories? Understanding the complexity of your data will help you choose an algorithm that can effectively capture its underlying patterns.

Data Size: Consider the size of your dataset. Some algorithms work well with small datasets, while others require large volumes of data for effective training. If you have a limited amount of data, you may need to choose an algorithm that is less prone to overfitting.

Algorithm Performance: Research and evaluate the performance of different algorithms on similar problem domains. Look for algorithms that have been successful in similar applications and have demonstrated high accuracy and reliability. Consider factors such as computational efficiency and scalability, especially if you anticipate working with large datasets.

Algorithm Assumptions: Understand the assumptions and limitations of the algorithms you are considering. Some algorithms are more suited for specific types of data or assume certain characteristics of the data. Make sure the chosen algorithm aligns with the assumptions and requirements of your problem.

Algorithm Parameters: Consider the specific parameters and hyperparameters of each algorithm. Optimize the performance of your chosen algorithm by tuning these parameters using techniques such as grid search or random search. This can significantly impact the performance and generalization ability of the model.

Ensemble Methods: Consider using ensemble methods, which combine multiple models (built with the same or with different algorithms) to improve overall performance. Ensemble methods can increase the accuracy and robustness of predictions by aggregating the outputs of individual models.

Domain Expertise: Consider your expertise and familiarity with different algorithms. If you have experience or domain knowledge in a particular algorithm, it may be a good choice as you can leverage your expertise to optimize its performance and understand its outputs better.

It’s important to note that choosing the right algorithm often requires experimentation and iteration. It’s not uncommon to try multiple algorithms and compare their performance before settling on the most suitable one. Additionally, as new algorithms and techniques are constantly being developed, it’s important to stay updated and explore emerging algorithms that may better suit your problem.

Once you have chosen the right algorithm for your machine learning app, it’s time to move on to the next step: training the model.

Training the Model

Training the model is a crucial step in the machine learning pipeline. This is where the selected algorithm learns from the labeled data and adjusts its internal parameters to make accurate predictions. Here are the key steps involved in training a machine learning model:

Splitting the Data: Set aside a testing set for the final evaluation, then divide the remaining data into training and validation sets. The training set is used to train the model, while the validation set is used to evaluate the model’s performance during training and make necessary adjustments.

Initializing the Model: Initialize the selected algorithm with a set of initial parameters. The specific initialization technique depends on the algorithm being used.

Feeding the Data to the Model: Feed the training data into the model. The model processes the input data and makes predictions based on its current set of parameters.

Calculating the Loss: Calculate the loss or error between the model’s predictions and the ground truth labels in the training data. The loss function measures how well the model is currently performing.

Updating the Parameters: Use an optimization algorithm, such as gradient descent, to update the model’s parameters based on the calculated loss. This step aims to minimize the loss and improve the model’s performance.

Iterating the Process: Repeat the process of feeding the data, calculating the loss, and updating the parameters for multiple iterations or epochs. This allows the model to gradually improve its predictions and learn the underlying patterns in the data.
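The initialize, predict, compute-loss, update, and repeat cycle described so far can be sketched for the simplest possible case: a one-feature linear model trained with batch gradient descent on mean squared error. The toy data, learning rate, and epoch count are assumptions chosen so that the example converges.

```python
def train_linear_model(xs, ys, learning_rate=0.05, epochs=1000):
    """Fit y = w * x + b by batch gradient descent on mean squared error."""
    w, b = 0.0, 0.0  # initialize the parameters
    n = len(xs)
    history = []
    for _ in range(epochs):
        predictions = [w * x + b for x in xs]              # feed the data forward
        errors = [p - y for p, y in zip(predictions, ys)]
        loss = sum(e * e for e in errors) / n              # mean squared error
        history.append(loss)
        # Gradients of the loss with respect to w and b.
        grad_w = 2 * sum(e * x for e, x in zip(errors, xs)) / n
        grad_b = 2 * sum(errors) / n
        w -= learning_rate * grad_w                        # update the parameters
        b -= learning_rate * grad_b
    return w, b, history

xs = [0.0, 1.0, 2.0, 3.0, 4.0]
ys = [1.0, 3.0, 5.0, 7.0, 9.0]  # generated from y = 2x + 1
w, b, history = train_linear_model(xs, ys)
```

On this noise-free data the learned `w` and `b` approach 2 and 1, and `history` records the loss falling from epoch to epoch. Real models and libraries follow the same loop with more parameters and more elaborate optimizers.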

Monitoring the Model: Monitor the model’s performance during training by evaluating its predictions on the validation set. This helps identify overfitting or underfitting issues and guides the adjustment of hyperparameters to optimize the model’s performance.

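Early Stopping: Implement early stopping to prevent overfitting. If the model’s performance on the validation set stops improving or starts to deteriorate for several consecutive epochs, stop the training process and keep the parameters from the best-performing epoch. This avoids both overfitting and wasting computing resources on a model that is no longer improving.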
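One common way to implement early stopping uses a patience counter, sketched below. `train_one_epoch` and `validation_loss` are hypothetical callables that you would supply. Here they are replaced by a simulated sequence of validation losses.

```python
def train_with_early_stopping(train_one_epoch, validation_loss, max_epochs=100, patience=3):
    """Stop once validation loss has not improved for `patience` consecutive epochs."""
    best_loss = float("inf")
    best_epoch = -1
    epochs_without_improvement = 0
    for epoch in range(max_epochs):
        train_one_epoch()
        loss = validation_loss()
        if loss < best_loss:
            best_loss, best_epoch = loss, epoch
            epochs_without_improvement = 0
            # A real implementation would also snapshot the model parameters here.
        else:
            epochs_without_improvement += 1
            if epochs_without_improvement >= patience:
                break
    return best_epoch, best_loss

# Simulated validation losses: improving at first, then getting worse.
simulated = iter([0.9, 0.7, 0.6, 0.65, 0.7, 0.8, 0.9])
best_epoch, best_loss = train_with_early_stopping(lambda: None, lambda: next(simulated))
```

In this run the best loss occurs at epoch 2 (counting from 0), and training stops three epochs later without consuming the final simulated value.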

Regularization: Apply regularization techniques, such as L1 or L2 regularization, to prevent overfitting and promote model generalization. Regularization adds a penalty term to the loss function, discouraging the model from overly relying on certain features or parameters.
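The penalty term can be written out explicitly. The notation is an assumption introduced here: $L(\theta)$ is the unregularized loss, $\theta_j$ are the model parameters, and $\lambda \ge 0$ controls the strength of the penalty.

```latex
L_{\text{L1}}(\theta) = L(\theta) + \lambda \sum_{j} \lvert \theta_j \rvert
\qquad
L_{\text{L2}}(\theta) = L(\theta) + \lambda \sum_{j} \theta_j^{2}
```

The L1 penalty tends to push some parameters to exactly zero, while the L2 penalty shrinks all parameters toward zero without usually eliminating them. Setting $\lambda = 0$ recovers the original loss.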

Model Evaluation: Finally, evaluate the trained model on the testing set, which contains unseen data, to assess its performance on new instances. This step provides a realistic estimate of the model’s accuracy and generalization ability.

Training a machine learning model requires careful attention to detail and experimentation. It’s important to strike a balance between underfitting and overfitting, ensuring that the model captures the underlying patterns in the data without memorizing the training samples. Properly training the model sets the stage for the next step: evaluating the model’s performance.

Evaluating the Model

Evaluating the performance of a trained machine learning model is essential to ensure its effectiveness and reliability. Here are key steps to follow when evaluating your model:

Performance Metrics: Select appropriate performance metrics based on the problem type and goals. Classification tasks often use metrics such as accuracy, precision, recall, and F1 score. For regression tasks, metrics like mean squared error (MSE) or R-squared can be used. Choose metrics that best capture the specific aspects of the problem you are solving.

Confusion Matrix: For classification problems, analyze the confusion matrix to get a detailed understanding of the model’s performance. The confusion matrix provides insights into true positives, true negatives, false positives, and false negatives, giving a deeper comprehension of the model’s accuracy and potential errors.
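For a binary problem, the four confusion-matrix counts and the metrics derived from them can be computed directly, as in this sketch. The function names and the toy predictions are assumptions. Returning 0.0 when a denominator is zero is a convention chosen here.

```python
def confusion_counts(y_true, y_pred, positive=1):
    """Return (tp, tn, fp, fn) for a binary classification task."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == positive and p == positive)
    tn = sum(1 for t, p in pairs if t != positive and p != positive)
    fp = sum(1 for t, p in pairs if t != positive and p == positive)
    fn = sum(1 for t, p in pairs if t == positive and p != positive)
    return tp, tn, fp, fn

def classification_metrics(y_true, y_pred, positive=1):
    """Compute accuracy, precision, recall, and F1 from the confusion counts."""
    tp, tn, fp, fn = confusion_counts(y_true, y_pred, positive)
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
    return {
        "accuracy": (tp + tn) / len(y_true),
        "precision": precision,
        "recall": recall,
        "f1": f1,
    }

y_true = [1, 1, 1, 0, 0, 0, 0, 1]
y_pred = [1, 0, 1, 0, 1, 0, 0, 1]
counts = confusion_counts(y_true, y_pred)
metrics = classification_metrics(y_true, y_pred)
```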

Cross-Validation: Use cross-validation techniques, such as k-fold cross-validation, to obtain a more robust estimate of the model’s performance. By dividing the data into multiple folds and training/evaluating the model on different combinations, you can reduce the impact of data variability and obtain a more reliable estimate of its performance.

Learning Curves: Analyze learning curves to observe how the model’s performance changes with respect to the amount of training data. Learning curves can help identify issues like underfitting (high bias) or overfitting (high variance) by analyzing performance trends as the model is trained on increasing amounts of data.

Overfitting and Underfitting Detection: Detect and address overfitting and underfitting issues, which can hinder the model’s ability to generalize to new data. Overfitting occurs when the model performs exceptionally well on the training data but fails to generalize to unseen data. Underfitting, on the other hand, occurs when the model is too simple to capture the underlying patterns. Adjusting the model’s complexity and regularization techniques can help mitigate these issues.

Comparison with Baseline Models: Compare the performance of your model with baseline models or existing solutions in the field. This provides context and allows you to assess whether your model yields better results. A good practice is to establish a benchmark and strive for performance improvements compared to existing solutions.
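A very simple baseline for classification is to always predict the most frequent class in the training data. The sketch below (hypothetical function name, toy labels) computes the accuracy such a baseline would reach. A trained model that cannot beat this number is not adding value.

```python
from collections import Counter

def majority_class_baseline(train_labels, test_labels):
    """Return the most common training label and its accuracy on the test labels."""
    majority_label, _ = Counter(train_labels).most_common(1)[0]
    correct = sum(1 for y in test_labels if y == majority_label)
    return majority_label, correct / len(test_labels)

baseline_label, baseline_accuracy = majority_class_baseline(
    train_labels=[0, 0, 0, 1],
    test_labels=[0, 1, 0, 0, 1],
)
```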

Interpretability and Explainability: Consider the interpretability and explainability of your model’s predictions, especially if your application involves high-stakes decisions. Some machine learning algorithms, such as decision trees or linear models, are more interpretable than others, like deep neural networks. Ensure you can explain how your model arrives at its predictions to gain trust and acceptance from stakeholders.

Error Analysis: Conduct thorough error analysis to understand the types of errors your model is making. Examine misclassified samples or predictions with high errors and consider if any patterns or biases exist. This analysis can guide future improvements and help uncover potential weaknesses or limitations of your model.

Evaluating your model’s performance is an ongoing process that requires iterative improvements and refinements. It helps you understand the strengths and weaknesses of your model, identify areas for enhancement, and guide decision-making processes as you move forward in building your machine learning app.

Improving the Model

Improving the performance of a machine learning model is an iterative process that involves fine-tuning various aspects to achieve better results. Here are key steps to take when seeking to enhance your model:

Feature Engineering: Revisit your feature selection or extraction process. Experiment with different feature combinations or create new features that may better capture the underlying patterns in the data. Consider domain knowledge or expert insights to guide this process.

Hyperparameter Tuning: Adjust the hyperparameters of your model to optimize its performance. Hyperparameters control the behavior and flexibility of the algorithm but are not learned from the data. Techniques such as grid search or random search can be employed to find the best set of hyperparameters.
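Grid search can be sketched in a few lines. `evaluate` stands for a caller-supplied routine that trains a model with the given hyperparameters and returns a validation score. The `toy_evaluate` function and the parameter names below are made up so that the example runs on its own.

```python
from itertools import product

def grid_search(param_grid, evaluate):
    """Try every combination in param_grid and return the best-scoring one."""
    names = list(param_grid)
    best_params, best_score = None, float("-inf")
    for values in product(*(param_grid[name] for name in names)):
        params = dict(zip(names, values))
        score = evaluate(params)  # higher is better
        if score > best_score:
            best_params, best_score = params, score
    return best_params, best_score

# Stand-in for a real train-and-validate routine (hypothetical scoring rule).
def toy_evaluate(params):
    return -(params["learning_rate"] - 0.1) ** 2 - (params["depth"] - 3) ** 2

best_params, best_score = grid_search(
    {"learning_rate": [0.01, 0.1, 1.0], "depth": [2, 3, 4]},
    toy_evaluate,
)
```

The number of combinations grows multiplicatively with each added hyperparameter, which is why random search, which samples a fixed number of combinations, is often preferred for larger search spaces.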

Model Selection: Consider using different algorithms or ensemble methods to improve your model’s performance. Compare the results of various models, including both conventional and state-of-the-art algorithms, to identify the most suitable one for your problem.

Regularization Techniques: If your model is overfitting, apply appropriate regularization techniques, such as L1 or L2 regularization, to reduce complexity and prevent excessive reliance on specific features or parameters. Regularization helps control model complexity and improve generalization capabilities.

Data Augmentation: If you have a small dataset, consider augmenting your data by generating additional synthetic samples. Techniques such as rotation, flipping, or adding noise can enrich the training data and improve the model’s ability to generalize to new instances.

Ensemble Methods: Explore ensemble methods that combine multiple models or predictions to improve overall performance. Techniques such as bagging, boosting, or stacking can be effective in reducing bias, variance, or error rates, leading to more robust and accurate predictions.
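The aggregation step shared by many ensembles can be illustrated with majority voting. The three threshold "models" below are toy stand-ins invented for this sketch. In bagging, the voters would be models trained on different resamples of the data.

```python
from collections import Counter

def majority_vote(models, x):
    """Return the class predicted by most models (ties go to the earliest vote)."""
    votes = [model(x) for model in models]
    return Counter(votes).most_common(1)[0][0]

# Three toy classifiers that disagree near their decision thresholds.
models = [
    lambda x: int(x > 0.3),
    lambda x: int(x > 0.5),
    lambda x: int(x > 0.7),
]

high = majority_vote(models, 0.6)  # votes: 1, 1, 0
low = majority_vote(models, 0.4)   # votes: 1, 0, 0
```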

Model Architectures: If you are working with deep learning models, experiment with different architectures, layer sizes, or activation functions to optimize performance. Dropout can be used to reduce overfitting, and batch normalization, although mainly a way to stabilize and speed up training, often has a mild regularizing effect as well.

Data Balancing: If your dataset is imbalanced, balance the data by oversampling the minority class, undersampling the majority class, or using more advanced techniques such as SMOTE. This helps prevent bias and ensures that the model does not favor the majority class disproportionately.

Refining Evaluation: Revisit your evaluation metrics and test various thresholds or performance measures to better capture the desired outcome. Consider metrics specific to your application’s requirements, such as precision, recall, or area under the ROC curve (AUC).

Iterative Process: Improving a model is an iterative process. Continually assess the model’s performance, make adjustments, and implement feedback loops to iterate on the process. Utilize tools such as A/B testing or online learning to continuously monitor and enhance your model based on new data or user feedback.

Remember, improving a model is not about finding a perfect solution but rather striving for progressive enhancements. Experimentation, creativity, and a deep understanding of the problem domain are key to achieving better results and building a high-performing machine learning app.

Implementing the Model in an App

Integrating the trained machine learning model into an application is the final step in building a machine learning app. Here are key considerations when incorporating the model into your app:

Integration: Determine how the machine learning model will fit into your existing application architecture. Decide whether the model will be implemented as a standalone component or integrated into an existing module.

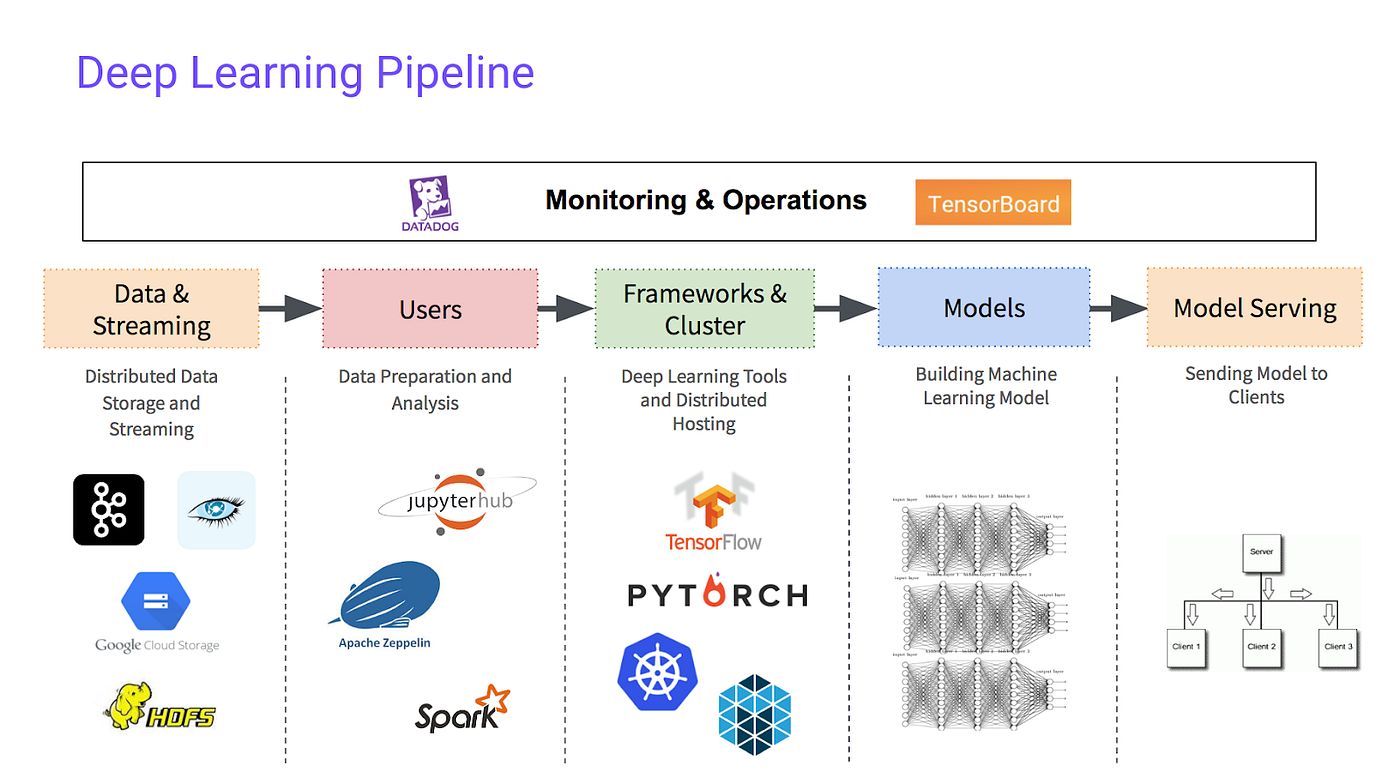

Model Deployment: Depending on your requirements, you can choose between different deployment options. You can deploy the model on the cloud, on-premises, or use a combination of both. Consider factors such as scalability, performance, and cost when making this decision.

API Development: Build an application programming interface (API) to expose the model’s functionality to your application. This allows other components or external systems to interact with your model and make predictions. Consider security measures and access controls to protect your model and its data.

Data Preprocessing: Ensure that the data preprocessing steps used during training are replicated in the app to prepare new incoming data for prediction. Implement the necessary transformations and encoding techniques to ensure consistency.
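One way to keep training and serving consistent is to save the fitted preprocessing parameters alongside the model and reload them in the app, rather than recomputing them on incoming data. The sketch below assumes min-max scaling and JSON as the storage format. Both are illustrative choices.

```python
import json

def fit_min_max(values):
    """Learn scaling parameters from the training data."""
    return {"min": min(values), "max": max(values)}

def apply_min_max(value, params):
    """Scale one value with previously fitted parameters."""
    span = params["max"] - params["min"]
    if span == 0:
        return 0.0  # constant feature: avoid dividing by zero
    return (value - params["min"]) / span

# Training time: learn the parameters and serialize them next to the model.
params = fit_min_max([10.0, 20.0, 30.0])
saved = json.dumps(params)

# Serving time: reload the same parameters instead of recomputing them.
loaded = json.loads(saved)
scaled = apply_min_max(25.0, loaded)
```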

Input Validation: Validate and sanitize the input data to ensure it meets the model’s expectations and does not cause errors or security vulnerabilities. Handle potential edge cases, missing values, or incorrect data formats appropriately to avoid unexpected behavior.
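Input validation for a prediction request might look like the following sketch. The feature names and allowed ranges in `EXPECTED_FEATURES` are hypothetical. A real app would derive them from the data the model was trained on.

```python
import math

# Hypothetical schema: feature name -> (minimum, maximum) allowed value.
EXPECTED_FEATURES = {"age": (0, 120), "income": (0, 10_000_000)}

def validate_input(payload):
    """Return (clean_features, errors) for one prediction request."""
    clean, errors = {}, []
    if not isinstance(payload, dict):
        return clean, ["payload must be a JSON object"]
    for name, (low, high) in EXPECTED_FEATURES.items():
        value = payload.get(name)
        # bool is a subclass of int in Python, so reject it explicitly.
        if isinstance(value, bool) or not isinstance(value, (int, float)):
            errors.append(f"{name}: missing or not a number")
        elif math.isnan(value) or not low <= value <= high:
            errors.append(f"{name}: out of range [{low}, {high}]")
        else:
            clean[name] = float(value)
    return clean, errors

ok_features, ok_errors = validate_input({"age": 35, "income": 52000})
bad_features, bad_errors = validate_input({"age": -1})
```

Returning a list of errors instead of raising on the first problem lets the app report everything that is wrong with a request in a single response.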

Model Monitoring: Implement monitoring mechanisms to track the performance and behavior of the deployed model. Continuously evaluate its predictions, monitor data drift or concept drift, and be prepared to retrain or update the model when necessary.
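As a rough illustration of drift monitoring, the sketch below flags a numeric feature whose recent mean has moved far from its training mean. This is a deliberately crude heuristic with an assumed threshold of three standard errors. Production systems typically compare whole distributions and track many features at once.

```python
from statistics import mean, stdev

def mean_shift_alert(training_values, live_values, threshold=3.0):
    """Flag drift when the live mean is many standard errors from the training mean."""
    mu = mean(training_values)
    sigma = stdev(training_values)
    if sigma == 0 or not live_values:
        raise ValueError("need varied training data and at least one live value")
    standard_error = sigma / len(live_values) ** 0.5
    z = abs(mean(live_values) - mu) / standard_error
    return z > threshold, z

training = [10, 11, 9, 10, 12, 8, 10, 11, 9, 10]
drifted, drift_z = mean_shift_alert(training, [14, 15, 13, 16])
stable, stable_z = mean_shift_alert(training, [10, 9, 11, 10])
```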

Model Versioning and Rollback: Implement a versioning system to keep track of model changes and modifications. This allows you to roll back to a previous version if issues arise or if a newer version does not perform as expected. Proper version control ensures reliability and reproducibility.

User Interface: Design a user-friendly interface that interacts with the model’s predictions. Provide clear instructions, informative visualizations, or feature explanations to enhance user understanding and trust in the app’s predictions.

Performance Optimization: Optimize the app’s performance by considering factors like speed, response time, and resource utilization. Techniques like caching, parallelization, or model quantization can help improve the app’s efficiency and responsiveness.
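Of the techniques mentioned, caching is the easiest to sketch. The example below uses `functools.lru_cache` from the Python standard library. `slow_model_predict` is a hypothetical stand-in for an expensive model call.

```python
from functools import lru_cache

def slow_model_predict(features):
    """Stand-in for an expensive model call (hypothetical scoring rule)."""
    return sum(features) > 1.0

@lru_cache(maxsize=1024)
def cached_predict(features: tuple):
    """Cache predictions; inputs must be hashable, hence a tuple."""
    return slow_model_predict(features)

first = cached_predict((0.4, 0.9))   # computed by the model
second = cached_predict((0.4, 0.9))  # served from the cache
```

Cached predictions become stale when the model is replaced, so the cache should be cleared (for example with `cached_predict.cache_clear()`) whenever a new model version is deployed.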

Testing and Quality Assurance: Implement thorough testing methodologies to ensure the app’s reliability and accuracy. Perform unit tests, integration tests, and end-to-end tests to identify and resolve any issues or bugs. Validate the app’s performance against expected outputs and expected user experiences.

Integrating a machine learning model into an app requires technical expertise, attention to detail, and continuous monitoring. By carefully integrating the model and the app and ensuring a smooth user experience, you can deliver a powerful, intelligent application that leverages the benefits of machine learning.

Conclusion

Building a machine learning app is an exciting and rewarding endeavor that allows you to harness the power of data and artificial intelligence. Throughout this journey, we have explored the key steps involved in creating a machine learning app, starting from understanding the basics of machine learning to implementing the trained model into an application.

We began by understanding the fundamentals of machine learning, including the various types of learning approaches and the applications of this technology in different industries. We then delved into gathering high-quality data and preparing it for training, ensuring that the data is clean, relevant, and representative of the problem you are solving.

Next, we discussed the process of choosing the right algorithm for your machine learning app, considering the problem type, data complexity, and algorithm performance. We learned how to train and evaluate the model, iteratively improving its performance by fine-tuning parameters, feature engineering, and employing regularization and ensemble techniques.

Once the model was trained and evaluated, we explored the crucial steps of implementing it into an application, considering factors such as integration, data preprocessing, input validation, and user interface design. We highlighted the importance of performance optimization, testing, and continuous monitoring to ensure the reliability and efficiency of the app.

By following these steps and considering the best practices throughout the process, you can build a machine learning app that leverages the capabilities of artificial intelligence to make accurate predictions, solve complex problems, and deliver value to users.

However, it’s important to note that the field of machine learning is constantly evolving, with new algorithms, techniques, and trends emerging regularly. As you continue your journey, it’s essential to stay updated, experiment with new approaches, and refine your skills to keep pace with advancements in this exciting field.

Building a machine learning app requires a combination of technical skills, domain knowledge, creativity, and dedication. By staying curious, embracing challenges, and continuously learning, you can unlock the potential of machine learning and create innovative and impactful applications.