Introduction

Welcome to the fascinating world of machine learning! Over the past few decades, machine learning has emerged as one of the most transformative technologies of our time. It has reshaped industries, empowered businesses, and changed the way we live and work.

Machine learning, in simple terms, refers to the ability of computer systems to learn and make intelligent decisions without explicit programming. It is a subfield of artificial intelligence that focuses on the development of algorithms that enable machines to learn from data and improve their performance over time.

With the exponential growth of data and advancements in computing power, machine learning has seen remarkable progress, enabling computers to perform tasks that were once considered the realm of human intelligence. From speech recognition to image classification, from autonomous vehicles to recommendation systems, machine learning is now a ubiquitous presence in our daily lives.

The journey of machine learning dates back to the early days of computing when scientists and researchers began exploring the idea of creating machines that could learn from data. Over the years, significant milestones and breakthroughs have paved the way for the development of sophisticated machine learning models and algorithms.

In this article, we will delve into the fascinating history of machine learning, exploring its early beginnings, the birth of artificial neural networks, the emergence of statistical learning theory, the development of support vector machines, and the revolution of deep learning. We will also examine the current state of machine learning, its applications, and its profound impact on various industries.

Join us on this enlightening journey as we unravel the incredible story of how machine learning has evolved and shaped the course of technology as we know it today.

The Early Beginnings of Machine Learning

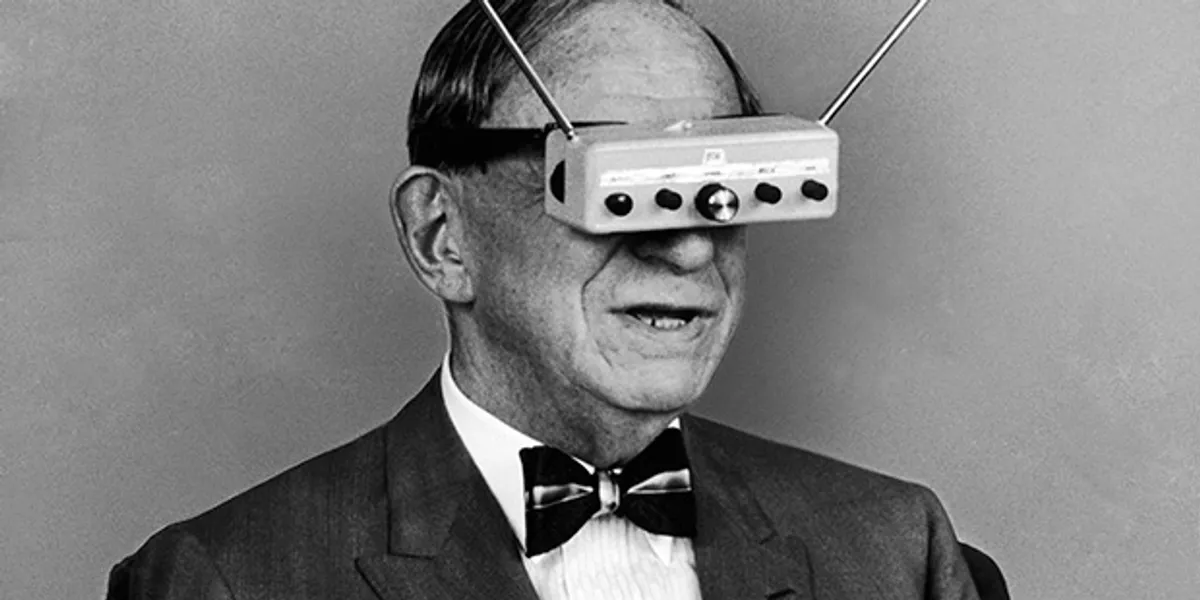

The roots of machine learning can be traced back to the early days of computing, when scientists and researchers began to explore the idea of machines that could learn and make decisions autonomously. The term “machine learning” itself was popularized by Arthur Samuel in 1959, in his work on a checkers-playing program, but the groundwork for this revolutionary technology was laid even earlier.

In the 1950s and 1960s, the field of artificial intelligence (AI) emerged, with pioneers like Alan Turing and Marvin Minsky, who sought to create machines that could mimic human intelligence. These early AI researchers were interested in developing algorithms and techniques that would enable computers to learn from data and improve their performance over time.

At this early stage, much of AI research focused on symbolic reasoning and knowledge representation. Researchers developed algorithms that manipulated symbols and logical expressions, laying the foundation for later advances in machine learning.

One notable breakthrough during this time was the invention of the Perceptron, a type of artificial neural network. Developed by Frank Rosenblatt in 1957, the Perceptron was inspired by the structure and function of the human brain. It consisted of interconnected artificial neurons that could process and transmit information, and it paved the way for more sophisticated neural network models.

Another significant contribution to the early development of machine learning was the field of pattern recognition. Researchers began exploring techniques for automatically identifying and classifying patterns in data. This included the development of algorithms for character recognition, speech recognition, and handwriting recognition.

As computing power increased and more data became available, researchers started experimenting with statistical techniques for machine learning. Symbolic systems of the 1960s and 1970s, such as the DENDRAL expert system co-developed by Joshua Lederberg and Terry Winograd’s SHRDLU language-understanding program, showed what hand-built knowledge could achieve, and work in the 1970s began combining symbolic reasoning with probabilistic models. These hybrid approaches allowed machines to reason under uncertainty and make decisions based on probabilistic outcomes.

Despite these early advancements, machine learning was still in its infancy, with limited practical applications. However, the groundwork and principles established during this time laid the foundation for future breakthroughs in the field.

In the next sections, we will explore how machine learning continued to evolve with the birth of artificial neural networks, the emergence of statistical learning theory, and the development of support vector machines. Stay tuned for the exciting journey into the history of machine learning.

The Birth of Artificial Neural Networks

In the late 1950s and early 1960s, a breakthrough occurred in the field of machine learning with the birth of artificial neural networks. Inspired by the structure and function of the human brain, researchers began developing algorithms and models that mimicked the behavior of biological neurons.

The early developments in artificial neural networks primarily focused on the Perceptron, the neural network model introduced by Frank Rosenblatt in 1957. The Perceptron consisted of artificial neurons, or “nodes,” connected in layers. Each node computed a weighted sum of its inputs and produced a binary output depending on whether that sum exceeded a threshold. Outputs were passed on to the next layer, gradually transforming the inputs into a final output, although in Rosenblatt’s design only the weights feeding the output units were adjusted during learning.

Rosenblatt’s work on the Perceptron laid the foundation for future advancements in artificial neural networks. He demonstrated that the Perceptron could be trained to make binary classifications by adjusting the weights of its connections whenever it misclassified an example. Because it learns from examples labeled with the correct answer, this training process is an early instance of what is now called “supervised learning,” and it marked a significant milestone in the field of machine learning.
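The weight-adjustment procedure can be sketched in a few lines of Python with NumPy. This is a minimal sketch, assuming inputs stored as rows of an array, labels in {0, 1}, a learned bias term, and a learning rate of 1; `train_perceptron` and `predict` are hypothetical helper names. Whenever the unit misclassifies an example, its weights move toward that input (if the answer should have been 1) or away from it (if it should have been 0).

```python
import numpy as np


def train_perceptron(X, y, epochs=100, lr=1.0):
    """Perceptron learning rule for labels in {0, 1} (hypothetical helper)."""
    w = np.zeros(X.shape[1])
    b = 0.0
    for _ in range(epochs):
        mistakes = 0
        for xi, target in zip(X, y):
            # Threshold unit: output 1 if the weighted sum exceeds 0.
            pred = 1 if xi @ w + b > 0 else 0
            # The update is zero when the prediction is already correct.
            update = lr * (target - pred)
            if update != 0:
                w += update * xi
                b += update
                mistakes += 1
        if mistakes == 0:  # a full pass with no errors: training has converged
            break
    return w, b


def predict(w, b, X):
    return (X @ w + b > 0).astype(int)


# Logical AND is linearly separable, so the rule converges.
X = np.array([[0, 0], [0, 1], [1, 0], [1, 1]], dtype=float)
y = np.array([0, 0, 0, 1])
w, b = train_perceptron(X, y)
print(predict(w, b, X))  # [0 0 0 1]
```

Because AND is linearly separable, the loop stops once an entire pass produces no mistakes; on data that no line can separate, it would simply run out of epochs.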

However, the initial excitement around artificial neural networks was short-lived. In 1969, Marvin Minsky and Seymour Papert published a book called “Perceptrons” that highlighted the limitations of the Perceptron. They showed that a single-layer Perceptron can only separate classes that a straight line (more generally, a hyperplane) can divide, so it cannot compute functions such as XOR (exclusive OR). The book is widely credited with dampening enthusiasm for artificial neural networks.
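Why XOR defeats a single threshold unit takes only a short argument. A unit outputs 1 when w1·x1 + w2·x2 + b > 0. XOR would need b ≤ 0 (for input 0,0), w2 + b > 0 and w1 + b > 0 (for 0,1 and 1,0), and w1 + w2 + b ≤ 0 (for 1,1). Adding the two middle inequalities gives w1 + w2 + 2b > 0, so w1 + w2 + b > −b ≥ 0, which contradicts the last condition. The Python sketch below is a sanity check rather than a proof: it searches a small integer grid of weights (an arbitrary, illustrative range) and finds settings that compute AND but none that compute XOR. `matching_weights` is a hypothetical helper name.

```python
import itertools

INPUTS = [(0, 0), (0, 1), (1, 0), (1, 1)]
AND = (0, 0, 0, 1)
XOR = (0, 1, 1, 0)


def matching_weights(truth_table, grid=range(-3, 4)):
    """All (w1, w2, b) on an integer grid whose threshold unit reproduces truth_table."""
    found = []
    for w1, w2, b in itertools.product(grid, repeat=3):
        outputs = tuple(int(w1 * x1 + w2 * x2 + b > 0) for x1, x2 in INPUTS)
        if outputs == truth_table:
            found.append((w1, w2, b))
    return found


print(len(matching_weights(AND)) > 0)  # True
print(len(matching_weights(XOR)))      # 0
```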

Despite this setback, research on artificial neural networks continued in academia, and in the 1980s new breakthroughs led to their resurgence. John Hopfield introduced an influential recurrent network model in 1982, and in 1986 David Rumelhart, Geoffrey Hinton, and Ronald Williams popularized “backpropagation,” a technique for training multi-layer neural networks.

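Backpropagation transformed the field by making it practical to train networks with hidden layers, which can learn from complex, high-dimensional data. It first calculates the error between the network’s predicted output and the desired output, then applies the chain rule of calculus to propagate that error backward through the layers, giving the gradient of the error with respect to every weight. Each weight is then adjusted by a small step in the direction that reduces the error (gradient descent). With backpropagation, neural networks became capable of solving a wide range of complex problems, including ones like XOR that defeat a single-layer Perceptron.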
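The procedure can be sketched with NumPy for a tiny network. This is a minimal illustration, assuming one hidden layer of sigmoid units, a sigmoid output, half mean-squared-error loss, and plain full-batch gradient descent. The helper names, layer size, learning rate and epoch count are hypothetical choices, and the training data is XOR, the function a single-layer Perceptron cannot compute. Whether training reaches a near-zero error on XOR can depend on the random initialization.

```python
import numpy as np


def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))


def forward(params, X):
    W1, b1, W2, b2 = params
    h = sigmoid(X @ W1 + b1)       # hidden-layer activations
    y_hat = sigmoid(h @ W2 + b2)   # network output
    return h, y_hat


def loss(params, X, y):
    _, y_hat = forward(params, X)
    return 0.5 * np.mean((y_hat - y) ** 2)


def backprop(params, X, y):
    """Gradients of `loss` with respect to each parameter, via the chain rule."""
    W1, b1, W2, b2 = params
    h, y_hat = forward(params, X)
    n = X.shape[0]
    # Error signal at the output pre-activation: dL/dy_hat * sigmoid'(z2).
    delta2 = (y_hat - y) / n * y_hat * (1 - y_hat)
    dW2 = h.T @ delta2
    db2 = delta2.sum(axis=0)
    # Propagate the error backward through W2 and the hidden sigmoid.
    delta1 = (delta2 @ W2.T) * h * (1 - h)
    dW1 = X.T @ delta1
    db1 = delta1.sum(axis=0)
    return [dW1, db1, dW2, db2]


def train_xor(hidden=4, lr=2.0, epochs=5000, seed=0):
    rng = np.random.default_rng(seed)
    X = np.array([[0, 0], [0, 1], [1, 0], [1, 1]], dtype=float)
    y = np.array([[0], [1], [1], [0]], dtype=float)
    params = [rng.normal(size=(2, hidden)), np.zeros(hidden),
              rng.normal(size=(hidden, 1)), np.zeros(1)]
    history = [loss(params, X, y)]
    for _ in range(epochs):
        grads = backprop(params, X, y)
        # Gradient descent: move each parameter against its gradient.
        params = [p - lr * g for p, g in zip(params, grads)]
        history.append(loss(params, X, y))
    return params, history


params, history = train_xor()
print(history[0], history[-1])  # loss before and after training
```

A quick way to validate hand-written backpropagation like this is to compare its gradients against finite differences of `loss`, nudging each weight up and down by a tiny amount.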

In the late 1980s and early 1990s, artificial neural networks gained popularity and found applications in various domains, including image recognition, speech processing, and natural language understanding. However, the limitations of computing power at the time constrained their potential impact.

It was not until the late 2000s, with the advent of more powerful hardware and the accumulation of vast amounts of training data, that artificial neural networks truly began to shine, setting the stage for the rise of deep learning. In the meantime, the 1990s brought another major development: the emergence of statistical learning theory.

Stay tuned for the next sections, where we will explore these exciting milestones and delve into the evolution of machine learning.

The Emergence of Statistical Learning Theory

In the 1990s, a new era of machine learning emerged as statistical learning theory, whose foundations date back to work in the 1960s and 1970s, came to prominence. This branch of machine learning provides a mathematical framework for analyzing when, and how well, a model learned from a finite sample of data will make accurate predictions on new data.

One of the key figures in the development of statistical learning theory is Vladimir Vapnik. With his colleagues, Vapnik also developed support vector machines (SVMs), a class of algorithms that use statistical principles to construct decision boundaries and make predictions.

At the heart of statistical learning theory is the concept of minimizing the expected prediction error. The theory emphasizes the trade-off between model complexity (the ability to fit the training data) and generalization (the ability to make accurate predictions on unseen data). The goal is to find a model that achieves the best balance between these two factors.
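The trade-off can be seen by fitting polynomials of increasing degree to a small noisy sample. This is a sketch under stated assumptions: the data (a sine curve plus Gaussian noise), the sample sizes, and the degrees are arbitrary illustrative choices, `errors_by_degree` is a hypothetical helper, and the fitting uses NumPy's `Polynomial.fit` least-squares routine. Training error can only fall as the degree (the model's capacity) rises, while error on fresh data from the same curve typically falls at first and then rises once the model starts fitting the noise.

```python
import numpy as np
from numpy.polynomial import Polynomial


def true_fn(x):
    return np.sin(2 * np.pi * x)


def errors_by_degree(degrees, n_train=10, n_test=200, noise=0.2, seed=0):
    """Train/test mean squared error of least-squares polynomial fits."""
    rng = np.random.default_rng(seed)
    x_train = np.linspace(0, 1, n_train)
    y_train = true_fn(x_train) + rng.normal(0, noise, n_train)
    x_test = rng.uniform(0, 1, n_test)  # fresh data from the same curve
    y_test = true_fn(x_test) + rng.normal(0, noise, n_test)

    results = {}
    for d in degrees:
        model = Polynomial.fit(x_train, y_train, deg=d)
        train_mse = np.mean((model(x_train) - y_train) ** 2)
        test_mse = np.mean((model(x_test) - y_test) ** 2)
        results[d] = (train_mse, test_mse)
    return results


for d, (train_mse, test_mse) in errors_by_degree(range(10)).items():
    print(f"degree {d}: train MSE {train_mse:.4f}, test MSE {test_mse:.4f}")
```

With ten training points, the degree-9 polynomial passes through every one of them, so its training error is essentially zero even though it has learned the noise along with the curve.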

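Vapnik and his colleagues addressed this trade-off by introducing the concept of “structural risk minimization” (SRM). SRM considers a nested sequence of model classes of increasing capacity and selects the one that minimizes a bound on the expected error, which combines the training error with a penalty for capacity. This guards against overfitting, which occurs when a model becomes so complex that it fits noise in the training data and performs poorly on new data.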
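For reference, one commonly quoted form of the bound that SRM works with is Vapnik's VC bound for binary classification with 0-1 loss. For a model class of VC dimension $h$ (a measure of capacity) trained on $N$ examples, with probability at least $1 - \eta$, every model $f$ in the class satisfies the inequality below, where $R$ is the expected error and $R_{\text{emp}}$ the training error. SRM picks the class in the nested sequence whose right-hand side is smallest: larger $h$ usually lowers $R_{\text{emp}}$ but raises the square-root capacity term.

$$
R(f) \le R_{\text{emp}}(f) + \sqrt{\frac{h\left(\ln\frac{2N}{h} + 1\right) - \ln\frac{\eta}{4}}{N}}
$$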

The development of statistical learning theory had a profound impact on the field of machine learning. It provided a theoretical framework for understanding the principles behind learning from data and introduced rigorous methods for model selection and evaluation.

With the emergence of statistical learning theory, machine learning researchers gained a deeper understanding of the complexities involved in training and optimizing machine learning models. This led to the development of new algorithms and techniques, paving the way for advancements in various domains, including natural language processing, computer vision, and data mining.

Moreover, learning theory influenced ensemble learning techniques. Boosting, for example, grew out of theoretical work on whether many weak learners could be combined into a single strong one, and it led to later methods such as gradient boosting; random forests instead average many randomized decision trees. These techniques combine multiple models to make more accurate predictions and have become widely used in applied machine learning.
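The idea behind gradient boosting can be sketched in NumPy. It starts from a constant prediction, then repeatedly fits a small model to the current residuals (the negative gradient of the squared-error loss) and adds a damped copy of it. This is a minimal sketch, assuming a single numeric feature, one-split regression trees (“stumps”) as the weak learners, and a fixed learning rate. All function names are hypothetical, and production libraries use deeper trees and many refinements.

```python
import numpy as np


def fit_stump(x, r):
    """Least-squares regression stump on one feature: (threshold, left value, right value)."""
    best_sse, best = np.inf, (None, r.mean(), r.mean())
    for t in np.unique(x)[:-1]:        # candidate split points
        left, right = r[x <= t], r[x > t]
        sse = ((left - left.mean()) ** 2).sum() + ((right - right.mean()) ** 2).sum()
        if sse < best_sse:
            best_sse, best = sse, (t, left.mean(), right.mean())
    return best


def stump_predict(stump, x):
    t, left_value, right_value = stump
    if t is None:                       # no split possible: constant prediction
        return np.full(x.shape, left_value, dtype=float)
    return np.where(x <= t, left_value, right_value)


def gradient_boost(x, y, n_stages=50, lr=0.1):
    """Gradient boosting for squared-error loss with stumps as weak learners."""
    base = y.mean()
    pred = np.full(y.shape, base, dtype=float)
    stumps, history = [], [np.mean((y - pred) ** 2)]
    for _ in range(n_stages):
        residuals = y - pred            # negative gradient of 0.5 * (y - pred)**2
        stump = fit_stump(x, residuals)
        pred = pred + lr * stump_predict(stump, x)
        stumps.append(stump)
        history.append(np.mean((y - pred) ** 2))
    return base, stumps, history


def boosted_predict(base, stumps, x, lr=0.1):
    pred = np.full(x.shape, base, dtype=float)
    for stump in stumps:
        pred = pred + lr * stump_predict(stump, x)
    return pred


x = np.linspace(0, 6, 60)
y = np.sin(x)
base, stumps, history = gradient_boost(x, y)
print(f"training MSE: {history[0]:.4f} -> {history[-1]:.4f}")
```

With squared-error loss and a learning rate strictly between 0 and 2, adding a damped least-squares stump can never raise the training error, which is why the recorded history never goes up.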

As computing power continued to increase and the availability of data expanded, statistical learning theory played a crucial role in shaping the direction of machine learning research. It continues to be a fundamental part of the field, providing the theoretical foundation for understanding the statistical properties of data and guiding the development of new algorithms.

In the next section, we will explore the development of support vector machines (SVMs), a key application of statistical learning theory that has had a significant impact on machine learning.

The Development of Support Vector Machines

In the 1990s, the field of machine learning witnessed a significant breakthrough with the development of support vector machines (SVMs), the best-known practical outcome of statistical learning theory.

The development of SVMs can be attributed to the work of Vladimir Vapnik and his colleagues, who built upon the foundations of statistical learning theory. They introduced the notion of support vectors, the data points that lie closest to the decision boundary between different classes. SVMs aim to find the decision boundary that maximizes the margin, that is, the distance between the boundary and the closest training points of each class (the support vectors).
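A minimal sketch of the maximum-margin idea in NumPy, assuming a linear SVM (no kernel) trained by full-batch subgradient descent on the soft-margin objective (λ/2)‖w‖² + mean(max(0, 1 − yᵢ(w·xᵢ + b))), with labels in {−1, +1}. This is a simple stand-in, not the quadratic-programming solvers SVM software typically uses. The toy points, λ, learning rate and epoch count are illustrative assumptions, and `train_linear_svm` is a hypothetical helper. The points whose margin yᵢ(w·xᵢ + b) is smallest sit closest to the boundary and play the role of support vectors.

```python
import numpy as np


def train_linear_svm(X, y, lam=0.01, lr=0.01, epochs=5000):
    """Subgradient descent on (lam/2)*||w||^2 + mean hinge loss; labels in {-1, +1}."""
    n, d = X.shape
    w = np.zeros(d)
    b = 0.0
    for _ in range(epochs):
        margins = y * (X @ w + b)
        active = margins < 1  # points inside the margin or misclassified
        grad_w = lam * w - (y[active][:, None] * X[active]).sum(axis=0) / n
        grad_b = -y[active].sum() / n
        w -= lr * grad_w
        b -= lr * grad_b
    return w, b


# Two linearly separable groups, symmetric about the line x1 + x2 = 0.
X = np.array([[-2, -2], [-3, -1], [-1, -3], [-2.5, -2.5],
              [2, 2], [3, 1], [1, 3], [2.5, 2.5]], dtype=float)
y = np.array([-1, -1, -1, -1, 1, 1, 1, 1], dtype=float)

w, b = train_linear_svm(X, y)
margins = y * (X @ w + b)
print("w =", w, "b =", b)
print("predictions:", np.sign(X @ w + b))
print("margin width 2/||w|| =", 2 / np.linalg.norm(w))
# The six points on the lines x1 + x2 = -4 and x1 + x2 = 4 have the smallest
# margins; they are the ones acting as support vectors.
print("closest points:\n", X[np.argsort(margins)[:6]])
```

With a fixed step size the solution jitters slightly around the optimum, so the printed margin width only approximates the true maximum (the distance between the lines x1 + x2 = ±4).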

One of the key advantages of SVMs is their ability to handle high-dimensional data effectively. Through a mathematical technique called the kernel trick, SVMs can implicitly map data points into higher-dimensional feature spaces: a kernel function computes inner products in that space directly from the original inputs, so the mapped vectors never have to be constructed. This lets SVMs capture complex, nonlinear relationships while keeping computation manageable.
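The kernel trick can be checked numerically with the degree-2 polynomial kernel k(x, z) = (x · z)², which for 2-D inputs equals an ordinary dot product after the explicit mapping φ(x) = (x₁², √2·x₁x₂, x₂²). The kernel produces the same number without ever building φ(x). This is a small illustrative sketch; the function names and sample vectors are arbitrary choices.

```python
import numpy as np


def poly2_kernel(x, z):
    """Homogeneous degree-2 polynomial kernel, computed in the original 2-D space."""
    return np.dot(x, z) ** 2


def phi(x):
    """Explicit 3-D feature map whose dot product the kernel reproduces."""
    x1, x2 = x
    return np.array([x1 ** 2, np.sqrt(2) * x1 * x2, x2 ** 2])


x = np.array([1.0, 2.0])
z = np.array([3.0, -1.0])
print(poly2_kernel(x, z))       # 1.0, since (1*3 + 2*(-1))**2 = 1
print(np.dot(phi(x), phi(z)))   # same value, up to floating-point rounding
```

For higher-degree polynomials and more input dimensions, the explicit feature space grows combinatorially while the kernel still costs a single dot product, which is what makes the trick worthwhile.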

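SVMs are also relatively robust to noise. The boundary depends only on the support vectors, so points far from it have no influence, and the widely used soft-margin formulation allows some points to fall inside the margin or on the wrong side, at a penalty controlled by a regularization parameter (usually called C). Outliers near the boundary can still shift the solution, so this parameter has to be tuned.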

The development and popularity of SVMs brought about significant advancements in the field of machine learning. Researchers and practitioners began applying SVMs to a wide range of applications, including classification, regression, and outlier detection.

SVMs have been successfully used in domains such as text classification, image recognition, and bioinformatics. They have performed particularly well on problems with complex decision boundaries and limited training data.

The success and impact of SVMs can be attributed to their solid mathematical foundations. Training an SVM is a convex quadratic programming problem, so any local optimum is also the global one: a solver that converges finds the maximum-margin boundary for the given training data and hyperparameters.

Moreover, SVMs inspired the broader development of kernel methods, which replace inner products with kernel functions so that otherwise linear algorithms can work in nonlinear feature spaces. These methods have found widespread application in various machine learning tasks.

Over the years, faster training algorithms and growing computing power have made SVMs practical on larger datasets, although kernel SVMs remain costly when the number of training examples becomes very large. Researchers continue to explore variations and improvements to the original SVM formulation, such as more flexible loss functions and online learning techniques.

As we move forward in the exciting history of machine learning, the next section will take us on a journey through the revolution of deep learning, a breakthrough that has transformed the field and unleashed unprecedented capabilities.

The Revolution of Deep Learning

Deep learning has had an extraordinary impact on the field of machine learning. A subfield of machine learning (and therefore of artificial intelligence), it has transformed the way computers process and understand data, enabling remarkable advances in areas such as computer vision, natural language processing, and speech recognition.

Deep learning is based on the concept of neural networks, which are inspired by the structure and function of the human brain. While neural networks have been around since the 1950s, it wasn’t until the late 2000s and early 2010s that deep learning experienced a breakthrough.

What sets deep learning apart from traditional machine learning approaches is the ability to learn hierarchical representations of data. Deep neural networks consist of multiple layers of interconnected artificial neurons, with each layer building upon the previous one to extract increasingly complex features. By learning these hierarchical representations, deep learning models can capture intricate patterns and relationships in the data.

A key factor in the success of deep learning is the availability of large-scale datasets and advancements in computing power. Deep neural networks typically need large amounts of labeled training data, and GPUs, which perform the many parallel matrix operations involved, have made it possible to process these huge datasets efficiently.

The breakthrough moment for deep learning came in 2012, when a deep convolutional neural network (CNN) called AlexNet won the ImageNet Large Scale Visual Recognition Challenge by a wide margin. The classification task involved training on about 1.2 million labeled images and sorting images into 1,000 categories. AlexNet’s performance demonstrated the power of deep learning and sparked a surge of interest in the field.

Since then, deep learning has continued to flourish, driving breakthroughs in multiple domains. Deep neural networks have achieved state-of-the-art results in image classification, object detection, and image segmentation tasks. They have also revolutionized natural language processing, enabling machines to understand and generate human-like text.

One of the key advantages of deep learning is its ability to learn feature representations automatically from raw data. Traditional machine learning approaches often require manual feature engineering, where domain experts carefully design and extract relevant features from the data. In deep learning, the network learns these features from the data itself, greatly reducing the need for manual feature engineering.

Deep learning has also benefited from architectures designed for sequential data, such as recurrent neural networks (RNNs) and long short-term memory (LSTM) networks. By maintaining an internal state that carries information from one step of a sequence to the next, they have driven significant advances in tasks such as language modeling, machine translation, and speech recognition.
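A minimal NumPy sketch of a single LSTM time step, following the widely used formulation with input, forget and output gates. The stacking order of the gate weights inside `W`, `U` and `b` is an arbitrary assumption (libraries each choose their own layout), and `lstm_step` and `run_lstm` are hypothetical helper names. The cell state `c` carries information across time steps, while the gates decide what to write into it, what to keep, and what to expose as the hidden state `h`.

```python
import numpy as np


def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))


def lstm_step(x, h_prev, c_prev, W, U, b):
    """One LSTM time step (illustrative layout, not any library's).

    W: (4H, D) input weights, U: (4H, H) recurrent weights, b: (4H,) biases,
    stacked in the assumed order: input gate, forget gate, output gate, candidate.
    """
    H = h_prev.shape[0]
    z = W @ x + U @ h_prev + b
    i = sigmoid(z[0:H])          # input gate: how much new content to write
    f = sigmoid(z[H:2 * H])      # forget gate: how much old cell state to keep
    o = sigmoid(z[2 * H:3 * H])  # output gate: how much of the cell to expose
    g = np.tanh(z[3 * H:])       # candidate content for the cell state
    c = f * c_prev + i * g       # updated cell state (the long-term memory)
    h = o * np.tanh(c)           # new hidden state passed to the next step
    return h, c


def run_lstm(xs, H, W, U, b):
    """Feed a sequence through the cell, starting from a zero state."""
    h, c = np.zeros(H), np.zeros(H)
    for x in xs:
        h, c = lstm_step(x, h, c, W, U, b)
    return h, c


rng = np.random.default_rng(0)
D, H = 3, 4                        # input size and hidden size (arbitrary)
W = rng.normal(scale=0.5, size=(4 * H, D))
U = rng.normal(scale=0.5, size=(4 * H, H))
b = np.zeros(4 * H)
sequence = rng.normal(size=(5, D))  # five time steps of random input
h_final, c_final = run_lstm(sequence, H, W, U, b)
print(h_final)
```

Because the cell state is updated additively (`f * c_prev + i * g`) rather than being overwritten at every step, information and gradients can persist over longer sequences than in a plain RNN.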

The impact of deep learning has extended beyond academia and research labs into industries such as healthcare, finance, and transportation. Deep learning algorithms are being used to develop innovative medical diagnostic systems, analyze financial market data, and power autonomous vehicles.

As the field of deep learning continues to evolve, researchers are investigating techniques to make neural networks more interpretable, address the challenges of training deep models with limited data, and improve the robustness and safety of deep learning systems.

The revolution of deep learning represents a remarkable milestone in the history of machine learning. Its advancements have pushed the boundaries of what machines can accomplish, opening doors to new possibilities and reshaping entire industries.

Machine Learning Today: Applications and Impact

Machine learning has become deeply ingrained in our daily lives, transforming various industries and revolutionizing the way we work, communicate, and make decisions. From personalized recommendations to self-driving cars, machine learning is powering innovative solutions and impacting society in profound ways.

One of the most prominent applications of machine learning is in healthcare. Machine learning algorithms are being used to analyze medical data and detect patterns that can aid in early disease diagnosis. They are also being employed to assist in medical imaging interpretation, drug discovery, and personalized treatment plans, with the potential to improve patient outcomes and reduce healthcare costs.

In the realm of finance, machine learning algorithms are used to detect fraudulent transactions, assess creditworthiness, optimize trading strategies, and minimize risks. These algorithms analyze historical data, identify trends, and make predictions to inform decision-making in the financial industry. Machine learning has the potential to enhance the efficiency and accuracy of financial processes and improve risk management practices.

E-commerce and online platforms heavily rely on machine learning to provide personalized recommendations to users. By analyzing user behavior and preferences, machine learning algorithms can suggest products, articles, or movies tailored to individual tastes. This level of personalization enhances the user experience, increases customer satisfaction, and drives business growth.
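One simple way to turn user behavior into suggestions is item-to-item collaborative filtering. The sketch below is a toy, assuming a small user-by-item rating matrix where 0 means “not rated”, cosine similarity between item columns, and an unnormalized similarity-weighted score. The ratings are made up for illustration, `item_similarity` and `recommend` are hypothetical helper names, and production recommenders are far more elaborate.

```python
import numpy as np


def item_similarity(R):
    """Cosine similarity between the item columns of a user x item rating matrix."""
    norms = np.linalg.norm(R, axis=0)
    norms[norms == 0] = 1.0  # avoid dividing by zero for items nobody rated
    unit = R / norms
    return unit.T @ unit


def recommend(R, user, k=1):
    """Rank the user's unrated items by a similarity-weighted sum of their ratings."""
    sim = item_similarity(R.astype(float))
    ratings = R[user].astype(float)
    scores = sim @ ratings           # score[j] = sum over k of sim[j, k] * rating[k]
    scores[ratings > 0] = -np.inf    # never recommend an item already rated
    return [int(j) for j in np.argsort(scores)[::-1][:k]]


# Rows are users, columns are items; 0 means "not rated" (made-up data).
R = np.array([
    [5, 5, 5, 1],
    [4, 5, 4, 1],
    [1, 1, 1, 5],
    [5, 4, 0, 0],   # user 3 has rated only items 0 and 1
])
print(recommend(R, user=3))  # [2]
```

Item 2 wins because users who rated items 0 and 1 highly also rated item 2 highly, whereas item 3 was mostly liked by a user with the opposite tastes.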

Machine learning has also made significant advancements in the field of natural language processing (NLP). Language translation, sentiment analysis, chatbots, voice recognition, and virtual assistants are just a few examples of NLP-powered applications that have become integral parts of our digital interactions. These technologies enable us to communicate with machines more naturally and efficiently.

In the transportation sector, machine learning algorithms are at the core of self-driving cars and autonomous vehicles. These algorithms process real-time sensor data, analyze road conditions, and make informed decisions to navigate through traffic and ensure passenger safety. The development of self-driving technology has the potential to revolutionize transportation, improving traffic flow, reducing accidents, and increasing mobility.

Machine learning is also playing a critical role in addressing environmental issues. It is used to analyze weather data, predict climate patterns, and develop models for renewable energy production and optimization. Machine learning algorithms can help identify and mitigate risks associated with climate change, enabling more effective policymaking and sustainable resource management.

While the applications of machine learning are vast, there are still challenges to overcome. Ensuring the ethical use of machine learning, addressing bias in algorithms, and protecting individual privacy are ongoing concerns that require careful consideration and regulation.

The impact of machine learning on society cannot be overstated. It is transforming industries, empowering businesses, and shaping the way we live and interact with technology. As machine learning continues to advance, its potential for positive impact will only grow, ushering in a future where intelligent machines become even more integral to our daily lives.

Conclusion

The journey through the history of machine learning has revealed a remarkable evolution, from its early beginnings to the revolution of deep learning. Over the years, machine learning has grown from theoretical concepts to powerful algorithms that have reshaped industries and transformed our world.

The early pioneers of machine learning laid the foundation for the development of artificial neural networks, statistical learning theory, and support vector machines. These breakthroughs paved the way for the emergence of deep learning, which has revolutionized the field and unleashed unprecedented capabilities.

Today, machine learning is an integral part of our daily lives. Its applications span across various sectors, including healthcare, finance, e-commerce, transportation, and environmental management. Machine learning algorithms provide personalized recommendations, assist in medical diagnoses, power self-driving cars, and enable natural language processing, among many other remarkable achievements.

As machine learning continues to advance, we must address challenges such as ethical considerations, algorithmic bias, and privacy concerns. These issues require us to navigate carefully and ensure that machine learning technologies are developed and deployed responsibly.

The future of machine learning holds immense potential. Advancements in computing power, data availability, and algorithmic innovations will continue to push the boundaries of what machines can accomplish. We can expect further innovations in areas such as reinforcement learning, interpretability, and the intersection of machine learning with other disciplines such as robotics and quantum computing.

Machine learning has undoubtedly transformed our world, impacting industries, empowering businesses, and enhancing human lives. The journey of machine learning is far from over. As we embrace this technology and navigate its opportunities and challenges, we can look forward to a future where intelligent machines work in synergy with human intelligence, making our world more efficient, innovative, and inclusive.