Introduction

Welcome to the world of data security, where the protection of sensitive information is of paramount importance. As technology continues to advance, so too do the methods employed by cybercriminals to steal and exploit data. In this ever-evolving landscape, organizations need robust security measures to safeguard valuable information and maintain the trust of their customers.

One powerful technique that plays a pivotal role in data security is tokenization. This process, which acts as an effective alternative to traditional encryption methods, offers enhanced protection for sensitive data by replacing it with a unique identifier, known as a token. By doing so, the original data is concealed, reducing the risk of unauthorized access or misuse.

Tokenization has gained significant traction in recent years, as organizations recognize its ability to enhance data security while minimizing the impact on business operations. In this article, we will explore what tokenization is, how it works, its benefits, and its key components. We will also compare it to other data protection methods and discuss the challenges associated with its implementation.

So, whether you’re a business owner looking to safeguard customer data or an individual interested in learning about data security, let’s delve into the world of tokenization and explore how it can help protect sensitive information.

What is Tokenization?

Tokenization refers to the process of replacing sensitive data with a unique identifier, known as a token. This technique is used to enhance data security by concealing the original data, making it significantly more challenging for unauthorized individuals to access or exploit the information.

When sensitive data is tokenized, it is replaced with a surrogate value, typically a random sequence of characters. The tokenization system generates a unique token for each piece of data. The tokens themselves have no inherent meaning, and they cannot be converted back to the original data without access to the tokenization system’s mapping mechanism.

The primary objective of tokenization is to ensure that sensitive data remains secure, even if it is intercepted or accessed without authorization. By replacing the original data with a token, organizations can minimize the risk of exposure and mitigate the potential impact in the event of a data breach.

One key aspect to note is that the tokens themselves hold no value or sensitive information. Instead, they act as references that allow authorized users to retrieve or process the original data as needed. This decoupling of sensitive information improves data security since the tokens are not valuable or exploitable even if they are intercepted by adversaries.

It is important to differentiate between tokenization and encryption, as they are distinct techniques. While encryption converts data into a ciphertext using complex algorithms and encryption keys, tokenization replaces the data with a non-sensitive random string. Ciphertext can be decrypted by anyone who holds the right key, whereas a randomly generated token has no mathematical relationship to the original data and can only be resolved by looking it up in the tokenization system.

In summary, tokenization is the process of replacing sensitive data with unique, non-sensitive tokens to enhance data security. By doing so, organizations can reduce the risk of unauthorized access and minimize the potential impact of a data breach.
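To make the idea concrete, here is a minimal sketch of a token vault in Python. It is an illustration only: the `TokenVault` class and its method names are hypothetical, the vault lives in memory, and a production system would add persistence, encryption, and access controls.

```python
import secrets


class TokenVault:
    """Toy in-memory vault (hypothetical): maps random tokens to original values."""

    def __init__(self):
        self._token_to_value = {}
        self._value_to_token = {}

    def tokenize(self, value: str) -> str:
        # Reuse the existing token so that one value always maps to one token.
        if value in self._value_to_token:
            return self._value_to_token[value]
        token = secrets.token_urlsafe(16)  # random, not derived from the value
        while token in self._token_to_value:  # regenerate on an unlikely collision
            token = secrets.token_urlsafe(16)
        self._token_to_value[token] = value
        self._value_to_token[value] = token
        return token

    def detokenize(self, token: str) -> str:
        # Recovery is a lookup in the vault; an unknown token raises KeyError.
        return self._token_to_value[token]


vault = TokenVault()
token = vault.tokenize("4111 1111 1111 1111")
print(token)                    # random string, different on every run
print(vault.detokenize(token))  # the original value, recovered via lookup
```

Because the token comes from a random number generator rather than from the data, nothing about the original value can be learned from the token alone.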

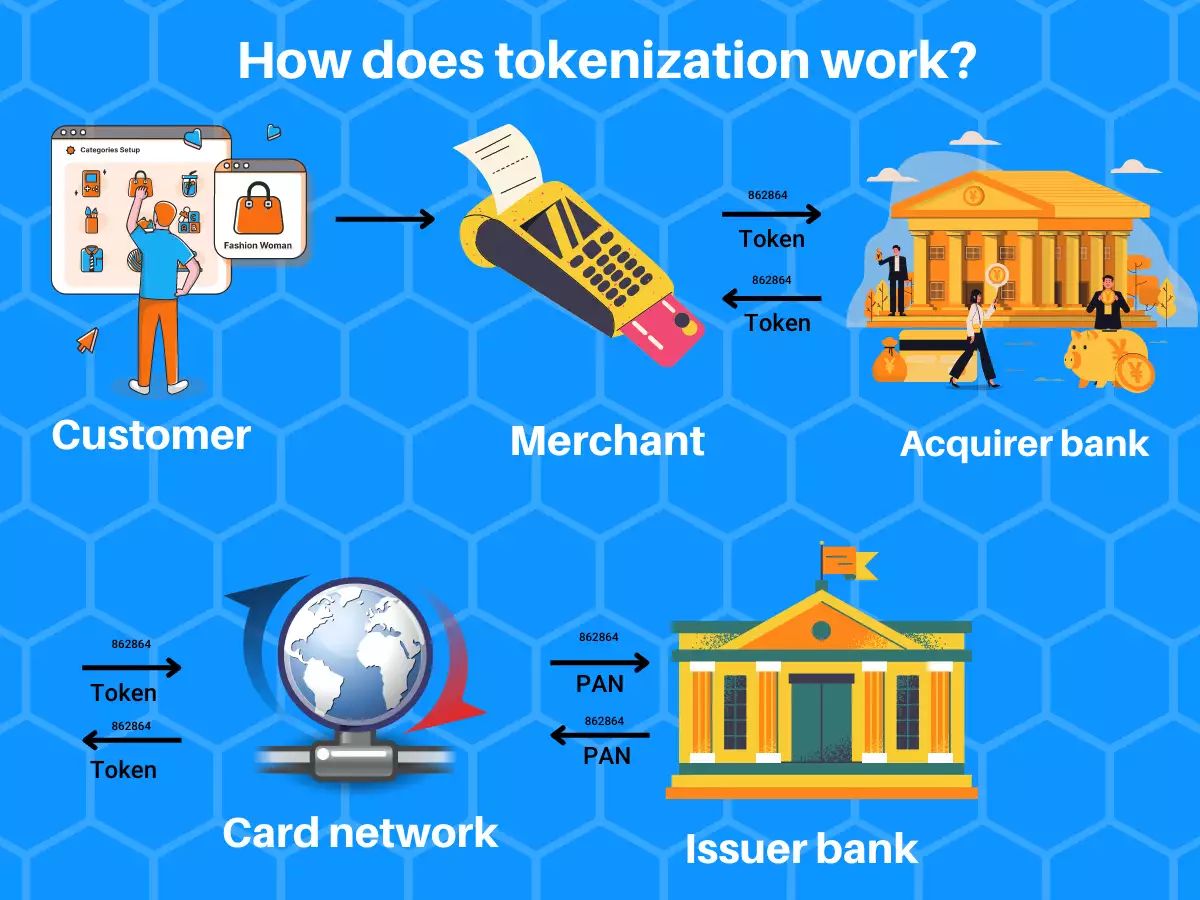

How Does Tokenization Work?

Tokenization involves several key steps to ensure the secure transformation of sensitive data into tokens. Let’s explore the process in more detail:

- Data Discovery: The first step in tokenization is identifying and classifying the sensitive data that needs to be protected. This could include credit card numbers, social security numbers, or any other personally identifiable information.

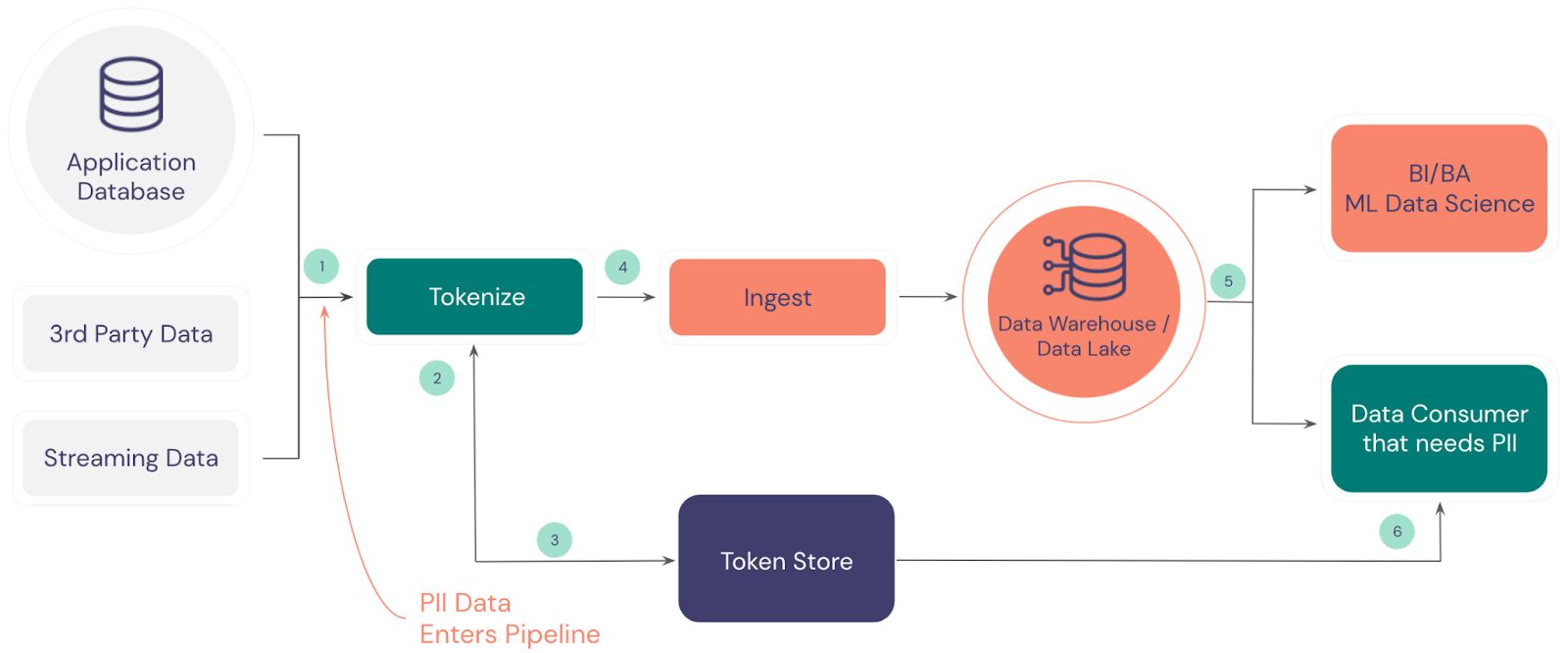

- Tokenization System Setup: Once the sensitive data is identified, organizations implement a tokenization system. This system consists of a secure database or token vault that stores the mapping between the original data and its corresponding token.

- Data Tokenization: The next step involves the actual tokenization process. The sensitive data is sent to the tokenization system, which generates a unique token as a replacement. The mapping between the token and the original data is then recorded in the token vault.

- Data Storage: The original sensitive data is securely stored or archived, typically using strong encryption methods. This ensures that even if the storage itself is somehow compromised, the sensitive information remains protected.

- Data Retrieval: When authorized users need to access or process the original data, they submit the corresponding token to the tokenization system. The system looks up the mapping in the token vault, retrieves the original data, and provides it to the authorized user.

It is important to note that a token cannot be reversed computationally. Unlike ciphertext, which can be decrypted with the appropriate keys, a token can only be resolved to the original data by the tokenization system that holds the mapping.

Furthermore, tokenization offers an added layer of security through the use of tokens that have no intrinsic meaning or value. Even if an unauthorized individual gains access to the tokens, they are unable to extract any sensitive information from them.

Tokenization systems also employ robust security measures to protect the token vault and ensure the integrity of the data. This includes stringent access controls, encryption of the stored data, and monitoring for any suspicious activity.

In summary, tokenization works by replacing sensitive data with unique tokens through a secure process. The tokens act as references to retrieve the original data when needed, offering increased security and protection against unauthorized access.
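The steps above can be sketched end to end in a few lines of Python. This is a simplified illustration under stated assumptions: card numbers are assumed to appear as unbroken runs of 13 to 19 digits, the Luhn checksum is used only to filter out unrelated digit runs, the `vault` dictionary stands in for a secured token vault, and the `tok_` prefix and the `authorized` flag are hypothetical placeholders for a real token format and a real access-control check.

```python
import re
import secrets

# Assumption: card numbers appear as unbroken runs of 13 to 19 digits.
CARD_PATTERN = re.compile(r"\b\d{13,19}\b")


def luhn_valid(number: str) -> bool:
    """Luhn checksum, used here to skip digit runs that are not card numbers."""
    total = 0
    for i, ch in enumerate(reversed(number)):
        digit = int(ch)
        if i % 2 == 1:  # double every second digit, counting from the right
            digit *= 2
            if digit > 9:
                digit -= 9
        total += digit
    return total % 10 == 0


vault = {}  # token -> original value; stands in for the secured token vault


def tokenize_text(text: str) -> str:
    """Discover card numbers in free text and replace each with a random token."""
    def replace(match):
        number = match.group(0)
        if not luhn_valid(number):
            return number  # leave unrelated digit runs untouched
        token = "tok_" + secrets.token_hex(8)
        vault[token] = number
        return token
    return CARD_PATTERN.sub(replace, text)


def detokenize(token: str, authorized: bool) -> str:
    """Return the original value, but only for authorized callers."""
    if not authorized:
        raise PermissionError("caller may not detokenize")
    return vault[token]


record = "Order 1001 paid with card 4111111111111111"
safe_record = tokenize_text(record)
print(safe_record)  # the card number is replaced by a tok_... value
```

The tokenized record can be stored and passed around freely; only a caller that passes the authorization check can turn the token back into the card number.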

Benefits of Tokenization in Data Security

Tokenization offers numerous benefits when it comes to enhancing data security. Let’s take a closer look at some of the key advantages:

- Improved Data Protection: Tokenization provides an additional layer of security by replacing sensitive data with tokens. This means that even if the tokens are compromised, they are meaningless on their own and cannot be used to derive the original data without access to the tokenization system. It significantly reduces the risk of unauthorized access and minimizes the potential impact of a data breach.

- Reduced Scope of Compliance: By tokenizing sensitive data, organizations can reduce the scope of their compliance obligations under regulatory requirements such as the Payment Card Industry Data Security Standard (PCI DSS). Systems that store and process only tokens are generally subject to fewer requirements than systems that handle the original data, although the tokenization system itself remains fully in scope.

- Streamlined Business Operations: Tokenization allows organizations to store and process tokens instead of sensitive data, reducing the complexity and cost associated with securing and managing sensitive information. It simplifies data storage, retrieval, and processing, leading to improved efficiency and productivity in day-to-day business operations.

- Faster Transactions: In payment processing, tokenization can make transactions more seamless. By storing and using tokens instead of actual credit card numbers, organizations avoid transmitting and handling sensitive cardholder data in their own systems during each transaction. This can streamline the process and reduces the risk of data exposure during payment transactions.

- Enhanced Customer Trust: Implementing tokenization demonstrates a commitment to data security, which can enhance customer trust and confidence. Customers feel more comfortable knowing that their sensitive information is being protected using advanced security measures. This can lead to increased customer loyalty and satisfaction.

- Scalability and Flexibility: Tokenization can easily scale with growing data volumes and business needs. As organizations generate and process more data, the tokenization system can handle the increased workload without compromising security or performance. Additionally, the flexibility of tokenization allows organizations to tokenize different types of sensitive data, catering to a wide range of business requirements.

Overall, tokenization provides significant benefits in the realm of data security. It enhances protection, streamlines operations, reduces compliance scope, speeds up transactions, builds customer trust, and offers scalability and flexibility to meet evolving business needs.

Use Cases of Tokenization

Tokenization is a versatile data security technique that can be applied across various industries and use cases. Let’s explore some common scenarios where tokenization proves to be valuable:

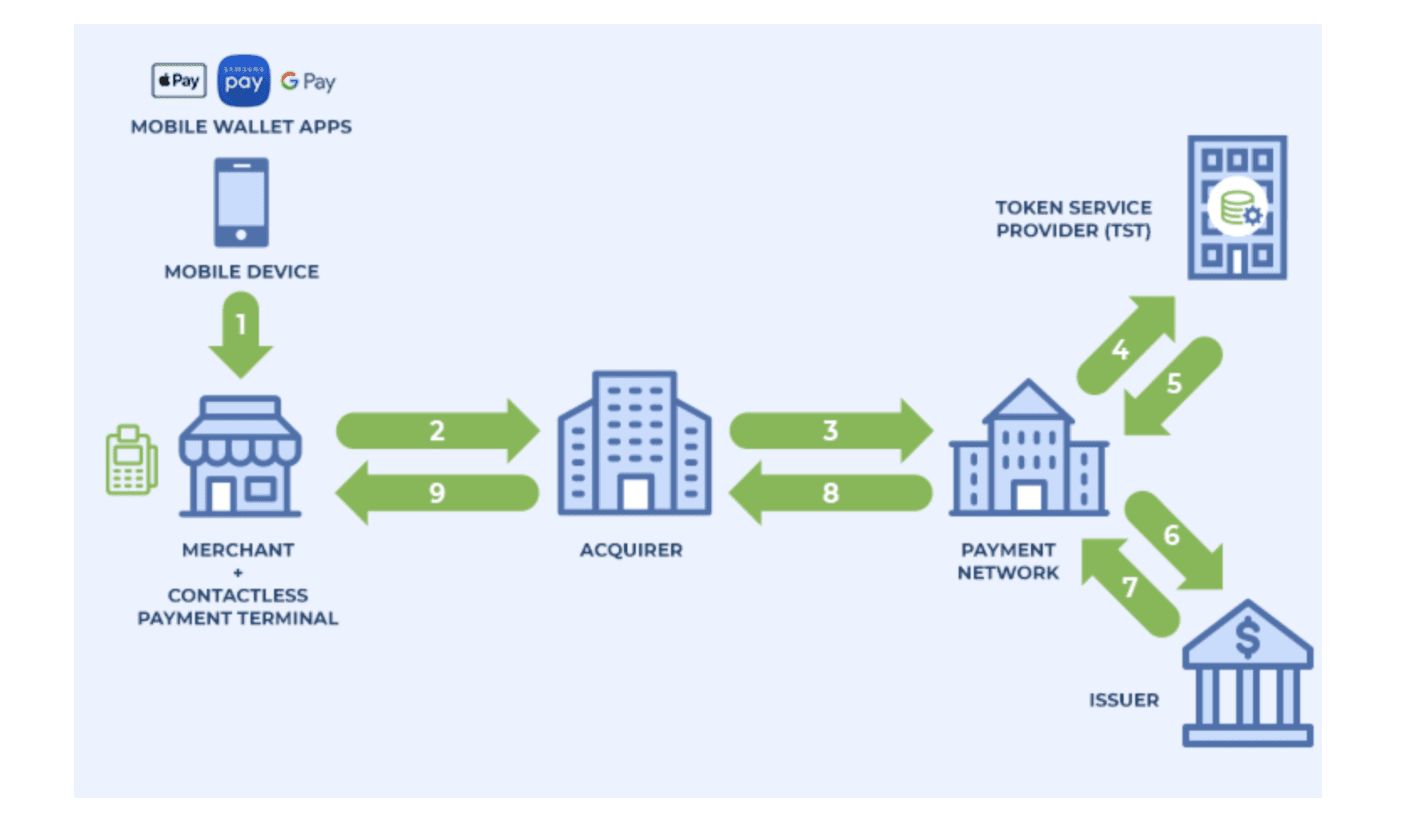

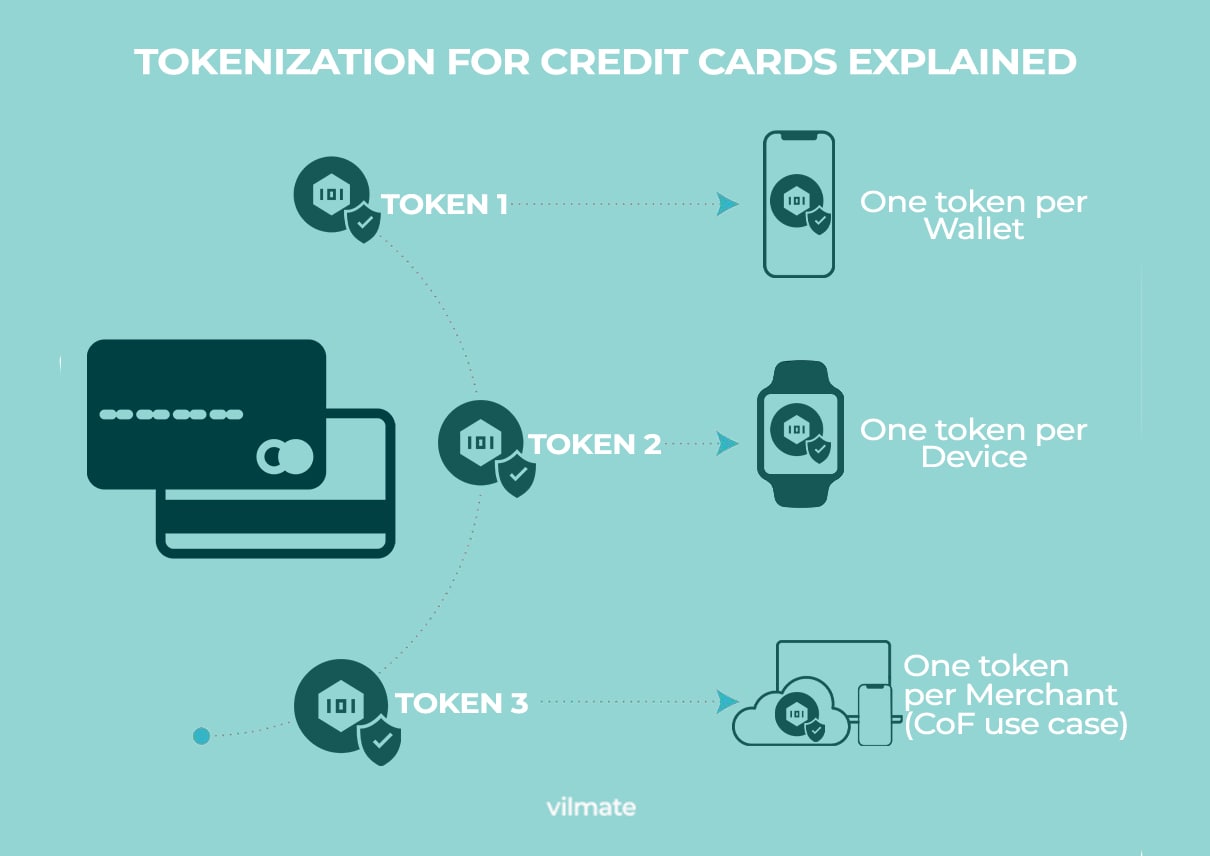

- Payment Card Industry (PCI) Compliance: Tokenization is widely used in the payment card industry to secure sensitive cardholder data. By tokenizing credit card numbers, organizations can minimize their scope of compliance with PCI regulations and reduce the risk of data breaches during payment transactions.

- Healthcare: In the healthcare industry, tokenization can help protect sensitive patient data, such as social security numbers and medical records. By replacing this information with tokens, healthcare providers can ensure the privacy and security of patient records while still being able to securely access and process the necessary information.

- E-commerce: Tokenization plays a crucial role in securing online transactions for e-commerce websites. By tokenizing credit card information at the point of sale, retailers can safeguard sensitive customer data and streamline the checkout process, leading to a more secure and seamless online shopping experience.

- Mobile Payments: Tokenization is a key security measure in the realm of mobile payments. By tokenizing the payment credentials stored on mobile devices, such as smartphones or wearables, organizations can ensure the security of the payment process while enabling customers to make quick and convenient transactions.

- Loyalty Programs: Tokenization can be utilized to secure customer loyalty program data, such as membership numbers and reward points. By tokenizing these details, organizations can protect customer information, improve the efficiency of loyalty programs, and prevent fraud or abuse of the rewards system.

- Internet of Things (IoT): With the growing prevalence of IoT devices, tokenization offers a valuable security measure for ensuring the privacy and protection of sensitive data transmitted by these devices. By tokenizing device identifiers and other sensitive information, organizations can minimize the risk of unauthorized access or manipulation of IoT data streams.

These are just a few examples of the diverse use cases where tokenization can enhance data security and protect sensitive information. Tokenization’s versatility makes it a valuable technique in various industries, where the protection of sensitive data is of utmost importance.

Tokenization vs Encryption

Tokenization and encryption are both widely used techniques to protect sensitive data, but their approaches and characteristics differ significantly. Let’s compare tokenization and encryption to understand their key differences:

1. Reversibility: Encryption is a mathematically reversible process, meaning that the ciphertext can be decrypted back to its original form using the appropriate decryption key. A token, on the other hand, cannot be reversed by computation. The original data can only be recovered through the tokenization system’s mapping mechanism.

2. Data Mapping: Encryption does not include a separate data mapping mechanism. Once the data is encrypted, the encrypted form is stored and used as needed. Tokenization, however, involves the creation of a token and the mapping of this token to the original data. The token serves as a reference to retrieve the original data when necessary.

3. Performance: Encryption can add computational overhead, as it involves complex algorithms and cryptographic operations to transform the data into ciphertext and decrypt it back to plaintext. Tokenization, on the other hand, generally offers better performance since the tokenization process is typically simpler and faster.

4. Scope of Compliance: Encrypted data generally remains subject to compliance regulations such as PCI DSS, which requires secure key management and adherence to encryption standards. Tokenization, on the other hand, may reduce the scope of compliance. Tokens are often considered non-sensitive and may not be subject to the same regulations as the original data.

5. Token Value: Tokens generated through tokenization have no intrinsic meaning or value. Even if an unauthorized individual gains access to the tokens, they cannot extract any sensitive information from them. Encryption, however, retains the value of the original data in the encrypted form, requiring additional security measures to protect the encryption keys.

6. Key Management: Encryption relies on the secure management of encryption keys to ensure data security. Losing or compromising encryption keys can result in data loss or unauthorized access. Tokenization generally requires secure token vault management, ensuring the integrity and security of the mapping between tokens and the original data.

In summary, while both tokenization and encryption are data protection techniques, they employ different strategies to achieve data security. Encryption is mathematically reversible, relies on encryption keys, and retains the value of the original data in the ciphertext. Tokenization uses a data mapping mechanism, generates tokens with no intrinsic value that can only be resolved through that mapping, and often offers compliance advantages. The choice between tokenization and encryption depends on the specific security requirements and business needs of an organization.
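The difference in how the original data is recovered can be shown with a short Python sketch. To stay within the standard library, a one-time pad (XOR with a random key of the same length) stands in for a real cipher such as AES; that substitution, like the variable names, is an assumption made purely for illustration.

```python
import secrets

secret = b"4111111111111111"

# Encryption (one-time pad as a stand-in for a real cipher):
# the ciphertext is computed from the data and the key.
key = secrets.token_bytes(len(secret))
ciphertext = bytes(a ^ b for a, b in zip(secret, key))
# Anyone who holds the key can compute the plaintext again.
decrypted = bytes(a ^ b for a, b in zip(ciphertext, key))

# Tokenization: the token is random and unrelated to the data;
# recovery is a lookup in the vault, not a computation.
vault = {}
token = secrets.token_hex(16)
vault[token] = secret
recovered = vault[token]

print(decrypted == secret, recovered == secret)  # True True
```

With encryption, the thing to protect is the key. With tokenization, the thing to protect is the vault that holds the mapping.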

Tokenization vs Masking

When it comes to data security, tokenization and masking are two techniques that organizations can utilize to protect sensitive information. Let’s compare tokenization and masking to understand their key differences:

1. Data Replacement: Tokenization involves replacing sensitive data with a unique identifier or token, while masking involves partially or fully obfuscating the original data without altering its format. Tokenization replaces the data with a random sequence of characters that hold no meaning, whereas masking retains the data format but obscures certain characters or digits.

2. Reversibility: A token can be resolved to the original data only through the tokenization system’s mapping mechanism. Masked output, on the other hand, generally cannot be reversed at all, and whether the original data survives depends on the approach. Static masking permanently replaces the stored values, while dynamic masking leaves the original data intact in the source system and only obscures it when it is displayed.

3. Data Usage: Tokenization decouples the sensitive data from the token, allowing organizations to store and process tokens without exposing the original data. Masking, however, maintains the original data in a modified form, making it necessary to implement additional security measures to ensure the protection of the data throughout its lifecycle.

4. Usability: Tokenization lets authorized users retrieve and process the original data through the tokenization system’s mapping mechanism. Masked data, in contrast, is meant to be used as it is. If the full value is needed, it must come from the original source rather than from the masked copy, which can limit where masking is practical.

5. Compliance Considerations: Tokenization and masking have different implications for compliance requirements. Tokenization can reduce the scope of compliance, as tokens are often considered non-sensitive, reducing the need for stringent security measures around their storage. Masking, however, may still be subject to compliance regulations since the masked data retains the format and partial information of the original data.

6. Data Access: Tokenization provides secure access to the original data by retrieving it through the token. Only authorized users with access to the tokenization system can retrieve and use the original data. With masking, users work with the obscured value directly. Under dynamic masking, authorized users may be shown the unmasked data from the source system, with no token lookup involved.

Both tokenization and masking have their unique advantages and use cases in data security. Tokenization suits scenarios where the original value must be replaced entirely yet remain retrievable by authorized systems. Masking suits scenarios where a partially obscured value in the original format is sufficient, while still providing some level of data protection.
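A small Python sketch can make the contrast visible. The `mask_card` helper and the `tok_` prefix are hypothetical names chosen for this example, and the dictionary again stands in for a real token vault.

```python
import secrets


def mask_card(number: str, visible: int = 4) -> str:
    """Obscure all but the last `visible` characters, keeping the length."""
    return "*" * (len(number) - visible) + number[-visible:]


card = "4111111111111111"

# Masking: the output keeps the length and the last four digits,
# but the hidden digits cannot be recovered from the masked value.
masked = mask_card(card)
print(masked)  # ************1111

# Tokenization: the output reveals nothing about the card,
# but the vault can resolve it back to the full number.
vault = {}
token = "tok_" + secrets.token_hex(8)
vault[token] = card
print(vault[token] == card)  # True
```

The masked value is useful for display, for instance on a receipt, while the token is useful as a stand-in that an authorized system can later exchange for the real number.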

Key Components of Tokenization

Tokenization involves several key components that work together to ensure the effective implementation and operation of the tokenization system. Let’s explore the essential elements of tokenization:

1. Tokenization Algorithm: The tokenization algorithm is the core component responsible for the transformation of sensitive data into tokens. It typically relies on a cryptographically secure random number generator or another cryptographic technique to generate unique tokens that cannot be reversed computationally. The algorithm ensures the randomness and uniqueness of the tokens, making them extremely difficult to guess or reverse-engineer.

2. Token Vault: The token vault is a secure repository where the mapping between the original data and its corresponding token is stored. This component is pivotal in the tokenization process, as it enables the retrieval of the original data when needed. The token vault should have robust access controls, encryption, and monitoring mechanisms in place to ensure the security and integrity of the token and mapping data.

3. Tokenization System: The tokenization system encompasses the overall infrastructure and software that facilitates the tokenization process. This includes the tokenization algorithm, token vault, and other necessary components. The system should have robust security measures in place to protect against unauthorized access, monitor for any suspicious activity, and ensure the smooth generation, usage, and management of tokens.

4. Data Discovery and Classification: Data discovery and classification involve identifying and categorizing sensitive data within an organization. This step is critical in determining which data needs to undergo tokenization for enhanced protection. Organizations need to have a robust process in place to identify and classify the sensitive data that falls within the scope of tokenization.

5. Encryption and Key Management: Encryption and key management play a crucial role in securing the tokenization system and the sensitive data it holds. Encryption should be used to protect the data at rest, whether it’s the original data stored separately or the information within the token vault. Additionally, secure key management practices should be implemented to protect encryption keys and ensure they are accessible only to authorized personnel.

6. Access Controls and Audit Trail: Access controls are essential in restricting access to the tokenization system and token vault. Only authorized individuals should have access to generate tokens, retrieve the original data, or modify the tokenization system’s configurations. An audit trail should also be implemented to monitor and log activity within the tokenization system, ensuring accountability and facilitating forensic analysis in case of any security incidents.

In summary, the key components of tokenization include the tokenization algorithm, token vault, tokenization system, data discovery and classification process, encryption and key management practices, as well as access controls and audit trail. By ensuring the proper implementation and integration of these components, organizations can enhance data security and protect sensitive information effectively.
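As a rough illustration of how access controls and an audit trail might wrap a token vault, consider the following Python sketch. The `AuditedVault` class, the role names, and the log fields are all hypothetical; a real deployment would rely on its own identity system and tamper-resistant log storage.

```python
import secrets
from datetime import datetime, timezone


class AuditedVault:
    """Toy vault (hypothetical) with role-based access and an audit trail."""

    def __init__(self, allowed_roles):
        self._store = {}  # token -> original value
        self._allowed_roles = set(allowed_roles)
        self.audit_log = []

    def _log(self, user, action, allowed):
        # Record who did what and whether it was permitted.
        self.audit_log.append({
            "time": datetime.now(timezone.utc).isoformat(),
            "user": user,
            "action": action,
            "allowed": allowed,
        })

    def tokenize(self, user, value):
        token = secrets.token_urlsafe(16)
        self._store[token] = value
        self._log(user, "tokenize", True)
        return token

    def detokenize(self, user, role, token):
        allowed = role in self._allowed_roles
        self._log(user, "detokenize", allowed)  # log denied attempts too
        if not allowed:
            raise PermissionError(f"role {role!r} may not detokenize")
        return self._store[token]


vault = AuditedVault(allowed_roles={"payments-service"})
token = vault.tokenize("alice", "123-45-6789")
value = vault.detokenize("billing-job", "payments-service", token)
print(len(vault.audit_log))  # 2
```

Logging denied attempts as well as successful ones is a deliberate choice here, since failed detokenization requests are often the more interesting signal during an investigation.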

Challenges of Tokenization

While tokenization is a powerful data security technique, its implementation is not without challenges. Here are some of the key challenges organizations may face when implementing tokenization:

1. Data Integrity: Maintaining data integrity is crucial when using tokenization. Organizations need to ensure that the mapping between tokens and the original data remains accurate and consistent. Any errors or inconsistencies in the mapping can lead to data loss or incorrect retrieval of information, affecting the overall integrity of the system.

2. Key Management: The secure management of the encryption keys that protect the token vault and the stored original data is a critical challenge. Strong key management practices are essential to prevent unauthorized access, loss, or theft of the keys. Without proper key management, the security of the tokenization system and the protected data can be compromised.

3. Token Vault Security: The security of the token vault, where the mapping between tokens and original data is stored, is of utmost importance. It needs to be protected from unauthorized access, hacking attempts, and internal threats. Robust access controls, encryption, monitoring, and auditing mechanisms should be implemented to mitigate the risk of data breaches or tampering.

4. Scalability: As the volume of data grows, scalability becomes a challenge. Organizations need to ensure that the tokenization system can handle the increasing workload without compromising performance. Scaling up the system’s infrastructure and considering factors like database capacity, processing power, and network bandwidth are crucial to maintain efficiency as the amount of data being tokenized increases.

5. Legacy Systems Compatibility: Integrating tokenization with existing legacy systems can be challenging. Legacy systems may not be designed to work seamlessly with tokenization solutions, requiring additional development and customization to ensure the compatibility and smooth operation of the tokenization process. This may require significant time, effort, and resources to overcome.

6. Token Format Consistency: Ensuring consistent token formats across different systems within an organization or in integration with external systems can be a challenge. Inconsistent token formats can lead to compatibility issues, data retrieval errors, and difficulties in maintaining data consistency across different systems or platforms.

While these challenges exist, they can be effectively addressed with careful planning, implementation, and ongoing maintenance of the tokenization system. Organizations must prioritize robust security measures, proper key management, and regular monitoring to overcome these challenges and ensure the successful implementation of tokenization for enhanced data security.
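One way to ease the legacy-compatibility and format-consistency challenges is to issue tokens that keep the shape of the original data. The Python sketch below generates a token with the same length as a card number and the same last four digits. The function name and the choice to preserve the last four digits are assumptions for illustration; a real system would also check each new token against the vault for uniqueness and may need to ensure that tokens cannot be mistaken for valid card numbers.

```python
import secrets


def format_preserving_token(card: str, keep_last: int = 4) -> str:
    """Random digits of the same length as `card`, keeping the last `keep_last` digits."""
    head_len = len(card) - keep_last
    if keep_last < 0 or head_len <= 0:
        raise ValueError("keep_last must be between 0 and len(card) - 1")
    tail = card[head_len:]
    while True:
        head = "".join(secrets.choice("0123456789") for _ in range(head_len))
        token = head + tail
        if token != card:  # never hand back the real number as its own token
            return token


card = "4111111111111111"
token = format_preserving_token(card)
print(token)  # 16 digits ending in 1111; the leading digits are random
```

Because the token has the same length and character set as the original, database columns and validation rules written for card numbers can often accept it without changes.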

Best Practices for Implementing Tokenization

Implementing tokenization requires careful planning and consideration to ensure its effectiveness and security. Here are some best practices to follow when implementing tokenization:

1. Assess Data Sensitivity: Conduct a thorough assessment of your organization’s data to identify and classify sensitive information that needs to be tokenized. This will help determine the scope and requirements for implementing tokenization effectively.

2. Evaluate Tokenization Solutions: Research and evaluate different tokenization solutions available in the market. Consider factors such as security features, scalability, ease of integration, vendor reputation, and compliance certifications to select the most suitable solution for your organization’s specific needs.

3. Implement Strong Key Management: Develop and implement a robust key management strategy for securing encryption keys used in the tokenization process. This includes proper key generation, storage, rotation, and access controls to ensure the confidentiality and integrity of the keys.

4. Secure Token Vault: Apply rigorous security measures to protect the token vault, where the mapping between tokens and original data is stored. Utilize strong access controls, encryption, and monitoring mechanisms to safeguard the vault from unauthorized access or tampering.

5. Encrypt Data at Rest: Encrypt the original data stored separately from the token vault to provide an additional layer of security. This helps protect the data in case of unauthorized access to the encrypted storage or backup systems.

6. Regularly Monitor and Audit: Implement monitoring and auditing mechanisms to track access to the tokenization system, detect any unusual activities, and ensure compliance with security policies. Regularly review logs and conduct audits to identify potential vulnerabilities or security breaches.

7. Test and Validate: Conduct thorough testing, including penetration testing and vulnerability assessments, to identify any potential weaknesses or vulnerabilities in the tokenization implementation. Regularly validate the effectiveness of the tokenization system, including data retrieval accuracy and performance.

8. Maintain Compliance: Ensure that your tokenization implementation aligns with relevant industry regulations and compliance standards, such as PCI DSS. Stay informed about updates or changes to compliance requirements and adjust your tokenization practices accordingly.

9. Provide Employee Training: Educate employees on the purpose and benefits of tokenization, as well as proper data handling practices. Train them on how to securely access and utilize tokenized data, and raise awareness about the importance of following security protocols and best practices.

10. Stay Updated: Stay informed about the latest advancements and security recommendations related to tokenization. Participate in industry forums and engage with security experts to stay updated on evolving threats and best practices.

By following these best practices, organizations can implement tokenization effectively, enhance data security, and reduce the risk of unauthorized access or data breaches.

Conclusion

Tokenization is a powerful technique that offers enhanced data security by replacing sensitive information with unique tokens. Through this process, organizations can significantly reduce the risk of unauthorized access or data breaches, while still maintaining the usability and integrity of their data.

Throughout this article, we have explored the concept of tokenization, its key components, and its benefits in data security. We discussed how tokenization works and compared it to other data protection methods such as encryption and masking. We also delved into the challenges associated with tokenization and provided best practices for its effective implementation.

By leveraging tokenization, organizations can protect sensitive data in various use cases, ranging from payment card industry compliance to healthcare, e-commerce, and mobile payments. Tokenization not only enhances security but can also streamline business operations and build customer trust.

However, it is essential to recognize that tokenization alone is not a complete data security solution. It should be considered as part of a comprehensive security strategy, accompanied by robust key management practices, encryption of stored data, and other security measures to ensure the utmost protection.

As technology continues to evolve, so do the techniques used by cybercriminals to exploit data. It is crucial for organizations to stay informed about the latest advancements in tokenization and continuously evaluate and improve their security practices.

In conclusion, tokenization is a valuable tool in the realm of data security, empowering organizations to protect sensitive information effectively. By implementing tokenization and following best practices, organizations can safeguard their data, enhance customer trust, and mitigate data breach risks in today’s ever-evolving digital landscape.