Google released a data set of deepfake videos on September 24, 2019, to support the study of deepfake AI technology. The company worked with paid actors who consented to have their faces manipulated on video. The release is part of Google’s effort to advance deepfake detection research.

What Are Deepfakes?

Deepfakes are a product of deep learning, a technology that underpins many modern robots and artificial intelligence systems. A deepfake can change a person’s appearance and voice through video manipulation.

The current wave of deepfakes began in 2017, when Reddit users started sharing deepfakes they had made. Many of them depict celebrities’ faces swapped into pornographic content. Because of this, the social media platforms Reddit and Twitter banned pornographic deepfake content on their websites. Pornhub also banned deepfakes from its site as a form of involuntary pornography.

Non-pornographic deepfakes on the internet often depict celebrity faces in comedic scenarios. One popular example is Nicolas Cage’s face inserted into different movies. Deepfakes also swap celebrity faces in celebrity interviews for entertainment, and the AI can produce unsettling videos too. As a result, deepfakes have gained popularity and the technology has advanced quickly.

Realistic Deepfakes

Deepfakes have existed for less than three years, but the technology is developing quickly. In 2017, an ordinary viewer could tell a deepfake video from the original. In 2019, doing so is much harder.

Deepfake technology is rapidly improving its ability to copy facial features and mannerisms. Add a good actor or impressionist like Jim Meskimen, and a deepfake could fool almost anyone. This is why companies like Google and Facebook support research on deepfake detection.

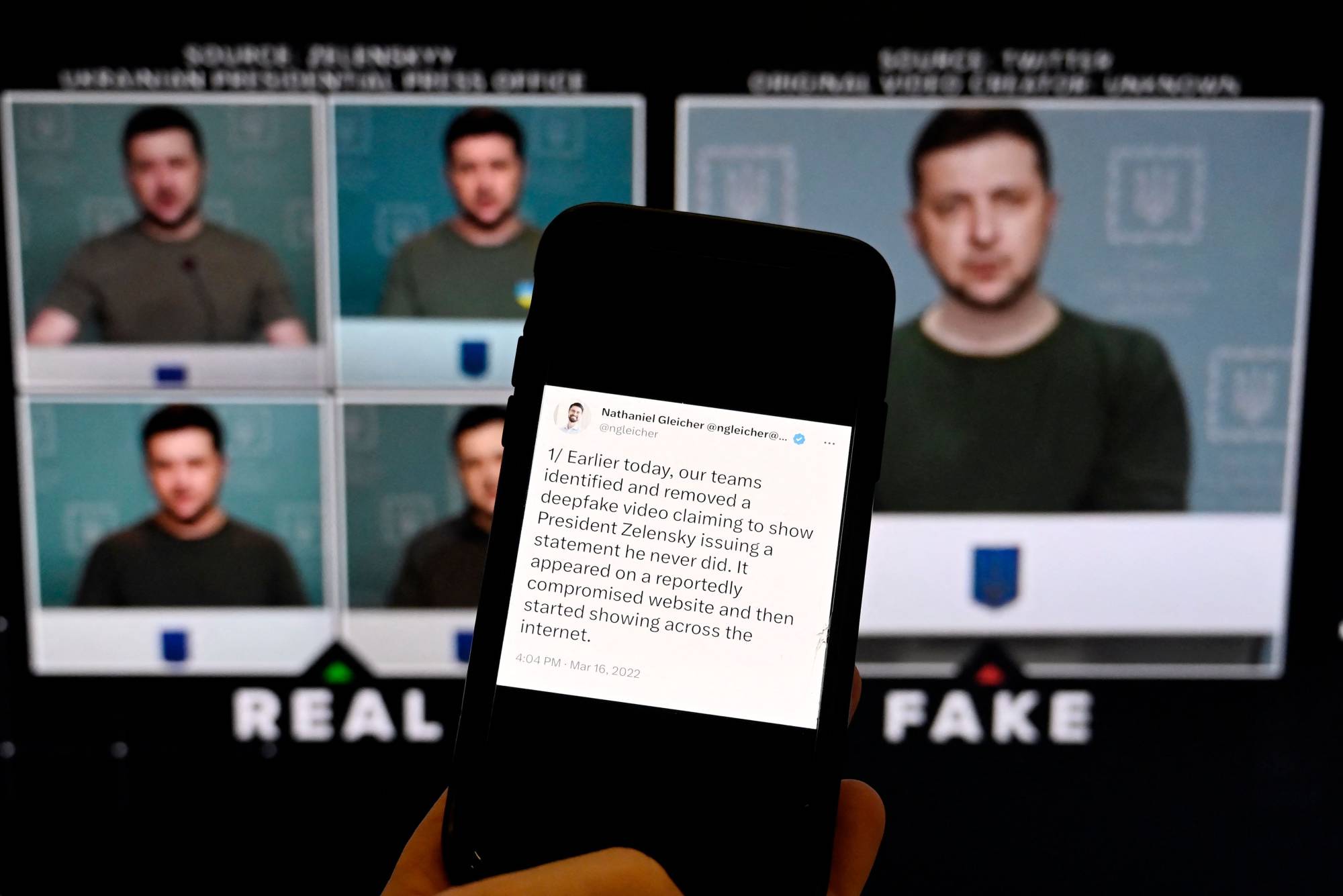

There is serious concern that people will use deepfakes to push political agendas. The risks include interference in the 2020 election, fake news on Facebook and other social media sites, cyberbullying, and hate speech. All of these, on top of the original pornographic and entertainment uses of deepfakes, have pushed the technology world to invest further in detection research.

The plan is to create another AI that can detect deepfakes. To that end, Google released its deepfake videos together with their corresponding original videos as data for the research. However, deepfake technology is advancing rapidly, so human judgment will still be needed to tell fake videos from the originals.

Deepfake Detection Research

Like all other technologies, deep learning has its pros and cons. Facebook, for example, uses deep learning, the same kind of technology behind deepfakes, to spot fake news. The technology assesses the originality and authenticity of news articles by scanning their words and photos.

In response to highly realistic deepfake videos, tech companies launched the Deepfake Detection Challenge in late 2019. The challenge invites people around the world to help create new tools that can spot deepfake media.

Google is showing its support by releasing its deepfake video data set.

“We firmly believe in supporting a thriving research community around mitigating potential harms from misuses of synthetic media,” Nicholas Dufour, a software engineer at Google Research, said in a blog post.