Introduction

GPU programming has revolutionized the world of computing by unleashing the immense power and parallel processing capabilities of graphics processing units. While GPUs were originally designed for rendering stunning visuals in video games, their potential for general-purpose computing has been unlocked through the use of specialized programming techniques.

GPU programming refers to the process of utilizing GPUs to perform complex calculations and computations, extending beyond their conventional graphics-rendering capabilities. By leveraging the parallel processing architecture of GPUs, developers can significantly accelerate the execution of computationally intensive tasks, making it a valuable tool for a wide range of applications.

This article explores the importance of GPU programming and its benefits, as well as its applications in various fields. Additionally, we will discuss the challenges and limitations associated with GPU programming.

So, if you’re curious about the world of GPU programming and want to understand why it has become a game-changer in the computing industry, read on to discover how this technology has transformed the way we approach complex calculations.

What is GPU Programming?

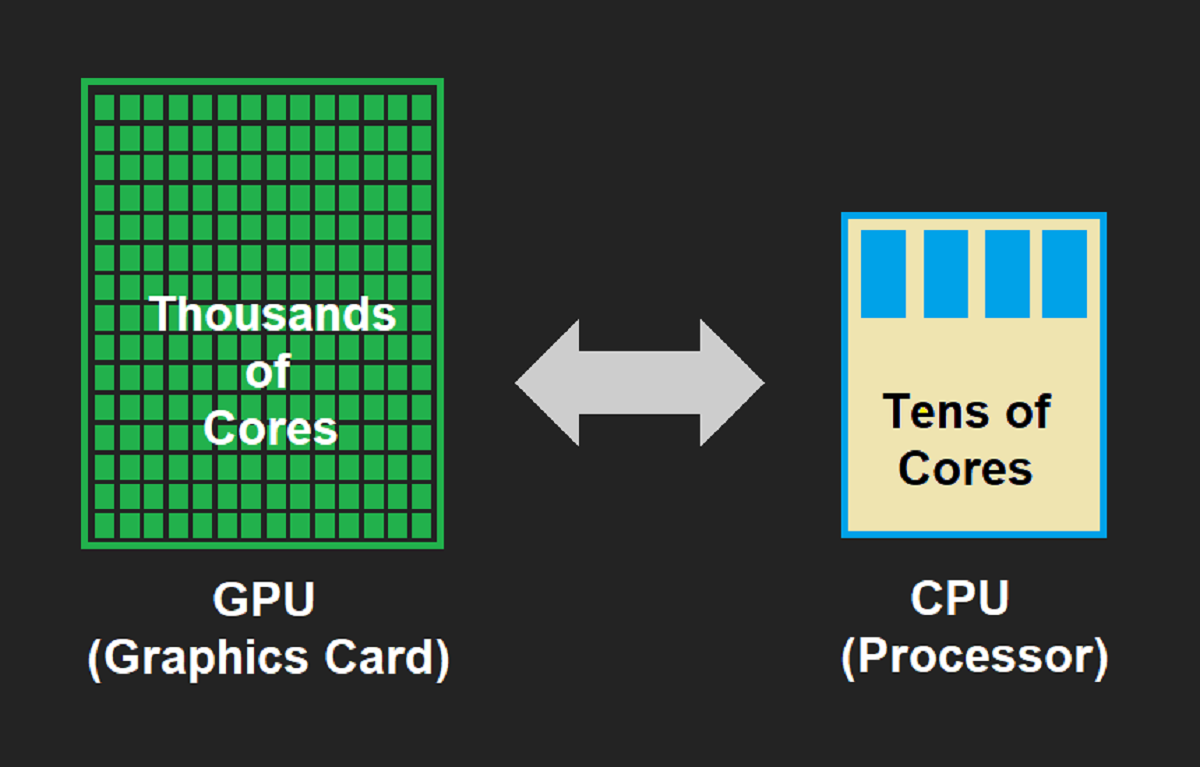

GPU programming involves utilizing the processing power of graphics processing units (GPUs) for non-graphical tasks. A modern GPU contains thousands of small cores, which makes it well suited to running the same operation on many data elements at once. Central processing units (CPUs), by contrast, have far fewer cores, each optimized to run a single thread of instructions as quickly as possible. GPUs trade that per-thread speed for throughput, which significantly boosts performance for computations that can be split into many independent pieces.

Traditionally, GPUs were primarily used for rendering graphics in video games and other visually demanding applications. However, with advancements in programming techniques, developers have leveraged the computational power of GPUs to perform complex calculations in fields such as scientific research, machine learning, and data analysis.

GPU programming involves writing code that executes on the GPU, using platforms such as CUDA (Compute Unified Device Architecture, NVIDIA’s platform for its own GPUs) and OpenCL (Open Computing Language, an open standard that targets hardware from multiple vendors), or libraries built on top of them. These platforms provide the language extensions, compilers, and runtime APIs needed to tap into the parallel processing capabilities of GPUs.

One of the key concepts in GPU programming is the idea of data parallelism. In data parallelism, large sets of data are divided into smaller chunks, and each chunk is assigned to a separate GPU core. These individual cores then execute the same operations on their respective chunks simultaneously, allowing for faster processing and improved performance.
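The chunk-and-apply pattern described above can be sketched in a few lines of Python. This is a CPU-side illustration only: Python threads stand in for GPU threads, so it shows the structure of data parallelism rather than its speed. The names `split_into_chunks`, `scale_chunk`, and `data_parallel_scale` are hypothetical and chosen for this example.

```python
from concurrent.futures import ThreadPoolExecutor


def split_into_chunks(data, num_chunks):
    """Split data into num_chunks contiguous chunks of nearly equal size."""
    chunk_size, remainder = divmod(len(data), num_chunks)
    chunks, start = [], 0
    for i in range(num_chunks):
        # The first `remainder` chunks take one extra element.
        end = start + chunk_size + (1 if i < remainder else 0)
        chunks.append(data[start:end])
        start = end
    return chunks


def scale_chunk(chunk, factor=2.0):
    """The single operation that every worker applies to its own chunk."""
    return [factor * x for x in chunk]


def data_parallel_scale(data, num_workers=4):
    """Apply scale_chunk to every chunk, then stitch the results together."""
    chunks = split_into_chunks(data, num_workers)
    with ThreadPoolExecutor(max_workers=num_workers) as pool:
        # map() preserves chunk order, so the output lines up with the input.
        results = list(pool.map(scale_chunk, chunks))
    return [x for chunk in results for x in chunk]
```

Every worker runs the same function on different data and never needs another worker’s result. That independence is what lets a GPU spread the work across thousands of cores.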

Overall, GPU programming enables developers to harness the immense computing power of GPUs for a wide range of applications beyond graphics rendering. By effectively utilizing the parallel processing capabilities of GPUs, developers can significantly speed up computations and perform complex calculations in a more efficient manner.

Why is GPU Programming Important?

GPU programming has emerged as a crucial technology in various fields, offering significant advantages over traditional CPU-based computing. Here are some key reasons why GPU programming is important:

- Enhanced Performance: GPUs are designed to handle massive amounts of data simultaneously, allowing for highly parallel processing. This parallelism results in accelerated computations and improved performance, especially for tasks involving complex calculations or large datasets.

- Parallel Processing: Unlike CPUs, which typically have from a few to a few dozen cores, GPUs house thousands of smaller cores. This parallel processing capability enables the simultaneous execution of numerous threads, making GPUs ideal for tasks that require massive parallelism, such as machine learning algorithms, simulations, and scientific calculations.

- Handling Large Data Sets: With the increasing availability of big data, efficiently processing and analyzing vast amounts of data has become a necessity. GPUs excel at processing large datasets, thanks to their ability to perform multiple computations simultaneously. This makes GPU programming essential for applications such as data science, deep learning, and image processing.

- Energy Efficiency: For highly parallel workloads, GPUs often deliver more performance per watt than CPUs. A GPU draws more power in absolute terms, but because it finishes parallel work much sooner, the energy used per task can be lower. This makes GPU programming attractive for green computing and energy-conscious applications.

- Accelerating Specific Workloads: Certain tasks can benefit greatly from GPU acceleration. For instance, rendering realistic graphics in video games, simulating fluid dynamics, or training complex neural networks requires massive computational power. GPU programming enables these workloads to be processed faster, leading to better user experiences, faster simulations, and more accurate predictions.

In summary, GPU programming holds immense importance in the computing world. Its ability to optimize performance, handle large datasets, and provide energy-efficient computing makes it a valuable tool across various industries and applications.

Benefits of GPU Programming

GPU programming offers a multitude of benefits that have transformed the landscape of computing. Let’s explore some of the key advantages:

- Improved Performance: GPU programming harnesses the parallel processing power of GPUs, resulting in significant performance improvements compared to traditional CPU-based computing. By distributing tasks across thousands of cores, GPU programming enables simultaneous execution of multiple operations, leading to faster computations and reduced processing times.

- Parallel Processing: One of the standout features of GPU programming is its ability to process tasks in parallel. GPUs consist of numerous smaller cores that can work simultaneously, allowing for efficient execution of highly parallelizable tasks. This makes it a game-changer for applications that involve complex calculations, simulations, and data processing.

- Handling Large Data Sets: With the exponential growth of data in various industries, processing and analyzing large datasets has become more challenging. GPU programming addresses this with its ability to handle massive amounts of data in parallel. This enables faster data processing, accelerating tasks such as data analytics, machine learning, and scientific simulations.

- Reduced Latency: In real-time applications, the delay between an input and the visible result matters. GPUs are built for throughput rather than low latency on any single operation, but by finishing each frame’s work sooner they shorten the end-to-end time per frame. This is particularly beneficial for real-time graphics and gaming, where high frame rates and responsive user interactions are crucial.

- Energy Efficiency: Although GPUs draw considerable power, they often achieve a higher performance-to-power ratio than CPUs on parallel workloads. Running computationally intensive tasks on GPUs can therefore reduce the energy consumed per task, leading to cost savings and a smaller environmental footprint.

These benefits have made GPU programming indispensable in various applications. From graphics and gaming to machine learning and scientific computing, leveraging the power of GPUs has opened up new possibilities for accelerated processing, improved performance, and enhanced user experiences.

Improved Performance

One of the primary advantages of GPU programming is the significant improvement in performance it offers compared to traditional CPU-based computing.

GPUs are designed to handle massive amounts of data simultaneously, thanks to their highly parallel architecture. Unlike CPUs, which typically have from a few to a few dozen cores, GPUs house thousands of smaller cores that can execute many instructions in parallel.

This parallel processing capability allows GPU programming to excel in tasks that require intensive computations or data processing. By distributing the workload across multiple cores and executing them simultaneously, GPUs can deliver faster results.

For example, in graphics rendering, GPU programming enables real-time rendering of complex 3D scenes with high frame rates. The parallel processing power of GPUs allows for quick calculations of lighting, shadows, and textures, resulting in visually stunning and immersive experiences in video games or visual effects in movies.

But the benefits of improved performance go beyond gaming and graphics. GPU programming has found applications in fields such as machine learning, scientific simulations, and data analytics. The ability to process massive datasets and perform complex calculations in parallel greatly enhances the speed and efficiency of these tasks.

Machine learning algorithms, for instance, often require the processing of large amounts of data and the training of complex models. The parallel processing power of GPUs allows for faster model training and more efficient data analysis, enabling quicker insights and predictions.

In scientific simulations, GPU programming enables researchers and scientists to simulate complex phenomena such as fluid dynamics, weather patterns, or molecular interactions. These simulations require vast computational power, and utilizing the parallel processing capabilities of GPUs significantly speeds up the simulations, leading to faster discoveries and insights.

Furthermore, GPU programming has also proven valuable in financial analytics, where high-performance computing is essential for handling vast amounts of data and executing complex financial models efficiently. The parallel processing power of GPUs significantly reduces the time required for performing risk analysis, portfolio optimization, or pricing complex derivative instruments.

In summary, GPU programming’s ability to harness parallel processing power allows for improved performance in a variety of fields. From real-time graphics rendering to machine learning and scientific simulations, GPU programming enables faster computations, quicker insights, and enhanced user experiences.

Parallel Processing

Parallel processing is at the core of GPU programming and is a fundamental concept that distinguishes it from traditional CPU-based computing. The ability to execute multiple tasks simultaneously on thousands of cores is what makes GPUs a powerful tool for achieving high-performance computing.

In GPU programming, parallel processing refers to the execution of multiple threads or tasks simultaneously. This is accomplished by dividing a large task into smaller subtasks and assigning them to different cores within the GPU. Each core operates independently on its assigned task, performing computations concurrently.

By leveraging parallel processing, GPU programming can significantly speed up computations and improve overall performance. This is particularly beneficial for tasks that can be easily divided into parallelizable subtasks, such as matrix operations, image processing, and simulations.

For example, consider a matrix multiplication task. A straightforward CPU implementation computes the elements of the result one after another. Each output element, however, depends only on one row of the first matrix and one column of the second, so the output can be divided into smaller blocks and each block computed simultaneously by different cores within the GPU. This parallel processing approach accelerates the matrix multiplication significantly.
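To make the block decomposition concrete, here is a minimal pure-Python sketch. It runs sequentially on the CPU, so it shows only how the output is partitioned into tiles, not GPU performance. The function name `matmul_blocked` and the `block` parameter are assumptions made for this illustration.

```python
def matmul_blocked(a, b, block=2):
    """Multiply matrices a (n x k) and b (k x m) one output tile at a time."""
    n, k, m = len(a), len(b), len(b[0])
    c = [[0.0] * m for _ in range(n)]
    # Each (row0, col0) pair names one output tile. No two tiles write to the
    # same element of c, which is what would let a GPU compute them concurrently.
    for row0 in range(0, n, block):
        for col0 in range(0, m, block):
            for i in range(row0, min(row0 + block, n)):
                for j in range(col0, min(col0 + block, m)):
                    c[i][j] = sum(a[i][p] * b[p][j] for p in range(k))
    return c
```

In a real GPU implementation, each tile would typically be handled by one group of threads, with the two outer loops replaced by the hardware’s thread-block indices.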

The parallel processing capabilities of GPUs also find extensive use in machine learning and deep learning algorithms. Training neural networks involves performing numerous matrix operations, making them highly suitable for parallel execution on GPUs. The ability to process multiple training examples simultaneously results in faster model training and improved training efficiency.

Another area where parallel processing shines is scientific simulations. Complex simulations, such as fluid dynamics or particle physics simulations, involve solving numerous equations concurrently. GPUs can distribute these computational tasks across their cores, allowing for faster and more realistic simulations.

Furthermore, parallel processing enables real-time graphics rendering in video games and computer-generated animations. GPUs can process geometry, textures, lighting, and other graphical components simultaneously, resulting in smooth rendering and immersive visual experiences.

It is important to note that not all tasks can benefit equally from parallel processing. Some tasks involve dependencies or sequential steps that cannot be parallelized effectively. In such cases, the performance gains may be limited. However, developers can still leverage the parallel processing capabilities of GPUs by identifying and optimizing the parallelizable aspects of the task.
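One common way to quantify this limit is Amdahl’s law, which bounds the overall speedup by the fraction of the work that must remain sequential. The article does not name the law itself, so treat the sketch below as an added illustration; `amdahl_speedup` is a hypothetical helper name.

```python
def amdahl_speedup(parallel_fraction, num_cores):
    """Upper bound on speedup when only part of the work can run in parallel."""
    if not 0.0 <= parallel_fraction <= 1.0:
        raise ValueError("parallel_fraction must be between 0 and 1")
    serial_fraction = 1.0 - parallel_fraction
    # The serial part takes the same time regardless of core count; only the
    # parallel part shrinks as cores are added.
    return 1.0 / (serial_fraction + parallel_fraction / num_cores)
```

If 90% of a task can be parallelized, the bound stays below 10x however many cores are added, because the remaining 10% still runs sequentially. This is why identifying and shrinking the sequential portion matters as much as adding cores.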

In summary, parallel processing is a key feature of GPU programming that enables the simultaneous execution of multiple tasks. This allows for faster computations, improved performance, and enhanced efficiency in a wide range of applications, including machine learning, scientific simulations, and real-time graphics rendering.

Handling Large Data Sets

With the exponential growth of data in various industries, efficiently processing and analyzing large data sets has become increasingly challenging. GPU programming offers a powerful solution for handling and processing massive amounts of data in parallel.

Traditional CPUs have limited parallel processing capabilities due to their relatively small number of cores. In contrast, GPUs are equipped with thousands of smaller cores, allowing them to process data in parallel at a much larger scale.

When it comes to handling large data sets, GPU programming provides several advantages:

- Parallel Memory Access: GPU memory is designed for high bandwidth, so many threads can read and write large arrays at the same time. Keeping thousands of cores supplied with data in this way accelerates data processing tasks and reduces overall execution time.

- Data Parallelism: GPU programming employs a technique called data parallelism, where large data sets are divided into smaller chunks, and each chunk is processed by an individual GPU core. This parallelism allows for distributed and simultaneous processing of data, resulting in faster execution and improved performance.

- Accelerated Data Analytics: Data analytics tasks, such as statistical analysis, data mining, and machine learning, often involve processing large amounts of data. GPU programming can leverage its parallel processing power to accelerate data analytics algorithms, enabling quicker insights, more accurate predictions, and faster decision-making.

- Real-Time Processing: In applications where the processing of large data sets in real-time is required, such as video processing or sensor data analysis, GPU programming proves invaluable. The parallel processing capabilities of GPUs allow for the simultaneous handling of multiple data streams, ensuring timely analysis and response.

- Scientific Simulations: Scientific simulations often deal with massive datasets and complex calculations. GPU programming facilitates faster simulations by distributing the computational workload across multiple GPU cores, enabling researchers and scientists to analyze large datasets and simulate intricate phenomena more efficiently.
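Aggregations such as the sums behind statistical analysis are commonly parallelized with a tree-shaped reduction, in which independent pairs are combined in rounds. The sketch below mimics that pattern sequentially in Python. It is an illustration of the idea rather than GPU code, and `tree_reduce_sum` is a hypothetical name.

```python
def tree_reduce_sum(values):
    """Sum values by repeatedly adding pairs, roughly halving the list each round."""
    level = list(values)
    if not level:
        return 0
    while len(level) > 1:
        # Every pair in this round is independent of the others, so on a GPU
        # all of these additions could be carried out at the same time.
        paired = [level[i] + level[i + 1] for i in range(0, len(level) - 1, 2)]
        if len(level) % 2 == 1:
            paired.append(level[-1])  # an unpaired last element is carried over
        level = paired
    return level[0]
```

A plain loop needs one step per element, whereas this arrangement needs only about log2(n) rounds when each round’s additions run in parallel.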

By leveraging the power of GPUs, developers can tackle the challenges posed by large data sets and achieve faster, more efficient data processing. GPU programming enables organizations to unlock the value hidden within their vast amounts of data, leading to better decision-making, improved insights, and enhanced productivity across various disciplines.

Applications of GPU Programming

GPU programming has found widespread applications across various industries and fields, harnessing the immense computational power of graphics processing units for a wide range of tasks. Let’s explore some of the key areas where GPU programming is making a significant impact:

- Graphics and Gaming: GPU programming has revolutionized the gaming industry by enabling stunning visual effects and realistic graphics. The parallel processing power of GPUs allows for real-time graphics rendering, dynamic lighting, and advanced shader effects, creating immersive gaming experiences.

- Machine Learning and Artificial Intelligence: Machine learning algorithms heavily rely on processing large amounts of data. GPU programming accelerates the training and execution of neural networks, improving the efficiency of tasks such as image recognition, natural language processing, and predictive analytics.

- Scientific and Engineering Computing: GPU programming plays a vital role in scientific simulations, such as weather prediction, fluid dynamics, and molecular dynamics. GPUs can handle the immense computational workload of these simulations, enabling faster and more accurate results.

- Cryptocurrency Mining: Proof-of-work mining requires intense computational power, and GPUs can perform the necessary hash calculations far more efficiently than CPUs. GPU programming was the go-to method for mining Ethereum until the network moved to proof of stake in 2022, and it is still used for some other coins. Bitcoin mining, by contrast, is now dominated by specialized ASIC hardware.

- Data Analytics and Big Data Processing: The ability to handle large datasets and perform complex computations in parallel makes GPU programming valuable for data analytics tasks. It accelerates data processing, enables faster data visualization, and improves the efficiency of data mining and pattern recognition algorithms.

- Medical Imaging and Healthcare: GPU programming has shown significant potential in medical imaging applications, such as MRI and CT scans. GPUs can accelerate image reconstruction and processing tasks, leading to quicker and more accurate diagnosis and treatment planning.

- Computer Vision: Computer vision applications, such as object detection, face recognition, and autonomous driving, heavily rely on analyzing and processing visual data. GPU programming provides the necessary power for real-time analysis and decision-making in these applications.

- Virtual Reality and Augmented Reality: GPU programming is crucial for delivering immersive experiences in virtual reality (VR) and augmented reality (AR) applications. It enables real-time rendering of high-resolution graphics and ensures smooth and responsive user interactions.

- Financial Modeling and Risk Analysis: GPU programming helps accelerate financial modeling, portfolio optimization, and risk analysis tasks. It enables faster calculations for pricing complex financial derivatives and performing risk simulations, improving decision-making in the finance industry.

These are just a few examples of the wide-ranging applications of GPU programming. As technology continues to advance, we can expect to see further utilization of GPU programming in various other domains, as more industries recognize and harness the benefits of GPU-accelerated processing.

Graphics and Gaming

One of the most significant areas where GPU programming has made a tremendous impact is in the realm of graphics and gaming. The parallel processing power of GPUs has revolutionized the way visual effects are rendered and has enabled the creation of immersive gaming experiences.

GPU programming has allowed game developers to push the boundaries of graphics, delivering stunning visuals and realistic simulations. The parallel nature of GPU processing enables complex calculations and visual effects to be applied in real-time, enhancing the overall visual fidelity of games.

Real-time rendering of graphics is made possible through GPU programming techniques such as vertex and fragment shaders. These shaders define how objects are transformed and how they appear onscreen, allowing for impressive lighting, shading, and special effects.
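Real shaders are written in shading languages such as GLSL or HLSL and run on the GPU. As a rough CPU-side stand-in, the Python sketch below shows the kind of per-pixel computation a fragment shader performs, using simple Lambertian (diffuse) lighting as an assumed example. The function names are hypothetical.

```python
import math


def normalize(v):
    """Scale a 3D vector to unit length."""
    length = math.sqrt(sum(c * c for c in v))
    return tuple(c / length for c in v)


def shade_fragment(normal, light_dir, base_color):
    """Lambertian (diffuse) shading for one pixel.

    This plays the role of a fragment shader body: it sees only the inputs
    for its own pixel, so every pixel can be shaded independently.
    """
    n, light = normalize(normal), normalize(light_dir)
    # Surfaces facing away from the light receive no diffuse contribution.
    intensity = max(0.0, sum(a * b for a, b in zip(n, light)))
    return tuple(channel * intensity for channel in base_color)


def shade_image(normals, light_dir, base_color):
    """Run shade_fragment over a 2D grid of per-pixel surface normals."""
    return [[shade_fragment(n, light_dir, base_color) for n in row] for row in normals]
```

Because `shade_fragment` never reads another pixel’s result, a GPU can run it for every pixel of a frame at once.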

With GPU programming, game developers can create visually captivating scenes, including realistic lighting models, dynamic shadows, detailed textures, and advanced particle systems. The ability to perform these computations in parallel on the GPU significantly boosts the overall visual quality and immersion of games.

Furthermore, GPU programming facilitates the implementation of complex physics simulations in games. With the help of physics engines and GPUs, developers can accurately simulate the behavior of rigid bodies, cloth, fluids, and other dynamic elements. This adds a level of realism and interactivity to game worlds, enhancing the gaming experience for players.

Another significant advancement in GPU programming for gaming is the use of graphics APIs (Application Programming Interfaces) such as DirectX and OpenGL. These APIs provide a standardized way for game developers to access and utilize the power of GPUs for graphics rendering. By leveraging these APIs, developers can tap into the full potential of GPU programming while ensuring compatibility across different hardware platforms.

In addition to graphics rendering, GPU programming plays a vital role in optimizing game performance. By offloading certain computationally intensive tasks to the GPU, such as physics calculations or AI simulations, CPU resources are freed up, allowing for smoother gameplay and improved frame rates.

Moreover, GPU programming has facilitated the rise of virtual reality (VR) and augmented reality (AR) gaming. The ability to render high-resolution graphics in real-time, coupled with the responsiveness of GPU processing, enables immersive and interactive experiences in virtual and augmented environments.

In summary, GPU programming has transformed the world of gaming, enabling visually stunning graphics, complex physics simulations, and interactive virtual reality experiences. It has expanded the possibilities for game developers, pushing the boundaries of what is visually achievable and providing gamers with more immersive and realistic gaming experiences.

Machine Learning and Artificial Intelligence

Machine learning (ML) and artificial intelligence (AI) have experienced significant advancements in recent years, and GPU programming has played a crucial role in their development. The parallel processing power of GPUs has revolutionized the field, enabling faster model training, improved accuracy, and breakthroughs in various AI applications.

GPU programming accelerates ML and AI algorithms by harnessing the highly parallel architecture of GPUs. ML algorithms, such as deep learning neural networks, involve performing numerous matrix multiplications and calculations. GPUs excel in these operations, as they can process multiple computations simultaneously, significantly speeding up the training and inference processes.

Training deep neural networks, often involving millions of parameters, can be computationally demanding. GPU programming allows for efficient gradient computations and weight updates across numerous network layers, reducing training time from potentially weeks to days or even hours.
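A toy example helps show where the parallelism in training comes from. The sketch below fits a one-parameter model, y = w * x, with mini-batch gradient descent. The model, the squared-error loss, and all function names are assumptions chosen for brevity; real frameworks operate on large tensors, not Python lists.

```python
def per_example_gradient(w, x, y):
    """Gradient of the squared error (w * x - y) ** 2 with respect to w."""
    return 2.0 * (w * x - y) * x


def sgd_step(w, batch, learning_rate=0.01):
    """One mini-batch gradient descent update for the model y = w * x."""
    # Each example's gradient depends only on w and that example, so a GPU
    # could compute all of them concurrently before the averaging step.
    grads = [per_example_gradient(w, x, y) for x, y in batch]
    mean_grad = sum(grads) / len(grads)
    return w - learning_rate * mean_grad


def train(batch, steps=200, learning_rate=0.01):
    """Repeat sgd_step starting from w = 0."""
    w = 0.0
    for _ in range(steps):
        w = sgd_step(w, batch, learning_rate)
    return w
```

With a batch such as `[(1.0, 3.0), (2.0, 6.0), (3.0, 9.0)]`, `train` moves `w` toward 3. The per-example gradients are the part a GPU parallelizes, while the sequence of weight updates remains sequential.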

Additionally, GPU programming enables the deployment of AI models for real-time inference. With GPUs, complex AI models can process many inputs simultaneously, allowing for rapid decision-making in various applications, including image recognition, natural language processing, and autonomous vehicles.

Deep learning architectures, such as convolutional neural networks (CNNs), recurrent neural networks (RNNs), and generative adversarial networks (GANs), have all benefitted from GPU programming. These architectures power advancements in computer vision, speech recognition, language translation, and many other AI tasks.

Besides model training, GPU programming has also proven valuable in enabling large-scale data preprocessing and feature extraction. Processing massive datasets, such as images or text, can be accelerated by distributing the workload across GPU cores, allowing for faster data preparation and efficient feature engineering.

Furthermore, GPU programming has facilitated the development of AI in various domains, including healthcare, finance, and autonomous systems. GPUs have been instrumental in medical image analysis, allowing for faster diagnostics, accurate disease classification, and better treatment planning.

In the financial sector, GPU programming has improved risk analysis, algorithmic trading, and fraud detection. The parallel processing capabilities of GPUs enable the rapid analysis of vast amounts of financial data, leading to more informed decision-making and timely responses to market conditions.

GPU programming has also played a vital role in autonomous systems, such as self-driving cars and robotics. Real-time object detection, tracking, and decision-making require fast and efficient processing, which GPUs deliver through their parallel computing power.

In summary, GPU programming has revolutionized the field of machine learning and artificial intelligence. By leveraging the parallel processing capabilities of GPUs, ML and AI algorithms can be trained faster, inference can be performed in real-time, and complex AI models can be deployed efficiently. GPU programming has opened up new possibilities and accelerated advancements in computer vision, natural language processing, healthcare, finance, and various other AI-driven applications.

Scientific and Engineering Computing

Scientific and engineering computing tasks often involve complex calculations and simulations that demand significant computational power. GPU programming has emerged as a game-changer in these fields, enabling researchers and engineers to tackle complex problems more efficiently and effectively.

One of the key advantages of GPU programming in scientific and engineering computing is its ability to handle computationally intensive simulations. Whether it’s simulating the behavior of fluid dynamics, modeling climate patterns, or analyzing molecular interactions, GPUs excel at performing parallel computations on large datasets.

By leveraging the parallel processing power of GPUs, researchers can dramatically reduce the time required for simulations. The massive number of cores available in GPUs enables the simultaneous execution of multiple calculations, significantly accelerating the simulation process. This allows scientists and engineers to gain insights and make faster progress in their research.

Another critical aspect of scientific and engineering computing is data analysis. Whether analyzing large datasets from experiments or processing massive amounts of sensor data, GPU programming provides the computational muscle needed for fast and efficient data analysis.

GPU programming allows for parallel processing of data, enabling researchers to extract meaningful information and patterns more quickly. From analyzing complex data sets in genomics to processing seismic data for oil exploration, GPU programming provides researchers with the tools to handle and analyze vast amounts of data in a timely manner.

Additionally, GPU programming has found applications in computational chemistry, where it accelerates processes such as molecular dynamics simulations and quantum chemistry calculations. The parallel processing power of GPUs enables researchers to study complex chemical reactions and analyze the behavior of molecules more efficiently and accurately.

Furthermore, GPU programming is paving the way for advancements in materials science and engineering. Researchers can use GPUs to simulate the behavior of materials under different conditions, optimize their performance, and discover new materials with desired properties.

In the field of structural engineering, GPU programming allows engineers to simulate and analyze complex systems, predicting their behavior under various conditions. This enhances the design process, ensuring structural safety and efficiency in building and infrastructure projects.

Overall, GPU programming has transformed scientific and engineering computing, enabling faster simulations, efficient data analysis, and enhanced modeling capabilities. GPU-accelerated computing empowers researchers and engineers to tackle complex challenges in fields ranging from physics and chemistry to materials science and structural engineering.

Cryptocurrency Mining

Proof-of-work cryptocurrency mining requires significant computational power, and GPUs were central to it for years: early Bitcoin mining moved from CPUs to GPUs, and Ethereum was mined largely on GPUs until it switched to proof of stake in 2022. GPU programming changed the field by enabling miners to perform the required calculations far more efficiently than on CPUs.

GPU programming provides a massive boost to cryptocurrency mining by leveraging the parallel processing capabilities of graphics processing units. Unlike traditional CPU-based mining, where each core performs computations sequentially, GPU mining allows for the simultaneous execution of multiple calculations across thousands of GPU cores.

Mining is essentially a brute-force search: miners hash candidate block data with different nonce values until a result falls below a network-defined target, which earns the right to add a block and receive newly issued coins. Because each attempt is independent of the others, hashing algorithms such as the SHA-256 algorithm used by Bitcoin can be executed in parallel across GPU cores, significantly increasing the number of attempts per second.
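The search that mining performs can be sketched with Python’s standard `hashlib` module. This is a simplified illustration: the header bytes and the target are placeholders, not Bitcoin’s real block header layout or target encoding, and the code runs sequentially on the CPU. The function names are hypothetical.

```python
import hashlib


def double_sha256(data):
    """SHA-256 applied twice, the hash construction used by Bitcoin."""
    return hashlib.sha256(hashlib.sha256(data).digest()).digest()


def search_nonce_range(header, start, stop, target):
    """Try nonces in [start, stop) and return the first one that meets target.

    Each nonce is checked independently of the others. That independence is
    why the search can be split across many GPU threads, each given its own
    range.
    """
    for nonce in range(start, stop):
        candidate = header + nonce.to_bytes(4, "little")
        digest_value = int.from_bytes(double_sha256(candidate), "big")
        if digest_value < target:
            return nonce
    return None
```

A GPU miner would give each thread a different slice of the nonce space. A lower target makes a qualifying hash rarer, which is how a network raises its mining difficulty.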

GPU programming became a popular approach to cryptocurrency mining for several reasons:

- Increased Hash Rate: The parallel processing power of GPUs enables miners to achieve higher hash rates, which directly impact mining performance. A higher hash rate translates to a greater number of calculations made per second, enhancing the likelihood of successfully mining new blocks and earning rewards.

- Energy Efficiency: GPUs offer a better performance-to-energy ratio compared to CPUs when it comes to cryptocurrency mining. The parallel architecture of GPUs allows for more efficient utilization of resources, leading to reduced energy consumption and lower operational costs for miners.

- Flexibility and Compatibility: GPU programming is compatible with various mining software and algorithms, giving miners flexibility in choosing which cryptocurrencies to mine. Unlike an ASIC, which is built for a single hashing algorithm, a GPU can be reprogrammed to mine a different coin when requirements or profitability change.

- Market Liquidity: Cryptocurrencies mined using GPU programming can be easily exchanged or sold on cryptocurrency exchanges. This liquidity allows miners to convert their mining rewards into other cryptocurrencies or fiat currencies, providing opportunities for profit-making and investment diversification.

It is worth noting that as more computing power joins a cryptocurrency’s network, its mining difficulty also rises. Miners constantly strive to enhance their mining capabilities, and GPU programming has been an essential tool in this pursuit. Miners often invest in powerful GPUs and assemble mining rigs consisting of multiple GPUs to maximize their mining potential.

However, it is important to consider that as more miners enter the market, competition grows, and the mining landscape becomes more challenging. For some cryptocurrencies, specialized hardware known as ASICs (Application-Specific Integrated Circuits) offers far greater efficiency and mining power than GPUs; Bitcoin mining, for example, is now carried out almost entirely on ASICs.

Nevertheless, GPU programming remains a popular and accessible option for many cryptocurrency miners, enabling them to participate in the mining process and contribute to the decentralized ledger systems that cryptocurrencies are built upon.

In summary, GPU programming reshaped cryptocurrency mining by providing efficient, flexible computational power. It enables miners to achieve higher hash rates than CPUs, uses less energy per hash, and offers flexibility in mining different cryptocurrencies. Although ASICs and the move of some networks to proof of stake have narrowed its role, GPU mining made participation widely accessible and contributed to the decentralized nature of cryptocurrencies.

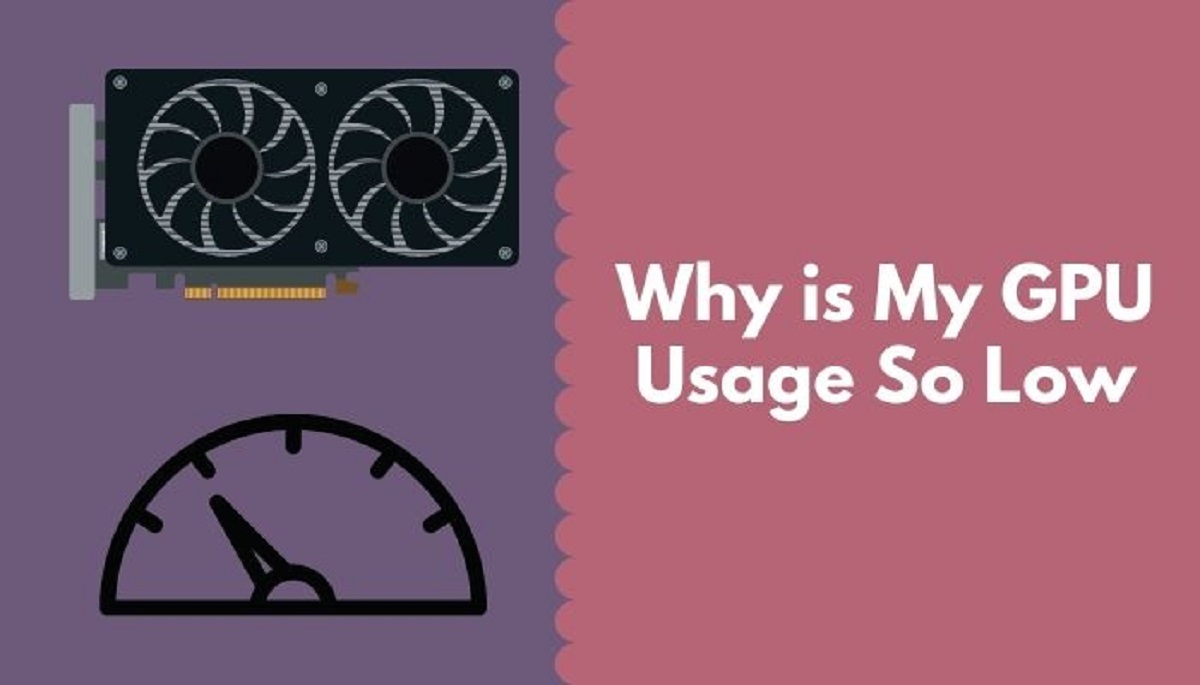

Challenges and Limitations of GPU Programming

While GPU programming offers significant benefits and has revolutionized various fields, it also comes with a set of challenges and limitations that developers and users need to consider. Let’s explore some of the main challenges:

- High Cost and Power Consumption: GPUs can be expensive, especially when configuring a system with multiple high-end GPUs. High-end GPUs also tend to draw more power than CPUs under load, which increases electricity costs and cooling requirements.

- Limited Memory and Bandwidth: A GPU’s on-board memory is usually much smaller than the system memory (RAM) available to the CPU. This can be a hindrance when working with large datasets or complex algorithms that require extensive memory access. Moreover, the bandwidth of the link between the GPU and the rest of the system can become a bottleneck, affecting overall performance.

- Programming Complexity: Compared to traditional CPU programming, GPU programming can be more challenging due to its unique architecture and programming model. Developers need to learn specialized platforms and frameworks, such as CUDA or OpenCL, and understand GPU-specific optimizations to fully leverage the parallel processing capabilities.

- Limited Software Support: GPU programming has been adopted by various industries and fields, but not all software tools and libraries have comprehensive support for GPU acceleration. This can limit the availability and compatibility of off-the-shelf software solutions, requiring developers to invest time and resources into custom implementations.

- Algorithmic Limitations: While GPUs excel at highly parallelizable tasks, not all algorithms or computational tasks can fully benefit from GPU programming. Some algorithms are inherently sequential or have complex dependencies, making them less suitable for parallel execution on GPUs. In such cases, the performance gains may be limited.

- Scale-Dependent Performance: The performance gains achieved through GPU programming depend on the scale and specifics of the task at hand. For small or weakly parallel tasks, fixed overheads such as launching work on the GPU and copying data to and from it can outweigh the gains, so these tasks may run no faster than they would on a CPU. As a result, developers need to assess the applicability of GPU programming for their specific use cases.

Overcoming these challenges requires careful consideration, along with proper optimization and resource management. It is important to weigh the benefits and limitations of GPU programming in relation to the specific requirements and constraints of the intended applications.
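One common way to reason about the algorithmic limitation described above is Amdahl's law, which bounds the speedup of a program when only part of it can run in parallel. The sketch below is an idealized model rather than a performance prediction: it ignores data transfer and launch overheads, and the function name `amdahl_speedup` is our own.

```python
def amdahl_speedup(parallel_fraction: float, n_workers: int) -> float:
    """Ideal speedup when `parallel_fraction` of the runtime is spread over `n_workers`.

    The serial part (1 - parallel_fraction) always runs at its original speed.
    """
    if not 0.0 <= parallel_fraction <= 1.0:
        raise ValueError("parallel_fraction must be between 0 and 1")
    if n_workers < 1:
        raise ValueError("n_workers must be at least 1")
    serial_fraction = 1.0 - parallel_fraction
    return 1.0 / (serial_fraction + parallel_fraction / n_workers)


if __name__ == "__main__":
    # Even with 1000 workers, a 10% serial part keeps the speedup below 10x.
    for fraction in (0.5, 0.9, 0.99):
        print(fraction, round(amdahl_speedup(fraction, 1000), 2))
```

In this model, a task that is 90% parallelizable can never run more than ten times faster no matter how many cores are added, which is why inherently sequential algorithms gain little from a GPU.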

Despite these challenges, the continuous advancements in GPU technology and the growing ecosystem of GPU programming tools and libraries contribute to mitigating these limitations. Developers are continuously pushing the boundaries of GPU programming, finding innovative solutions and optimizing algorithms to maximize the benefits of GPU-accelerated computing.

In summary, while GPU programming offers significant advantages, it also poses challenges such as high cost, power consumption, programming complexity, limited software support, and specific algorithmic requirements. Understanding these limitations and making informed decisions about when and how to utilize GPU programming can lead to successful implementations and the realization of its full potential.

High Cost and Power Consumption

One of the major challenges of GPU programming is the high cost associated with acquiring and utilizing graphics processing units (GPUs). GPUs designed for high-performance computing tasks can be quite expensive, particularly those with the latest architecture and higher core counts. Building a system with multiple high-end GPUs can significantly increase the overall cost of a GPU programming setup.

In addition to the initial investment, the power consumption of GPUs is another factor to consider. High-end GPUs typically draw more power under load than central processing units (CPUs) due to their highly parallel architecture and extensive computational capabilities. This higher power consumption leads to increased electricity costs and may require additional cooling infrastructure to dissipate the heat generated by the GPUs.
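The electricity cost mentioned above is straightforward to estimate. The following sketch shows the arithmetic; the wattage, running hours, and price per kilowatt-hour are hypothetical placeholder values, not measurements of any particular GPU or tariff.

```python
def energy_cost(power_watts: float, hours: float, price_per_kwh: float) -> float:
    """Cost of running a device drawing `power_watts` for `hours` hours."""
    kilowatt_hours = power_watts / 1000.0 * hours
    return kilowatt_hours * price_per_kwh


if __name__ == "__main__":
    # Hypothetical example: one 300 W GPU running around the clock for 30 days
    # at an assumed price of 0.15 per kWh.
    print(round(energy_cost(300, 24 * 30, 0.15), 2))
```

Multiplying the result by the number of GPUs in a system, and adding an allowance for cooling, gives a rough first estimate of running costs.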

For organizations or individuals looking to adopt GPU programming, the cost of procuring and maintaining the necessary hardware can be a significant barrier. Moreover, as technology advances, the cost of acquiring newer GPUs with improved performance may further escalate, requiring ongoing investments to stay up to date.

Despite the high cost, it is important to note that GPUs provide a significant boost in computing power and performance, which can offset the initial investment over the long term. The improved efficiency and accelerated processing capabilities of GPUs can result in faster time-to-solution and increased productivity, making them a worthwhile investment for certain applications.

To address the power consumption challenge, efforts have been made to develop more energy-efficient GPUs. GPU manufacturers have introduced power-saving features and technologies to reduce the overall energy consumption without sacrificing performance. Additionally, advancements in cooling technologies, such as liquid cooling, help manage and dissipate the heat generated by GPUs efficiently.

As technology continues to evolve, the performance delivered per dollar and per watt tends to improve with each GPU generation, which can make GPU programming more accessible and affordable over time. Furthermore, cloud-based GPU computing services provide an alternative to on-premises GPU installations. These services offer scalability, flexibility, and cost optimization, allowing users to pay for GPU resources on demand.

Therefore, while the initial cost and power consumption associated with GPU programming can be significant challenges, the potential benefits and advancements in technology provide opportunities for cost optimization and improved efficiency. Organizations and individuals should carefully evaluate the cost-benefit analysis, considering the specific requirements, long-term goals, and budgetary constraints in determining the feasibility and viability of GPU programming.

Limited Memory and Bandwidth

One of the key limitations of GPU programming is that the on-board memory of a graphics processing unit (GPU) is usually much smaller than the system memory (RAM) available to the central processing unit (CPU). This limitation can pose challenges when working with large datasets or complex algorithms that require extensive memory access.

The limited memory on GPUs can result in the need to partition or subdivide large datasets into smaller, more manageable portions that can fit within the available GPU memory. This may introduce additional complexity in terms of managing data movement between CPU and GPU memory or between different GPU memory spaces.
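The partitioning idea can be sketched in a few lines. This example runs entirely on the CPU: the loop body stands in for the "copy chunk to the GPU, process it, copy the result back" cycle, and the names `iter_chunks` and `chunked_sum_of_squares` as well as the chunk size are assumptions chosen for illustration.

```python
from typing import Iterator, List, Sequence


def iter_chunks(data: Sequence[int], max_items: int) -> Iterator[Sequence[int]]:
    """Yield consecutive slices of `data` holding at most `max_items` items each."""
    if max_items < 1:
        raise ValueError("max_items must be at least 1")
    for start in range(0, len(data), max_items):
        yield data[start:start + max_items]


def chunked_sum_of_squares(data: Sequence[int], max_items: int) -> int:
    """Process a dataset piece by piece, as if only `max_items` fit in GPU memory."""
    total = 0
    for chunk in iter_chunks(data, max_items):
        # Stand-in for: copy chunk to device, run the computation, copy result back.
        total += sum(x * x for x in chunk)
    return total


if __name__ == "__main__":
    values: List[int] = list(range(10))
    print(list(iter_chunks(values, 4)))
    print(chunked_sum_of_squares(values, 4))
```

The extra complexity the article mentions shows up even here: the last chunk may be shorter than the others, and partial results have to be combined correctly.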

In addition to the limited memory, the bandwidth, or the rate at which data can be transferred between the GPU and other system components, can also present a bottleneck in GPU programming. Transferring data between main memory and GPU memory can be a time-consuming process and impact overall performance.
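A back-of-the-envelope estimate helps show when transfers become the bottleneck. The sketch below simply divides the data size by the link bandwidth; the 16 GB/s figure and the compute times are hypothetical values picked for the example, and real transfers also incur latency that this model ignores.

```python
def transfer_seconds(num_bytes: float, bandwidth_bytes_per_s: float) -> float:
    """Idealized time to move `num_bytes` over a link of the given bandwidth."""
    if bandwidth_bytes_per_s <= 0:
        raise ValueError("bandwidth must be positive")
    return num_bytes / bandwidth_bytes_per_s


def transfer_dominates(num_bytes: float, bandwidth_bytes_per_s: float,
                       compute_seconds: float) -> bool:
    """True if moving the data takes longer than computing on it."""
    return transfer_seconds(num_bytes, bandwidth_bytes_per_s) > compute_seconds


if __name__ == "__main__":
    link = 16e9  # hypothetical link bandwidth: 16 GB/s
    print(transfer_seconds(8e9, link))         # time to move 8 GB
    print(transfer_dominates(8e9, link, 0.1))  # a fast kernel waits on the copy
```

When the transfer time exceeds the compute time, a faster GPU does not help; reducing or hiding the transfer does.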

However, it is important to note that advancements in GPU technology and memory infrastructure have helped to mitigate these limitations to some extent. Modern GPUs now offer larger onboard memory capacities, allowing for the processing of larger datasets without the need for excessive data transfers between CPU and GPU memory.

To optimize memory usage, developers often employ techniques such as data compression, data tiling, or hierarchical memory access patterns. These approaches help maximize the efficiency of memory allocation and minimize data transfers, improving overall performance.
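As an illustration of data tiling, here is a plain-Python sketch of a tiled matrix multiplication. On a GPU, each tile would typically be staged in fast on-chip memory and reused many times before the next tile is loaded; this CPU version only mirrors the loop structure, and the function name and tile size are assumptions for the example.

```python
from typing import List

Matrix = List[List[float]]


def matmul_tiled(a: Matrix, b: Matrix, tile: int = 2) -> Matrix:
    """Multiply `a` (n x k) by `b` (k x m), visiting the data tile by tile."""
    n, k, m = len(a), len(b), len(b[0])
    c = [[0.0] * m for _ in range(n)]
    for i0 in range(0, n, tile):          # tile rows of the output
        for j0 in range(0, m, tile):      # tile columns of the output
            for k0 in range(0, k, tile):  # matching tiles of the inputs
                # Work inside one tile touches only a small block of a, b, and c.
                for i in range(i0, min(i0 + tile, n)):
                    for j in range(j0, min(j0 + tile, m)):
                        acc = c[i][j]
                        for kk in range(k0, min(k0 + tile, k)):
                            acc += a[i][kk] * b[kk][j]
                        c[i][j] = acc
    return c


if __name__ == "__main__":
    print(matmul_tiled([[1, 2, 3], [4, 5, 6]], [[7, 8], [9, 10], [11, 12]]))
```

The result is identical to an untiled multiplication; only the order in which memory is visited changes, which is what improves data reuse.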

Moreover, GPU programming APIs and libraries provide memory management tools and techniques to aid developers in optimizing memory usage. These tools allow for intelligent allocation and utilization of memory, reducing the impact of the limited memory capacity on GPU performance.

When dealing with bandwidth limitations, strategies such as overlapping computation and data transfer or minimizing unnecessary data transfers can be employed to mitigate the impact on performance. Additionally, advancements in interconnect technologies, such as PCIe, have improved the bandwidth between the GPU and other system components, reducing the impact of data transfer on overall performance.
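The idea of overlapping computation and data transfer can be sketched with a simple prefetching pipeline. This is a CPU-only analogy using Python's standard `concurrent.futures` module: `load_chunk` stands in for a host-to-GPU copy and `compute` for a GPU kernel, and both names are hypothetical. Because of Python's global interpreter lock, this sketch illustrates the structure of the technique rather than delivering a real speedup.

```python
from concurrent.futures import ThreadPoolExecutor
from typing import List, Sequence


def load_chunk(data: Sequence[int], start: int, size: int) -> List[int]:
    """Stand-in for copying one chunk of data to the GPU."""
    return list(data[start:start + size])


def compute(chunk: Sequence[int]) -> int:
    """Stand-in for the GPU computation on one chunk."""
    return sum(x * x for x in chunk)


def pipelined_sum_of_squares(data: Sequence[int], chunk_size: int) -> int:
    """Start loading chunk i+1 before computing on chunk i."""
    total = 0
    pending = None  # future for the chunk whose load is in flight
    with ThreadPoolExecutor(max_workers=1) as pool:
        for start in range(0, len(data), chunk_size):
            next_load = pool.submit(load_chunk, data, start, chunk_size)
            if pending is not None:
                # The next load proceeds in the background during this compute.
                total += compute(pending.result())
            pending = next_load
        if pending is not None:
            total += compute(pending.result())
    return total


if __name__ == "__main__":
    print(pipelined_sum_of_squares(list(range(10)), 4))
```

GPU platforms offer their own mechanisms for the same pattern, such as asynchronous copies, but the principle is the one shown: keep the next piece of data moving while the current piece is being processed.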

While limited memory and bandwidth are challenges inherent to GPU programming, careful consideration and optimization can help mitigate their impact. By adopting efficient memory management techniques, utilizing available memory effectively, and minimizing data transfers, developers can work around these limitations and achieve optimal performance even with constrained resources.

As GPU technology continues to evolve, we can expect further advancements in memory capacity and bandwidth capabilities, further alleviating these limitations. It is essential for GPU programmers to stay updated with the latest developments and advancements in GPU architecture and memory infrastructure to leverage these improvements effectively.

In summary, limited memory and bandwidth are challenges associated with GPU programming. However, by employing memory optimization techniques and leveraging advancements in GPU technology, developers can mitigate the impact of these limitations and achieve optimal performance in their GPU-accelerated applications.

Programming Complexity

One of the challenges of GPU programming is the increased complexity compared to traditional CPU programming. GPU programming requires specialized knowledge, as it involves understanding the unique architecture and programming models of graphics processing units (GPUs).

GPU programming platforms and frameworks, such as CUDA (Compute Unified Device Architecture) and OpenCL (Open Computing Language), provide a programming interface for accessing and utilizing the parallel processing capabilities of GPUs. Both build on C-style languages, but they introduce new concepts and language extensions that take time to learn and master.

The programming model of GPUs differs significantly from that of CPUs. GPUs are structured for massive parallel processing, with thousands of processing cores executing computations simultaneously. This parallel nature of GPUs introduces challenges in designing algorithms and distributing workloads efficiently across the available cores.
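To make the idea of distributing work across cores more concrete, the sketch below imitates in plain Python how GPU programming models commonly assign one array element to each thread. Threads are grouped into blocks, and each thread derives the index of its element from its block number and its position within the block. The two nested loops here run sequentially and only simulate what a GPU would do simultaneously; the function names and block size are assumptions for the example.

```python
from typing import List


def grid_size(n_items: int, block_size: int) -> int:
    """Number of blocks needed so that every item gets a thread (ceiling division)."""
    return (n_items + block_size - 1) // block_size


def simulated_vector_add(a: List[int], b: List[int], block_size: int = 4) -> List[int]:
    """Add two vectors the way a grid of GPU threads would, one element per thread."""
    n = len(a)
    out = [0] * n
    for block_idx in range(grid_size(n, block_size)):
        for thread_idx in range(block_size):
            i = block_idx * block_size + thread_idx  # global index of this thread
            if i < n:  # the last block may contain threads with no element to handle
                out[i] = a[i] + b[i]
    return out


if __name__ == "__main__":
    print(grid_size(10, 4))
    print(simulated_vector_add([1, 2, 3, 4, 5], [10, 20, 30, 40, 50]))
```

The bounds check matters because the number of items is rarely an exact multiple of the block size, so some threads in the final block have nothing to do.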

Additionally, GPU programming often involves writing low-level code, which requires developers to have a deep understanding of hardware architecture and optimization techniques. Proper memory management, synchronization, and data movement between CPU and GPU are crucial aspects of GPU programming that require careful consideration.

Optimizing performance in GPU programming involves identifying and exploiting parallelism within algorithms, coordinating threads effectively, and minimizing data dependencies. Achieving efficient GPU utilization may require rethinking and restructuring code to take advantage of the massive parallelism offered by GPU architectures.
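A classic example of restructuring code to expose parallelism is summing a list. A simple running total is a chain of dependent additions, whereas a tree-shaped reduction performs many independent additions in each pass. The sketch below shows the tree version in plain Python; it runs sequentially, and the comments mark the part that a GPU could execute in parallel. The function name is our own.

```python
from typing import Iterable


def tree_reduce_sum(values: Iterable[int]) -> int:
    """Sum values using pairwise passes, needing about log2(n) passes for n items."""
    data = list(values)
    if not data:
        return 0
    n = len(data)
    stride = 1
    while stride < n:
        # All additions in this pass write to different elements and read from
        # elements no other addition writes, so they could run at the same time.
        for i in range(0, n - stride, 2 * stride):
            data[i] += data[i + stride]
        stride *= 2
    return data[0]


if __name__ == "__main__":
    print(tree_reduce_sum([1, 2, 3, 4, 5]))
```

The total amount of work is the same as for the running total, but the longest chain of dependent steps shrinks from roughly n to roughly log2(n), which is what gives a parallel processor room to help.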

Fortunately, libraries and frameworks have been developed to simplify GPU programming and abstract away some of the complexity. Tools such as the cuDNN library (CUDA Deep Neural Network library) and the TensorFlow framework provide high-level APIs and functions that optimize the execution of common GPU operations, making it easier for developers to leverage GPU acceleration without delving into low-level programming details.

Despite these tools, GPU programming still has a learning curve, and developers need to invest time and effort to gain expertise in it. Proficiency involves understanding the principles of parallel computing, thread coordination, memory management, and optimization techniques specific to GPU architectures.

Resources such as documentation, online tutorials, and community support are available to assist developers in mastering GPU programming. The growing community of GPU programmers and researchers is continuously sharing knowledge and best practices, making it easier for new programmers to dive into the world of GPU programming.

In summary, GPU programming introduces complexity due to the specialized knowledge required, the unique architecture of GPUs, and the need to optimize code for parallel execution. However, with the availability of programming languages, libraries, and community support, developers can overcome these challenges and unlock the immense computational power and performance gains offered by GPUs.

Conclusion

GPU programming has become a game-changer in various industries, offering significant benefits and revolutionizing the way we approach complex computations and data processing. The parallel processing power of graphics processing units (GPUs) offers improved performance, accelerated processing of large datasets, and enhanced efficiency in diverse applications.

From graphics and gaming, where GPU programming enables stunning visual effects and immersive experiences, to machine learning and artificial intelligence, where GPUs accelerate model training and inference, the applications of GPU programming are vast and far-reaching.

Scientific and engineering computing has also seen remarkable advancements with GPU programming. Researchers and engineers can now tackle complex simulations and data analysis tasks more efficiently, leading to faster discoveries and improved insights. Financial modeling, risk analysis, and cryptocurrency mining are additional areas benefitting from GPU programming by harnessing its computational power and parallel processing capabilities.

Challenges exist in GPU programming, such as the high cost and power consumption of GPUs, limited memory capacity, programming complexity, and algorithmic requirements. However, ongoing developments in GPU technology, memory infrastructure, and programming tools continue to address and mitigate these challenges, making GPU programming more accessible and efficient.

To harness the full potential of GPU programming, developers need to acquire specialized knowledge, learn programming languages and optimization techniques, and stay updated with the latest advancements in GPU technology. The GPU programming community, along with available resources and libraries, plays a crucial role in supporting and facilitating the adoption of GPU programming techniques.

As we move forward, GPU programming will continue to evolve, driving innovation and enabling faster, more efficient computational solutions in a wide range of domains. With its significant impact on graphics and gaming, machine learning, scientific computing, and other areas, GPU programming paves the way for exciting advancements and applications that were once deemed computationally challenging or infeasible.