Rite Aid, a U.S. drugstore giant, has been banned from using facial recognition software for five years. The decision follows the Federal Trade Commission’s (FTC) finding that the company’s “reckless use of facial surveillance systems” left customers humiliated and put their sensitive information at risk.

Key Takeaway

Rite Aid has been banned from using facial recognition technology for five years because, according to the FTC, its reckless deployment of the technology led to false accusations against customers and their humiliation.

FTC’s Order and Implications

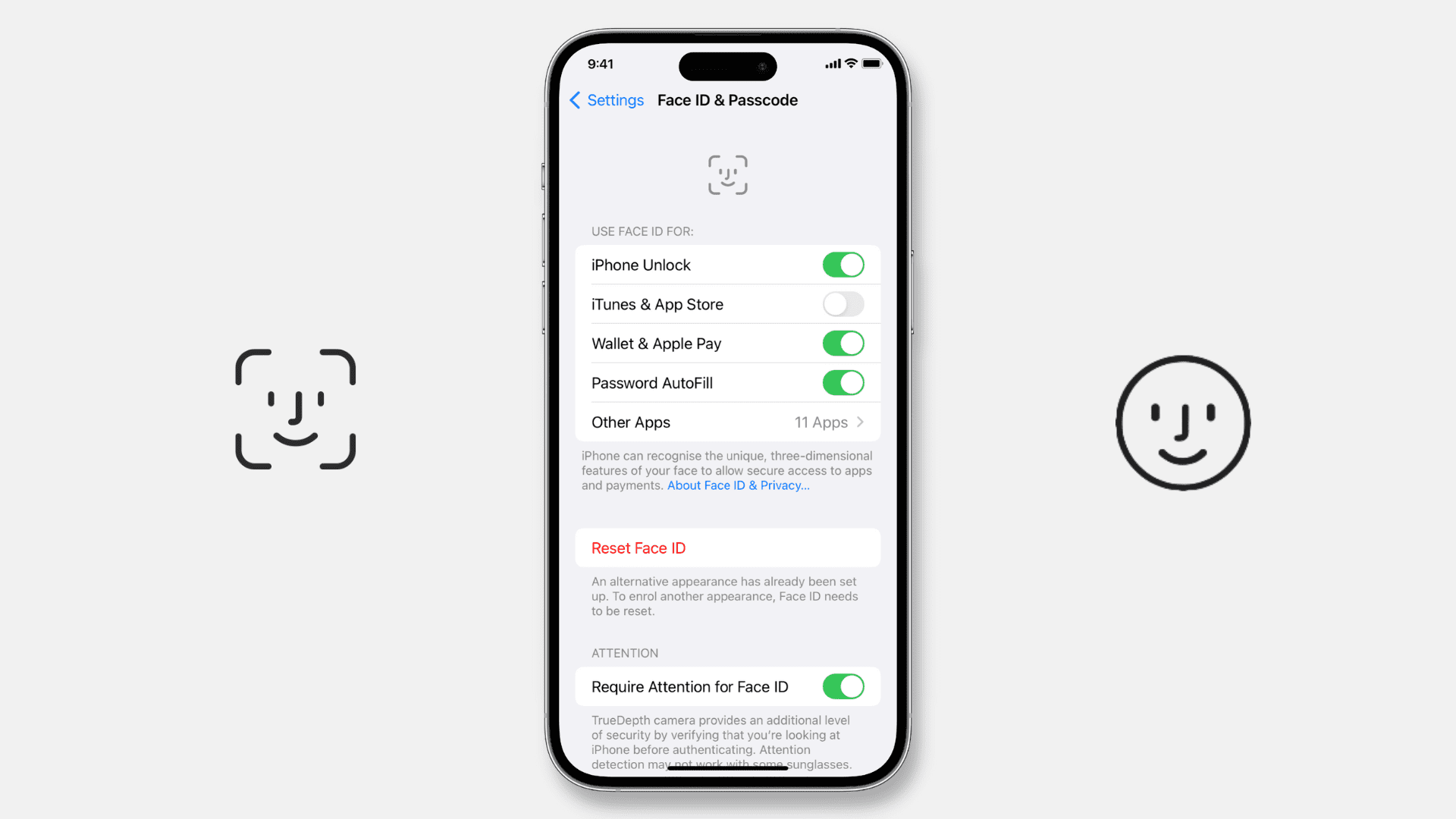

The FTC’s order, which is subject to approval by the U.S. Bankruptcy Court following Rite Aid’s Chapter 11 bankruptcy filing, requires the company to delete any images collected through the facial recognition system, along with any products derived from those images. Rite Aid must also establish a robust data security program to protect the personal data it collects.

Unveiling the Reckless Deployment

A 2020 Reuters report revealed that Rite Aid had covertly deployed facial recognition systems in approximately 200 U.S. stores over an eight-year period beginning in 2012. The rollout was concentrated in lower-income, non-white neighborhoods, which effectively served as a testing ground for the technology.

Allegations and Consequences

The FTC alleges that Rite Aid created a “watchlist database” containing images of customers the company said had engaged in criminal activity at its stores. These images, often of poor quality, were taken from CCTV footage or employees’ mobile phone cameras. When a customer whose face matched an entry in the database entered a store, employees received an automatic alert, which often led to false accusations and other unwarranted action against customers.

Controversy and Bias

Facial recognition software has sparked widespread controversy, prompting some cities to ban it and politicians to call for regulation. Rite Aid’s case also highlights bias in AI systems: the FTC noted that the technology was more likely to generate false positives in stores located in plurality-Black and Asian communities. The FTC also said the company failed to test or measure the accuracy of the facial recognition system, either before or after deployment.

Rite Aid’s Response

In response to the FTC’s findings, Rite Aid said it disagreed with the core allegations, emphasizing that the technology was a pilot program deployed in a limited number of stores and that it was discontinued more than three years ago.