Introduction

Machine learning lets computers learn from data and make decisions without being explicitly programmed for each task, and it has reshaped many industries. Deep neural networks (DNNs) are a central part of modern machine learning and have made progress on problems that were long considered too hard for computers, such as recognizing objects in images or transcribing speech. In this article, we will explore what DNNs are, how they work, and their benefits, applications, and limitations.

DNNs are artificial neural networks with multiple layers of interconnected nodes, called neurons, whose design is loosely inspired by the way neurons connect in the human brain. These networks are well suited to processing large amounts of data, identifying patterns, and making accurate predictions or classifications.

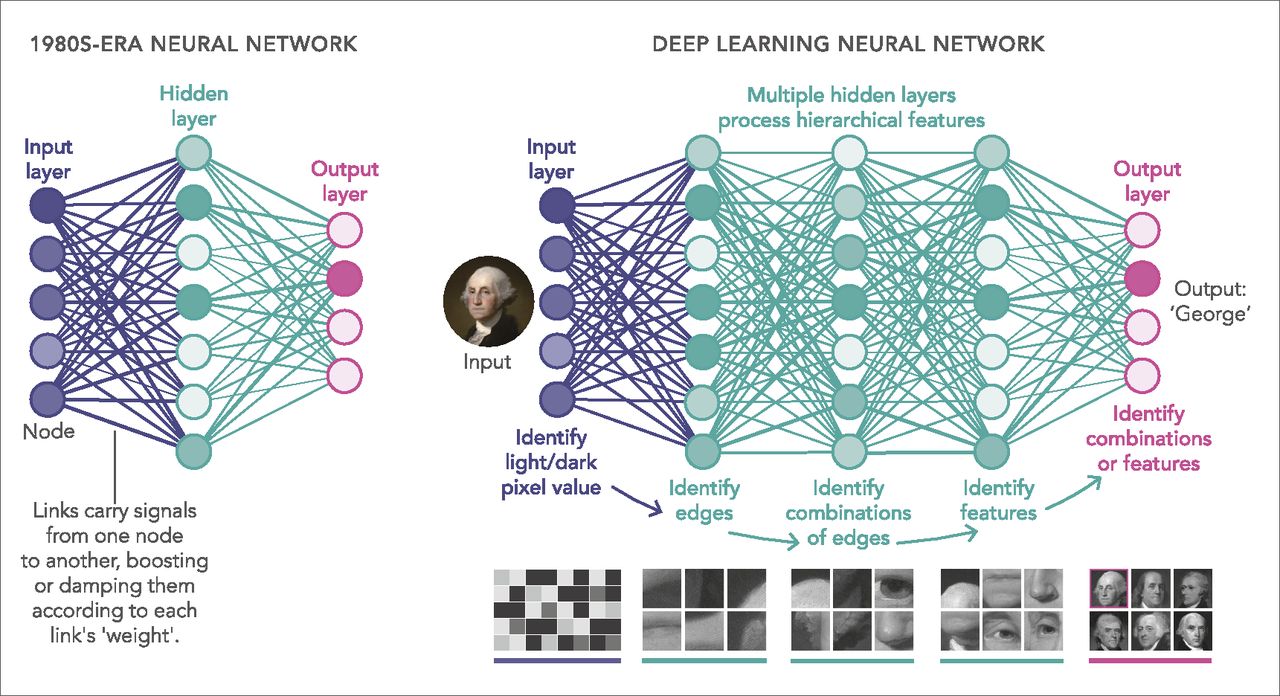

The key feature that sets DNNs apart from traditional (shallow) neural networks is their depth. While traditional neural networks typically have only one or two hidden layers, DNNs can contain dozens or even hundreds of layers. This depth allows DNNs to learn increasingly abstract representations of the data, which often leads to higher accuracy on complex tasks.

DNNs have proven to be highly effective in various domains, such as image recognition, natural language processing, speech recognition, and recommendation systems. They have played a significant role in advancing technologies like self-driving cars, virtual assistants, and personalized advertising.

Despite their numerous advantages, DNNs also bring their fair share of challenges. One of the primary challenges is the need for large amounts of labeled data to train the network effectively. Training a DNN also requires significant computational resources and time because of the number of calculations involved. Overfitting and the limited interpretability of the learned models are further challenges that researchers continue to tackle.

In the following sections, we will look more closely at how DNNs work and then examine their benefits, applications, and limitations. So, let’s unravel the complexities of DNNs and understand their impact on machine learning and artificial intelligence.

Definition of DNN in Machine Learning

Deep neural networks (DNNs) are a class of artificial neural networks designed to handle complex, high-dimensional data. They are a type of machine learning model that has significantly advanced the field of artificial intelligence (AI) in recent years.

At their core, DNNs are composed of multiple layers of interconnected nodes, known as neurons. Each neuron takes inputs, computes a weighted sum of them plus a bias term, applies a mathematical transformation (the activation function) to that sum, and produces an output. The output from one layer serves as the input to the next layer, resulting in a hierarchical representation of the data.
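
To make the description of a neuron concrete, here is a minimal sketch of a single neuron in plain Python. The input values, weights, and bias are hypothetical numbers chosen only for illustration, and the sigmoid is just one possible activation function.

```python
import math

def sigmoid(z):
    """Squash any real number into the range (0, 1)."""
    return 1.0 / (1.0 + math.exp(-z))

def neuron_output(inputs, weights, bias):
    """Weighted sum of the inputs plus a bias, passed through an activation."""
    z = sum(x * w for x, w in zip(inputs, weights)) + bias
    return sigmoid(z)

# Hypothetical values, not taken from any trained network.
inputs = [0.5, -1.0, 2.0]
weights = [0.4, 0.3, -0.2]
bias = 0.1

activation = neuron_output(inputs, weights, bias)
print(activation)
```

A layer is simply many such neurons reading the same inputs, each with its own weights and bias.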

The distinguishing characteristic of DNNs is their depth, meaning they have a large number of hidden layers. Traditional neural networks usually have only a few hidden layers, whereas DNNs can have dozens or even hundreds of them. This depth allows DNNs to learn increasingly abstract and complex features in the data, enabling them to make more accurate predictions.

DNNs learn from data through a process called training. During training, the network is presented with input data along with the desired output (also known as the target). The network adjusts its internal parameters, known as weights and biases, based on the difference between the predicted output and the target, as measured by a loss function. This iterative process continues until the network’s performance stops improving, with the goal of a model that generalizes accurately to unseen data.

One popular technique used to train DNNs is backpropagation, which is used together with a gradient-based optimization algorithm such as gradient descent. Backpropagation calculates the gradients of the loss function with respect to the network’s weights, and the optimizer then updates the weights in the direction that reduces the loss. This iterative adjustment of weights gradually improves the network’s ability to make accurate predictions.
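
The weight update itself can be illustrated with the smallest possible model. The sketch below assumes a one-weight model `prediction = w * x` with a squared-error loss; the data point, starting weight, and learning rate are hypothetical. With a single weight the chain rule is trivial, but the update rule is the same one applied to every weight in a deep network.

```python
def loss(w, x, target):
    """Squared error between the prediction w * x and the target."""
    return (w * x - target) ** 2

def loss_gradient(w, x, target):
    """Derivative of the loss with respect to w, obtained with the chain rule."""
    return 2.0 * (w * x - target) * x

w = 0.0                    # initial weight
x, target = 2.0, 4.0       # one training example; the ideal weight is 2.0
learning_rate = 0.1

history = []
for step in range(20):
    history.append(loss(w, x, target))
    # Move the weight a small step against the gradient.
    w -= learning_rate * loss_gradient(w, x, target)

print(w, history[0], history[-1])
```

Each step moves `w` closer to 2.0, and the recorded loss shrinks accordingly.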

DNNs excel at tasks such as image recognition, natural language processing, speech recognition, and generative modeling. They have outperformed traditional machine learning algorithms in various domains, producing state-of-the-art results on benchmark datasets. Their ability to automatically learn complex patterns and features from raw data makes them highly effective in handling real-world problems.

In the next sections, we will delve deeper into how DNNs work and explore their benefits and applications in machine learning. So, let’s continue our journey into the fascinating world of deep neural networks.

How DNN Works

Deep neural networks (DNNs) operate by using a series of interconnected layers of artificial neurons to process and analyze data. Each layer in the network consists of multiple neurons that receive inputs, apply a mathematical transformation to these inputs, and produce an output. The output from one layer becomes the input for the next layer, allowing the network to learn increasingly complex representations of the data.

The first layer of a DNN is called the input layer, which receives the raw data. In image recognition tasks, for example, each pixel of an image becomes an input node in the input layer. The output of this layer is then passed to one or more hidden layers, which perform computations on the input and progressively extract more abstract features. These hidden layers are responsible for capturing the patterns and relationships within the data.
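
The flow from input layer to hidden layers can be sketched as follows. This is an illustrative forward pass only: the 2x2 "image", the layer sizes, and the random untrained weights are all assumptions made for the example, and `dense` and `random_layer` are hypothetical helper names.

```python
import random

def relu(z):
    return max(0.0, z)

def identity(z):
    return z

def dense(inputs, weights, biases, activation):
    """One fully connected layer: each row of `weights` belongs to one neuron."""
    return [
        activation(sum(x * w for x, w in zip(inputs, row)) + b)
        for row, b in zip(weights, biases)
    ]

def random_layer(n_in, n_out, rng):
    """Untrained layer with small random weights and zero biases."""
    weights = [[rng.uniform(-0.5, 0.5) for _ in range(n_in)] for _ in range(n_out)]
    return weights, [0.0] * n_out

rng = random.Random(0)

# A hypothetical 2x2 grayscale image, flattened so each pixel is one input node.
image = [[0.0, 0.5], [1.0, 0.25]]
pixels = [p for row in image for p in row]

# Two hidden layers followed by an output layer with two neurons.
layers = [random_layer(4, 3, rng), random_layer(3, 3, rng), random_layer(3, 2, rng)]

activations = pixels
for weights, biases in layers[:-1]:
    activations = dense(activations, weights, biases, relu)   # hidden layers
out_weights, out_biases = layers[-1]
outputs = dense(activations, out_weights, out_biases, identity)

print(outputs)
```

Because the weights are random, the outputs carry no meaning yet; training is what turns this structure into a useful model.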

Each neuron in the hidden layers applies a mathematical transformation, known as an activation function, to the weighted sum of its inputs. This activation function introduces non-linearities into the network, enabling it to learn complex patterns that cannot be captured by linear computations alone. Common activation functions include the sigmoid function, the rectified linear unit (ReLU), and the hyperbolic tangent function.
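
The three activation functions named above are short enough to write out directly. The sketch also includes a small check, using hypothetical scalar weights, of why the non-linearity matters: without an activation, two stacked linear layers behave exactly like a single linear layer.

```python
import math

def sigmoid(z):
    """Maps any real number into (0, 1)."""
    return 1.0 / (1.0 + math.exp(-z))

def relu(z):
    """Passes positive values through and clips negative values to zero."""
    return max(0.0, z)

def tanh(z):
    """Maps any real number into (-1, 1)."""
    return math.tanh(z)

samples = (-2.0, 0.0, 2.0)
for z in samples:
    print(z, sigmoid(z), relu(z), tanh(z))

# Two linear "layers" with no activation collapse into one linear layer.
w1, w2 = 3.0, -0.5
stacked = [w2 * (w1 * z) for z in samples]
single = [(w2 * w1) * z for z in samples]
print(stacked == single)
```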

The final layer of a DNN is the output layer, which produces the network’s prediction or classification. The number of neurons in the output layer depends on the specific task at hand. For instance, in a binary classification problem, the output layer may contain two neurons representing the two possible classes, or a single neuron whose output is interpreted as the probability of one class. In a regression problem, the output layer may consist of a single neuron that predicts a continuous value.
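
As a rough illustration of these output-layer choices, the sketch below turns hypothetical raw scores into predictions. It assumes a softmax over two output neurons for classification and also shows that a single sigmoid neuron applied to the score difference gives the same probability, which is a common alternative formulation. The regression line uses made-up numbers for the weight, input, and bias.

```python
import math

def softmax(scores):
    """Turn raw output-layer scores into probabilities that sum to 1."""
    shift = max(scores)  # subtracting the max avoids overflow in exp
    exps = [math.exp(s - shift) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

# Binary classification with two output neurons (one raw score per class).
two_neuron_probs = softmax([1.2, -0.3])

# The same decision expressed with a single output neuron.
one_neuron_prob = sigmoid(1.2 - (-0.3))

# Regression: one linear output neuron, with no squashing applied.
regression_output = 0.7 * 3.0 + 0.1

print(two_neuron_probs, one_neuron_prob, regression_output)
```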

Training a DNN involves iteratively adjusting the weights of the neurons to minimize the difference between the network’s predicted outputs and the true outputs, as measured by a loss function. This is typically done with backpropagation: the error signal propagates from the output layer back through the network to determine how much each weight contributed to the error, and the weights are then updated accordingly. This iterative process continues until the network’s performance reaches a satisfactory level.
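
The training loop described above can be sketched end to end for a very small network. Everything specific here is an assumption made for illustration: one tanh hidden layer with three neurons, a sigmoid output neuron, a squared-error loss, full-batch gradient descent, and the XOR truth table as the dataset. Whether the network fully learns XOR depends on the seed and hyperparameters; the point is how the error signal flows backward.

```python
import math
import random

def forward(x, W1, b1, w2, b2):
    """Forward pass: tanh hidden layer followed by one sigmoid output neuron."""
    h = [math.tanh(sum(w * xi for w, xi in zip(row, x)) + b) for row, b in zip(W1, b1)]
    z = sum(w * hj for w, hj in zip(w2, h)) + b2
    return h, 1.0 / (1.0 + math.exp(-z))

def mean_loss(data, params):
    """Mean of 0.5 * (prediction - target)^2 over the dataset."""
    return sum(0.5 * (forward(x, *params)[1] - t) ** 2 for x, t in data) / len(data)

def train(data, hidden=3, epochs=2000, lr=0.5, seed=0):
    rng = random.Random(seed)
    n_in, n = len(data[0][0]), len(data)
    W1 = [[rng.uniform(-1, 1) for _ in range(n_in)] for _ in range(hidden)]
    b1 = [0.0] * hidden
    w2 = [rng.uniform(-1, 1) for _ in range(hidden)]
    b2 = 0.0
    losses = []
    for _ in range(epochs):
        losses.append(mean_loss(data, (W1, b1, w2, b2)))
        gW1 = [[0.0] * n_in for _ in range(hidden)]
        gb1, gw2, gb2 = [0.0] * hidden, [0.0] * hidden, 0.0
        for x, t in data:
            h, y = forward(x, W1, b1, w2, b2)
            # Error signal at the output neuron (loss derivative times sigmoid derivative).
            delta_out = (y - t) * y * (1.0 - y)
            gb2 += delta_out
            for j in range(hidden):
                gw2[j] += delta_out * h[j]
                # Propagate the error back through w2 and the tanh derivative.
                delta_hidden = delta_out * w2[j] * (1.0 - h[j] ** 2)
                gb1[j] += delta_hidden
                for i in range(n_in):
                    gW1[j][i] += delta_hidden * x[i]
        # Gradient descent step using the gradients averaged over the dataset.
        b2 -= lr * gb2 / n
        for j in range(hidden):
            w2[j] -= lr * gw2[j] / n
            b1[j] -= lr * gb1[j] / n
            for i in range(n_in):
                W1[j][i] -= lr * gW1[j][i] / n
    losses.append(mean_loss(data, (W1, b1, w2, b2)))
    return (W1, b1, w2, b2), losses

xor_data = [([0.0, 0.0], 0.0), ([0.0, 1.0], 1.0), ([1.0, 0.0], 1.0), ([1.0, 1.0], 0.0)]
params, losses = train(xor_data)
print(f"loss before: {losses[0]:.4f}, after: {losses[-1]:.4f}")
```

In practice this bookkeeping is handled by a deep learning framework, but the two phases are the same: a forward pass to compute predictions, then a backward pass to compute gradients and update the weights.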

One of the reasons DNNs are so successful is their ability to automatically learn feature representations from the data. Through the iterative training process, the network discovers and leverages the underlying structure and patterns in the data, gradually improving its ability to make accurate predictions or classifications. This makes DNNs powerful tools for handling complex and high-dimensional data.

In the following sections, we will explore the benefits and applications of DNNs in machine learning. So, let’s continue our journey to discover the impact of deep neural networks on various domains.

Benefits of DNN in Machine Learning

Deep neural networks (DNNs) have revolutionized the field of machine learning and have several key advantages that make them highly valuable in solving complex problems. Here are some of the benefits of using DNNs in machine learning:

- Ability to learn complex representations: DNNs excel at learning intricate patterns and representations from raw data. With their deep architecture and multiple layers, they can capture hierarchical representations, allowing them to understand complex relationships and make accurate predictions.

- High accuracy: DNNs have achieved state-of-the-art results in many domains, often surpassing traditional machine learning algorithms when enough training data is available. Their ability to automatically learn and extract relevant features from data leads to higher accuracy and improved performance on challenging tasks.

- Handling high-dimensional data: DNNs are well-suited for handling high-dimensional data, such as images, audio, and text. They can effectively process and analyze these types of data, extracting meaningful representations that can be used for tasks like classification, object detection, and language translation.

- Transfer learning: DNNs trained on large datasets for certain tasks can be used as a starting point for solving related tasks. Through transfer learning, the knowledge and representations learned by the network can be transferred to new tasks, reducing the amount of training data and computation required.

- End-to-end learning: DNNs allow for end-to-end learning, meaning they can learn directly from the raw input to the desired output. This eliminates the need for manual feature engineering, as the network automatically learns relevant features from the data, making the modeling process more efficient.

- Ability to handle unstructured data: DNNs are capable of processing unstructured data, such as images, audio, and text, without the need for explicit feature engineering. This makes them suitable for tasks like image recognition, speech recognition, and natural language processing.
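
Of the benefits listed above, transfer learning is the easiest to show in a few lines. The sketch below is a toy version under loud assumptions: the "pretrained" hidden-layer weights are made-up constants standing in for a network trained on a large dataset, the new task's four data points are hypothetical, and only a small linear output layer (the "head") is trained while the pretrained layer stays frozen.

```python
import math

# Hypothetical "pretrained" hidden layer; in practice these weights would come
# from a network trained on a large dataset for a related task.
PRETRAINED_W = [[0.8, -0.6], [-0.4, 0.9], [0.5, 0.5]]
PRETRAINED_B = [0.0, 0.1, -0.2]

def extract_features(x):
    """Frozen feature extractor: its weights are never updated below."""
    return [math.tanh(sum(w * xi for w, xi in zip(row, x)) + b)
            for row, b in zip(PRETRAINED_W, PRETRAINED_B)]

def predict(head_w, head_b, x):
    feats = extract_features(x)
    return sum(w * f for w, f in zip(head_w, feats)) + head_b

def head_loss(head_w, head_b, data):
    return sum((predict(head_w, head_b, x) - t) ** 2 for x, t in data) / len(data)

# Small hypothetical dataset for the new task.
new_task = [([0.0, 1.0], 1.0), ([1.0, 0.0], -1.0), ([1.0, 1.0], 0.0), ([0.5, -0.5], -1.0)]

head_w, head_b = [0.0, 0.0, 0.0], 0.0
learning_rate = 0.1
loss_before = head_loss(head_w, head_b, new_task)

for _ in range(200):
    grad_w, grad_b = [0.0, 0.0, 0.0], 0.0
    for x, t in new_task:
        feats = extract_features(x)
        err = predict(head_w, head_b, x) - t
        for j, f in enumerate(feats):
            grad_w[j] += 2.0 * err * f / len(new_task)
        grad_b += 2.0 * err / len(new_task)
    # Only the head is updated; the pretrained layer is left untouched.
    head_w = [w - learning_rate * g for w, g in zip(head_w, grad_w)]
    head_b -= learning_rate * grad_b

loss_after = head_loss(head_w, head_b, new_task)
print(loss_before, loss_after)
```

Because only four head parameters are trained, very little data and computation are needed, which is the practical appeal of reusing learned representations.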

These benefits make DNNs a powerful tool in machine learning, enabling advancements in various fields such as computer vision, speech recognition, natural language processing, and many more. By leveraging the capabilities of DNNs, researchers and practitioners can tackle complex problems and achieve remarkable results.

In the next section, we will explore the wide range of applications where DNNs have made significant contributions. So, let’s dive into the fascinating world of DNN applications in machine learning.

Applications of DNN in Machine Learning

Deep neural networks (DNNs) have found extensive applications across various domains, leveraging their ability to process complex and high-dimensional data. Let’s explore some of the key applications where DNNs have made significant contributions in machine learning:

- Image recognition: DNNs have revolutionized image recognition by achieving remarkable accuracy in tasks such as object detection, image classification, and image segmentation. They can learn to recognize objects, animals, and people in images with high precision, enabling applications like self-driving cars, medical imaging, and facial recognition systems.

- Natural language processing: DNNs have made significant advancements in natural language processing tasks, including language translation, sentiment analysis, named entity recognition, and question answering. DNNs can learn the underlying structure of language and generate human-like responses, enabling the development of intelligent chatbots, virtual assistants, and language translation systems.

- Speech recognition: DNNs have played a pivotal role in improving speech recognition systems. By training on extensive speech datasets, DNNs can recognize and transcribe spoken words with high accuracy, contributing to advancements in voice assistants, transcription services, and speech-to-text applications.

- Recommendation systems: DNNs have been instrumental in improving recommendation systems used by e-commerce platforms, streaming services, and social media platforms. By analyzing user behavior and preferences, DNN-based recommendation systems can provide personalized recommendations, enhancing user experience and engagement.

- Generative modeling: DNNs have been extensively used in generative modeling tasks such as image synthesis and text generation. These models can generate highly realistic images, simulate new data samples, and even create human-like text, contributing to advancements in computer graphics, virtual reality, and creative content generation.

- Medical diagnosis: DNNs have shown remarkable potential in medical diagnosis by analyzing medical images, such as X-rays, MRIs, and CT scans. They can assist healthcare professionals in detecting diseases, tumors, and abnormalities, enabling early diagnosis and more accurate treatment planning.

These applications are just the tip of the iceberg when it comes to the potential of DNNs in machine learning. DNNs continue to be at the forefront of research and innovation, enabling advancements in a wide range of fields, from autonomous vehicles to drug discovery.

In the following section, we will discuss the challenges and limitations associated with DNNs, shedding light on the areas where further research and development are needed. So, let’s explore the other side of the coin and understand the challenges faced by DNNs in machine learning.

Challenges and Limitations of DNN

While deep neural networks (DNNs) have shown remarkable success in various applications, they also come with certain challenges and limitations that need to be addressed. Here are some of the key challenges associated with DNNs in machine learning:

- Requirement of large labeled datasets: Training DNNs typically requires large amounts of labeled data to achieve optimal performance. Collecting and annotating such datasets can be time-consuming and costly, especially in domains with limited labeled data or where labeling is subjective, such as medical diagnosis or sentiment analysis.

- Computational complexity and resource requirements: DNN training and inference can be computationally intensive, often requiring powerful hardware resources like GPUs or TPUs. Training large DNN models with many layers and parameters can be time-consuming, making it challenging for researchers with limited resources.

- Overfitting and generalization: DNNs are prone to overfitting, where the model performs well on the training data but fails to generalize to new, unseen data. Mitigating overfitting requires techniques such as regularization, dropout, and early stopping to ensure the model’s ability to make accurate predictions on unseen data.

- Model interpretability: DNNs are often referred to as “black box” models because they lack interpretability. Understanding how the network arrived at a particular decision can be challenging, especially in critical domains like healthcare. Interpretable DNN models are an active area of research, aiming to provide insights into the inner workings of these complex architectures.

- Data bias and fairness: DNNs can be influenced by biases present in the training data, leading to biased predictions or reinforcing societal biases. Ensuring fairness and mitigating bias in DNN models is an ongoing research challenge, requiring efforts to improve data collection strategies, address ethical considerations, and develop fairness-aware algorithms.

- Adversarial attacks: DNNs are vulnerable to adversarial attacks, in which an adversary intentionally manipulates the input, often with small and carefully crafted changes, to deceive the model. These attacks can cause misclassification or compromise the security of systems that rely on the model. Developing robust models that resist adversarial attacks is an active research field.
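
One of the overfitting mitigations mentioned in the list above, early stopping, can be sketched in a few lines. This is a minimal version assuming a simple "patience" rule: stop once the validation loss has failed to improve for a set number of consecutive epochs. The function name `early_stopping_epoch` and the loss values are hypothetical.

```python
def early_stopping_epoch(val_losses, patience=2):
    """Return (best_epoch, stop_epoch) for a sequence of per-epoch validation losses."""
    best_loss = float("inf")
    best_epoch = -1
    epochs_without_improvement = 0
    for epoch, loss in enumerate(val_losses):
        if loss < best_loss:
            best_loss, best_epoch = loss, epoch
            epochs_without_improvement = 0
        else:
            epochs_without_improvement += 1
            if epochs_without_improvement >= patience:
                return best_epoch, epoch
    return best_epoch, len(val_losses) - 1

# Hypothetical validation losses: they improve, then rise as the model overfits.
val_losses = [0.90, 0.62, 0.48, 0.45, 0.47, 0.51, 0.58]
best_epoch, stop_epoch = early_stopping_epoch(val_losses, patience=2)
print(best_epoch, stop_epoch)
```

Training would halt at `stop_epoch`, and the weights saved at `best_epoch` would be the ones kept.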

These challenges highlight the evolving nature of DNNs, calling for continued research and development to overcome them. Researchers and practitioners are actively working to address these limitations and develop techniques to enhance the robustness, interpretability, and fairness of DNNs in machine learning.

In the next section, we will conclude our exploration of deep neural networks, summarizing the key insights we have gained throughout this article. So, let’s wrap up our journey into the fascinating world of DNNs in machine learning.

Conclusion

Deep neural networks (DNNs) have undoubtedly transformed the field of machine learning, enabling computers to learn and make complex decisions without explicit programming. With their ability to process high-dimensional data, learn complex representations, and achieve state-of-the-art performance across various domains, DNNs have become a powerful tool in the realm of artificial intelligence (AI).

Throughout this article, we have explored the definition of DNNs and how they work, delving into their multiple layers, activation functions, and the training process. We have discussed the benefits of DNNs, including their ability to handle complex data, high accuracy, and their potential for end-to-end learning. Additionally, we have investigated their applications in image recognition, natural language processing, speech recognition, recommendation systems, generative modeling, and medical diagnosis.

Despite their numerous advantages, DNNs also face challenges and limitations. These include the requirement for large labeled datasets, computational complexity, overfitting, model interpretability, data bias, and vulnerabilities to adversarial attacks. However, ongoing research and development are dedicated to addressing these challenges and advancing the capabilities of DNNs in machine learning.

DNNs continue to push the boundaries of AI and have the potential to revolutionize various industries, from healthcare to autonomous systems. Their remarkable ability to learn from data and extract meaningful representations makes them indispensable in solving complex problems and making accurate predictions.

As the field of machine learning and AI continues to evolve, it is essential to stay up to date with the latest developments in DNNs. Advancements in techniques for training, optimization, interpretability, and fairness will further enhance the capabilities and impact of DNNs in the future.

In conclusion, DNNs have brought tremendous advancements to machine learning, enabling computers to tackle tasks that once seemed to require human perception and judgment. By harnessing the power of DNNs, researchers and practitioners can unlock new possibilities and drive innovation across a wide range of applications. The ongoing exploration and refinement of DNNs will continue to shape the future of AI and the capabilities of the systems built on it.