Introduction

When it comes to data science, having sufficient RAM (Random Access Memory) is crucial for efficient and effective analysis. As a data scientist, you know that handling large datasets and running complex algorithms require significant computational power, and RAM plays a crucial role in providing the necessary resources.

RAM is a type of computer memory that temporarily stores data that is actively being used. Unlike your computer’s hard drive, which stores data long-term, RAM allows for faster access to frequently used information, allowing your system to quickly read and write data.

In the realm of data science, RAM is essential for several reasons. Firstly, it allows you to store and manipulate large datasets, which is often required for tasks such as exploratory data analysis, feature engineering, and model training. Secondly, RAM enables you to run resource-intensive algorithms and complex calculations without experiencing system slowdowns or crashes. Finally, having sufficient RAM can improve the overall performance of your data science workflows and accelerate the time it takes to complete tasks.

However, determining how much RAM you need for data science can be a challenging task. Several factors come into play, including the size of your datasets, the complexity of your algorithms, and the specific tools and libraries you’re using. In this article, we’ll explore these factors in more detail, provide guidelines for determining your RAM requirements, and offer tips for optimizing RAM usage to ensure smooth and efficient data science operations.

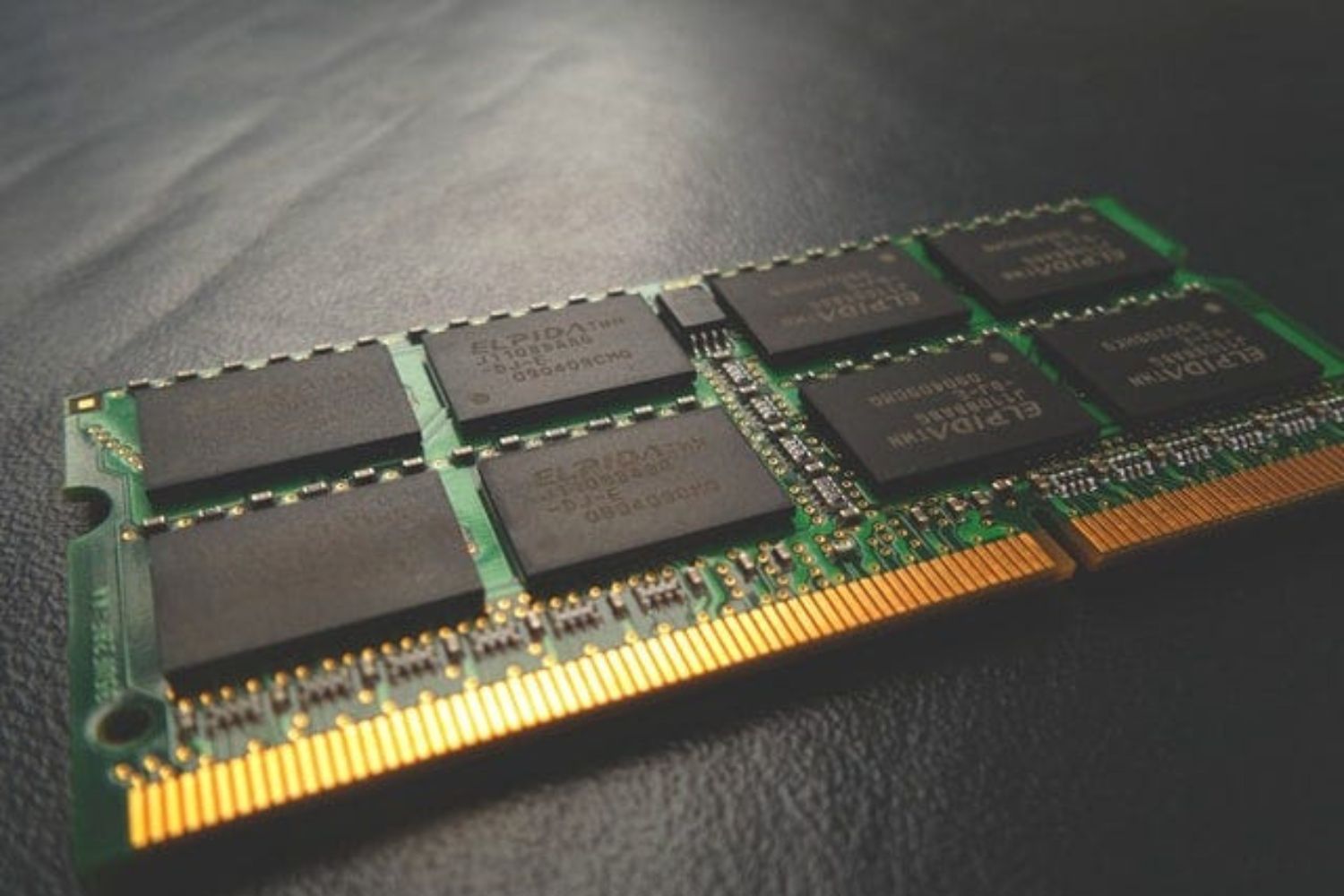

What is RAM?

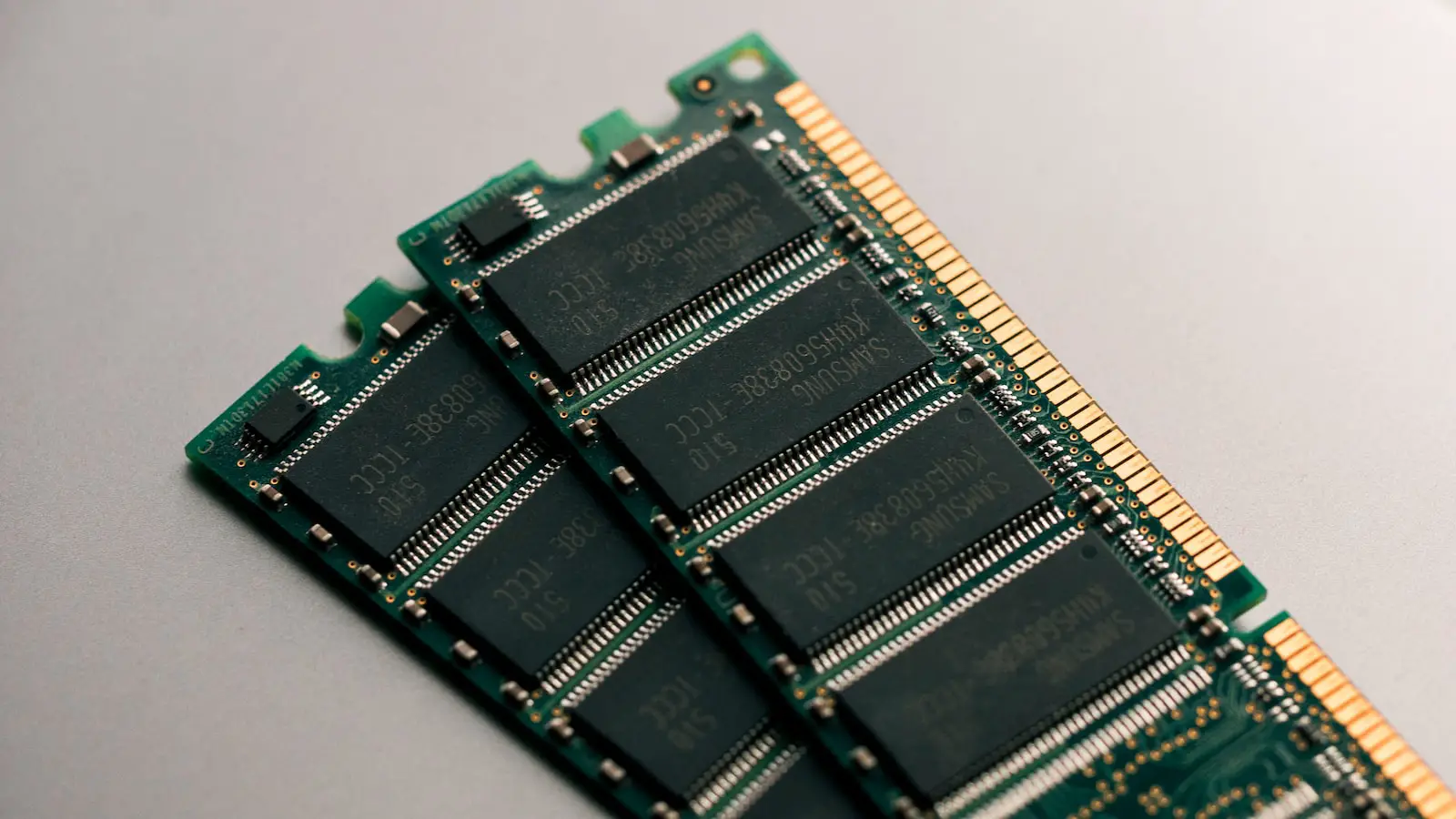

RAM, or Random Access Memory, is a fundamental component of a computer’s memory system. It is a form of volatile memory that stores data that is actively being used by the computer’s operating system, software applications, and processes. Unlike a computer’s hard disk drive (HDD) or solid-state drive (SSD), RAM allows for fast and temporary data storage, providing quick access to information that the computer needs to execute tasks efficiently.

RAM is made up of small electronic circuits that can store and retrieve data rapidly. It is physically installed on the computer’s motherboard and works closely with the processor (CPU) to facilitate the execution of instructions and the manipulation of data. When you open an application or perform a task on your computer, the necessary data is loaded into RAM from the storage device, allowing for faster access and retrieval.

One of the key characteristics of RAM is its volatile nature. This means that the data stored in RAM is lost when the computer shuts down or loses power. Unlike non-volatile storage devices like HDDs and SSDs, RAM requires constant power to retain the information stored within it. Therefore, it is essential to save important data to a more permanent storage medium, such as a hard drive or the cloud, to avoid data loss.

The size of RAM in a computer is typically represented in gigabytes (GB) and determines the system’s capacity to handle data and perform tasks efficiently. More RAM allows for the storage of larger datasets and the seamless execution of memory-intensive operations. However, it is important to note that increasing RAM alone does not necessarily make a computer faster. The overall performance is influenced by various factors, including the CPU, storage devices, and the software being utilized.

In summary, RAM is a vital component of a computer’s memory system that provides quick access to actively used data. It allows for efficient execution of tasks by storing and retrieving information rapidly. Understanding how RAM works and its importance in data science will help you make informed decisions about the amount of RAM you need for your specific workloads.

Why is RAM important for data science?

In the field of data science, RAM plays a critical role in performing complex analyses and handling large datasets. Here are some key reasons why RAM is essential for data science:

1. Handling large datasets: Data scientists often work with massive amounts of data, sometimes reaching several gigabytes or even terabytes in size. RAM allows for quick and efficient access to this data, enabling smooth and seamless manipulation and analysis. Without sufficient RAM, working with large datasets can become sluggish, impacting the overall productivity and efficiency of data science tasks.

2. Running resource-intensive algorithms: Data science involves applying various algorithms and models to extract actionable insights from data. Many of these algorithms, such as machine learning algorithms or complex statistical computations, can be computationally demanding. RAM provides the necessary space to store intermediate results and perform calculations, ensuring that algorithms can run smoothly and without performance degradation.

3. Avoiding disk I/O bottlenecks: When data doesn’t fit entirely into RAM, the system relies on virtual memory, which involves swapping data between RAM and the hard disk. This process, known as disk input/output (I/O), is much slower compared to accessing data directly from RAM. Insufficient RAM can lead to frequent disk I/O, causing significant performance bottlenecks and slowing down data science tasks.

4. Enabling exploratory data analysis: Exploratory data analysis (EDA) is a crucial step in the data science workflow. RAM allows you to load and explore the data quickly, visualize patterns, and perform initial data cleaning. With sufficient RAM, you can efficiently iterate through different analysis approaches, ensuring a more productive and insightful exploration process.

5. Accelerating model training: Machine learning models often require extensive training, which involves repeatedly processing large amounts of data and adjusting model parameters. With more RAM, you can load larger datasets into memory, speeding up the training process and reducing the time it takes to develop and fine-tune machine learning models.

In data science, RAM is a crucial resource that directly affects the performance, speed, and efficacy of data analysis tasks. Having sufficient RAM ensures that you can handle large datasets, run resource-intensive algorithms, avoid disk I/O bottlenecks, and accelerate the overall data science workflow, ultimately leading to more accurate and efficient data-driven insights.

Factors to consider when determining how much RAM you need

Determining the right amount of RAM for your data science needs requires careful consideration of several factors. Here are some key factors to consider when determining how much RAM you need:

1. Dataset size: The size of the datasets you are working with is one of the primary factors to consider. Larger datasets require more RAM for efficient processing and analysis. Assess the typical size of your datasets and ensure that you have enough RAM to load and manipulate them comfortably.

2. Complexity of algorithms: The complexity of the algorithms you use in your data science workflows can significantly affect RAM requirements. More complex algorithms or those that involve large calculations may require additional memory to store intermediate results and perform computations.

3. Software requirements: Different data science tools and software may have varying RAM requirements. Some tools, libraries, or frameworks may be more memory-intensive than others. Research the RAM requirements of the specific software you use and ensure that your system meets those requirements.

4. Concurrent processes: Consider the number of concurrent processes or tasks you typically run simultaneously. Each process will allocate a certain amount of RAM, so if you frequently work with multiple processes concurrently, you will need additional RAM to accommodate them all without performance degradation.

5. Experimentation and prototyping: If you engage in frequent experimentation and prototyping, you may need extra RAM. Iterating through different methodologies and approaches requires loading and manipulating multiple datasets and models concurrently, which can consume significant memory resources.

6. Future scalability: Consider your future needs for scalability. If you anticipate increasing data sizes or working on more complex projects in the near future, it’s wise to plan for additional RAM to accommodate these growing requirements.

7. Operating system and other applications: Remember that your operating system and any other applications running simultaneously also require a portion of your system’s RAM. Ensure that you account for these needs when determining the RAM requirements solely for your data science tasks.

It’s important to strike a balance between having enough RAM for efficient processing and avoiding over-provisioning. Allocating too much RAM can be wasteful and may not result in significant performance gains. Conversely, insufficient RAM can lead to slow execution, frequent paging to disk, and performance bottlenecks.

By carefully considering these factors and evaluating your specific data science requirements, you can determine the optimal amount of RAM needed for your workloads, ensuring smooth and efficient data analysis and modeling processes.

Typical RAM requirements for common data science tasks

The RAM requirements for data science tasks can vary depending on the specific task and the size and complexity of the data involved. Here are some typical RAM requirements for common data science tasks:

Data preprocessing: Data preprocessing tasks, such as cleaning, transforming, and normalizing data, usually require a moderate amount of RAM. The amount of RAM needed will depend on the size of the dataset and the complexity of the preprocessing steps.

Feature engineering: Feature engineering involves creating new features from existing data to improve the performance of machine learning models. RAM requirements for feature engineering depend on the size of the dataset and the complexity of feature engineering techniques used. More complex feature engineering techniques, such as creating interaction terms or encoding categorical variables, may require additional RAM.

Exploratory data analysis (EDA): EDA involves visualizing and summarizing data to gain insights and understand the underlying patterns. RAM requirements for EDA depend on the size of the dataset and the complexity of the visualizations and statistical computations involved. Larger datasets or visualizations with high-resolution graphics may require more RAM.

Model training: The RAM requirements for model training can vary significantly based on the type of algorithm, the size of the dataset, and the complexity of the model. Machine learning models that require intensive calculations, such as deep learning models, often require more RAM. Larger datasets used for training can also contribute to higher RAM requirements.

Model evaluation and validation: Evaluating and validating machine learning models typically requires loading the trained model, preprocessing new data, and performing predictions. The RAM required for model evaluation and validation is generally moderate, depending on the size of the model and the new data used for validation.

Large-scale data analysis: When working with big data or large-scale data analysis, such as analyzing social media streams or processing massive log files, the RAM requirements can be significant. Handling large datasets with distributed computing frameworks like Apache Spark may require additional RAM to store intermediate results and handle the parallel execution of tasks.

Optimization and hyperparameter tuning: Optimization techniques, such as gradient descent or genetic algorithms, require sufficient RAM to store intermediate results and update model parameters. Hyperparameter tuning, which involves searching for the best set of hyperparameters for a model, may also require additional RAM to facilitate the exploration of different hyperparameter combinations.

It’s important to note that these RAM requirements are general guidelines, and the actual RAM needed may vary based on specific factors, such as the hardware configuration, software optimization, and the efficiency of algorithms and code implementation.

To determine the appropriate RAM allocation for your data science tasks, consider the size of your datasets, the complexity of your algorithms, and the specific requirements of the tools and libraries you are using. This will help ensure that you have sufficient RAM to perform your data science tasks efficiently and effectively.

How to monitor and optimize RAM usage

Monitoring and optimizing RAM usage is essential for ensuring efficient data science workflows and avoiding performance bottlenecks. Here are some strategies to effectively monitor and optimize RAM usage:

1. Monitor RAM usage: Use system monitoring tools to keep track of your RAM usage. Operating systems provide built-in task managers or resource monitors that display real-time RAM usage. Third-party tools like htop or Process Explorer offer more detailed insights into memory consumption. Regularly monitor RAM usage to identify any patterns or spikes and gauge whether you have sufficient RAM for your specific tasks.

2. Identify memory-intensive processes: Identify processes or applications that consume excessive amounts of RAM. Data science tools like Python libraries or statistical software may consume significant memory resources. Optimize code, algorithms, or workflow steps that consume excessive RAM by implementing more efficient data processing techniques or employing more memory-friendly algorithms.

3. Optimize data loading and processing: Efficiently load and process data to minimize RAM usage. Instead of loading entire datasets into memory, consider using techniques like data streaming or chunk processing, where data is loaded and processed in smaller manageable chunks. This reduces the overall memory footprint and allows for more efficient use of available RAM.

4. Clear unnecessary data structures: Release memory occupied by unnecessary data structures or variables. This can be achieved by explicitly deleting objects, clearing caches, or using garbage collection mechanisms provided by programming languages. Removing unnecessary data from memory helps free up RAM for subsequent tasks.

5. Optimize memory caching: Utilize memory caching techniques to reduce disk I/O and improve overall performance. Caching frequently used data in RAM can significantly mitigate the need for disk access, enhancing the speed of subsequent data retrieval and manipulation tasks.

6. Compact and reduce data size: Compress or reduce the size of data, especially if it contains redundant or unnecessary information. Formats like compressed sparse matrix representations or data compression algorithms can help reduce the memory footprint while minimizing the impact on data integrity.

7. Use efficient data structures: Choose appropriate data structures that optimize memory usage. For example, using sparse data structures instead of dense ones for sparse datasets can significantly reduce RAM requirements. Libraries like SciPy and NumPy provide efficient data structures for handling large datasets effectively.

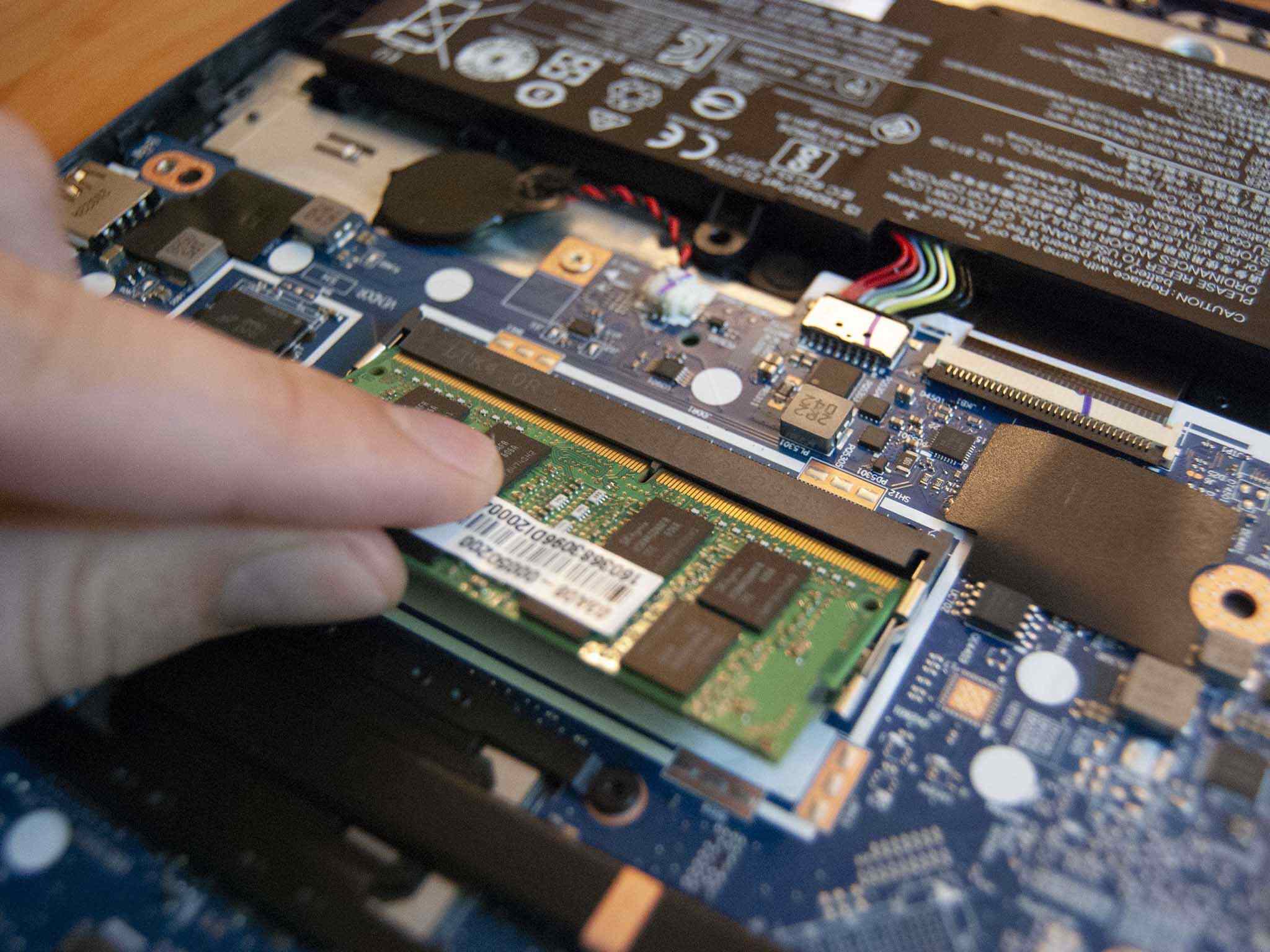

8. Upgrade hardware: If you find that your current RAM capacity is consistently insufficient for your data science tasks, consider upgrading your hardware. Increasing the physical RAM in your machine provides more memory resources for handling larger datasets and running memory-intensive algorithms.

By actively monitoring RAM usage and implementing optimization strategies, you can ensure efficient memory management and improve the overall performance of your data science workflows. Each task or project may have unique optimization opportunities, so regularly assess your RAM usage and adapt your optimization techniques accordingly to maximize the efficiency of your data science work.

Tips for maximizing RAM efficiency in data science work

To make the most of your available RAM and ensure efficient data science work, consider the following tips for maximizing RAM efficiency:

1. Avoid unnecessary duplication: Minimize unnecessary duplication of data in memory. Instead of creating multiple copies of the same dataset or object, modify and operate on a single instance, reducing the memory footprint. Be mindful of how functions and algorithms handle data and avoid unintentional duplications.

2. Batch processing: Break down large tasks into smaller batches and process them iteratively. This approach allows you to work with manageable subsets of data at a time, reducing the overall RAM usage. Batch processing is particularly useful when dealing with large datasets or complex computations.

3. Use memory-mapped files: When working with large datasets that don’t fit entirely in RAM, consider using memory-mapped files. Memory mapping allows you to map a file directly to memory, accessing parts of it as needed, without loading the entire file into RAM. This technique can significantly reduce RAM usage and improve overall performance.

4. Optimize code and algorithms: Review your code and algorithms for potential optimization opportunities. Look for memory-efficient data structures and algorithms that can achieve the same results with less memory consumption. Avoid unnecessary calculations or redundant computations that impact memory usage without adding value to your analysis.

5. Reduce unnecessary dependencies: Minimize the number of libraries and dependencies you use in your data science work. Each library and package consumes memory resources, so assess if you truly need all of them and remove any unused or redundant dependencies. This can help reduce RAM usage and streamline your workflow.

6. Downsample or sample data: If your analysis allows, downsampling or sampling data can help reduce the memory requirements. Instead of working with the entire dataset, consider working with a representative sample. This can still provide valuable insights while requiring less RAM to operate.

7. Optimize memory management: Efficiently manage memory by releasing resources when they are no longer needed. Close files, release handles, and explicitly deallocate memory when appropriate. Proper management of memory resources ensures that RAM is available for other processes and reduces the likelihood of crashes or slowdowns.

8. Utilize parallel processing: Leverage parallel processing techniques to distribute computations across multiple cores or machines. Parallelization helps distribute the memory load and enables efficient use of available resources. Tools like multiprocessing or distributed computing frameworks like Apache Spark can be beneficial for memory-intensive tasks.

9. Regularly clean up resources: Clean up any temporary or intermediary files, caches, and objects created during the data science process. This prevents unnecessary memory occupation and improves overall RAM efficiency. Regularly assess and remove any files or objects that are no longer needed.

By implementing these tips, you can maximize the efficiency of your RAM usage in data science work. Each tip contributes to reducing memory overhead and ensuring that your memory resources are effectively utilized, leading to improved performance and productivity in your data analysis tasks.

How to choose the right RAM for your data science needs

Choosing the right RAM for your data science needs is crucial to ensure optimal performance and efficiency in your work. Here are some factors to consider when selecting the right RAM for your data science tasks:

1. RAM capacity: Determine the amount of RAM you require based on the size of your datasets and the complexity of your algorithms. Larger datasets and memory-intensive tasks generally require more RAM. Assess the memory requirements of your specific data science workflows and choose a RAM capacity that can comfortably handle your workload.

2. RAM type and speed: Consider the type and speed of RAM that is compatible with your computer system. Check the motherboard specifications to determine the supported RAM types (e.g., DDR4, DDR3) and the maximum speed supported. Opt for a RAM type and speed that matches your system’s capabilities for optimal performance.

3. Error correction (ECC) RAM: Depending on the nature of your data science work, consider if you need Error Correction Code (ECC) RAM. ECC RAM has error correction capabilities that detect and correct data errors, enhancing data integrity. It is particularly beneficial for critical and sensitive applications but may come at a higher cost.

4. Dual-channel or quad-channel configuration: Configure your RAM modules in a dual-channel or quad-channel configuration, if supported by your motherboard. This setup enables simultaneous data transfer between the RAM modules, enhancing memory bandwidth and overall performance. Consult your motherboard documentation for guidance on the optimal RAM configuration.

5. Compatibility with your system: Check the compatibility of the RAM with your computer system, including the motherboard, CPU, and operating system. Some systems may have specific requirements or limitations on the type and capacity of RAM they can support. Consult the manufacturer’s specifications or online resources to ensure compatibility.

6. Future upgradability: Consider your future needs for upgradability. If you anticipate handling larger datasets or more complex analyses in the future, it is wise to choose a RAM configuration that allows for easy expansion or replacement. Ensure that your motherboard has available RAM slots and supports higher capacities for future upgrades.

7. Budget considerations: Balance your RAM requirements with your budget constraints. RAM prices can vary based on factors such as capacity and speed. Assess your needs and allocate a budget that provides sufficient RAM capacity and performance without exceeding your financial limitations.

8. Brand and warranty: Consider reputable brands known for producing reliable and high-quality RAM modules. Look for warranties that offer coverage and support in case of any issues. Research customer reviews and ratings to get an idea of the performance and reliability of different RAM modules.

Taking into account these factors, choose the RAM that best meets your specific data science needs. Remember to consider the capacity, type, speed, compatibility, and future upgradability of the RAM within your budget. By selecting the right RAM, you can ensure smooth and efficient data analysis, modeling, and machine learning processes.

Conclusion

RAM plays a crucial role in data science, providing the necessary resources to handle large datasets, run complex algorithms, and ensure efficient data analysis. By understanding the importance of RAM in data science and considering various factors, you can determine the appropriate RAM capacity for your specific needs.

Factors such as dataset size, algorithm complexity, software requirements, and concurrent processes should be considered when determining how much RAM you need. Additionally, monitoring and optimizing RAM usage, maximizing RAM efficiency, and choosing the right RAM configuration are crucial for achieving optimal performance and productivity in data science work.

Monitoring RAM usage, identifying memory-intensive processes, and optimizing data loading and processing contribute to efficient RAM management. Tips such as minimizing data duplication, utilizing memory-mapped files, and using memory-efficient data structures help maximize RAM efficiency in data science tasks. Furthermore, selecting the appropriate RAM capacity, type, speed, and considering future upgradability aligns your system with the demands of data science work.

By following these guidelines, you can ensure that your RAM resources are effectively utilized, leading to improved performance, faster analysis, and more efficient data-driven insights in your data science endeavors.