Patronus AI, a startup founded by two former AI experts from Meta, has launched a managed service for evaluating and testing large language models (LLMs) in regulated industries. Focusing on industries that demand near-zero tolerance for errors, the company aims to identify potential issues in LLMs and help ensure they are safe and accurate.

Key Takeaways

- Patronus AI, a startup founded by former AI experts from Meta, has launched a managed service to evaluate and test large language models (LLMs) in regulated industries.

- The company’s product automates and scales LLM evaluation by scoring models, generating test cases, and benchmarking models against specific criteria.

- Patronus AI focuses on highly regulated sectors to ensure LLMs are safe and accurate, detecting instances of inappropriate outputs or business-sensitive information.

- The startup plans to hire additional talent, emphasizes diversity, and aims to be a trusted third party in model evaluation.

- The company’s $3 million seed round was led by Lightspeed Venture Partners.

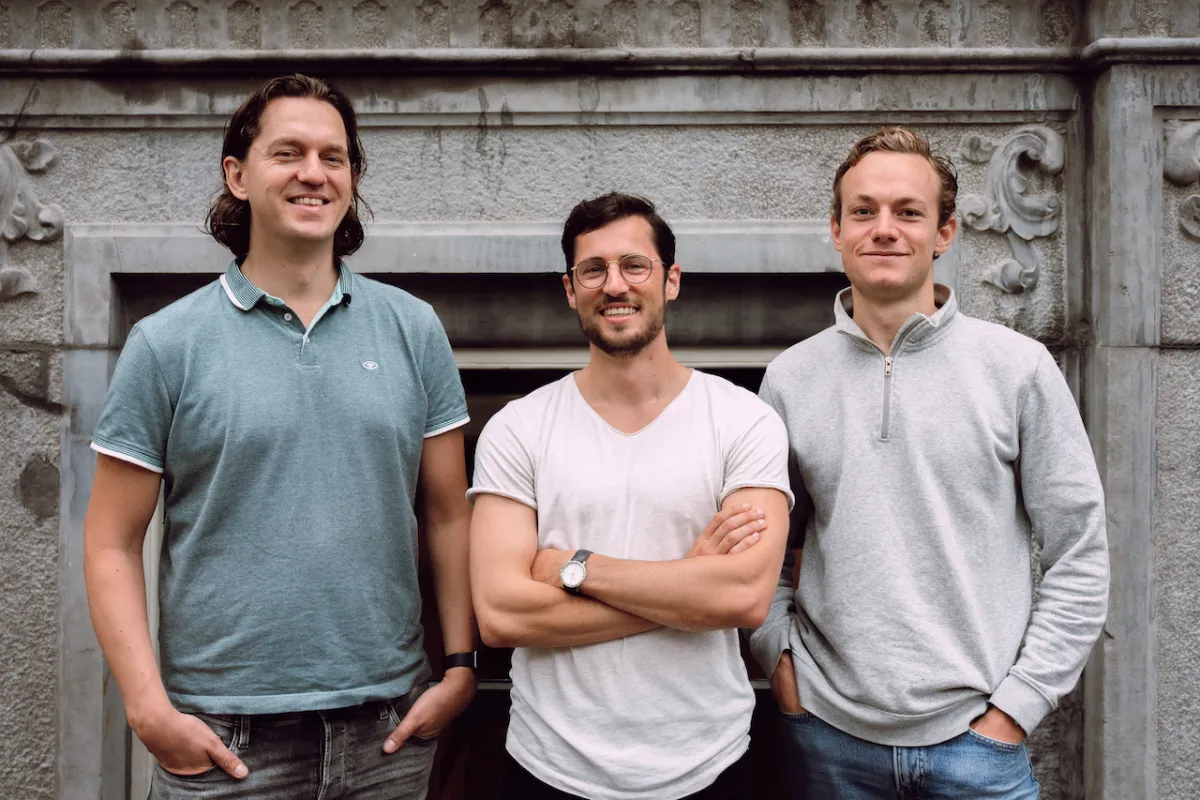

Rebecca Qian, the Chief Technology Officer (CTO) at Patronus AI, previously spearheaded responsible Natural Language Processing (NLP) research at Meta AI. Her co-founder and CEO, Anand Kannappan, played a key role in developing explainable Machine Learning (ML) frameworks at Meta Reality Labs. Today, their startup emerges from stealth, making their product widely available and announcing a successful $3 million seed round.

Automating and Scaling the LLM Evaluation Process

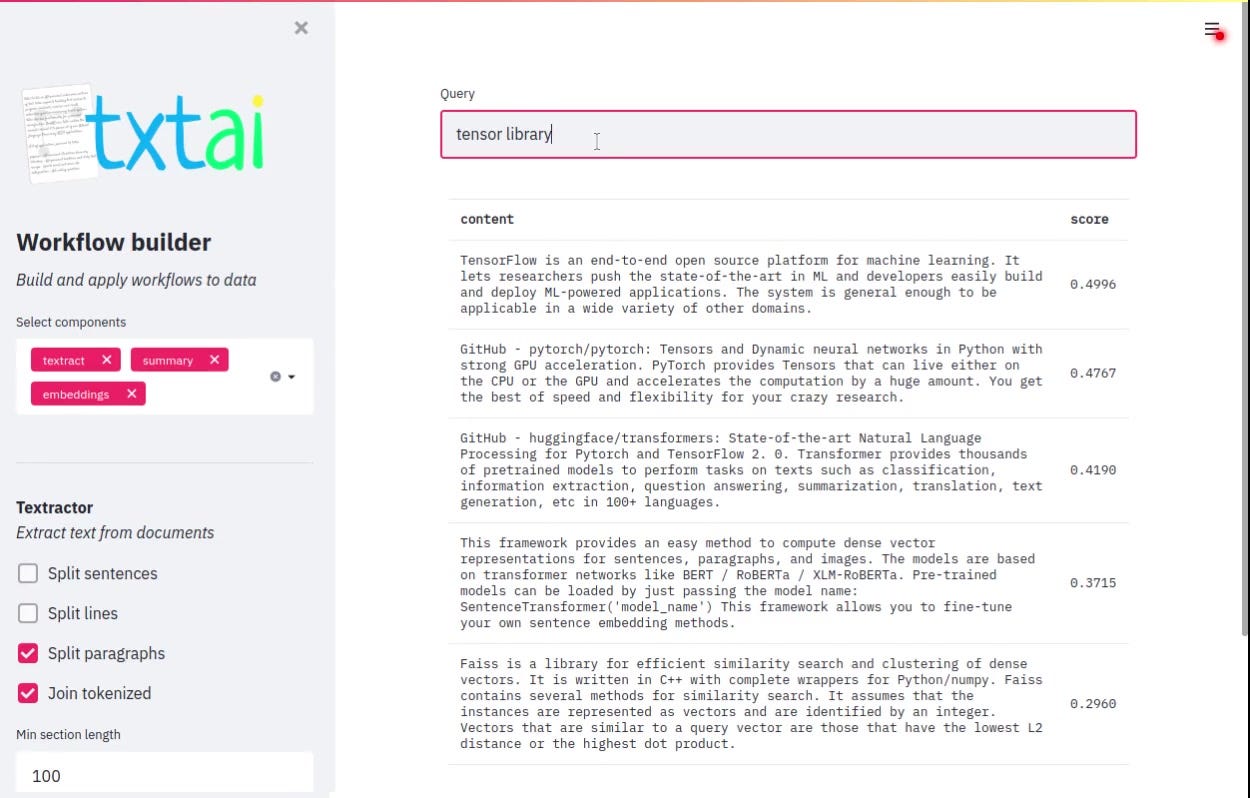

Patronus AI seeks to automate and scale the entire process of evaluating and testing large language models. The product encompasses three essential steps to ensure the reliability and accuracy of LLMs.

The first step scores models in real-world scenarios, letting users assess key criteria such as the likelihood of hallucinations. The second step generates adversarial test suites and stress-tests the models, producing test cases at scale to identify areas of concern. Finally, the platform benchmarks models against criteria tailored to specific requirements, so users can select the most suitable model for their use case.
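The benchmarking step described above can be illustrated with a small Python sketch. This is not Patronus AI's product or API, which the article does not describe in detail. Every name here (`EvalCase`, `failure_rate`, `benchmark`, `best_model`, and the two toy models) is hypothetical, and the substring check stands in for whatever scoring a real evaluation service would use.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List

# Hypothetical types for illustration only; not Patronus AI's API.
Model = Callable[[str], str]  # a "model" is any function from prompt to answer


@dataclass
class EvalCase:
    prompt: str
    expected: str  # reference text the model's answer should contain


def failure_rate(model: Model, cases: List[EvalCase]) -> float:
    """Fraction of cases where the answer does not contain the expected text."""
    if not cases:
        raise ValueError("need at least one test case")
    failures = sum(
        1 for c in cases if c.expected.lower() not in model(c.prompt).lower()
    )
    return failures / len(cases)


def benchmark(models: Dict[str, Model], cases: List[EvalCase]) -> Dict[str, float]:
    """Score every model on the same test suite."""
    return {name: failure_rate(m, cases) for name, m in models.items()}


def best_model(scores: Dict[str, float]) -> str:
    """Pick the model with the lowest failure rate."""
    return min(scores, key=scores.get)


# Toy stand-ins for real LLMs (assumed, for demonstration only).
def model_a(prompt: str) -> str:
    answers = {"What is 2 + 2?": "4", "Capital of France?": "Paris"}
    return answers.get(prompt, "I don't know")


def model_b(prompt: str) -> str:
    answers = {"What is 2 + 2?": "5", "Capital of France?": "Paris"}
    return answers.get(prompt, "I don't know")


cases = [EvalCase("What is 2 + 2?", "4"), EvalCase("Capital of France?", "Paris")]
scores = benchmark({"model_a": model_a, "model_b": model_b}, cases)
print(scores, best_model(scores))
```

In this toy run, `model_a` answers both cases correctly and `model_b` fails one of two, so the comparison selects `model_a`. A real test suite would be far larger and adversarially generated, as the article notes.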

Qian emphasizes the importance of comparing different models, stating, “We help users identify the best model for their specific use case. For instance, we compare models to determine factors such as failure rates and hallucinations, enabling users to make informed decisions.”

Ensuring Safety in Highly Regulated Industries

Patronus AI primarily targets highly regulated industries, where incorrect answers from LLMs can have severe consequences. By detecting instances where models produce business-sensitive information or inappropriate outputs, the company helps organizations ensure that the LLMs they use are safe and reliable.

Kannappan notes that Patronus AI aims to be a trusted third party in the evaluation of models, providing an unbiased and independent perspective. “Anyone can claim that their LLM is the best, but we are the credibility checkmark,” he affirms.

The startup currently employs six full-time team members and plans to expand its workforce in the near future to meet growing industry demand. Qian describes diversity as a core value for Patronus AI, stating, “We are deeply committed to diversity, starting from the leadership level at Patronus. As we continue to grow, we will prioritize implementing programs and initiatives that foster an inclusive workspace.”