Introduction

The web holds a vast amount of useful data, but most of it is published as pages meant for people rather than programs. Web scraping, also known as web harvesting or data extraction, is the process of automatically retrieving web pages and extracting data from them. PHP, a widely used general-purpose scripting language, is well suited to the task: it can send HTTP requests and parse HTML using standard extensions, so you can scrape websites and extract the information you need efficiently.

Web scraping has gained immense popularity due to its wide range of applications. Whether you’re a researcher collecting data for analysis, a business owner looking to gather market insights, or a developer in need of data for your application, web scraping can be a valuable tool in your arsenal.

In this article, we will explore the basic steps involved in web scraping using PHP. We will start by installing the necessary tools and libraries, followed by understanding the HTML structure of websites, and selecting the elements to scrape using CSS selectors. We will then delve into the process of scraping data using PHP and handling various website structures. Additionally, we’ll cover parsing the scraped data and storing it for further analysis.

Before diving into the technical aspects, it’s essential to touch upon the best practices and legal considerations of web scraping. While web scraping can provide extensive benefits, it is crucial to respect website owners’ privacy and adhere to ethical guidelines. We will discuss these considerations to ensure that your web scraping activities are conducted responsibly.

By the end of this article, you will have a solid foundation to embark on your web scraping journey with PHP. So, let’s roll up our sleeves, equip ourselves with PHP, and uncover the power of web scraping!

What is web scraping?

Web scraping is a technique used to extract data from websites. It involves retrieving information from web pages, collecting specific data points, and organizing it in a structured format. With web scraping, you can automate the process of gathering data from multiple websites, saving time and effort.

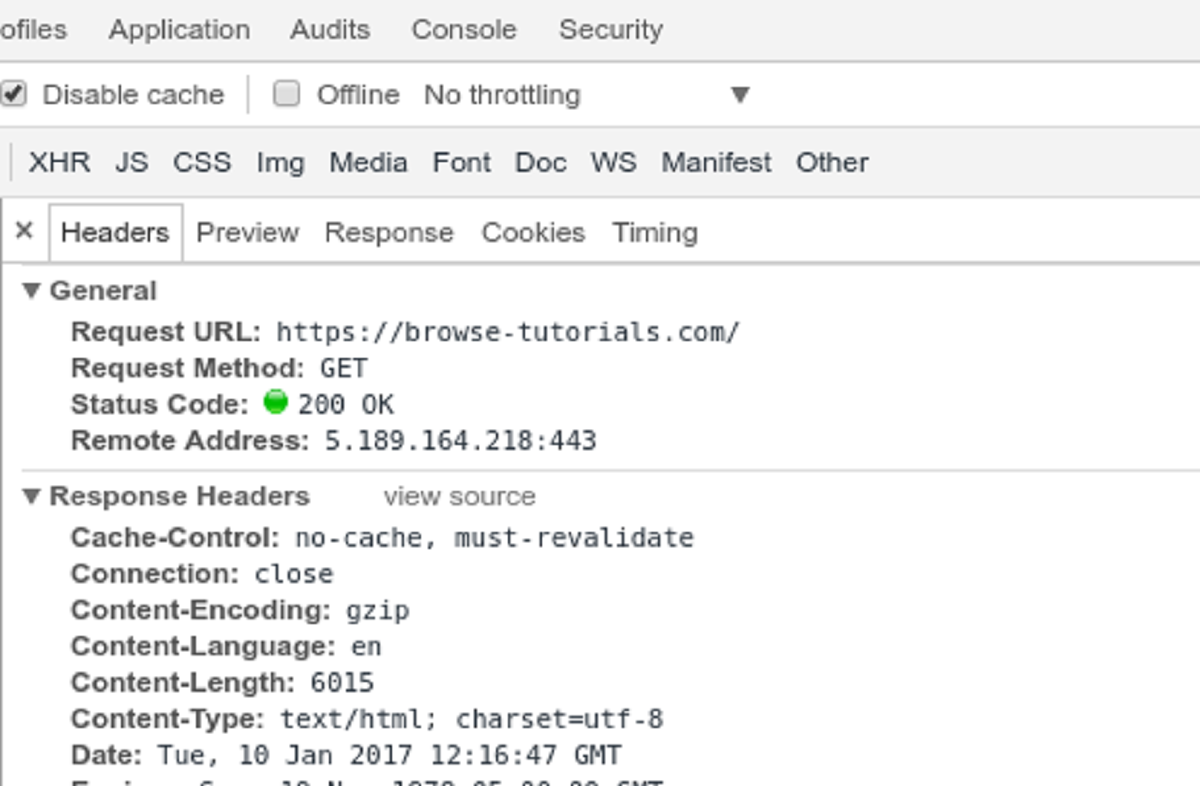

At its core, web scraping involves sending HTTP requests to a website, downloading the HTML code of the page, and extracting the desired information. This information can include text, images, links, tables, and more, depending on the specific data you are interested in.

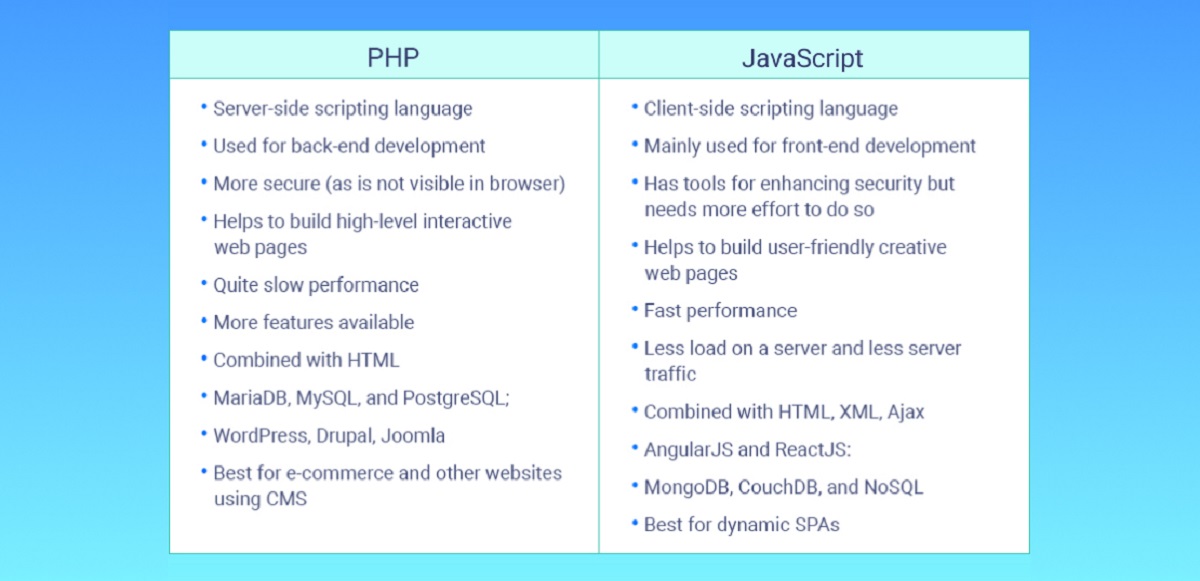

Web scraping can be performed manually, but this approach is time-consuming and inefficient, especially when dealing with a large amount of data or multiple websites. That’s where the power of automation comes in. By using programming languages like PHP, you can write scripts to automate the web scraping process, making it faster, more accurate, and scalable.

Web scraping finds applications in various domains. Researchers can scrape data from academic journals, online databases, or social media platforms to gather information for analysis and insights. Business professionals can scrape competitor websites to monitor pricing, customer reviews, and product information. Marketers can scrape websites for lead generation or market research. And developers can scrape data to populate their applications or extract information for data-driven decision making.

However, it’s important to note that not all websites allow web scraping. Some websites have strict terms of service that prohibit their data from being scraped. Additionally, there may be legal and ethical considerations to keep in mind when scraping certain types of data. It’s crucial to understand the rules and guidelines governing web scraping and ensure compliance.

Web scraping has revolutionized the way we gather and utilize data from the internet. With PHP, you have a powerful tool in your hands to automate the process and extract valuable information. In the next sections, we will explore the steps to perform web scraping with PHP and discuss best practices to ensure successful and responsible scraping.

Why scrape a website for data?

Web scraping offers numerous benefits and can be an invaluable tool in a variety of situations. Let’s explore some of the key reasons why scraping a website for data is advantageous:

1. Access to valuable information: Websites contain a wealth of data, from product listings and prices to news articles and user reviews. By scraping websites, you can gather this information and use it for various purposes, such as market research, competitor analysis, or content aggregation.

2. Real-time data monitoring: Many websites continuously update their content. By scraping these sites, you can monitor changes in real time, such as stock prices, social media trends, or news updates. This enables you to stay up to date with the latest information and make informed decisions.

3. Automation and efficiency: Manually collecting data from websites can be time-consuming and repetitive. With web scraping, you can automate the process and extract data from multiple websites simultaneously. This saves you valuable time and allows you to focus on analyzing the collected data rather than gathering it.

4. Competitive intelligence: Scraping competitor websites provides valuable insights into their offerings, pricing, promotions, and customer reviews. This information can help you stay competitive in the market, identify opportunities, and make informed business decisions.

5. Lead generation: Web scraping can be a powerful tool for generating leads. By scraping websites and extracting contact information, you can build targeted email lists, identify potential customers, and reach out to them with your products or services.

6. Research and analysis: Researchers and analysts across various domains can benefit from scraping websites. Whether it’s collecting data for academic research, analyzing market trends, or conducting sentiment analysis on social media platforms, web scraping provides a vast amount of data for exploration and insight generation.

It’s important to note that while web scraping offers numerous benefits, it’s essential to respect the terms of service and legal guidelines set by websites. Some websites explicitly prohibit scraping, while others may require specific permissions or use of APIs. Adhering to these guidelines ensures ethical and responsible scraping practices.

In the next sections, we will dive into the technical aspects of web scraping with PHP, covering the steps involved and best practices to follow. By mastering the art of web scraping, you can unlock the power of data and gain a competitive edge in your endeavors.

Basic steps for web scraping with PHP

Web scraping with PHP involves a series of steps to extract data from websites efficiently. Let’s walk through the basic steps involved in the web scraping process:

1. Identify the target website: Determine the website from which you want to extract data. It can be a single website or multiple websites depending on your requirement.

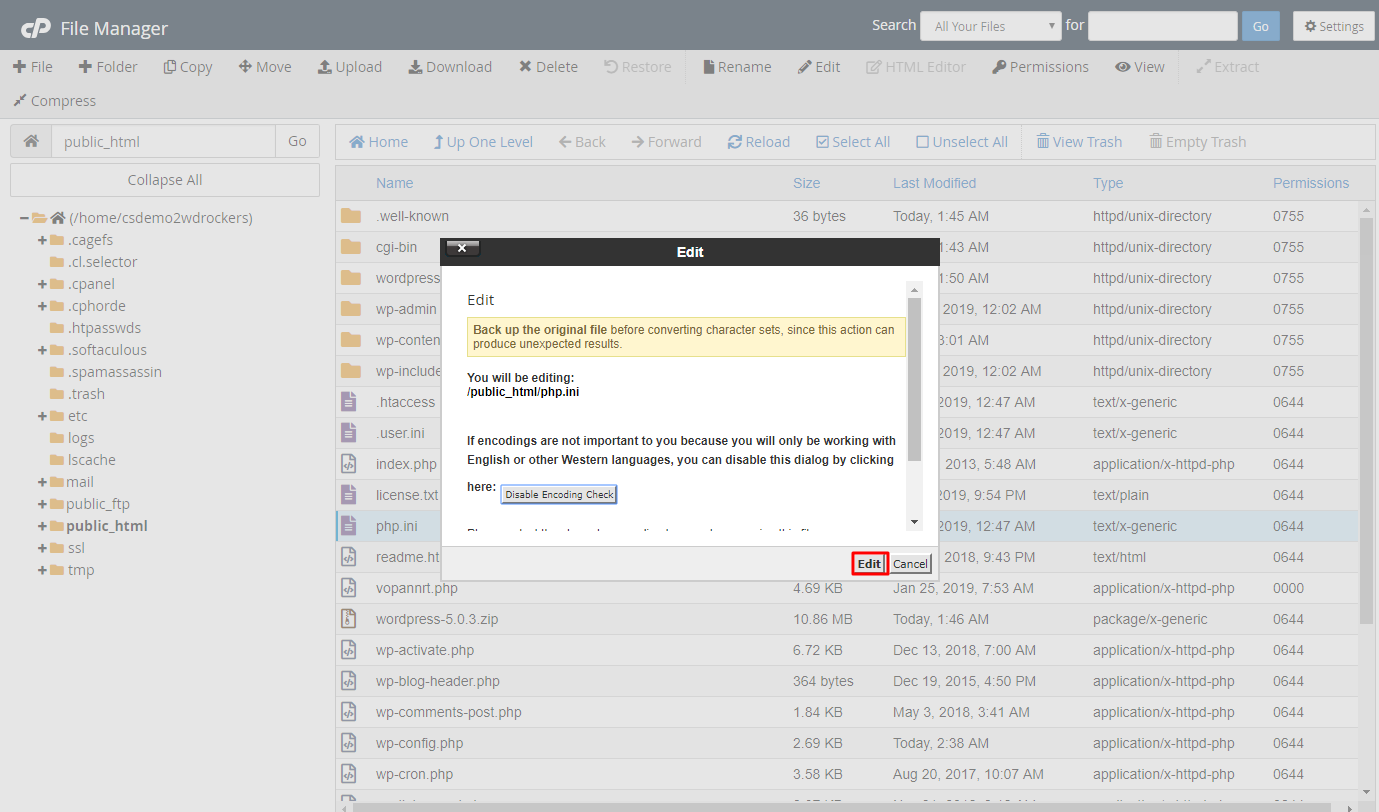

2. Install the necessary tools and libraries: To begin web scraping with PHP, you need to set up the required tools and libraries. This includes installing PHP on your system, making sure the cURL and DOM extensions are enabled, and optionally adding a library such as Guzzle for handling HTTP requests. The DOMDocument class from the DOM extension handles HTML parsing.

3. Understand the HTML structure: Inspect the HTML structure of the target website using browser developer tools. This will help you identify the specific HTML elements that contain the data you want to scrape.

4. Select elements to scrape with CSS selectors: Use CSS selectors to pinpoint the HTML elements to scrape. CSS selectors allow you to target elements based on their class, ID, attributes, or hierarchical relationships. This granular selection ensures accurate extraction of the desired data.

5. Scrape the data: Using PHP, send an HTTP request to the target website’s URL and retrieve the HTML content. Parse the HTML document using the DOM manipulation capabilities of PHP to extract the selected elements identified in the previous step. Extract the desired data and store it in variables or arrays.

6. Handle different website structures: Websites vary in their structure and layout. Some websites may have a straightforward structure, while others may require more complex scraping techniques. Be prepared to adapt your scraping logic to handle different scenarios and edge cases.

7. Parse and clean the scraped data: Once the data is extracted, you may need to further process and clean it. This can involve removing unnecessary whitespace, converting data types, filtering out unwanted characters, or performing additional parsing to extract specific information.

8. Store the scraped data: Determine the appropriate storage method for the extracted data. You can save it in a local file, a database, or an external service for further analysis or integration with other applications.

9. Implement error handling and retries: Implement robust error handling mechanisms to handle potential issues such as connection failures, timeouts, or website changes. Incorporate retries to ensure the scraping process continues even in the face of transient errors.

10. Respect website terms of service and legal guidelines: Always ensure you comply with the website’s terms of service and legal requirements. Respect robots.txt files, honor request frequency limits, and obtain necessary permissions if required. Be mindful of potential legal implications and scrape responsibly.

Understanding these basic steps will lay the foundation for successful web scraping with PHP. In the upcoming sections, we will delve deeper into each step, exploring techniques, strategies, and best practices to perform effective web scraping using PHP.
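To make the steps above concrete, here is a minimal sketch of steps 4, 5, 7, and 8 in one script. It works on a hard-coded sample page rather than a live website, and the markup, class names, and field names are assumptions made up for illustration. Because DOMDocument has no CSS selector support of its own, the selector li.product is written as an equivalent XPath expression.

```php
<?php
// Hypothetical sample page; a real scraper would download this HTML over HTTP.
$html = <<<'HTML'
<html><body>
  <ul id="products">
    <li class="product"><a href="/p/1">  Blue Widget </a><span class="price">$19.99</span></li>
    <li class="product"><a href="/p/2">Red Widget</a><span class="price">$5.00</span></li>
  </ul>
</body></html>
HTML;

// Step 5: parse the HTML. Parser warnings about imperfect markup are collected, not printed.
libxml_use_internal_errors(true);
$doc = new DOMDocument();
$doc->loadHTML($html);
libxml_clear_errors();

// Step 4: select the elements. This XPath is the equivalent of the CSS selector "li.product".
$xpath = new DOMXPath($doc);
$items = $xpath->query('//li[contains(concat(" ", normalize-space(@class), " "), " product ")]');

// Steps 5 and 7: extract and clean each record.
$products = [];
foreach ($items as $item) {
    $link  = $xpath->query('.//a', $item)->item(0);
    $price = $xpath->query('.//span[@class="price"]', $item)->item(0);
    if ($link === null || $price === null) {
        continue; // skip rows that do not match the expected layout
    }
    $products[] = [
        'name'  => trim($link->textContent),
        'url'   => $link->getAttribute('href'),
        'price' => (float) ltrim(trim($price->textContent), '$'),
    ];
}

// Step 8: store the result, here simply as a JSON string.
$json = json_encode($products, JSON_PRETTY_PRINT);
echo $json, PHP_EOL;
```

The later sections look at each of these stages separately; in a real project the sample string would be replaced by a downloaded page and the JSON output by a file or database write.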

Installing required tools and libraries

Before you can start web scraping with PHP, you need to ensure that you have the necessary tools and libraries installed on your system. These tools and libraries will provide the functionality required to handle HTTP requests, parse HTML, and perform data manipulation. Here are the essential components you’ll need to install:

1. PHP: PHP is the core programming language you’ll be using for web scraping. Ensure that PHP is installed on your system, preferably the latest version, to take advantage of its features and improvements. You can download PHP from the official PHP website (php.net) and follow the installation instructions for your operating system.

2. cURL: cURL is a command-line tool, and libcurl is the library behind it, for transferring data using various protocols, including HTTP. PHP exposes libcurl through its cURL extension, which lets you send HTTP requests, handle cookies, and interact with web servers. The extension is widely used in web scraping to retrieve HTML content from websites. Many PHP installations already include it; if yours does not, it is typically installed through your system’s package manager (for example, the php-curl package via apt-get on Debian or Ubuntu) or enabled in php.ini.

3. Guzzle: Guzzle is a popular PHP HTTP client library that simplifies the process of sending HTTP requests and handling responses. It provides a high-level API for interacting with websites and offers features like timeouts and middleware, which can also be used to implement request retries. You can install Guzzle using Composer, the dependency management tool for PHP, by running composer require guzzlehttp/guzzle in your project directory.

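4. DOMDocument: The DOMDocument class in PHP provides a convenient way to parse HTML and XML documents, manipulate their contents, and extract specific elements. It is part of PHP’s DOM extension, which is enabled by default in most installations, so usually no additional installation is required. You can use DOMDocument to traverse the HTML structure of a website and, together with the DOMXPath class, select elements with XPath expressions. DOMDocument does not understand CSS selectors itself; a CSS selector can be rewritten as XPath by hand or converted with a library such as symfony/css-selector.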

These tools and libraries form the foundation of web scraping with PHP. Depending on your specific requirements, you might also need to install additional libraries or modules. For example, if you’re working with JavaScript-heavy websites, you might consider driving a headless browser with a tool such as Puppeteer (a Node.js library) or Selenium. PhantomJS was once a common choice for this, but it is no longer actively developed.

Once you have PHP, cURL, Guzzle, and DOMDocument installed, you’re ready to dive into the exciting world of web scraping. In the next sections, we will explore the HTML structure of websites and learn how to select elements for scraping using CSS selectors.
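As a quick sanity check, a short script along the following lines can report whether the pieces described above are available. It is only a sketch: the labels are arbitrary, and Guzzle will show as unavailable unless Composer’s autoloader (vendor/autoload.php) has been included first.

```php
<?php
// Report whether each building block mentioned above is available.
$checks = [
    'PHP version'    => PHP_VERSION,
    'curl extension' => extension_loaded('curl') ? 'loaded' : 'missing',
    'dom extension'  => extension_loaded('dom') ? 'loaded' : 'missing',
    // Guzzle is only visible if Composer's autoloader has been included.
    'Guzzle client'  => class_exists('GuzzleHttp\\Client') ? 'available' : 'not autoloaded',
];

foreach ($checks as $name => $status) {
    printf("%-15s %s\n", $name, $status);
}
```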

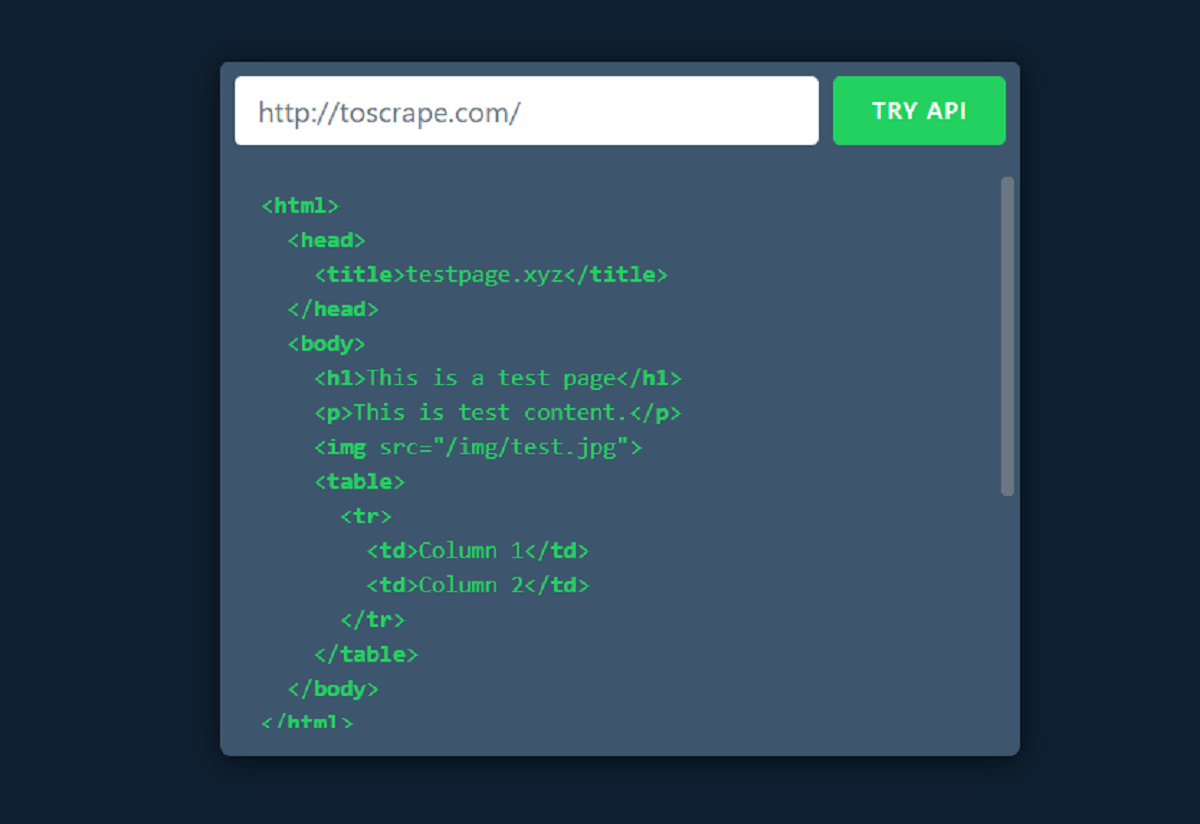

Understanding the HTML structure of a website

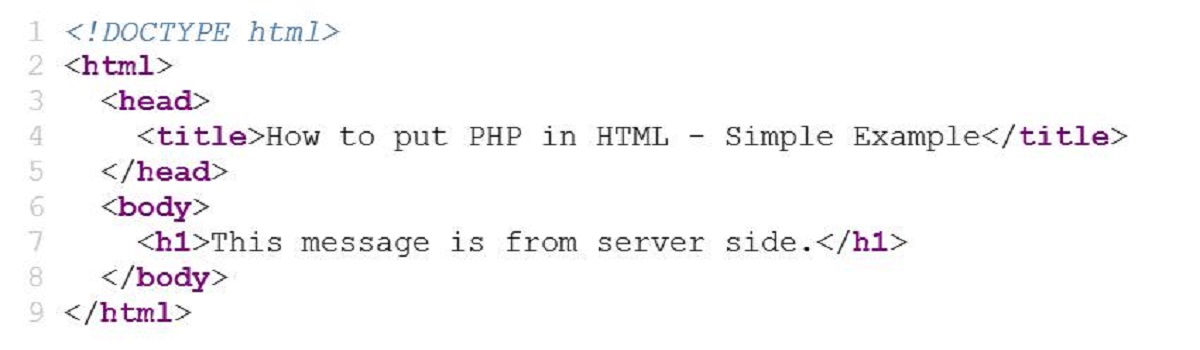

Having a solid understanding of the HTML structure of a website is fundamental to effective web scraping with PHP. HTML (Hypertext Markup Language) is the standard language used for creating web pages, and it serves as the foundation for how websites are structured and organized.

When you visit a website, your web browser receives an HTML document that defines the structure and content of the page. This document is composed of HTML tags, nested elements, attributes, and text content. By inspecting the HTML structure of a website, you can identify the specific elements that contain the data you want to extract.

To gain insights into the HTML structure, you can use the developer tools provided by modern web browsers. Right-click on a webpage and select “Inspect” or press Ctrl+Shift+I (or Command+Option+I on Mac) to open the developer tools. Within the developer tools, navigate to the “Elements” or “Inspector” tab, which displays the HTML structure of the page.

Once you have the HTML structure in front of you, explore the elements and their relationships. Elements are enclosed within HTML tags, such as <div>, <p>, or <h1>. Elements can be nested within each other, forming a hierarchical structure. Parent elements contain child elements, and they can also have siblings at the same level.
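The same parent, child, and sibling relationships can be explored from PHP. The sketch below parses a small made-up snippet with DOMDocument and prints one indented line per element, which roughly mirrors what the “Elements” tab shows. The function name outline() is a hypothetical helper, not part of PHP.

```php
<?php
// Hypothetical snippet standing in for a downloaded page.
$html = '<div id="main"><h1>Title</h1><p>First <a href="#">link</a></p><p>Second</p></div>';

libxml_use_internal_errors(true);
$doc = new DOMDocument();
$doc->loadHTML($html);
libxml_clear_errors();

/** Collect one indented line per element, walking the tree depth-first. */
function outline(DOMElement $element, int $depth = 0, array &$lines = []): array
{
    $lines[] = str_repeat('  ', $depth) . $element->tagName;
    foreach ($element->childNodes as $child) {
        if ($child instanceof DOMElement) { // ignore text nodes and comments
            outline($child, $depth + 1, $lines);
        }
    }
    return $lines;
}

$lines = outline($doc->getElementsByTagName('div')->item(0));
echo implode("\n", $lines), "\n";
```

Here the two p elements are siblings, both are children of the div, and the a element is a child of the first p.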

Inspecting the HTML structure will help you identify the specific elements that hold the data you want to scrape. These elements can be headings, paragraphs, tables, list items, or any other HTML tags that encapsulate the desired information. By analyzing the class names, IDs, attributes, or their positions within the structure, you can pinpoint the elements relevant to your scraping needs.

Understanding the HTML structure is crucial for utilizing CSS selectors, which allow you to target specific elements with precision. CSS selectors act as filters, selecting elements based on their attributes, classes, IDs, or relationships with other elements. Selectors provide a powerful way to extract data selectively from a website.

By combining your knowledge of the HTML structure with CSS selectors, you can efficiently scrape the desired data. The next section will delve into the usage of CSS selectors for selecting elements to scrape with PHP. With a solid understanding of the HTML structure and CSS selectors, you’ll be well-equipped to navigate websites and extract valuable information for your scraping endeavors.

Selecting elements to scrape with CSS selectors

When it comes to web scraping with PHP, selecting the elements you want to scrape from a website is a critical step. CSS selectors provide a powerful and flexible way to target specific elements based on their attributes, classes, IDs, or relationships with other elements.

CSS selectors allow you to precisely identify the elements that contain the data you want to extract. By using CSS selectors, you can filter out irrelevant elements and focus only on the ones that matter. This level of granular selection ensures accurate and targeted scraping.

To select elements with CSS selectors, you need to understand the various types of selectors and their syntax. Here are some commonly used CSS selectors:

- Element selector: Selects all elements of a specific type. For example, the selector `p` will select all `<p>` tags.
- Class selector: Selects elements that have a specific class attribute. For example, the selector `.my-class` will select all elements with the class “my-class”.
- ID selector: Selects an element with a specific ID attribute. For example, the selector `#my-id` will select the element with the ID “my-id”.
- Attribute selector: Selects elements that have a specific attribute. For example, the selector `[data-url]` will select all elements with the attribute “data-url”.
- Combination selectors: You can combine multiple selectors to target specific elements based on their relationships or combinations of attributes. For example, the selector `div.my-class` will select all `<div>` tags with the class “my-class”.
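PHP’s DOMDocument does not evaluate CSS selectors directly, so one common approach is to express each selector as an XPath query and run it with DOMXPath. The sketch below shows hand-written XPath equivalents for the five selectors above, applied to a small made-up document. The class test uses the usual concat/normalize-space idiom so that it matches a class name even when the element has several classes.

```php
<?php
// Hypothetical markup used only to exercise the selectors.
$html = <<<'HTML'
<div class="my-class other"><p id="my-id">One</p></div>
<div class="box"><p data-url="/a">Two</p><p>Three</p></div>
HTML;

libxml_use_internal_errors(true);
$doc = new DOMDocument();
$doc->loadHTML($html);
libxml_clear_errors();
$xpath = new DOMXPath($doc);

// Hand-written XPath equivalents of the CSS selectors listed above.
$selectors = [
    'p'            => '//p',
    '.my-class'    => '//*[contains(concat(" ", normalize-space(@class), " "), " my-class ")]',
    '#my-id'       => '//*[@id="my-id"]',
    '[data-url]'   => '//*[@data-url]',
    'div.my-class' => '//div[contains(concat(" ", normalize-space(@class), " "), " my-class ")]',
];

$counts = [];
foreach ($selectors as $css => $expression) {
    $counts[$css] = $xpath->query($expression)->length;
    printf("%-13s matches %d element(s)\n", $css, $counts[$css]);
}
```

For more complex selectors, writing XPath by hand becomes error-prone, and a converter library such as symfony/css-selector may be a better fit.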

When scraping a website, you can use CSS selectors to identify the HTML elements that contain the data you want to extract. For example, if you want to scrape the titles of news articles from a website, you can use a class selector to target the elements with the class “news-title”. Similarly, if you want to scrape all the links on a webpage, you can use an element selector for <a> tags.

Once you have selected the elements using CSS selectors, you can utilize PHP’s DOM manipulation capabilities to extract the desired data. By accessing the properties and methods of the selected elements, you can retrieve their text content, attributes, or even navigate to their child elements.

Understanding and utilizing CSS selectors is a key skill for effective web scraping with PHP. In the upcoming sections, we will explore the process of scraping data using PHP and demonstrate how CSS selectors can be incorporated to extract specific elements for further processing.

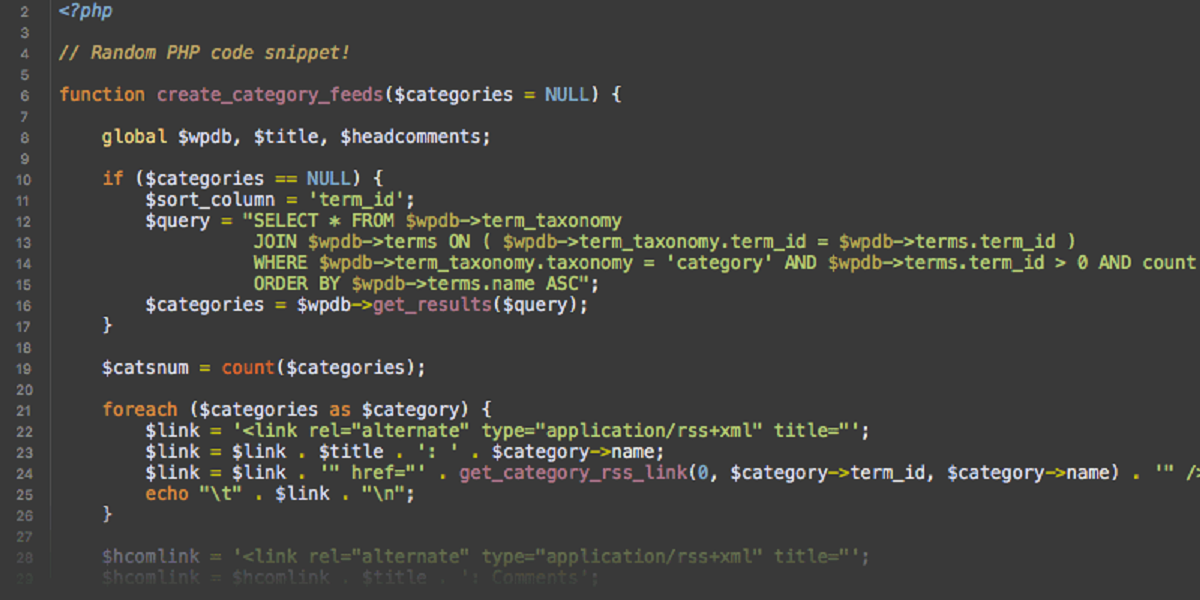

Scraping data using PHP

Now that we have a solid understanding of CSS selectors and the HTML structure of a website, let’s dive into the process of scraping data using PHP. With PHP’s powerful DOM manipulation capabilities and the knowledge of CSS selectors, we can efficiently extract the desired information from web pages.

The first step in scraping data with PHP is to retrieve the HTML content of the target website. This is accomplished by sending an HTTP request to the website’s URL, either with PHP’s cURL extension or with an HTTP client library such as Guzzle. Once the HTML content is obtained, we can use PHP’s DOMDocument class to parse and manipulate the HTML.
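A minimal sketch of the retrieval step with the cURL extension might look like this. The function name fetchHtml(), the User-Agent string, and the timeout values are illustrative assumptions rather than required settings.

```php
<?php
/**
 * Download a page with the cURL extension and return its body.
 * The User-Agent string and timeout values are illustrative defaults.
 */
function fetchHtml(string $url, int $timeoutSeconds = 10): string
{
    $handle = curl_init($url);
    curl_setopt_array($handle, [
        CURLOPT_RETURNTRANSFER => true,  // return the body instead of printing it
        CURLOPT_FOLLOWLOCATION => true,  // follow HTTP redirects
        CURLOPT_CONNECTTIMEOUT => 5,     // seconds allowed for connecting
        CURLOPT_TIMEOUT        => $timeoutSeconds,
        CURLOPT_USERAGENT      => 'ExampleScraper/1.0 (+https://example.com/bot)',
    ]);

    $body   = curl_exec($handle);
    $error  = curl_error($handle);
    $status = curl_getinfo($handle, CURLINFO_RESPONSE_CODE);

    if ($body === false) {
        throw new RuntimeException("Request failed: $error");
    }
    if ($status >= 400) {
        throw new RuntimeException("Unexpected HTTP status $status for $url");
    }
    return $body;
}

// Usage (performs a real network request, so it is left commented out):
// $html = fetchHtml('https://example.com/');
```

Throwing an exception on transport errors and on 4xx/5xx responses keeps failures visible to the calling code instead of passing an error page on to the parser.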

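Using DOMDocument, we can then select elements on the page. The getElementsByTagName() method retrieves elements by their tag name, and the DOMXPath class runs XPath queries against the document. DOMDocument has no querySelectorAll() method, so a CSS selector must first be translated into XPath, either by hand or with a library such as symfony/css-selector. (PHP 8.4 adds a newer Dom\HTMLDocument class that does provide querySelector() and querySelectorAll().)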

Once we have selected the desired elements, we can access their properties and methods to retrieve the information we want. For example, we can use the textContent property to extract the text content of an element, or the getAttribute() method to retrieve the value of a specific attribute.
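The following sketch shows textContent and getAttribute() in use on a small made-up listing of news headlines. The markup and array keys are assumptions for illustration; hasAttribute() is used to tell a missing attribute apart from an empty one.

```php
<?php
// Hypothetical markup standing in for a downloaded news page.
$html = <<<'HTML'
<article><h2 class="news-title"><a href="/news/1" title="Read more">PHP 8 released</a></h2></article>
<article><h2 class="news-title"><a href="/news/2">Scraping tips</a></h2></article>
HTML;

libxml_use_internal_errors(true);
$doc = new DOMDocument();
$doc->loadHTML($html);
libxml_clear_errors();

$headlines = [];
foreach ($doc->getElementsByTagName('a') as $link) {
    $headlines[] = [
        'text'  => trim($link->textContent),       // visible text of the link
        'href'  => $link->getAttribute('href'),    // attribute value as a string
        'title' => $link->hasAttribute('title') ? $link->getAttribute('title') : null,
    ];
}

print_r($headlines);
```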

In some cases, the scraped data may require further processing or cleaning. This can include removing unnecessary whitespace, converting data types, or applying regular expressions to extract specific information. PHP provides a wide range of functions and libraries to facilitate these tasks, allowing us to manipulate the scraped data according to our needs.

As we scrape data from multiple elements, it’s important to consider how we store the scraped information. Depending on our requirements, we can store the data in variables, arrays, or even write it to a file or database for further analysis or integration with other applications.

While performing web scraping with PHP, it’s crucial to handle various scenarios and errors gracefully. This includes implementing error handling mechanisms to capture potential issues like HTTP request failures or invalid HTML structures. Additionally, we should be mindful of the website’s terms of service and any usage restrictions to ensure responsible and ethical scraping practices.

By leveraging PHP’s DOM manipulation capabilities, CSS selectors, and data processing functions, we can effectively scrape data from websites and extract the desired information. In the following sections, we’ll explore advanced techniques and best practices to enhance our web scraping capabilities with PHP.

Handling different types of website structures

When it comes to web scraping, websites can have varying structures and layouts. Some websites may have a straightforward and well-organized HTML structure, while others may be more complex and dynamic, utilizing JavaScript frameworks or AJAX requests to load content. To scrape data effectively, it’s important to be prepared to handle different types of website structures.

Here are a few techniques and considerations for handling different website structures when performing web scraping with PHP:

1. Static HTML websites: Static websites have a simple HTML structure with static content that does not change frequently. Scraping data from static websites is usually straightforward, as you can identify the HTML elements you need to target using CSS selectors. Simply retrieve the HTML content and use PHP’s DOMDocument to select and extract the desired data.

2. Dynamic HTML websites: Dynamic websites, on the other hand, rely on JavaScript to load and render content. In such cases, fetching the page and parsing it with DOMDocument is often not enough, because the downloaded HTML does not yet contain the data and DOMDocument does not execute JavaScript. To handle dynamic websites, you might need a headless browser, driven by a tool such as Puppeteer or Selenium, to fully load and interact with the website before scraping. (PhantomJS, formerly a common choice, is no longer actively developed.)

3. AJAX-driven websites: Many modern websites utilize AJAX requests to fetch and display content asynchronously. In these cases, the initial HTML response might be minimal, and the actual data is loaded and injected into the DOM dynamically using JavaScript. To scrape data from AJAX-driven websites, you can inspect the network requests made by the website and identify the API endpoints that fetch the data. You can then simulate these requests using PHP to retrieve the desired data.

4. Pagination and infinite scrolling: Websites often display data in chunks or multiple pages, requiring scraping of multiple pages to obtain complete information. For paginated websites, you can loop through the pages, updating the URL parameters or following the “next” buttons to scrape all the data. For websites with infinite scrolling, where additional content is dynamically loaded as the user scrolls, you might need to simulate scrolling and handle AJAX requests to collect the full set of data.

5. Captchas and access restrictions: Some websites implement measures to prevent scraping, such as captchas or access restrictions. While it’s important to respect website policies and legal guidelines, there might be cases where you need to overcome these challenges. Techniques like using proxy servers, rotating user-agents, or employing anti-captcha services can help circumvent these obstacles, but caution must be exercised to ensure compliance and responsibility.

Handling different website structures requires adaptability and a combination of techniques depending on the specific scenarios you encounter. By understanding the website’s structure, identifying the relevant data, and employing the appropriate approaches, you can overcome challenges and successfully scrape data from various types of websites.
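As one illustration of the pagination case, the sketch below follows “next” links until none remain. It assumes the link to the following page is marked with rel="next", which not every site does, and it takes the download step as a callable so that the example can run against two made-up pages instead of a live site. The function name scrapeAllPages() is hypothetical.

```php
<?php
/**
 * Follow "next" links until no more pages remain.
 * $fetch is any callable that maps a URL to an HTML string (for example a cURL wrapper).
 * $maxPages is a safety limit against endless loops.
 */
function scrapeAllPages(string $startUrl, callable $fetch, int $maxPages = 50): array
{
    $titles = [];
    $url = $startUrl;

    for ($page = 0; $url !== null && $page < $maxPages; $page++) {
        libxml_use_internal_errors(true);
        $doc = new DOMDocument();
        $doc->loadHTML($fetch($url));
        libxml_clear_errors();
        $xpath = new DOMXPath($doc);

        foreach ($xpath->query('//h2') as $heading) {
            $titles[] = trim($heading->textContent);
        }

        // Assumed markup: the link to the following page carries rel="next".
        // A real crawler would also resolve relative URLs against the current page.
        $next = $xpath->query('//a[@rel="next"]/@href')->item(0);
        $url = $next !== null ? $next->nodeValue : null;
    }

    return $titles;
}

// Stand-in for real HTTP requests: two hypothetical pages keyed by URL.
$pages = [
    '/list?page=1' => '<h2>Item A</h2><h2>Item B</h2><a rel="next" href="/list?page=2">Next</a>',
    '/list?page=2' => '<h2>Item C</h2>',
];

$titles = scrapeAllPages('/list?page=1', fn (string $url): string => $pages[$url]);
print_r($titles);
```

Passing the fetcher in as a parameter also makes it easy to add delays or retries around each request without touching the parsing logic.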

In the upcoming sections, we will explore advanced strategies and best practices for web scraping with PHP, equipping you with the knowledge to optimize your scraping efforts and handle even the most complex website structures.

Parsing scraped data

After successfully scraping data from a website using PHP, the next step is to parse and process the extracted data. Parsing involves transforming the raw scraped data into a structured format that is easier to work with and analyze. Depending on the type of data you have scraped, different parsing techniques may be required.

Here are some techniques for parsing scraped data in PHP:

1. Text processing: If the scraped data is primarily text-based, you can perform text processing techniques to clean, format, and extract relevant information. This can include removing unwanted characters or whitespace, converting date or numeric strings into appropriate formats, or applying regular expressions to extract specific patterns from the data.

2. HTML parsing: In some cases, the scraped data may contain HTML elements or tags. To work with this data efficiently, you can utilize PHP libraries like DOMDocument to parse the HTML and extract the desired information. This allows you to navigate the HTML structure, access specific elements, and retrieve their text content, attributes, or child elements.

3. XML parsing: If the scraped data is in XML format, PHP provides libraries such as SimpleXML or XMLReader for parsing XML documents. These libraries allow you to traverse the XML structure, access individual elements, and extract the required data using XPath expressions or simple iteration.

4. JSON parsing: JSON (JavaScript Object Notation) is a popular format for transmitting structured data. If the scraped data is in JSON format, PHP’s built-in functions like json_decode() allow you to parse the JSON string and convert it into a PHP array or object. This enables easy access and manipulation of the data within the PHP environment.

5. Data transformation: Depending on the intended use of the scraped data, you might need to transform it into a different format or structure. This can involve reshaping the data into a tabular format, converting it into a specific data type, or aggregating and summarizing the data for further analysis. PHP provides a wide range of functions and libraries to facilitate data transformation tasks.
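A few of these techniques can be sketched with PHP’s standard functions. The input strings below are made up, and the date format (day/month/year) is an assumption; real data needs its actual format checked first.

```php
<?php
// Text processing: collapse whitespace and pull a number out of a price string.
$rawPrice = "  Price:\n  \$1,299.50  ";
$clean = trim(preg_replace('/\s+/', ' ', $rawPrice));
preg_match('/[\d,]+(?:\.\d+)?/', $clean, $match);
$price = (float) str_replace(',', '', $match[0]);

// Date strings: convert a known input format (assumed day/month/year) into ISO 8601.
$date = DateTimeImmutable::createFromFormat('!d/m/Y', '05/03/2024');
$isoDate = $date->format('Y-m-d');

// JSON parsing: decode into associative arrays and throw on invalid input.
$json = '{"items":[{"id":1,"name":"Widget"},{"id":2,"name":"Gadget"}]}';
$data = json_decode($json, true, 512, JSON_THROW_ON_ERROR);
$names = array_column($data['items'], 'name');

var_dump($clean, $price, $isoDate, $names);
```

Using JSON_THROW_ON_ERROR (available since PHP 7.3) avoids silently treating malformed JSON as null.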

By parsing the scraped data, you can prepare it for further analysis, integration, or visualization. You can store the parsed data in a structured format, such as arrays or objects, and leverage the power of PHP to manipulate and work with the data efficiently.

It is important to note that data parsing can vary based on the specific requirements of your project and the nature of the scraped data. Exploring the available parsing techniques and understanding the data structure will help you process and extract meaningful insights from the scraped data.

In the next sections, we will explore strategies for storing the scraped and parsed data, as well as best practices for successful web scraping with PHP.

Storing the scraped data

Once you have successfully scraped and parsed the data using PHP, the next step is to determine how to store it for further use, analysis, or integration with other applications. The choice of storage method depends on various factors, such as the size and structure of the data, the intended use, and the scalability requirements of your project.

Here are some common approaches for storing scraped data with PHP:

1. Local files: Storing the scraped data in local files is a simple and straightforward option. You can write the data to a text file, CSV file, or even serialize and store it as JSON or XML. This method is suitable for smaller datasets or when immediate access is not required. However, it may not be the most efficient option for large or frequently updated datasets.

2. Databases: Databases provide a structured and efficient way to store and manage large quantities of scraped data. PHP supports various database systems such as MySQL, PostgreSQL, and SQLite through extensions like PDO. You can create tables to store the data and use SQL queries to insert, retrieve, update, and delete records. Databases provide capabilities for data indexing, querying, and advanced data manipulation.

3. Cloud storage: Cloud storage services like Amazon S3, Google Cloud Storage, or Microsoft Azure Blob Storage offer scalable and reliable options for storing scraped data. These services provide APIs and SDKs that can be integrated with PHP to upload and retrieve data. This approach is particularly useful when dealing with large datasets or when you need to collaborate with a distributed team.

4. Content Management Systems (CMS): If you are scraping data to populate a website or an existing content management system, integrating with the CMS’s API is an ideal option. Many CMS platforms, such as WordPress or Drupal, provide APIs that allow you to perform CRUD (Create, Read, Update, Delete) operations on their data structures. By utilizing the appropriate API endpoints, you can directly store the scraped data into the CMS.

5. NoSQL databases: NoSQL databases like MongoDB or CouchDB offer flexible and schema-less data storage. They are particularly useful when dealing with unstructured or semi-structured data. With PHP, you can leverage MongoDB’s official driver or other libraries to store and retrieve the scraped data.
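Two of the options above, a local JSON file and a relational database, can be sketched as follows. The records, table name, and column names are made up, and the example uses an in-memory SQLite database through PDO so that it leaves nothing behind; it assumes the pdo_sqlite extension is available.

```php
<?php
// Hypothetical records produced by an earlier scraping step.
$products = [
    ['name' => 'Blue Widget', 'price' => 19.99],
    ['name' => 'Red Widget',  'price' => 5.00],
];

// Local file option: serialize to JSON (written to a temporary file here).
$path = tempnam(sys_get_temp_dir(), 'scrape_');
file_put_contents($path, json_encode($products, JSON_PRETTY_PRINT));

// Database option: SQLite through PDO, using an in-memory database for the demo.
$pdo = new PDO('sqlite::memory:');
$pdo->setAttribute(PDO::ATTR_ERRMODE, PDO::ERRMODE_EXCEPTION);
$pdo->exec('CREATE TABLE products (name TEXT NOT NULL, price REAL NOT NULL)');

// A prepared statement keeps scraped text from being interpreted as SQL.
$insert = $pdo->prepare('INSERT INTO products (name, price) VALUES (:name, :price)');
foreach ($products as $product) {
    $insert->execute([':name' => $product['name'], ':price' => $product['price']]);
}

$count = (int) $pdo->query('SELECT COUNT(*) FROM products')->fetchColumn();
echo "Stored $count rows; JSON copy at $path\n";
```

Switching to MySQL or PostgreSQL would mainly mean changing the PDO connection string and credentials; the prepared-statement pattern stays the same.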

When deciding on the storage approach, consider factors such as the volume and nature of the data, the required accessibility and scalability, and the integration capabilities with other systems. Additionally, ensure that you adhere to any legal and ethical considerations regarding the storage and use of the scraped data.

By selecting the appropriate storage method, you can securely preserve the scraped data and harness its value for analysis, visualization, or any other purposes that align with your project goals.

In the upcoming sections, we will explore best practices for web scraping with PHP, including handling legal and ethical considerations, as well as strategies for ensuring efficient and reliable scraping processes.

Best practices for web scraping with PHP

Web scraping with PHP can be a powerful and valuable tool, but it’s important to follow best practices to ensure effective and responsible scraping. Here are some best practices to consider when performing web scraping with PHP:

1. Respect website terms of service: Before scraping a website, review its terms of service and understand any specific guidelines or restrictions regarding scraping. Some websites may explicitly prohibit scraping, while others may have specific usage policies or require permissions. Adhere to these guidelines to ensure compliance and prevent legal issues.

2. Identify yourself as a bot: When making HTTP requests to websites, include a user-agent header indicating that you are a bot or automated process. This helps websites differentiate between legitimate traffic and potential abuse, and it promotes responsible scraping practices.

3. Use rate limiting and delays: Implement rate limiting or delays between your requests to avoid overloading the website’s servers. If the website’s robots.txt file asks for a specific interval between requests (for example, through a Crawl-delay directive), honor it. This ensures that your scraping activities do not disrupt the website’s regular operations.

4. Handle errors and exceptions gracefully: Implement error handling mechanisms to handle potential errors and exceptions during the scraping process. This includes handling connection failures, timeouts, and data inconsistency. Robust error handling ensures the scraping process continues smoothly and avoids unexpected interruptions.

5. Be mindful of website load: Consider the impact of your scraping activities on the target website’s server load. Avoid requesting excessive amounts of data or scraping at an unnaturally high frequency. Strive to be a responsible web scraper and minimize unnecessary strain on the website’s resources.

6. Be cautious with personal and sensitive data: Exercise caution when scraping websites that contain personal or sensitive information. Avoid collecting or storing personally identifiable information without proper consent or legal authorization. Handle scraped data responsibly and in compliance with applicable data protection laws.

7. Regularly review and update your scraping scripts: Websites may undergo changes in their layout, structure, or data formats over time. Regularly review and update your scraping scripts to ensure they adapt to any changes. This helps maintain the accuracy and reliability of your scraping process.

8. Document your scraping activities: Keep track of the websites you are scraping, the data you are extracting, and any relevant details in a documentation system. This provides transparency and ensures that you can refer back to your scraping activities if needed.

9. Test and validate your scraping code: Before deploying your scraping code, thoroughly test and validate it on different websites and scenarios. Verify that it extracts the intended data accurately and handles potential edge cases effectively. Regular testing and validation help ensure the consistency and effectiveness of your scraping process.

10. Be cautious with parallel scraping: When scraping multiple websites concurrently, be mindful of the impact on your resources and the target websites. Respect website-specific restrictions and ensure that parallel scraping does not cause undue strain or excessive load.
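Practices 2 to 4 above can be combined in one small helper. The following is a minimal sketch, assuming PHP 7.4 or later with the cURL extension enabled; the function names secondsToWait and politeGet, the two-second default interval, and the retry count are illustrative choices, not values taken from any particular website’s policy.

```php
<?php
declare(strict_types=1);

/**
 * Seconds to sleep so that at least $minInterval seconds separate two requests.
 * Kept as a pure function so the timing logic can be checked without a network.
 */
function secondsToWait(?float $lastRequestAt, float $now, float $minInterval): float
{
    if ($lastRequestAt === null) {
        return 0.0; // first request in this process: no wait needed
    }
    return max(0.0, $minInterval - ($now - $lastRequestAt));
}

/**
 * Fetch a URL with an identifying User-Agent, timeouts, and a few retries.
 * Returns the response body, or null if every attempt failed.
 * (Hypothetical helper for illustration.)
 */
function politeGet(string $url, string $userAgent, float $minInterval = 2.0, int $maxAttempts = 3): ?string
{
    static $lastRequestAt = null; // shared across all calls in this process

    for ($attempt = 1; $attempt <= $maxAttempts; $attempt++) {
        // Rate limiting: pause until the minimum interval has elapsed.
        $wait = secondsToWait($lastRequestAt, microtime(true), $minInterval);
        if ($wait > 0) {
            usleep((int) round($wait * 1000000));
        }

        $ch = curl_init($url);
        curl_setopt_array($ch, [
            CURLOPT_RETURNTRANSFER => true,       // return the body instead of printing it
            CURLOPT_FOLLOWLOCATION => true,       // follow redirects
            CURLOPT_USERAGENT      => $userAgent, // identify the scraper honestly
            CURLOPT_CONNECTTIMEOUT => 5,
            CURLOPT_TIMEOUT        => 15,
        ]);
        $body   = curl_exec($ch);
        $status = (int) curl_getinfo($ch, CURLINFO_RESPONSE_CODE);
        $error  = curl_error($ch);
        $lastRequestAt = microtime(true);

        if ($body !== false && $status >= 200 && $status < 300) {
            return $body;
        }

        // Log the failure and let the loop retry after the usual delay.
        error_log("Attempt $attempt for $url failed: " . ($error !== '' ? $error : "HTTP $status"));
    }

    return null; // the caller decides whether to skip this page or stop
}
```

A call might look like politeGet('https://example.com/', 'ExampleScraper/1.0 (+https://example.com/bot-info)'), where both the URL and the bot name are placeholders. Returning null instead of throwing lets a loop over many pages skip one failed page and continue.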

By following these best practices, you can enhance the efficiency, reliability, and ethical aspects of your web scraping activities with PHP. Responsible scraping not only ensures compliance with legal and ethical guidelines but also promotes positive relationships with website owners and fosters a healthy web ecosystem.
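Practices 7 and 9 are easier to follow when the extraction logic lives in a function that can be run against a saved copy of a page. The sketch below assumes PHP’s built-in DOM extension and uses an XPath query in place of a CSS selector; the function name extractProductNames, the product-name class, and the fixture markup are all hypothetical.

```php
<?php
declare(strict_types=1);

/**
 * Extract non-empty product names from an HTML document.
 * The "product-name" class is an assumed example, not a real site's markup.
 */
function extractProductNames(string $html): array
{
    $doc = new DOMDocument();

    // Real-world HTML is often malformed; collect parser warnings quietly.
    $previous = libxml_use_internal_errors(true);
    $doc->loadHTML($html);
    libxml_clear_errors();
    libxml_use_internal_errors($previous);

    $xpath = new DOMXPath($doc);
    $names = [];
    foreach ($xpath->query('//h2[@class="product-name"]') as $node) {
        $name = trim($node->textContent);
        if ($name !== '') { // validate: skip empty headings
            $names[] = $name;
        }
    }
    return $names;
}

// A small saved fixture stands in for a live page, so the check runs offline.
$fixture = '<html><body>'
    . '<h2 class="product-name"> Widget </h2>'
    . '<h2 class="product-name"></h2>'
    . '<h2 class="other">Ignore me</h2>'
    . '<h2 class="product-name">Gadget</h2>'
    . '</body></html>';

$names = extractProductNames($fixture);

// If the site changes its markup, a check like this fails loudly
// instead of silently producing empty or wrong data.
if ($names !== ['Widget', 'Gadget']) {
    throw new RuntimeException('Extraction no longer matches the expected output');
}
```

Re-running such a check against a freshly saved page is one way to notice layout changes before they corrupt stored data.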

In the next section, we will look at the legal and ethical considerations of web scraping with PHP, before the conclusion summarizes the key concepts discussed.

Legal and ethical considerations

When engaging in web scraping activities with PHP, it’s essential to be aware of and adhere to the legal and ethical considerations surrounding web scraping. By staying within ethical boundaries and complying with legal requirements, you can ensure responsible and respectful scraping practices. Here are some important aspects to consider:

1. Terms of service: Before scraping a website, thoroughly review its terms of service. Some websites explicitly prohibit scraping, while others may have specific usage limitations or requirements. Adhering to these terms is crucial to maintain ethical scraping practices and avoid potential legal consequences.

2. Respect robots.txt guidelines: The robots.txt file on a website specifies the crawling permissions and restrictions for web scrapers. It is good practice to respect the guidelines outlined in the robots.txt file. The file may specify crawl rate limits, restricted areas, or even disallow scraping altogether for specific parts of the website.

3. Personal and sensitive data: Exercise caution when scraping websites that contain personal or sensitive information. Avoid scraping and storing personally identifiable information without proper consent or legal authorization. Treat personal and sensitive data with the utmost respect and handle it in compliance with applicable data protection laws.

4. Publicly available data: Focus on scraping publicly available data that is intended for consumption. Avoid bypassing login systems or accessing restricted areas of websites without permission. Scraping data that is not publicly available can infringe upon privacy rights and cross legal boundaries.

5. Attribute original sources: When using scraped data or sharing it with others, attribute the original source appropriately. Providing proper attribution helps maintain credibility and respects the efforts of the website owner and content creators.

6. Do not overload websites: Be mindful of the impact of your scraping activities on the target websites. Avoid excessive requests or scraping at an artificially high frequency, as this can overload servers and disrupt the normal functioning of the website. Implement rate limiting and delays to reduce the load on the websites. Note that rotating IP addresses does not reduce that load; it only spreads the same requests across more addresses and can circumvent a website’s own limits, so it is not a substitute for slowing down.

7. Monitor website updates: Regularly monitor the websites you scrape for any changes in their terms of service, robots.txt file, or structure. Stay updated with any new guidelines or restrictions they might impose and adjust your scraping practices accordingly.

8. Be transparent and proactive: If you anticipate scraping large amounts of data or conducting scraping activities that might potentially impact a website, it’s advisable to reach out to the website owner or seek permission. Being transparent about your intentions and seeking consent demonstrates a proactive and responsible approach to web scraping.
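Point 2 above can be partly automated by checking a path against the site’s robots.txt before requesting it. The following is a simplified sketch, not a complete implementation of the Robots Exclusion Protocol: it matches user-agent names exactly, uses plain prefix matching, and ignores the * and $ wildcards that many robots.txt files use in paths. The function name isPathAllowed and the sample rules are hypothetical.

```php
<?php
declare(strict_types=1);

/**
 * Simplified robots.txt check: prefix matching only, no path wildcards.
 * Returns true if $path may be fetched by the bot named $botName.
 */
function isPathAllowed(string $robotsTxt, string $botName, string $path): bool
{
    $groups = [];        // lowercase agent name => list of [type, pathPrefix]
    $currentAgents = [];
    $inRules = false;    // true once the current group has seen a rule line

    foreach (preg_split('/\R/', $robotsTxt) as $line) {
        $line = trim(preg_replace('/#.*$/', '', $line)); // strip comments
        if ($line === '' || strpos($line, ':') === false) {
            continue;
        }
        [$field, $value] = array_map('trim', explode(':', $line, 2));
        $field = strtolower($field);

        if ($field === 'user-agent') {
            if ($inRules) { // a User-agent line after rules starts a new group
                $currentAgents = [];
                $inRules = false;
            }
            $agent = strtolower($value);
            $currentAgents[] = $agent;
            $groups[$agent] = $groups[$agent] ?? [];
        } elseif ($field === 'allow' || $field === 'disallow') {
            $inRules = true;
            if ($value === '') {
                continue; // an empty rule places no restriction
            }
            foreach ($currentAgents as $agent) {
                $groups[$agent][] = [$field, $value];
            }
        }
    }

    // Prefer the group that names this bot; otherwise fall back to "*".
    $rules = $groups[strtolower($botName)] ?? $groups['*'] ?? [];

    $allowed = true;  // no matching rule means the path is allowed
    $bestLength = -1;
    foreach ($rules as [$type, $prefix]) {
        if (strpos($path, $prefix) !== 0) {
            continue;
        }
        $length = strlen($prefix);
        // The longest matching rule wins; on a tie, Allow wins.
        if ($length > $bestLength || ($length === $bestLength && $type === 'allow')) {
            $bestLength = $length;
            $allowed = ($type === 'allow');
        }
    }
    return $allowed;
}

// Sample rules for illustration only.
$robots = "User-agent: *\n"
    . "Disallow: /private/\n"
    . "Allow: /private/public-report.html\n"
    . "\n"
    . "User-agent: examplebot\n"
    . "Disallow: /\n";

var_dump(isPathAllowed($robots, 'OtherBot', '/products'));     // bool(true)
var_dump(isPathAllowed($robots, 'OtherBot', '/private/data')); // bool(false)
var_dump(isPathAllowed($robots, 'ExampleBot', '/products'));   // bool(false)
```

For production use, a maintained robots.txt parsing library is a safer choice than hand-rolled matching, since real files rely on wildcards and other details this sketch leaves out.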

It’s important to note that legal regulations surrounding web scraping can vary based on jurisdiction and website-specific terms. Familiarize yourself with the legal requirements applicable to your project and seek legal advice when necessary.

By adhering to these legal and ethical considerations, you can ensure that your web scraping activities with PHP are conducted responsibly, respecting the rights of website owners, and contributing to a positive web ecosystem.

Conclusion

Congratulations! You have now gained a solid understanding of web scraping with PHP. From the basic steps of installation and understanding the HTML structure of a website to selecting elements with CSS selectors, scraping data, parsing it, and storing it for further use, you are equipped with the necessary knowledge to embark on your web scraping journey.

Throughout this article, we have explored best practices to ensure responsible and ethical scraping, such as respecting website terms of service, being mindful of personal and sensitive data, and handling legal considerations. By following these guidelines, you can conduct web scraping activities with PHP in a responsible manner, fostering positive relationships with website owners and maintaining compliance with legal requirements.

Remember to regularly review and update your scraping scripts, test and validate your code, and handle errors gracefully to ensure the efficiency and reliability of your scraping process.

Web scraping with PHP opens up a world of possibilities, enabling you to extract valuable information, conduct research, gain competitive insights, and automate data collection tasks. However, it is essential to use web scraping responsibly, respecting the rights and policies of website owners, and complying with legal and ethical guidelines.

As you embark on your web scraping journey, continue to stay informed about legal regulations and any changes in website terms of service or guidelines. Strive for transparency, seek necessary permissions, and attribute sources appropriately when using the scraped data.

With PHP’s robust features, the ability to parse HTML and select elements with CSS selectors, and a strong adherence to responsible practices, you can harness the power of web scraping to gather valuable data, gain insights, and fuel your data-driven endeavors.

So, take what you have learned, put it into practice, and go forth to explore the vast realm of web scraping with PHP. Happy scraping!