Introduction

The graphics processing unit (GPU) is a crucial component in modern computer systems, playing a vital role in rendering high-quality images and videos, as well as accelerating computations in various applications. While the central processing unit (CPU) is responsible for general-purpose computing tasks, the GPU specializes in handling complex graphical operations and parallel computations. Its efficiency in processing large amounts of data simultaneously has made it an essential tool for tasks ranging from gaming to scientific simulations and machine learning.

Originally designed for rendering graphics in video games, GPUs have evolved significantly over the years, becoming powerful processors capable of executing thousands of mathematical operations in parallel. This parallel computing architecture allows GPUs to handle computationally intensive tasks more efficiently than traditional CPUs. As a result, GPUs are employed in a wide range of applications that require heavy computation, such as image and video processing, physics simulations, artificial intelligence, and cryptocurrency mining.

Understanding how a GPU works is essential for developers, scientists, and anyone working with graphics-intensive applications. In this article, we will delve into the inner workings of a GPU, exploring its various components, its role in a computer system, parallel programming techniques, and its evolution over time. By grasping the fundamentals of GPU architecture and programming models, we can harness the full power of these devices and push the boundaries of what is possible in graphics and computation.

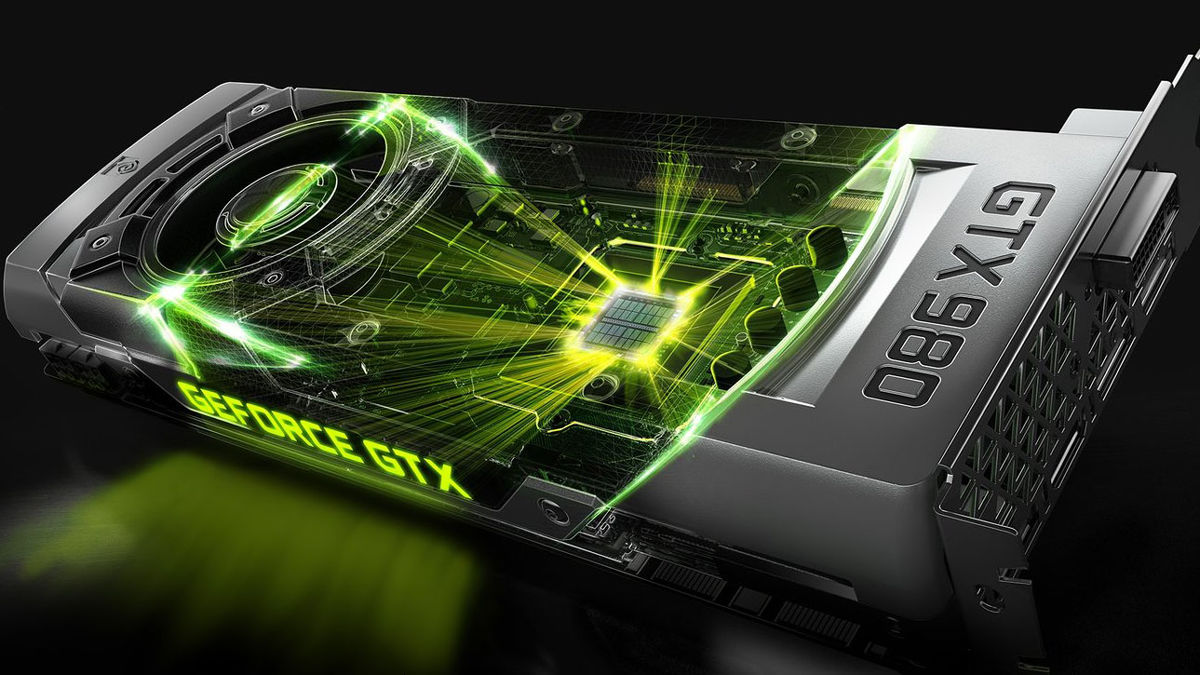

What is a GPU?

A GPU, short for graphics processing unit, is a specialized electronic circuit that is designed to rapidly manipulate and alter memory to accelerate the creation and rendering of images, videos, and graphics. GPUs were initially developed for computer gaming and rendering high-resolution graphics in real-time. However, their capabilities have expanded to become essential for a variety of applications beyond gaming, including scientific simulations, machine learning, data analysis, and cryptocurrency mining.

Unlike the central processing unit (CPU), which is responsible for general-purpose computing tasks, GPUs are optimized for parallel processing and efficient execution of multiple calculations simultaneously. This capability is achieved through the use of thousands of smaller cores or shader units that work together to handle massive amounts of data simultaneously. These cores operate in parallel to efficiently process and manipulate the vast amounts of visual and numerical data required for graphics rendering and complex computations.

Essentially, a GPU is a massively parallel processor that can perform arithmetic and logic operations on a large number of data sets concurrently. This parallel processing capability allows GPUs to quickly perform tasks such as rendering high-resolution 3D graphics, image and video processing, and simulations involving complex calculations.

Over time, GPUs have evolved to support not only graphics processing but also general-purpose computing. This shift has been driven by the increasing demand for computational power in fields such as artificial intelligence, scientific research, and data analytics. By leveraging the massive parallelism of GPUs, researchers, developers, and scientists can substantially accelerate the execution of algorithms that parallelize well, enabling breakthroughs in various domains.

It is important to note that while GPUs excel in parallel workloads, they may not be as efficient as CPUs for sequential tasks. CPUs are optimized for single-thread performance and excel at tasks that require sequential execution. However, when it comes to computationally intensive workloads that can be parallelized, GPUs provide a significant performance advantage.

In the next sections, we will explore the components of a GPU, delve into its inner workings, and understand how parallel programming techniques can effectively harness its power.

The Role of a GPU in a Computer System

The role of a graphics processing unit (GPU) in a computer system is to handle and accelerate the processing of visual and graphics-related tasks. While the central processing unit (CPU) is responsible for general-purpose computing, the GPU takes on the specific task of rendering and displaying high-quality images, videos, and graphics in real-time.

One of the primary functions of the GPU is to generate and render 2D and 3D graphics. When you play a graphically intensive video game or watch a high-definition movie, it is the GPU that performs the complex calculations required to create realistic and visually appealing images. The GPU processes data points, vertices, textures, and shaders to transform them into the final output displayed on your screen.

In addition to gaming and multimedia tasks, GPUs have become increasingly important in other fields as well. For example, in the field of scientific research, GPUs are used to accelerate simulations and calculations required for modeling complex physical phenomena. The parallel processing capabilities of GPUs enable scientists to run simulations faster and more efficiently, enabling breakthroughs in various fields like astrophysics, molecular dynamics, and climate modeling.

Another area where GPUs have gained significant traction is in machine learning and artificial intelligence. These disciplines involve processing large amounts of data and training complex deep learning models. GPUs offer substantial performance boosts by leveraging parallel processing, making them ideal for training deep neural networks and performing inference tasks. As a result, GPUs have become an indispensable tool for researchers and developers working on cutting-edge AI applications.

Furthermore, GPUs have found applications in data visualization, medical imaging, architectural design, and even cryptocurrency mining. In data visualization, GPUs allow for the creation of complex and interactive visual representations of large datasets, enabling analysts and scientists to gain valuable insights. Medical imaging relies on GPUs to process and render high-resolution scans, aiding doctors in diagnosing and treating patients. Architects and designers use GPUs to render realistic 3D models of buildings and environments, facilitating the design process. Cryptocurrency miners utilize the computational power of GPUs to solve complex mathematical problems, validating transactions and earning digital currencies.

Overall, the role of a GPU in a computer system goes beyond traditional graphics rendering. Its parallel processing capabilities and computational power enable it to tackle a wide range of tasks that require massive amounts of data processing and intensive calculations. With the continuous advancement of technology, GPUs are expected to play an even more significant role in shaping the future of computing.

Components of a GPU

A graphics processing unit (GPU) consists of several key components that work together to accelerate graphics rendering and parallel computing tasks. Each component plays a vital role in ensuring efficient processing and optimal performance. Let’s explore the key components of a GPU:

1. Shader Cores: Shader cores, called CUDA cores by NVIDIA and stream processors by AMD, are the heart of a GPU. These small processing units execute instructions and perform calculations, working in parallel to process large data sets simultaneously. The more shader cores a GPU has, the more operations it can execute in parallel, although real-world throughput also depends on clock speed, memory bandwidth, and architecture.

2. Texture Mapping Units (TMUs): TMUs are responsible for applying textures to the surfaces of 3D objects. They fetch texture data from memory and apply it to the appropriate triangles or polygons, ensuring realistic shading and detailing. GPUs may have multiple TMUs to handle the complex texturing required for high-quality graphics.

3. Rasterization Units: Rasterization units take the data generated by the shaders and convert them into pixels on the screen. They determine the color, depth, and other attributes of each pixel, mapping the final image to the screen. Rasterization units also perform hidden surface removal and apply anti-aliasing techniques to improve image quality.

4. Memory Interface: The memory interface is responsible for communication between the GPU and the video memory. It ensures quick access to the data required for rendering and computation. The memory interface includes dedicated memory controllers, caches, and high-speed buses to exchange data efficiently.

5. Frame Buffer: The frame buffer is a dedicated area of video memory used to store the final image that will be displayed on the screen. It holds the pixel values, color information, depth data, and other attributes for each screen pixel. The frame buffer is constantly updated as the GPU processes data and generates the final output.

6. Memory Hierarchy: GPUs have multiple levels of memory hierarchy to optimize data access and reduce latency. This hierarchy typically includes global memory, shared memory, and local memory. Global memory is the largest, providing long-term storage for data. Shared memory is a small, high-speed memory that allows for efficient communication and data sharing among threads within a GPU block. Local memory serves as private memory for individual threads.

7. PCI Express Interface: The GPU connects to the motherboard via a PCI Express interface, allowing communication with other system components, such as the CPU, RAM, and storage devices. The PCI Express interface provides high-bandwidth data transfer to ensure smooth and efficient data exchange.

These components work together to process and render complex graphics, execute parallel computations, and deliver high-performance results. GPUs continue to evolve, with advancements in architecture, memory technology, and connectivity that make possible increasingly realistic graphics and breakthroughs in fields like machine learning and scientific research.
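To make the frame buffer description concrete, here is a minimal sketch of how much video memory a color buffer needs. The 32-bit RGBA pixel format, the 1920x1080 resolution, and the function name `framebuffer_bytes` are assumptions chosen for illustration; real GPUs also store depth and other attachments.

```python
def framebuffer_bytes(width, height, bytes_per_pixel=4, buffers=1):
    """Bytes needed for `buffers` color buffers of the given resolution."""
    return width * height * bytes_per_pixel * buffers

# Assumed example: a 1920x1080 display with 4 bytes (RGBA8) per pixel.
single = framebuffer_bytes(1920, 1080)
# Double buffering keeps one buffer on screen while the next is drawn.
double = framebuffer_bytes(1920, 1080, buffers=2)

print(single)  # 8294400 bytes, roughly 7.9 MiB
print(double)  # 16588800 bytes
```

The numbers are small next to the capacity of modern video memory, which suggests that textures and geometry, not the frame buffer itself, usually dominate memory use.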

Graphics Pipeline

The graphics pipeline is a series of stages that a graphics processing unit (GPU) follows to process and render 3D graphics. It encompasses various tasks, including geometry processing, shading, and rasterization, to transform data into the final image displayed on a screen. Understanding the graphics pipeline is crucial for optimizing performance and achieving visually stunning graphics.

The graphics pipeline can be divided into several stages:

1. Vertex Processing: The first stage of the graphics pipeline involves processing individual vertices. The GPU takes in 3D model data, including vertices and their attributes, such as position, color, and texture coordinates. It performs transformations, such as translation, scaling, and rotation, to position the vertices correctly in 3D space.

2. Primitive Assembly: In this stage, the GPU combines the transformed vertices into geometric primitives, such as triangles or lines. These primitives form the basic building blocks required for rendering objects. Per-vertex attributes like normals and tangents, which are typically supplied with the model data, are carried along with each primitive to facilitate lighting and shading calculations.

3. Geometry Processing: This stage involves performing computations on the geometric primitives to achieve the desired visual effects. Tasks include applying transformations and performing complex calculations to determine the position, orientation, and appearance of vertices and primitives. The GPU can also perform operations such as tessellation, which subdivides primitives to add detail and smoothness to the final result.

4. Rasterization: Rasterization is the process of converting the 3D geometric primitives into 2D pixels on the screen. The GPU determines which pixels are covered by the primitives and calculates their attributes, such as color and depth values. This information is passed on to subsequent stages for further processing.

5. Fragment Processing: After rasterization, the GPU processes fragments, the candidate pixels produced for each primitive, and determines their color based on shading models, lighting calculations, and texture mapping. Several fragments can map to the same screen pixel, so a fragment's color is not necessarily the final pixel color. This stage also involves techniques like anti-aliasing to smooth jagged edges and improve image quality.

6. Texture Mapping: Texture mapping is the process of applying 2D images, known as textures, onto the surfaces of 3D objects. On modern GPUs it is usually carried out as part of fragment processing rather than as a separate pass: the GPU fetches texture data from memory and applies it to the fragments, giving the objects their visual appearance and detail. Texture mapping can simulate materials, patterns, and realistic lighting effects.

7. Output Merger: In the final stage of the pipeline, the GPU combines the fragments with the existing frame buffer, where the final image is stored. It performs operations like blending, depth testing, and stencil testing to determine the final pixel values that will be displayed on the screen. The output merger stage is responsible for generating the final image, ready for display.

The graphics pipeline is highly parallel, with each stage executing in parallel across multiple cores or shader units within the GPU. This parallelism allows for efficient processing of large amounts of data required for realistic graphics rendering. It is important for developers to understand the intricacies of the graphics pipeline to optimize their rendering algorithms, minimize bottlenecks, and leverage the full potential of modern GPUs.
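The stages above can be sketched as a tiny software renderer. This is an illustrative model only, not how any real GPU or graphics API is implemented: the function names (`transform`, `edge`, `rasterize`, `draw`), the 8x8 character "frame buffer", and the flat per-triangle "shading" are all assumptions made for the example. It covers vertex processing, rasterization, fragment coloring, and the depth test of the output merger.

```python
def transform(vertex, scale, offset):
    """Vertex processing: scale, then translate an (x, y, z) vertex."""
    x, y, z = vertex
    return (x * scale + offset[0], y * scale + offset[1], z)

def edge(a, b, p):
    """Twice the signed area of triangle (a, b, p), using x and y only."""
    return (b[0] - a[0]) * (p[1] - a[1]) - (b[1] - a[1]) * (p[0] - a[0])

def rasterize(tri, width, height):
    """Rasterization: yield (x, y, depth) for each covered pixel center."""
    a, b, c = tri
    area = edge(a, b, c)
    if area == 0:
        return  # degenerate triangle covers nothing
    for y in range(height):
        for x in range(width):
            p = (x + 0.5, y + 0.5)
            w0, w1, w2 = edge(b, c, p), edge(c, a, p), edge(a, b, p)
            # Inside when every edge value has the same sign as the area.
            if all(w * area >= 0 for w in (w0, w1, w2)):
                # Barycentric interpolation of the per-vertex depth.
                depth = (w0 * a[2] + w1 * b[2] + w2 * c[2]) / area
                yield x, y, depth

def draw(triangles, width=8, height=8):
    """Run every (triangle, symbol) pair through the mini pipeline."""
    color = [["." for _ in range(width)] for _ in range(height)]
    depth = [[float("inf")] * width for _ in range(height)]
    for tri, symbol in triangles:
        prim = [transform(v, scale=1.0, offset=(0.0, 0.0)) for v in tri]
        for x, y, z in rasterize(prim, width, height):
            if z < depth[y][x]:        # output merger: depth test
                depth[y][x] = z
                color[y][x] = symbol   # fragment "shading": flat color
    return color

far_tri = (((0, 0, 0.5), (8, 0, 0.5), (0, 8, 0.5)), "A")
near_tri = (((0, 0, 0.2), (4, 0, 0.2), (0, 4, 0.2)), "B")
image = draw([far_tri, near_tri])
for row in image:
    print("".join(row))
```

Because of the depth test, the nearer triangle `B` wins wherever the two overlap, regardless of the order in which the triangles are submitted. On a GPU the two inner loops would run concurrently across many cores rather than one pixel at a time.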

Data Parallelism and SIMD Architecture

Data parallelism is a crucial concept in modern graphics processing units (GPUs) that allows for efficient processing of massive amounts of data in parallel. It involves executing the same instructions simultaneously on multiple data elements, achieving significant performance improvements for tasks that can be parallelized. GPUs utilize a specific architecture called Single Instruction, Multiple Data (SIMD) to enable data parallelism and accelerate computations.

In a SIMD architecture, a single instruction is executed on multiple data elements in parallel. This approach leverages the parallel processing capabilities of GPUs, which consist of thousands of shader cores or CUDA cores. Each core is capable of executing the same instruction on different data elements simultaneously. This means that a single instruction can be applied to multiple pixels, vertices, or data points simultaneously, greatly reducing the overall processing time.

Data parallelism is particularly effective for tasks involving computations that can be performed independently on multiple data elements. Examples include image and video processing, simulations, scientific calculations, and machine learning algorithms. By applying the same instruction to multiple data elements in parallel, GPUs can process large datasets much faster than traditional sequential processors.

It is worth noting that data parallelism requires data to be organized in a way that can be easily distributed across the GPU cores. This is typically achieved through the use of arrays or vectors, where each element corresponds to a separate data point. The SIMD architecture operates on these data elements concurrently, executing the same instruction on all of them simultaneously.
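As a minimal sketch of this idea, the example below applies one small function to every element of two arrays. The names `saxpy_kernel` and `launch` are hypothetical, and the Python loop only models the semantics: on a GPU the per-index calls would execute concurrently rather than one after another.

```python
def saxpy_kernel(i, a, x, y, out):
    """The work done for one data element: out[i] = a * x[i] + y[i]."""
    out[i] = a * x[i] + y[i]

def launch(kernel, n, *args):
    """Apply the same kernel to indices 0..n-1.

    A GPU would run these iterations in parallel; this sequential loop
    is a stand-in that produces the same result.
    """
    for i in range(n):
        kernel(i, *args)

x = [1.0, 2.0, 3.0, 4.0]
y = [10.0, 20.0, 30.0, 40.0]
out = [0.0] * len(x)
launch(saxpy_kernel, len(x), 2.0, x, y, out)
print(out)  # [12.0, 24.0, 36.0, 48.0]
```

No iteration reads a value written by another iteration, which is what makes the operation safe to distribute across cores.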

To implement data parallelism in hardware, GPUs group threads into warps (NVIDIA's term, 32 threads each) or wavefronts (AMD's term, typically 32 or 64 threads). The threads in a warp or wavefront execute the same instruction in lockstep, each on its own data element. When threads in the same group take different branches, the hardware runs each branch in turn with the non-participating threads masked off, which reduces efficiency. Lockstep execution across many such groups is what allows GPUs to achieve high computational throughput.
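The cost of a branch inside a lockstep group can be illustrated with a small simulation. This is a sketch under stated assumptions: the warp is shrunk to four lanes for readability, the function name `run_warp` is made up, and counting "passes" is a simplification of how real hardware schedules divergent paths.

```python
WARP_SIZE = 4  # assumed small for readability; NVIDIA warps have 32 threads

def run_warp(data):
    """Run `x // 2 if x is even else 3 * x + 1` on one warp in lockstep.

    Returns the per-lane results and the number of passes issued.
    Each branch that at least one lane takes costs one pass, during
    which lanes on the other branch are masked off (idle).
    """
    assert len(data) == WARP_SIZE
    result = list(data)
    even_mask = [value % 2 == 0 for value in data]
    passes = 0

    if any(even_mask):              # pass for the "even" branch
        passes += 1
        for lane in range(WARP_SIZE):
            if even_mask[lane]:
                result[lane] = data[lane] // 2

    if not all(even_mask):          # pass for the "odd" branch
        passes += 1
        for lane in range(WARP_SIZE):
            if not even_mask[lane]:
                result[lane] = 3 * data[lane] + 1

    return result, passes

print(run_warp([2, 4, 6, 8]))  # ([1, 2, 3, 4], 1)  no divergence
print(run_warp([1, 2, 3, 4]))  # ([4, 1, 10, 2], 2) divergent warp
```

Both calls process four elements, but the second needs twice as many passes because its lanes disagree on the branch.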

While SIMD architecture and data parallelism are well-suited for certain types of computations, it is important to note that not all tasks can fully benefit from this approach. Some algorithms involve dependency between data elements or sequential processing, which can limit the effectiveness of SIMD and parallel execution. In such cases, CPUs, which are optimized for sequential processing, may be more suitable.
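One common way to estimate this limit is Amdahl's law, which the text above does not name; it is brought in here as an assumption to make the point quantitative. If a fraction p of a program can be parallelized across n processors, the idealized speedup is 1 / ((1 - p) + p / n). The sketch below ignores memory transfers and other overheads.

```python
def amdahl_speedup(parallel_fraction, processors):
    """Idealized speedup when only part of the work can run in parallel."""
    serial_fraction = 1.0 - parallel_fraction
    return 1.0 / (serial_fraction + parallel_fraction / processors)

# With 90% of the work parallelizable, even 1000 cores give under 10x.
print(round(amdahl_speedup(0.90, 1000), 2))  # 9.91
# A fully parallel workload scales with the number of processors.
print(amdahl_speedup(1.0, 8))                # 8.0
```

The serial portion quickly dominates, which is why workloads with heavy data dependencies may see little benefit from thousands of GPU cores.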

Overall, data parallelism and the SIMD architecture are essential components of modern GPUs. They enable highly efficient and parallel execution of instructions on multiple data elements, allowing GPUs to process massive datasets and perform complex computations significantly faster than traditional processors. Understanding the principles of data parallelism is crucial for developers and researchers working with GPUs, as it allows them to harness the full potential of these powerful processing units.

GPU Memory Hierarchy

The memory hierarchy of a graphics processing unit (GPU) plays a vital role in optimizing data access and maximizing computational performance. GPUs possess various levels of memory, each with its own characteristics and purposes. Understanding the GPU memory hierarchy is crucial for developers and researchers to effectively utilize the available memory resources and minimize data latency.

The GPU memory hierarchy typically consists of three main levels:

1. Global Memory: Global memory is the largest and slowest level of memory in the GPU hierarchy. It provides long-term storage for data and is accessible by all the GPU cores. Global memory is used to store large datasets, textures, and other resources required for graphics rendering and computation. Though it offers high capacity, accessing global memory can introduce significant latency due to its slower speed compared to other levels of memory. Developers must optimize memory access patterns and minimize unnecessary data transfers to improve performance.

2. Shared Memory: Shared memory is a faster and smaller level of memory that is shared among threads within a block or workgroup. It enables efficient data sharing and communication between threads. Shared memory has lower latency compared to global memory, making it ideal for storing frequently accessed data and intermediate results during computations. Developers can leverage shared memory to exploit data locality and minimize memory access overhead, enhancing overall performance.

3. Local Memory: Local memory is private memory associated with each individual thread. It serves as temporary storage for thread-specific data that does not fit in registers, such as spilled variables and large per-thread arrays. In CUDA, local memory is backed by the same off-chip device memory as global memory, so its latency is comparable to that of global memory and far higher than that of registers or shared memory; it should be used sparingly. Keeping per-thread data small enough to stay in registers is therefore important for optimizing performance. Note that OpenCL uses the term local memory for what CUDA calls shared memory, and private memory for per-thread storage.

In addition to these levels of memory, modern GPUs may also include other specialized memory structures, such as constant memory, texture memory, and cache memory. These structures provide additional optimizations for specific types of data and access patterns. For example, constant memory is used to store read-only data that is accessed frequently, such as constants and lookup tables. Texture memory is optimized for texture access, providing fast and efficient texture sampling for graphics applications.

Managing the GPU memory hierarchy effectively requires careful consideration of data placement, data transfers between different memory levels, and minimizing memory access overhead. Developers need to utilize memory coalescing techniques, optimize memory access patterns, and exploit data parallelism to maximize memory bandwidth and minimize latency. Utilizing memory hierarchy efficiently can significantly improve the performance of GPU applications, especially those involving large-scale data processing, computations, and graphics rendering.
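Memory coalescing can be illustrated by counting how many memory segments one warp touches for a given access pattern. The figures used here (32 threads, 4-byte elements, 128-byte segments) and the function name `segments_touched` are assumptions for the sketch; actual transaction sizes vary by GPU architecture.

```python
def segments_touched(stride, threads=32, elem_bytes=4, segment_bytes=128):
    """Count distinct memory segments read when thread t loads element t * stride."""
    segments = {
        (t * stride * elem_bytes) // segment_bytes
        for t in range(threads)
    }
    return len(segments)

print(segments_touched(1))   # 1: neighbouring threads read neighbouring words
print(segments_touched(2))   # 2: half of every fetched segment is wasted
print(segments_touched(32))  # 32: every thread needs its own segment
```

Under these assumptions, the strided pattern moves 32 times as much data from global memory for the same amount of useful work, which is why laying out data so that adjacent threads read adjacent addresses matters.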

Understanding the characteristics and behavior of the GPU memory hierarchy is essential for unlocking the full potential of the GPU and ensuring efficient utilization of memory resources. Optimizing memory usage and control over memory operations are vital factors in achieving high-performance GPU applications.
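The benefit of staging data in shared memory can be estimated with simple counting. The sketch below compares global-memory loads for a naive n x n matrix multiplication against a tiled version in which each thread block loads square tiles into shared memory and reuses them. The function names and the sizes (n = 1024, 16 x 16 tiles) are assumptions, and caches are ignored.

```python
def global_loads_naive(n):
    """Each of the n * n outputs reads a full row of A and column of B."""
    return n * n * 2 * n

def global_loads_tiled(n, tile):
    """Each block loads two tile x tile sub-matrices per step.

    There are (n / tile)^2 blocks and each takes n / tile steps; the
    loaded values are then reused from shared memory by the whole block.
    """
    assert n % tile == 0, "sketch assumes n is a multiple of the tile size"
    blocks = (n // tile) ** 2
    steps = n // tile
    return blocks * steps * 2 * tile * tile

naive = global_loads_naive(1024)
tiled = global_loads_tiled(1024, 16)
print(naive, tiled, naive // tiled)  # 2147483648 134217728 16
```

In this model, global memory traffic drops by a factor equal to the tile width, because every value fetched into shared memory is used by 16 threads instead of one.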

Parallel Programming on GPUs

Parallel programming on graphics processing units (GPUs) has revolutionized the field of high-performance computing, enabling developers to take advantage of the massive parallel processing power offered by these devices. GPUs are uniquely designed to handle data-parallel workloads, making them ideal for computationally intensive tasks that can be divided into parallel tasks. Understanding the principles of parallel programming on GPUs is essential for effectively harnessing their power and achieving significant performance improvements.

GPU programming involves writing code that can be executed by thousands of shader cores or CUDA cores simultaneously. Known as kernels, these code segments are executed concurrently by different threads, each working on a different portion of the data. The ability to divide the workload among multiple threads allows GPUs to process large datasets and perform complex computations much faster than traditional processors.

To exploit the parallelism offered by GPUs, developers utilize programming frameworks and languages specifically designed for GPU programming, such as CUDA (Compute Unified Device Architecture) and OpenCL (Open Computing Language). These frameworks provide the necessary tools and APIs to manage and control the execution of parallel code on the GPU. They allow developers to specify the number of threads, organize them into thread blocks or workgroups, and efficiently manage memory transfers between the CPU and GPU.
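The way threads and blocks map onto data can be sketched as follows. The function name `launch_1d` is hypothetical; the index formula, block index times block size plus thread index, mirrors the usual one-dimensional convention in CUDA-style programming, and the bounds check handles the case where the data size is not a multiple of the block size.

```python
def launch_1d(n, block_size):
    """Return the grid size and the element indices handled by each block."""
    grid_size = (n + block_size - 1) // block_size  # ceiling division
    work = []
    for block_idx in range(grid_size):
        indices = []
        for thread_idx in range(block_size):
            i = block_idx * block_size + thread_idx  # global thread index
            if i < n:  # threads past the end of the data do nothing
                indices.append(i)
        work.append(indices)
    return grid_size, work

grid, work = launch_1d(n=10, block_size=4)
print(grid)  # 3
print(work)  # [[0, 1, 2, 3], [4, 5, 6, 7], [8, 9]]
```

The last block is only partly used, which is why kernels commonly guard their body with a check like `i < n`.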

Parallel programming on GPUs typically involves the following steps:

1. Data Partitioning: Developers need to divide the data into smaller chunks that can be processed independently by individual threads. This is often done by mapping the data to the threads in a way that ensures balanced workloads and avoids data dependencies.

2. Thread Hierarchy and Synchronization: GPU programming frameworks enable developers to organize threads into thread blocks or workgroups, each containing multiple threads. Threads within the same block can cooperate by sharing data through shared memory and can synchronize their execution using barriers and other synchronization primitives. Threads in different blocks generally communicate through global memory.

3. Memory Access Optimization: Maximizing memory access efficiency is crucial for achieving high performance on GPUs. Developers can optimize memory access patterns, minimize global memory accesses, and utilize shared memory and local memory efficiently to reduce memory latency and bandwidth overhead.

4. Kernel Optimization: Developers can optimize the kernel code by exploiting data parallelism and reducing thread divergence, where threads in the same warp take different control-flow paths. Techniques like loop unrolling and coalesced memory access can further enhance the performance of the GPU code.

5. Error Handling and Debugging: Debugging parallel code on GPUs can be challenging. GPU programming frameworks provide tools and techniques to identify and debug errors, such as runtime error checking, advanced profiling, and debugging APIs.
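Several of the steps above (partitioning, shared memory, and barrier synchronization) come together in a parallel reduction. The following is a sequential model of how one thread block might sum its values; the function name `block_reduce_sum` and the power-of-two length requirement are assumptions of the sketch, not a prescribed implementation.

```python
def block_reduce_sum(values):
    """Tree reduction as a single thread block might perform it.

    Returns the sum and the number of synchronized steps taken.
    The length of `values` must be a power of two.
    """
    n = len(values)
    assert n > 0 and n & (n - 1) == 0, "sketch assumes a power-of-two size"
    shared = list(values)  # models copying global data into shared memory
    stride = n // 2
    steps = 0
    while stride > 0:
        # Each "thread" t < stride adds its partner element. On a GPU
        # these additions run concurrently; they touch disjoint slots.
        for t in range(stride):
            shared[t] += shared[t + stride]
        # A block-wide barrier (such as __syncthreads() in CUDA) would
        # go here so all writes finish before the next step reads them.
        stride //= 2
        steps += 1
    return shared[0], steps

print(block_reduce_sum([1, 2, 3, 4, 5, 6, 7, 8]))  # (36, 3)
```

Eight values are summed in three synchronized steps instead of seven sequential additions; in general the step count grows with the logarithm of the block size.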

Parallel programming on GPUs unlocks immense computational power, making it suitable for a wide range of applications, including scientific simulations, machine learning, data analytics, computer vision, and graphics rendering. Developers should analyze their algorithms and identify computationally intensive tasks that can be effectively parallelized to benefit from the GPU’s parallel processing capabilities.

As GPUs continue to evolve, with advancements in architecture, memory technology, and programming frameworks, the practice of parallel programming on GPUs will continue to mature. Evolving software and programming techniques for GPUs will enable developers to fully leverage their potential and drive innovation in various domains.

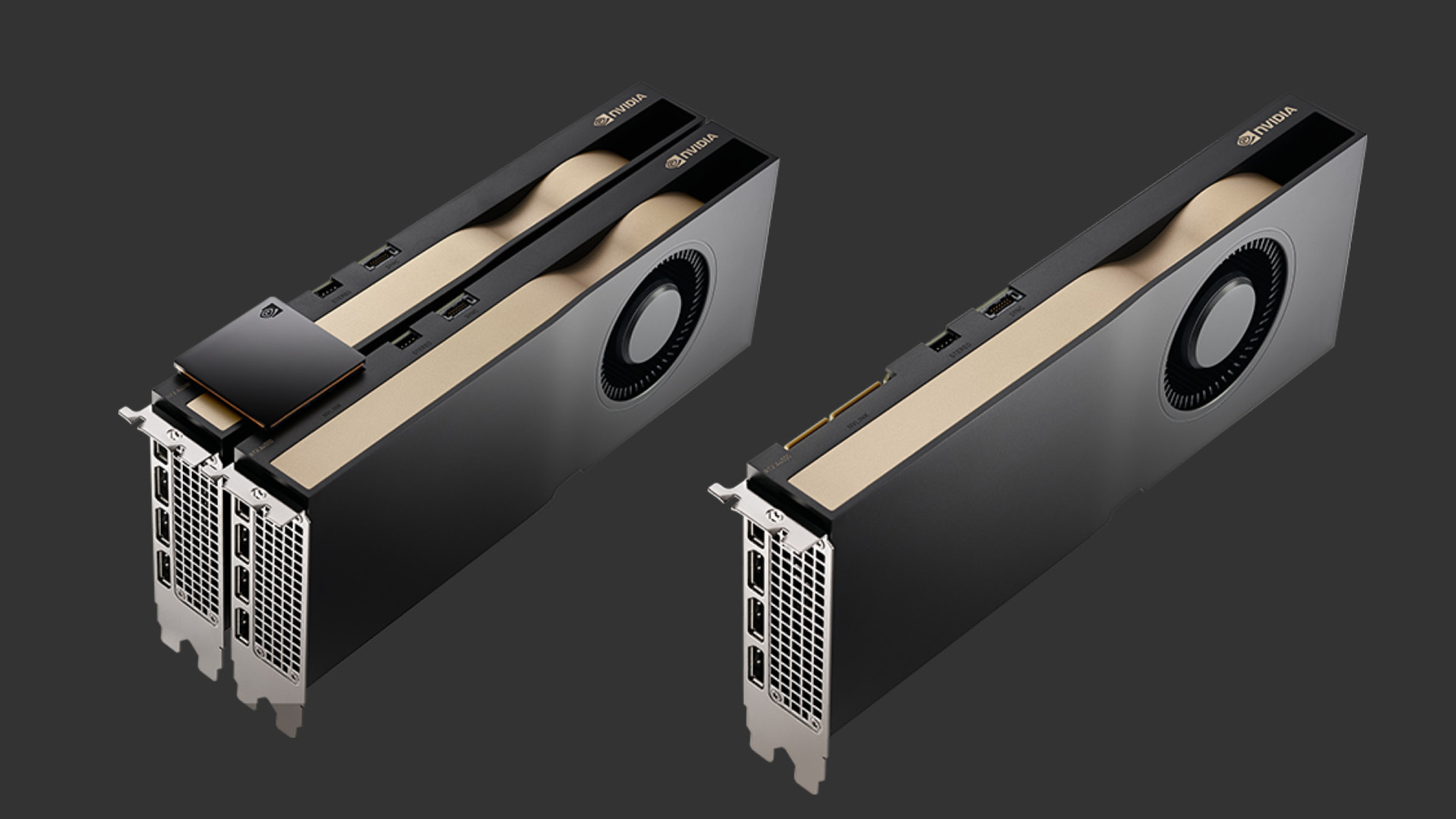

Types of GPUs

Graphics processing units (GPUs) come in various types, each designed for specific applications and target markets. GPUs are tailored to meet the needs of diverse industries, ranging from gaming and entertainment to scientific research and artificial intelligence. Understanding the different types of GPUs can help users select the right GPU for their specific requirements. Here are some common types of GPUs:

1. Gaming GPUs: Gaming GPUs are designed to deliver high-performance graphics and smooth gaming experiences. These GPUs prioritize rendering realistic and immersive visuals, supporting features like real-time ray tracing, high-refresh-rate displays, and advanced shading techniques. Gaming GPUs often come with dedicated software optimizations and cooling mechanisms to ensure optimal gaming performance.

2. Professional GPUs: Professional GPUs, also known as workstation GPUs, are optimized for professional applications like computer-aided design (CAD), 3D modeling, animation, and video editing. These GPUs offer enhanced stability, precision, and reliability for demanding workflows. They often have larger memory capacities, higher memory bandwidth, and support for specialized drivers and software certifications.

3. Data Center GPUs: Data center or server-grade GPUs are designed for high-performance computing (HPC) and artificial intelligence (AI) workloads. These GPUs are optimized for parallel processing and accelerating complex computations in areas like scientific research, machine learning, and data analytics. Data center GPUs often feature higher memory capacities, extensive memory bandwidth, and support for specialized libraries and frameworks like CUDA and TensorRT.

4. Embedded GPUs: Embedded GPUs are integrated into system-on-chip (SoC) devices like smartphones, tablets, and gaming consoles. These GPUs are designed to balance performance with power efficiency, making them suitable for mobile devices and embedded systems. Embedded GPUs enable smooth graphics rendering, video playback, and support for modern graphical user interfaces.

5. Integrated GPUs: Integrated GPUs, also known as onboard or integrated graphics, are integrated directly into the central processing unit (CPU). These GPUs are designed for low-power devices like laptops and entry-level desktops. While they may not offer the same level of performance as dedicated GPUs, integrated GPUs enable basic graphics acceleration, multimedia playback, and casual gaming without the need for a separate graphics card.

6. AI-specific GPUs: With the rise of artificial intelligence and deep learning, specialized GPUs tailored for AI workloads have emerged. These AI-specific GPUs offer optimized hardware and software features to accelerate AI training and inference tasks. They often leverage tensor cores, specialized deep learning libraries, and support for frameworks like TensorFlow and PyTorch.

It is important to consider the specific requirements of an application or use case when selecting a GPU. Factors like performance, power consumption, memory capacity, software compatibility, and budget should be taken into account. Additionally, staying informed about the latest advancements and releases within each type of GPU can help users make informed decisions and stay up-to-date with the rapidly evolving GPU landscape.

Evolution of GPUs

The evolution of graphics processing units (GPUs) has been remarkable, driven by advancements in technology and the increasing demand for high-performance graphics and parallel computing. GPUs have come a long way from their initial role as graphics accelerators for gaming, growing into powerful processors that excel in tasks requiring massive parallel processing. Let’s explore the key milestones in the evolution of GPUs.

1. Early GPU Development: In the early days, GPUs were primarily focused on rendering graphics for video games. Companies like NVIDIA and ATI (now AMD) led the way with their dedicated graphics cards that improved image quality, texture mapping, and 3D rendering. These early GPUs laid the foundation for the parallel processing capabilities that would dominate their evolution.

2. GPGPU and General-Purpose Computing: The concept of general-purpose computing on GPUs (GPGPU) emerged as developers recognized the vast potential of GPUs for accelerating other computationally intensive tasks beyond graphics. GPUs offered high parallelism and memory bandwidth, making them ideal for scientific simulations, data processing, cryptography, and other fields. The birth of programming frameworks like CUDA and OpenCL enabled developers to harness the power of GPUs for general-purpose computing.

3. Rise of Parallel Computing: As demand for parallel computing increased, GPUs began specializing in parallel processing, deploying thousands of cores to execute many tasks simultaneously. Graphics APIs, such as DirectX and OpenGL, gained programmable shader stages and, later, compute shaders, which facilitated the integration of GPUs into a broader range of applications. This shift marked a turning point in the evolution of GPUs, transforming them into highly parallel processors.

4. Advancements in Graphics Quality: GPUs continued to evolve technologically, enabling increasingly realistic graphics rendering. Innovations like real-time ray tracing, advanced shading techniques, and global illumination significantly improved the visual quality of games and computer-generated imagery (CGI). These advancements pushed the boundaries of what is visually possible in both virtual reality and traditional gaming.

5. AI and Deep Learning: GPUs have also played a significant role in the booming field of artificial intelligence (AI) and deep learning. The parallel architecture of GPUs enables them to accelerate the training and inference processes of deep neural networks, making them indispensable for complex AI applications. GPU manufacturers have incorporated specialized tensor cores into their hardware and provide optimized deep learning libraries to cater specifically to AI workloads.

6. Integration and Miniaturization: With the growth of mobile and embedded systems, GPUs have become increasingly integrated into system-on-chip (SoC) designs. Integrated GPUs offer power-efficient graphics acceleration and multimedia capabilities, enabling smooth user experiences on devices such as smartphones, tablets, and gaming consoles. This integration has driven miniaturization, allowing GPUs to deliver impressive performance in smaller and more energy-efficient packages.

7. Cloud-Based GPU Computing: Cloud computing has emerged as a platform for GPU-accelerated computing. Cloud service providers now offer GPU instances, allowing developers and organizations to access powerful GPU resources remotely. This has facilitated the adoption of GPUs for a wider range of applications while reducing the need for local hardware investments.

The evolution of GPUs has transformed them from dedicated graphics accelerators to high-performance parallel processors that drive advancements in gaming, scientific research, AI, and more. The continued innovation in GPU technology promises exciting possibilities for the future, as GPUs become increasingly integrated, power-efficient, and capable of handling even more sophisticated computations.

Conclusion

The evolution of graphics processing units (GPUs) has revolutionized computer technology, enabling high-performance graphics rendering, parallel computing, and breakthroughs in fields such as scientific research, artificial intelligence, and visual effects. GPUs have come a long way from their early origins as gaming graphics accelerators to becoming powerful processors that excel in data-parallel workloads.

Today, GPUs are built with thousands of shader cores or CUDA cores, enabling them to handle massive amounts of data simultaneously. They offer parallel processing capabilities that are ideal for computationally intensive tasks that can be divided into parallel workloads. The availability of programming frameworks like CUDA and OpenCL has empowered developers to harness the power of GPUs for general-purpose computing and accelerate a wide range of applications.

The different types of GPUs, including gaming GPUs, professional GPUs, data center GPUs, embedded GPUs, integrated GPUs, and AI-specific GPUs, cater to diverse industries and workloads. From delivering immersive gaming experiences and supporting complex 3D modeling to accelerating scientific simulations and training deep neural networks, GPUs have become essential tools in various domains.

The evolution of GPUs has also driven advancements in graphics quality, enabling features like real-time ray tracing, advanced shading techniques, and global illumination. Visuals in games and computer-generated imagery continue to reach new heights of realism and immersion, pushing the boundaries of what we perceive on our screens.

Furthermore, the integration of GPUs into small, power-efficient devices has allowed for enhanced experiences on mobile devices, gaming consoles, and embedded systems. The availability of cloud-based GPU computing has also made GPUs more accessible and scalable, opening up opportunities for organizations and developers to leverage powerful GPU resources remotely.

As the demand for processing power continues to rise, GPUs will continue to evolve, offering advancements in architecture, memory technology, and programming frameworks. The future of GPUs holds the promise of even greater computational power, improved energy efficiency, and further optimization for AI and deep learning workloads.

In conclusion, the evolution of GPUs has transformed computer technology by delivering high-performance graphics rendering, enabling parallel computing, and driving advancements in various domains. GPUs have become an indispensable force, empowering developers, researchers, and organizations to tackle complex challenges and push the boundaries of what is possible in the computing world.